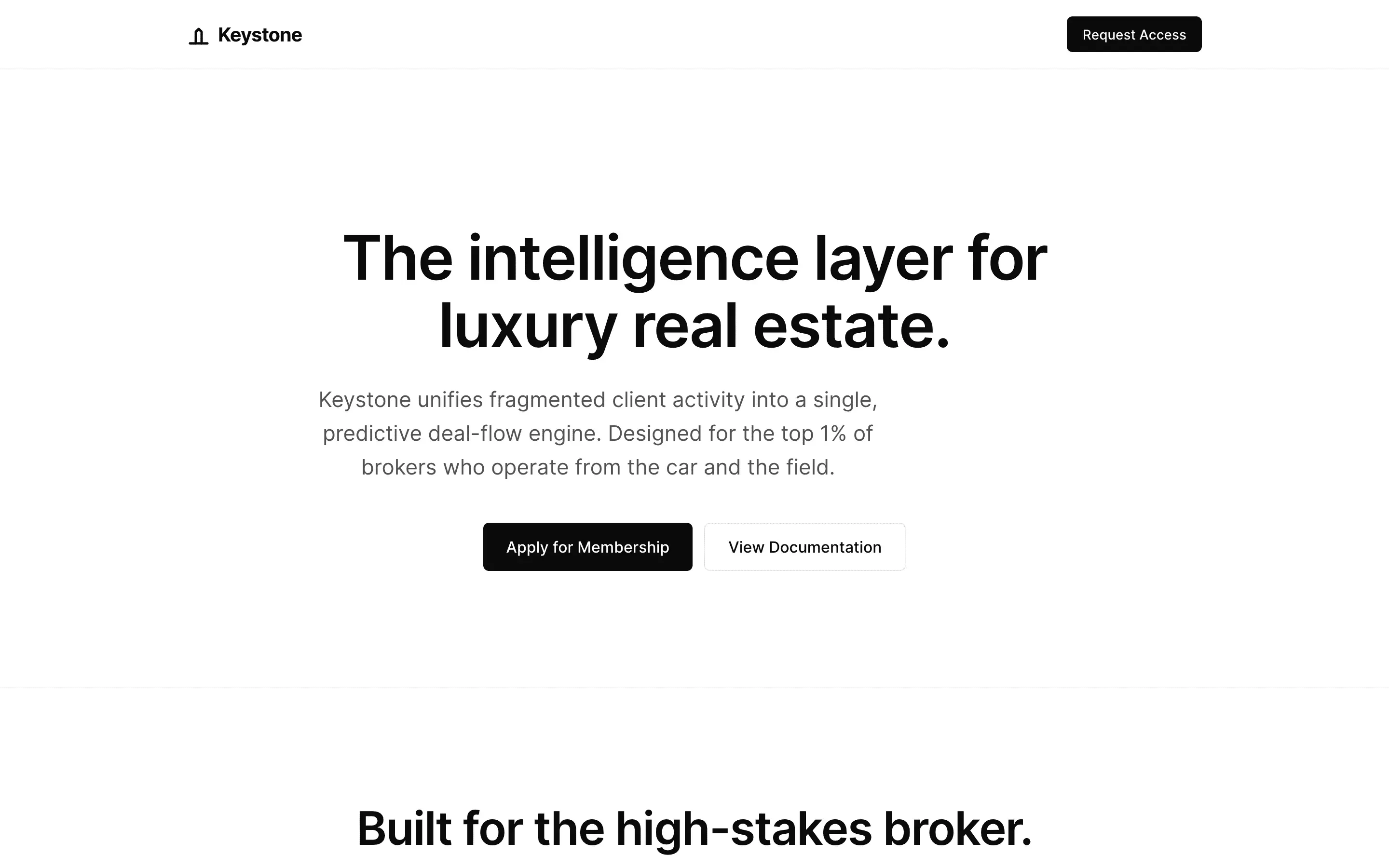

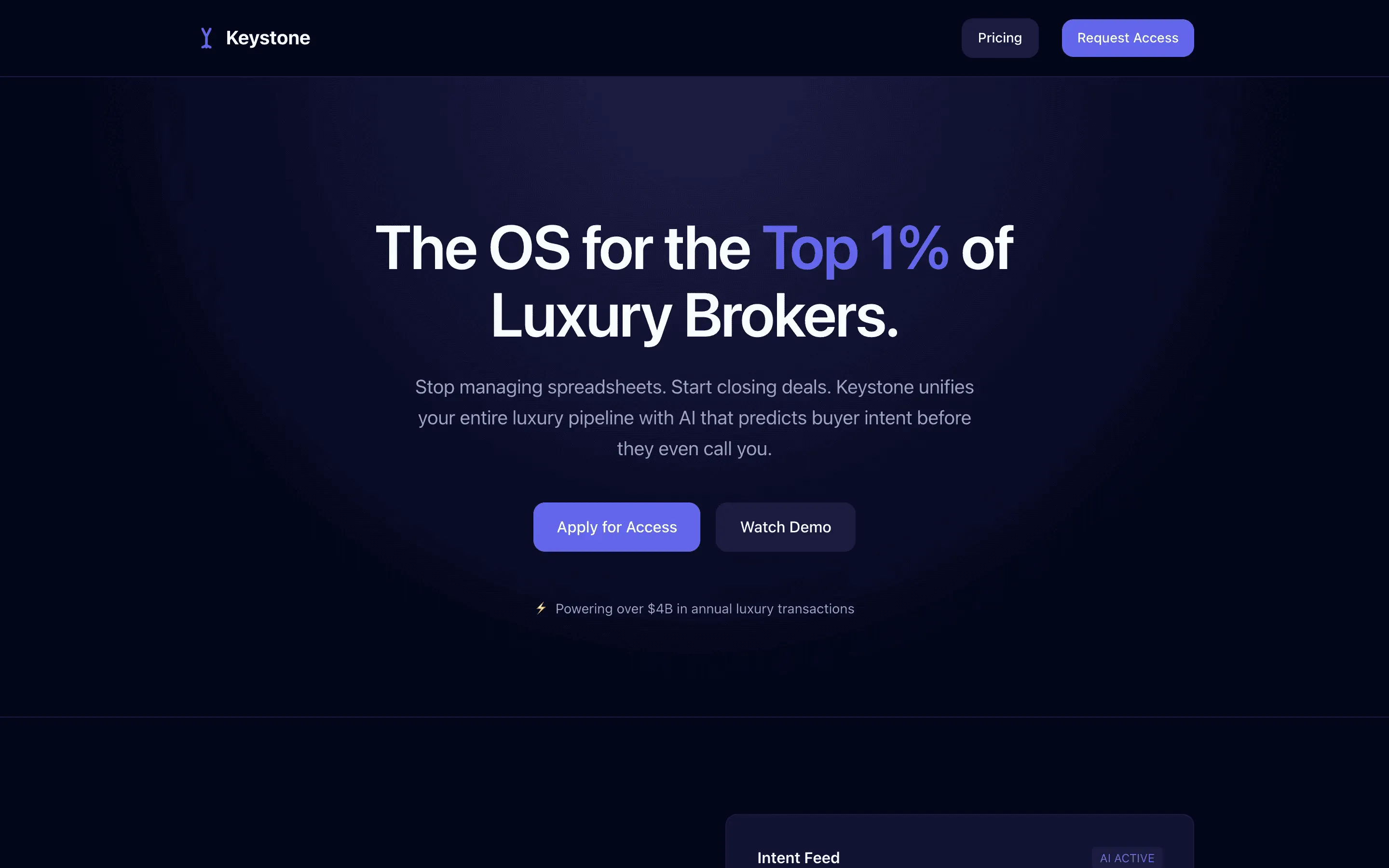

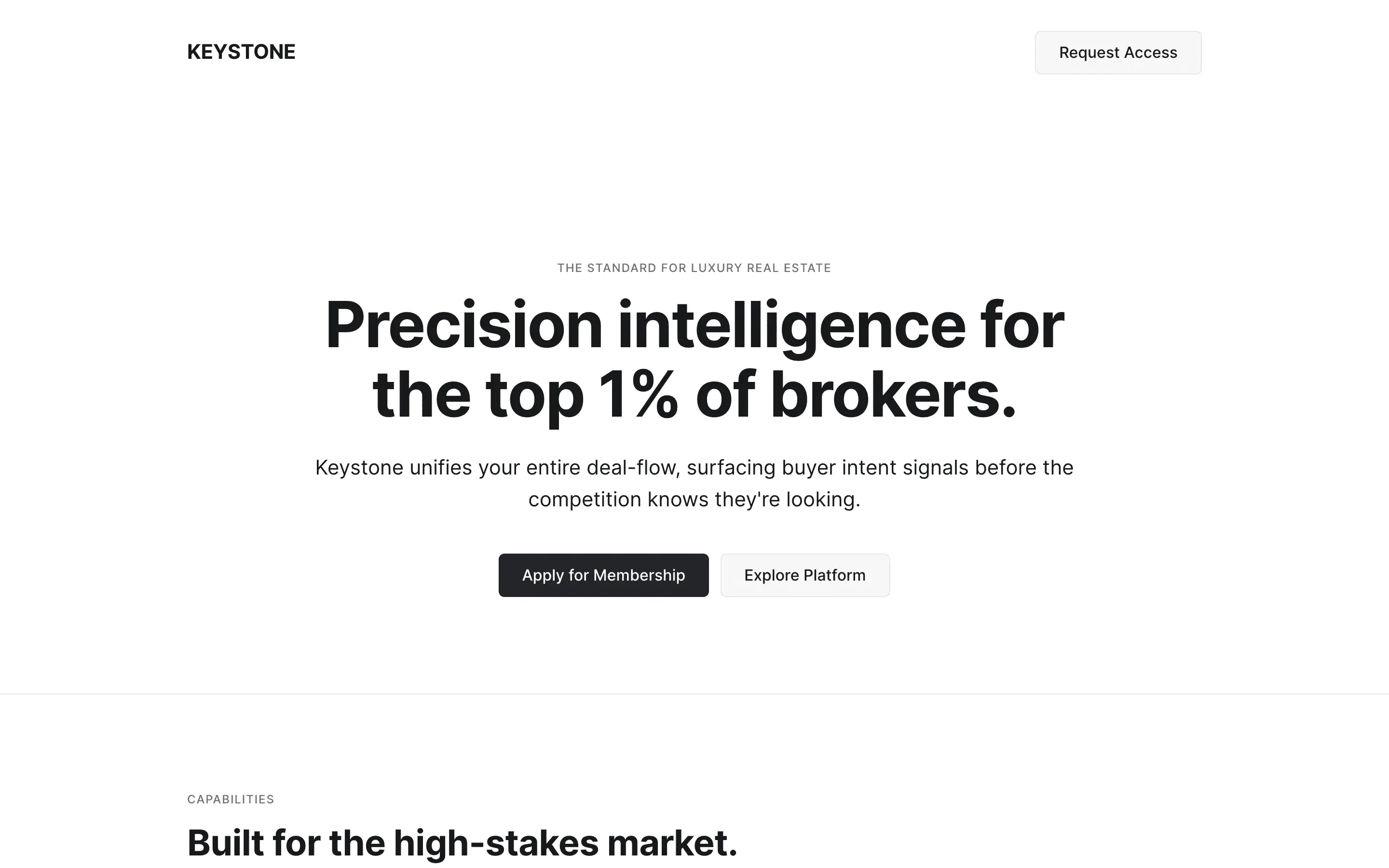

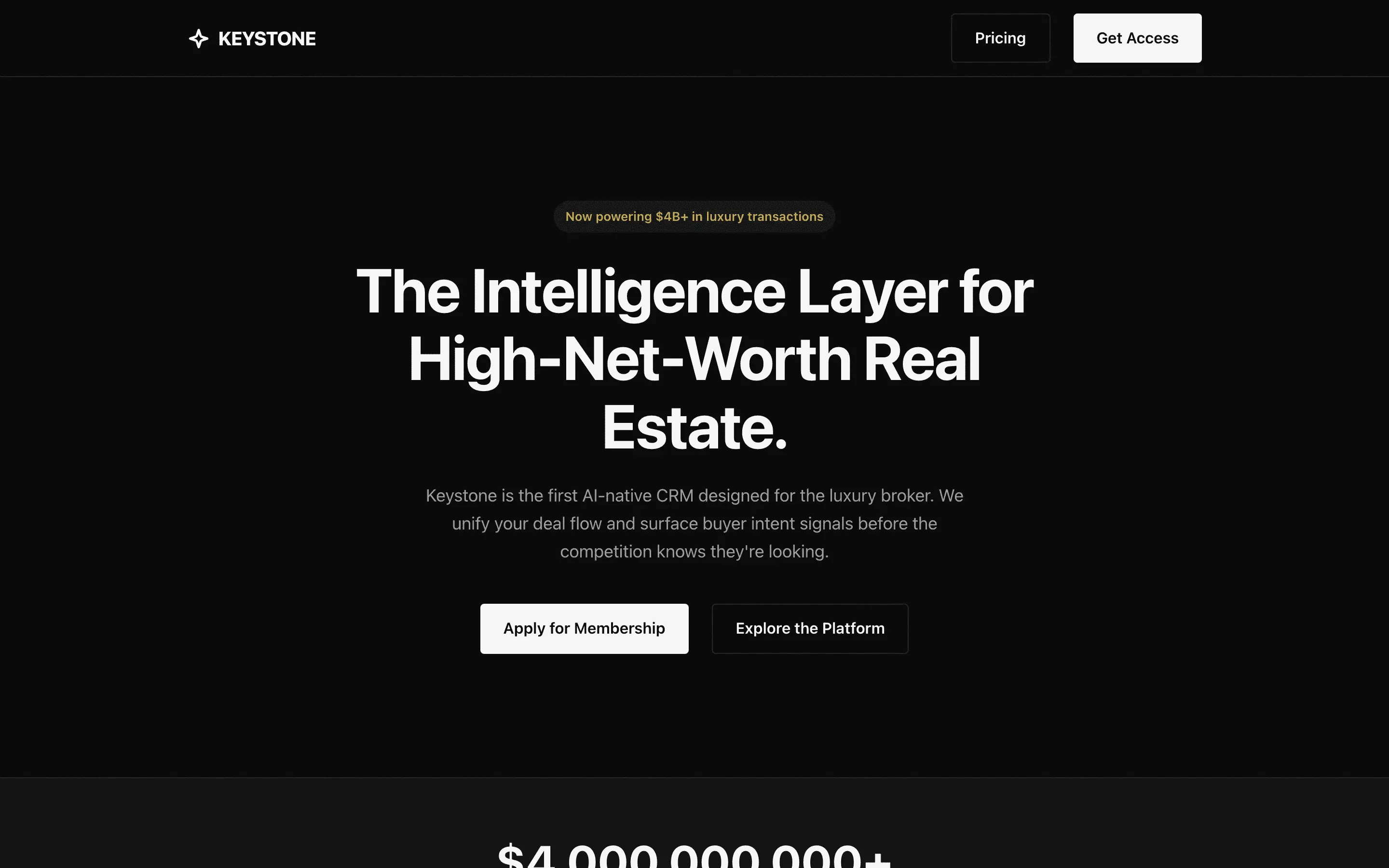

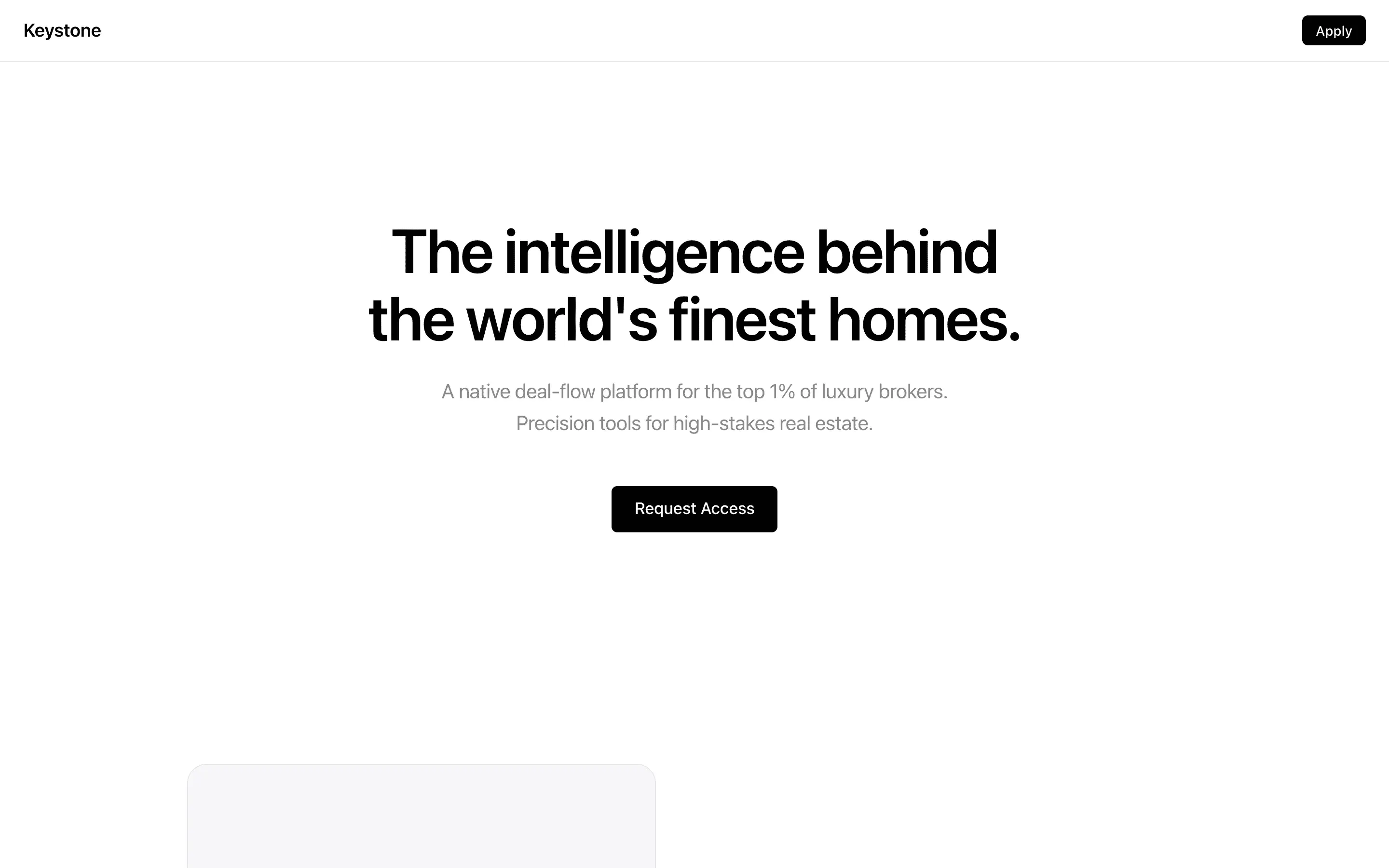

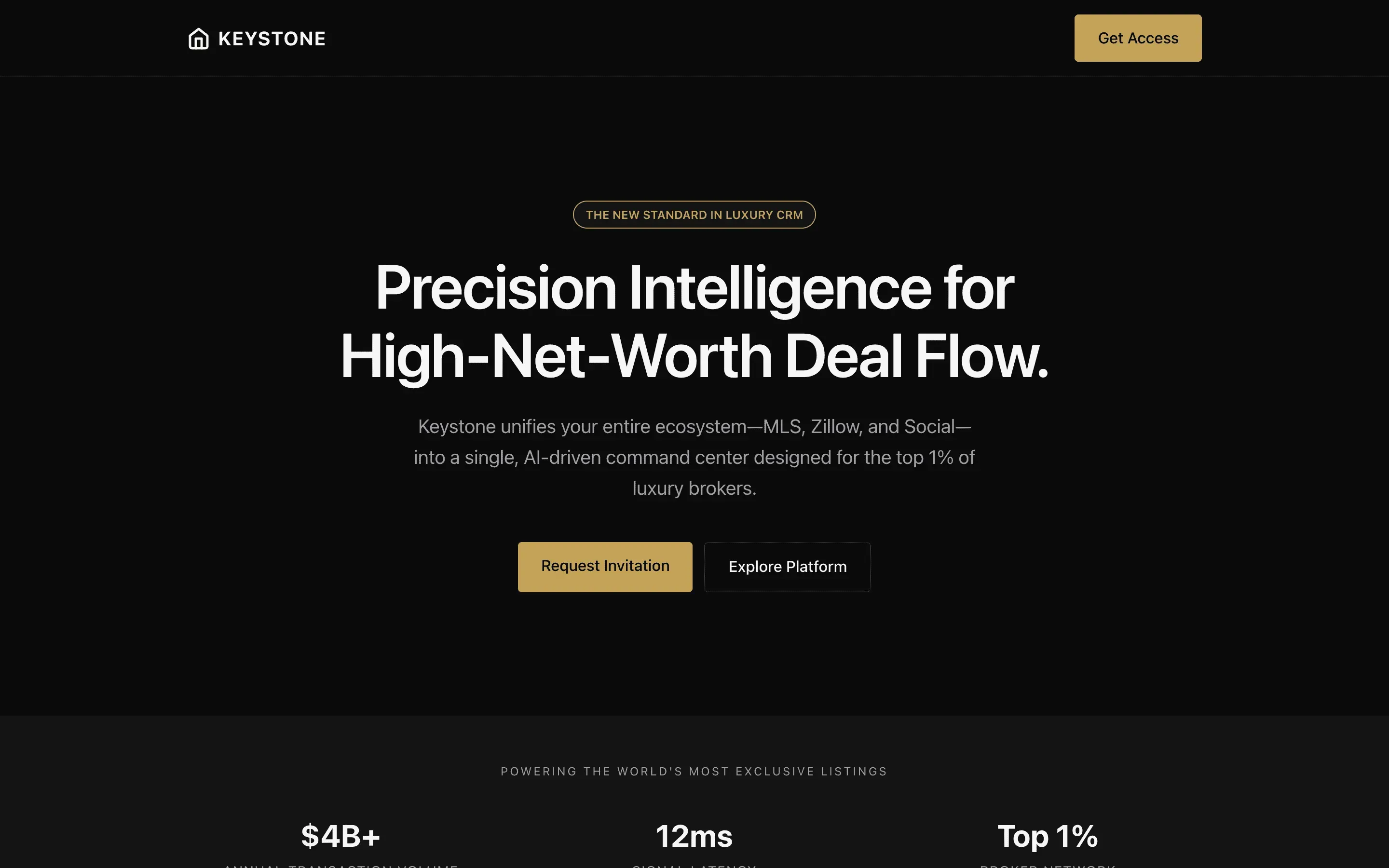

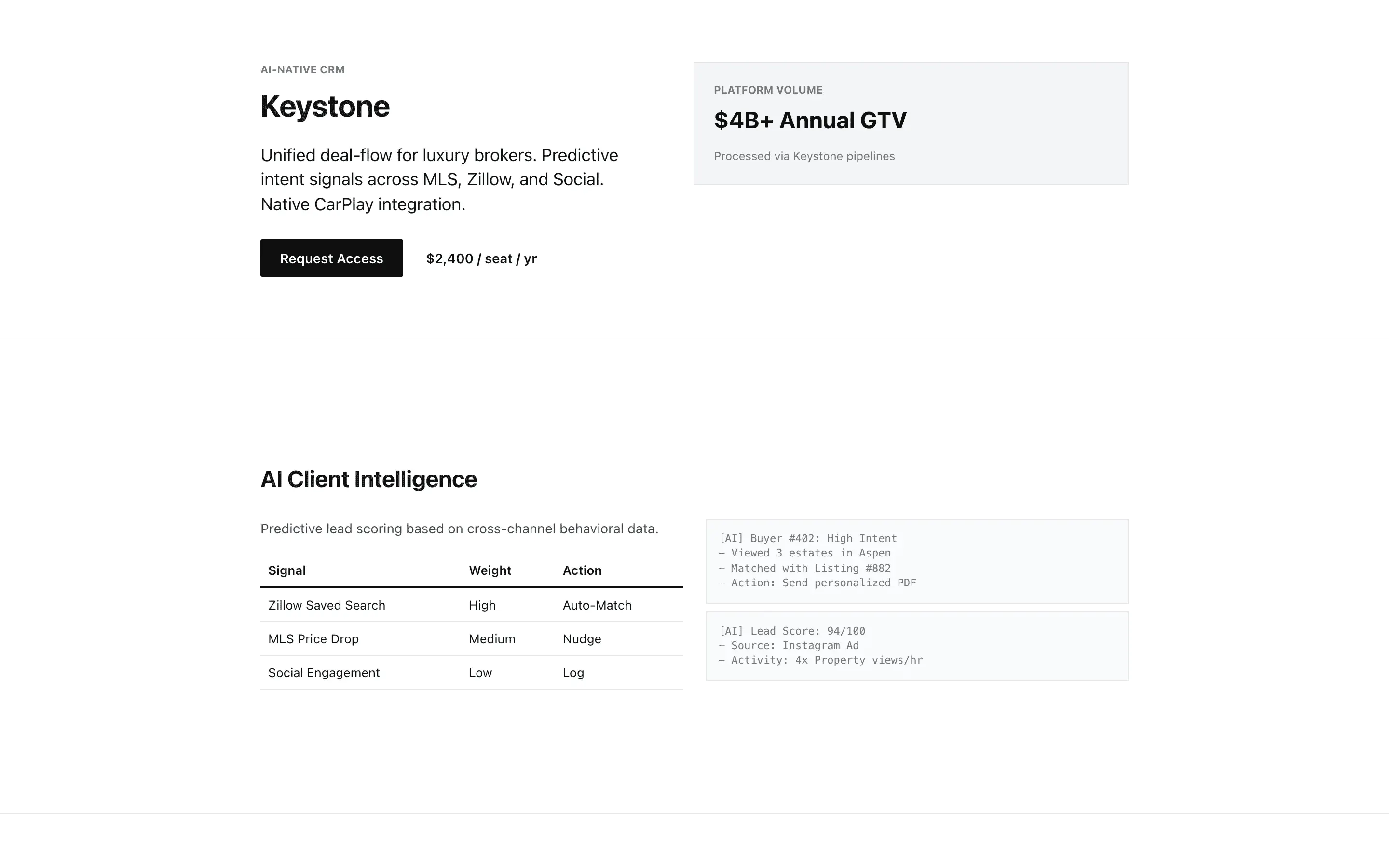

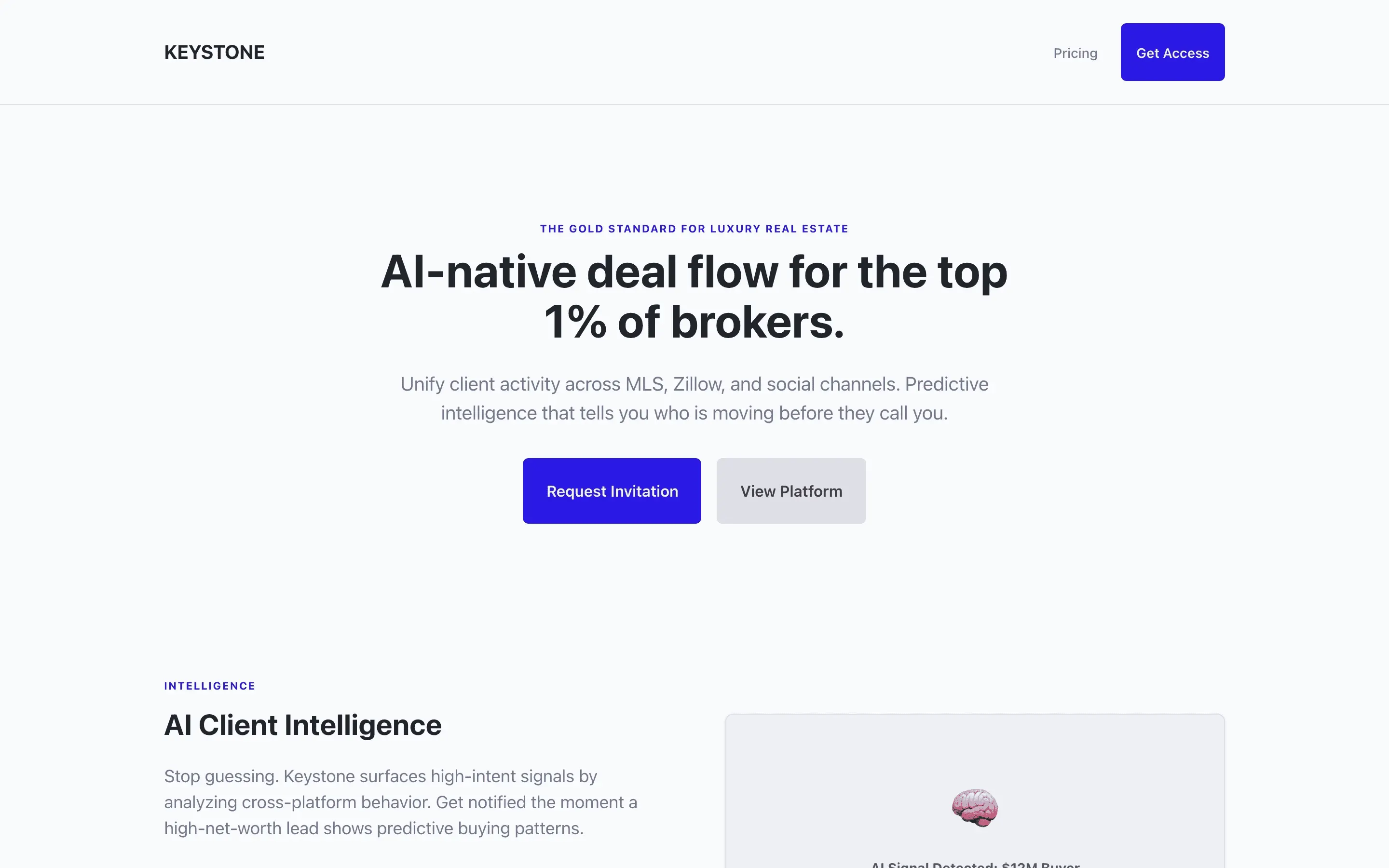

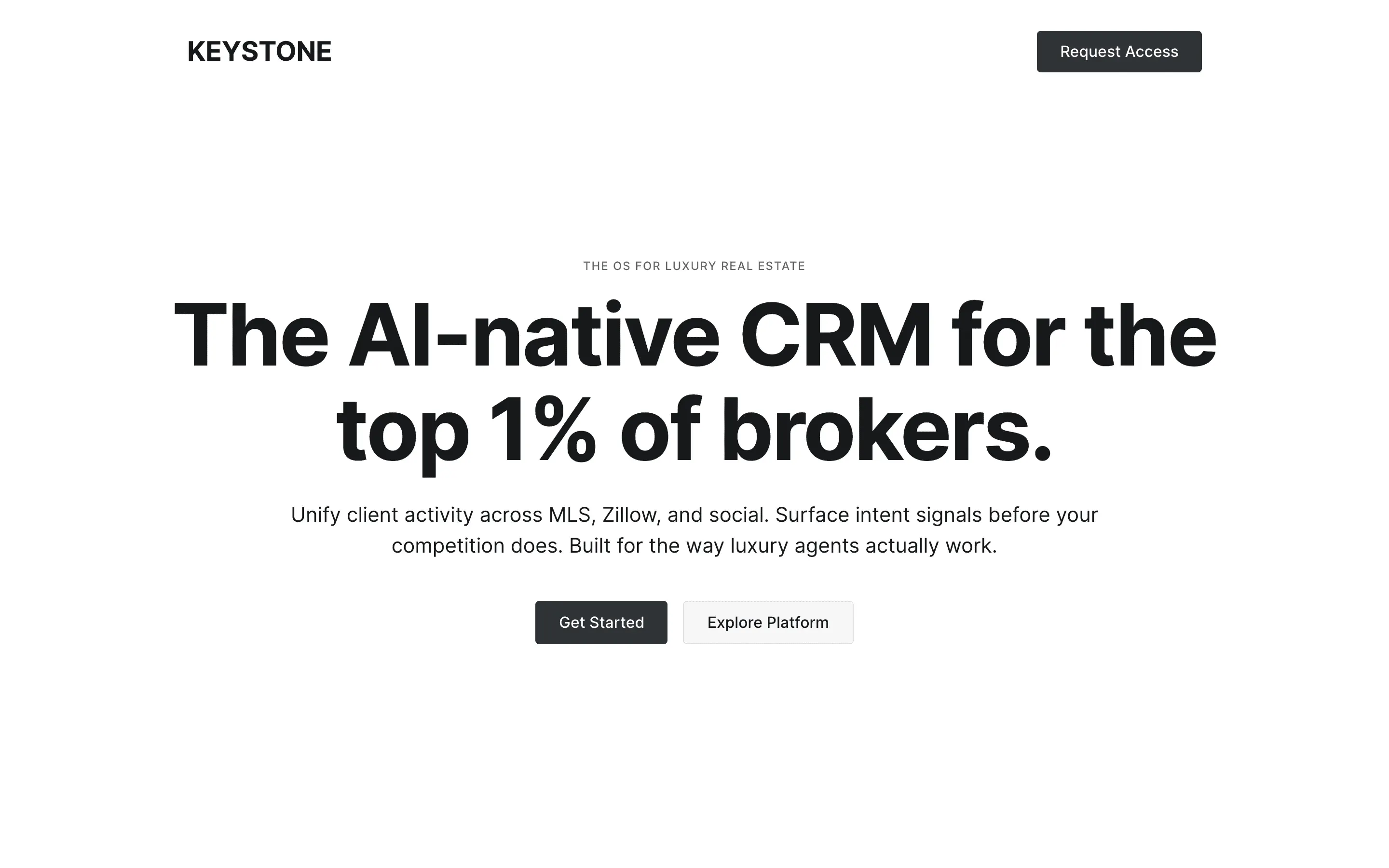

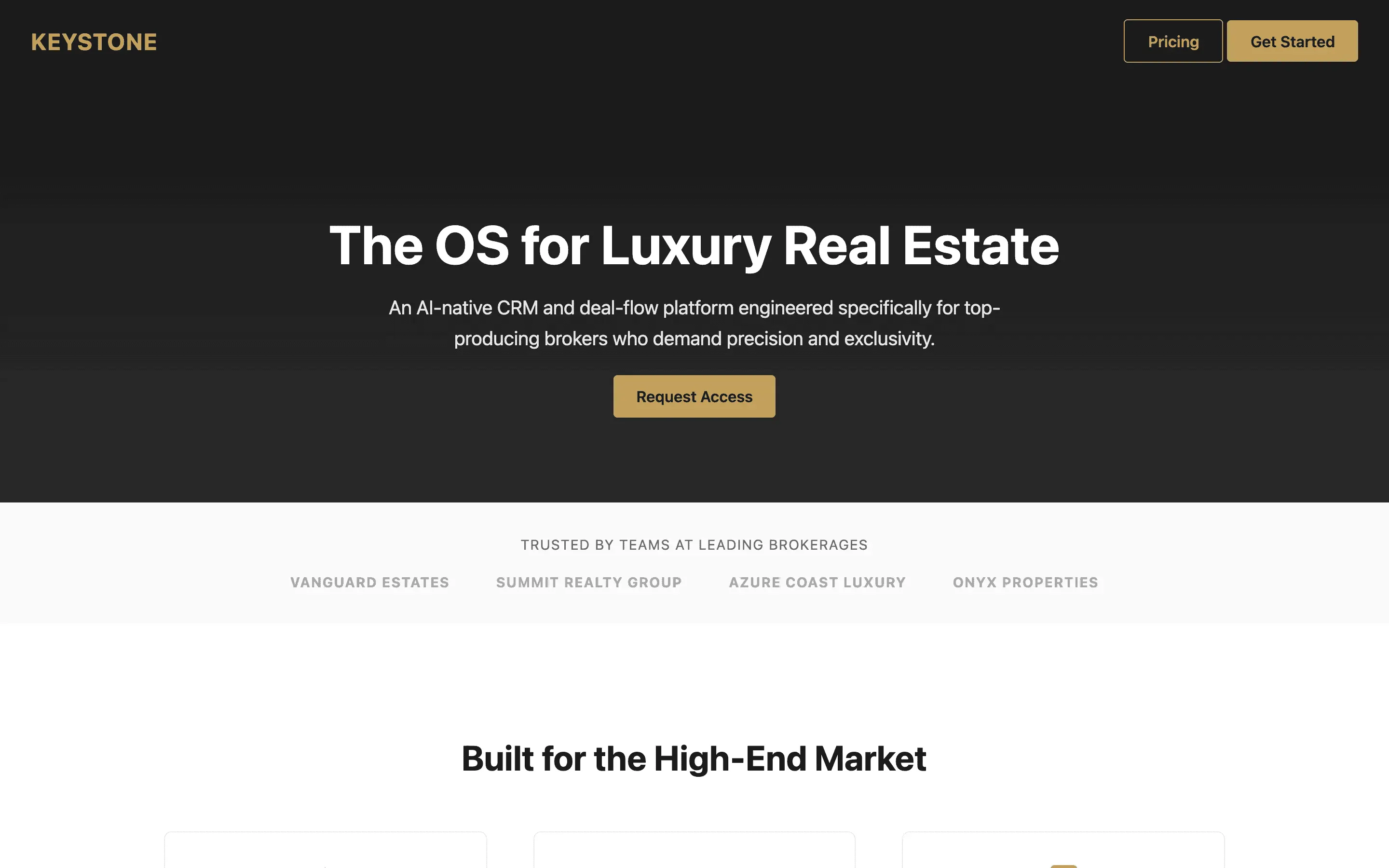

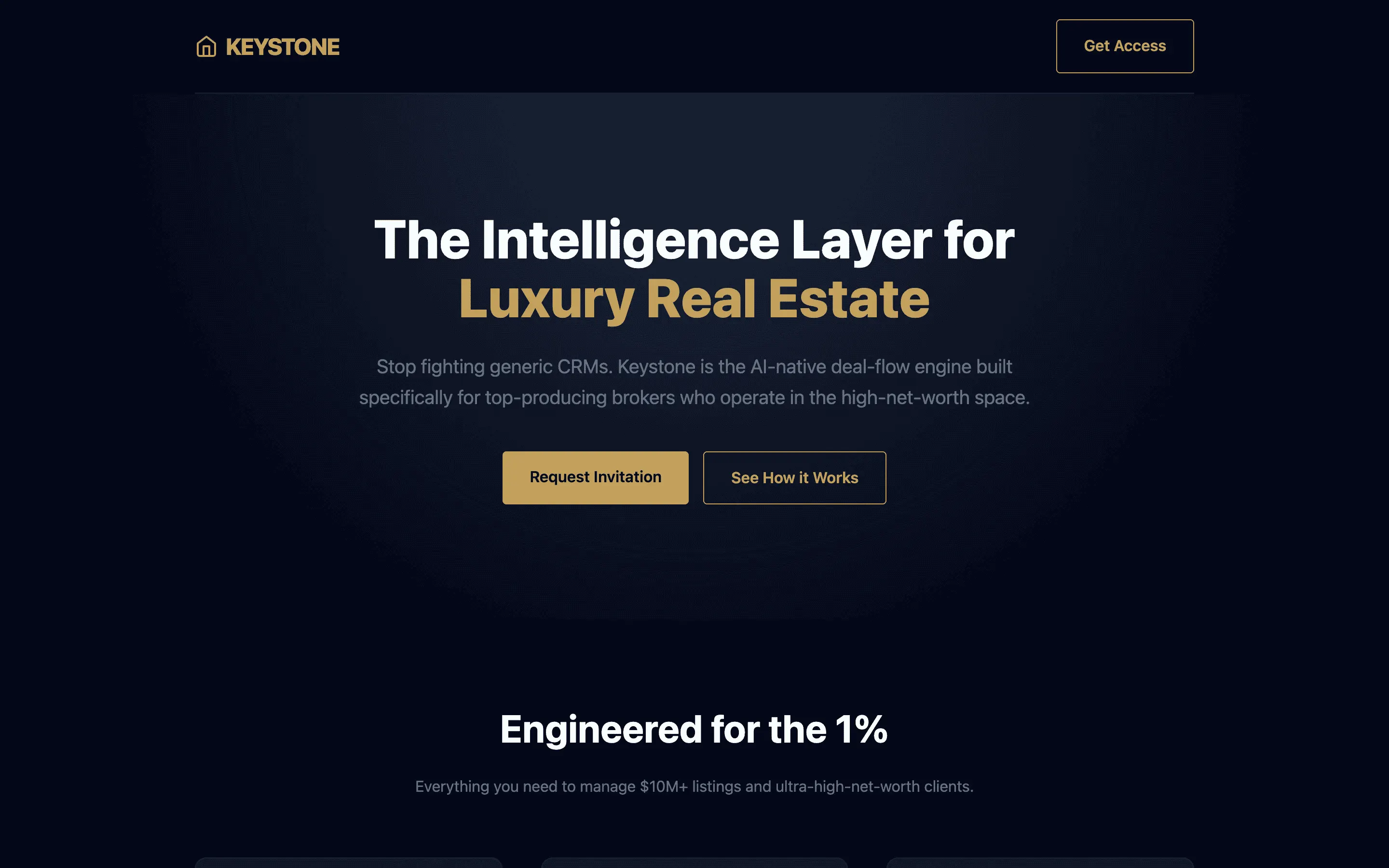

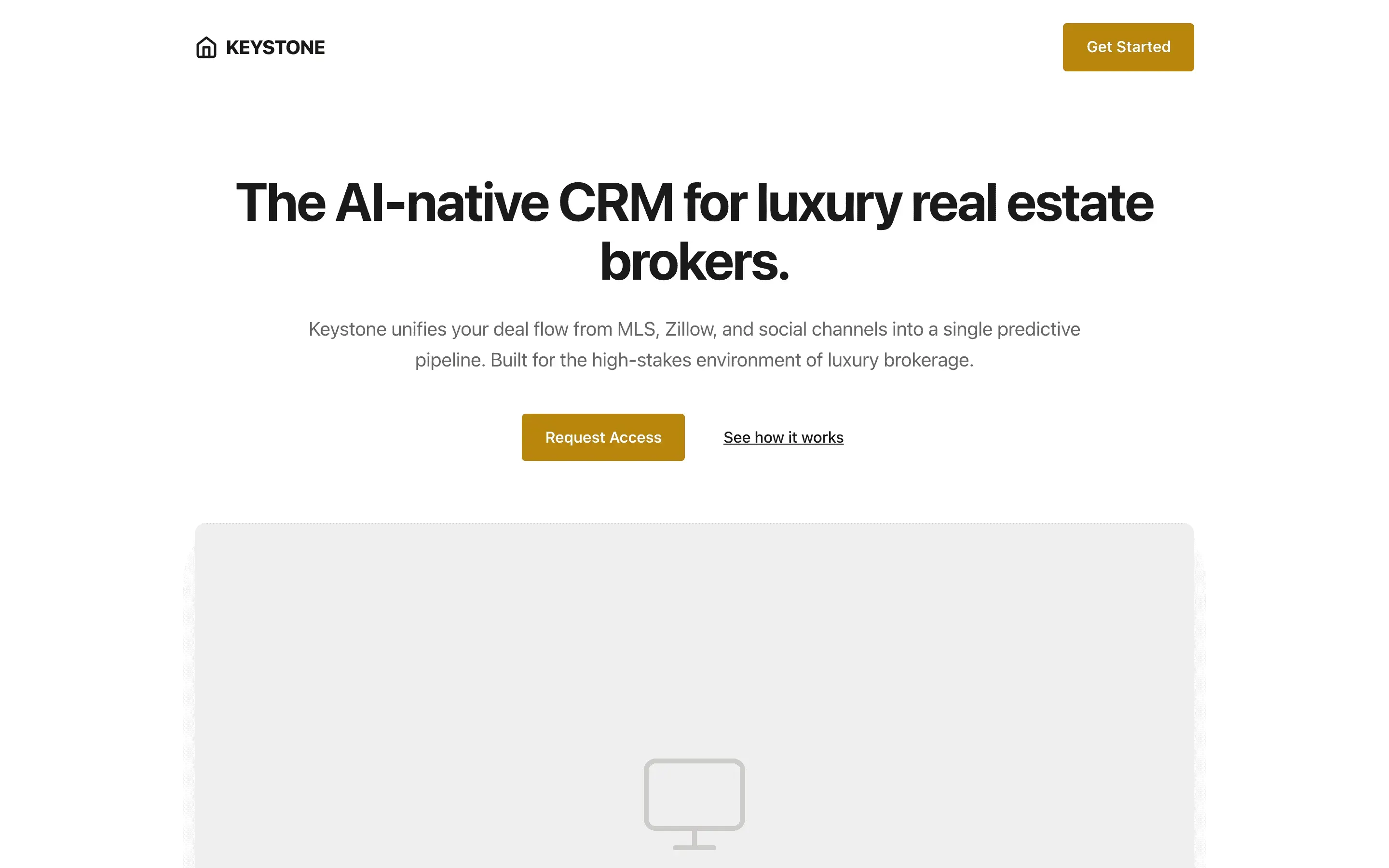

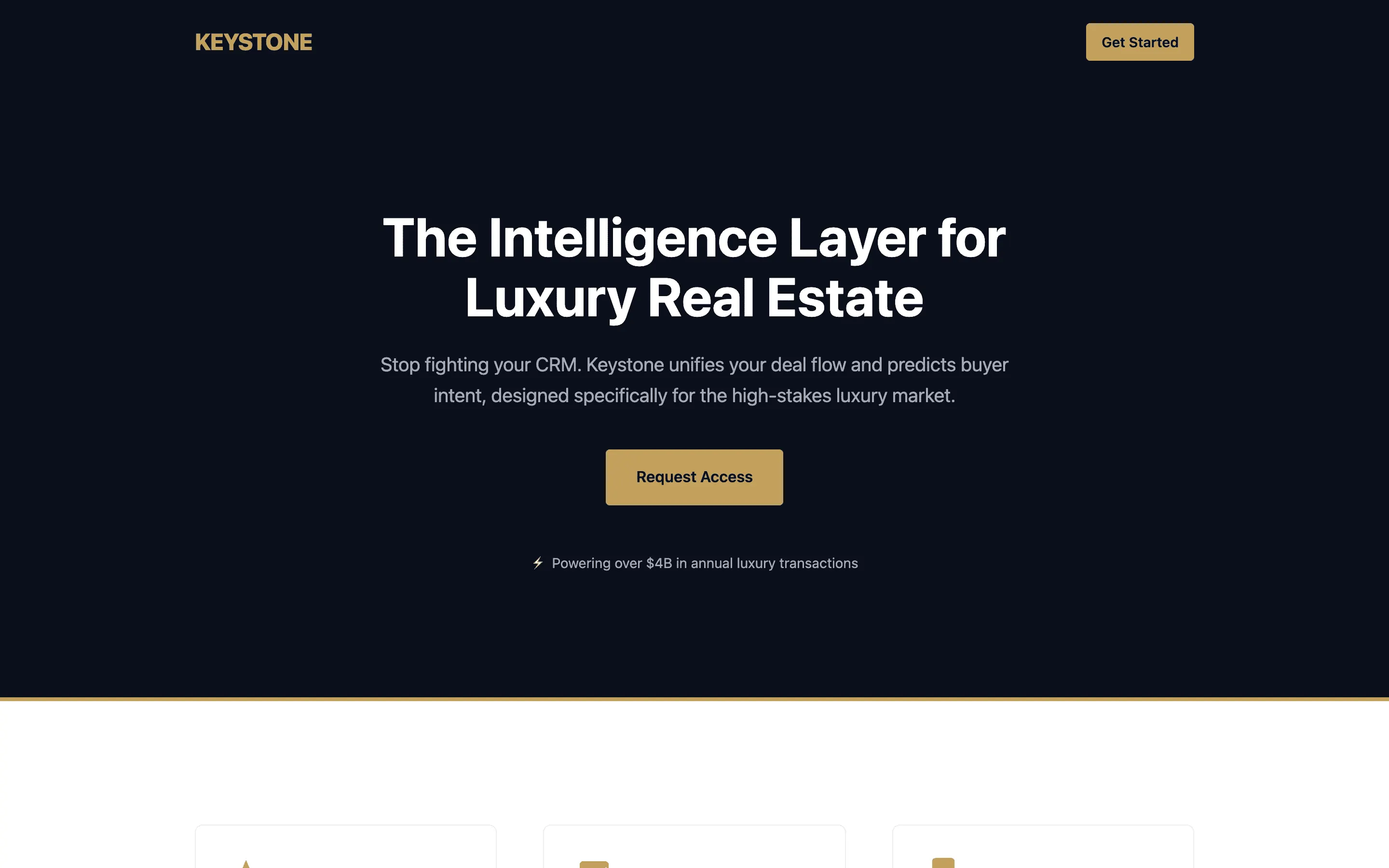

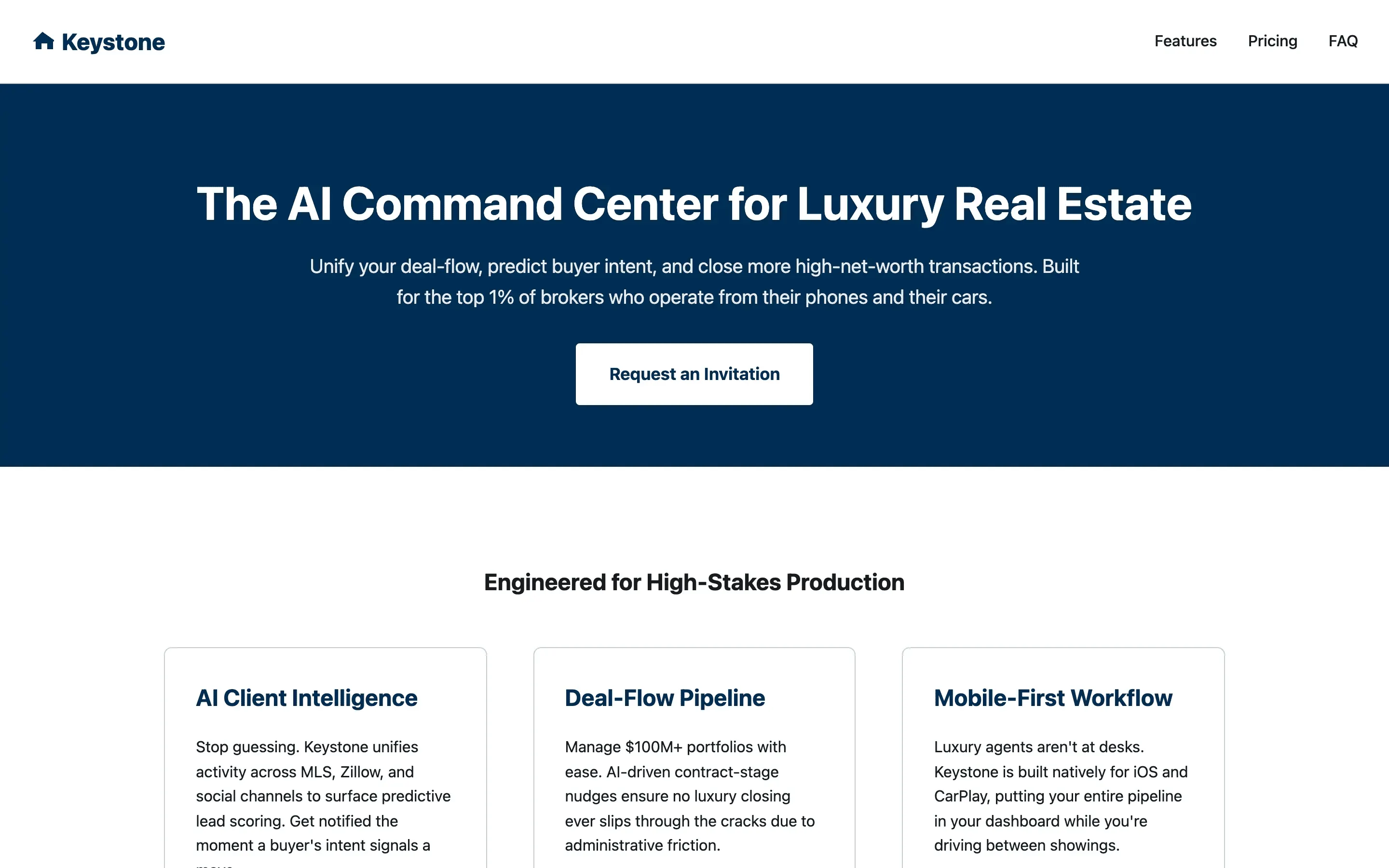

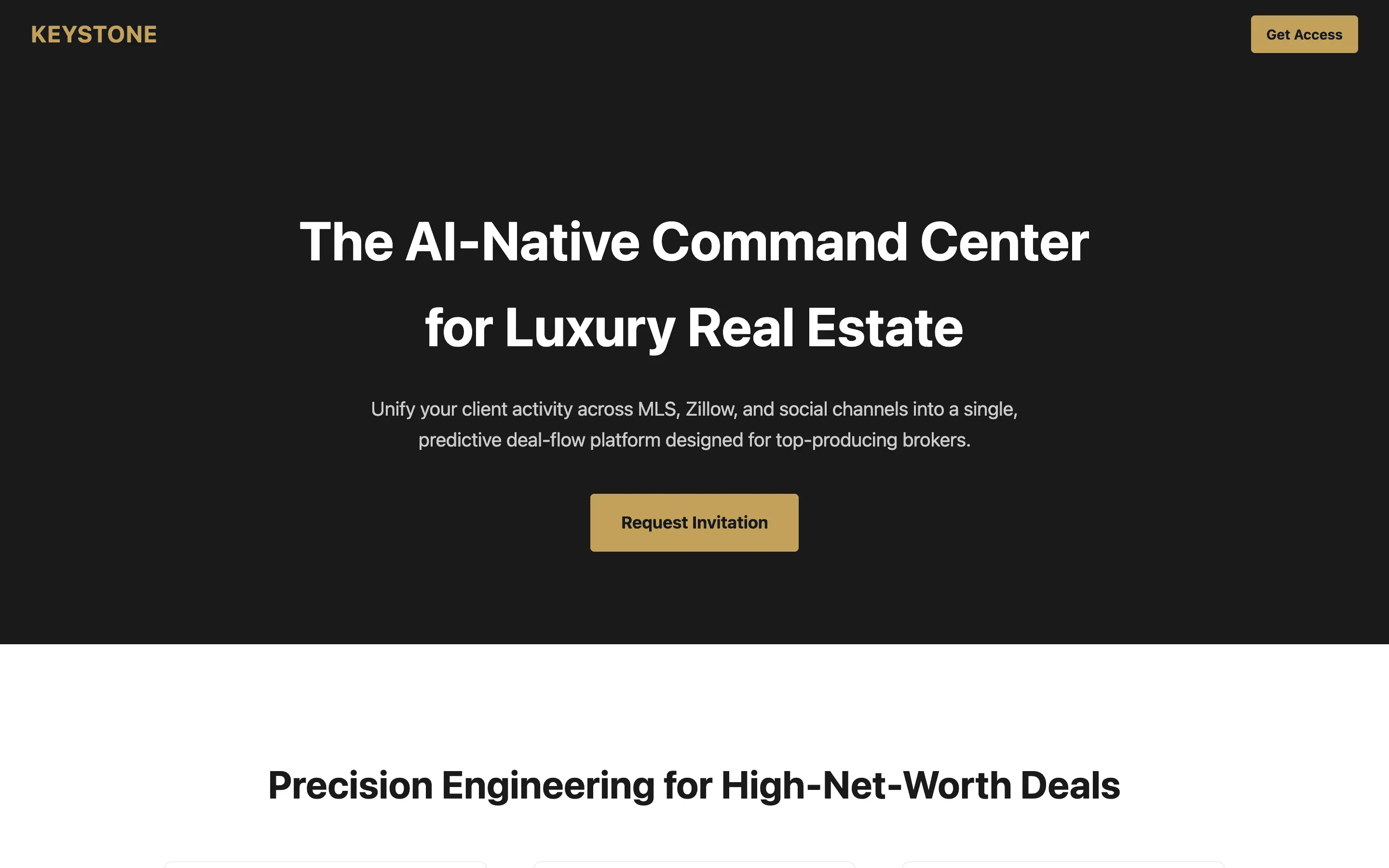

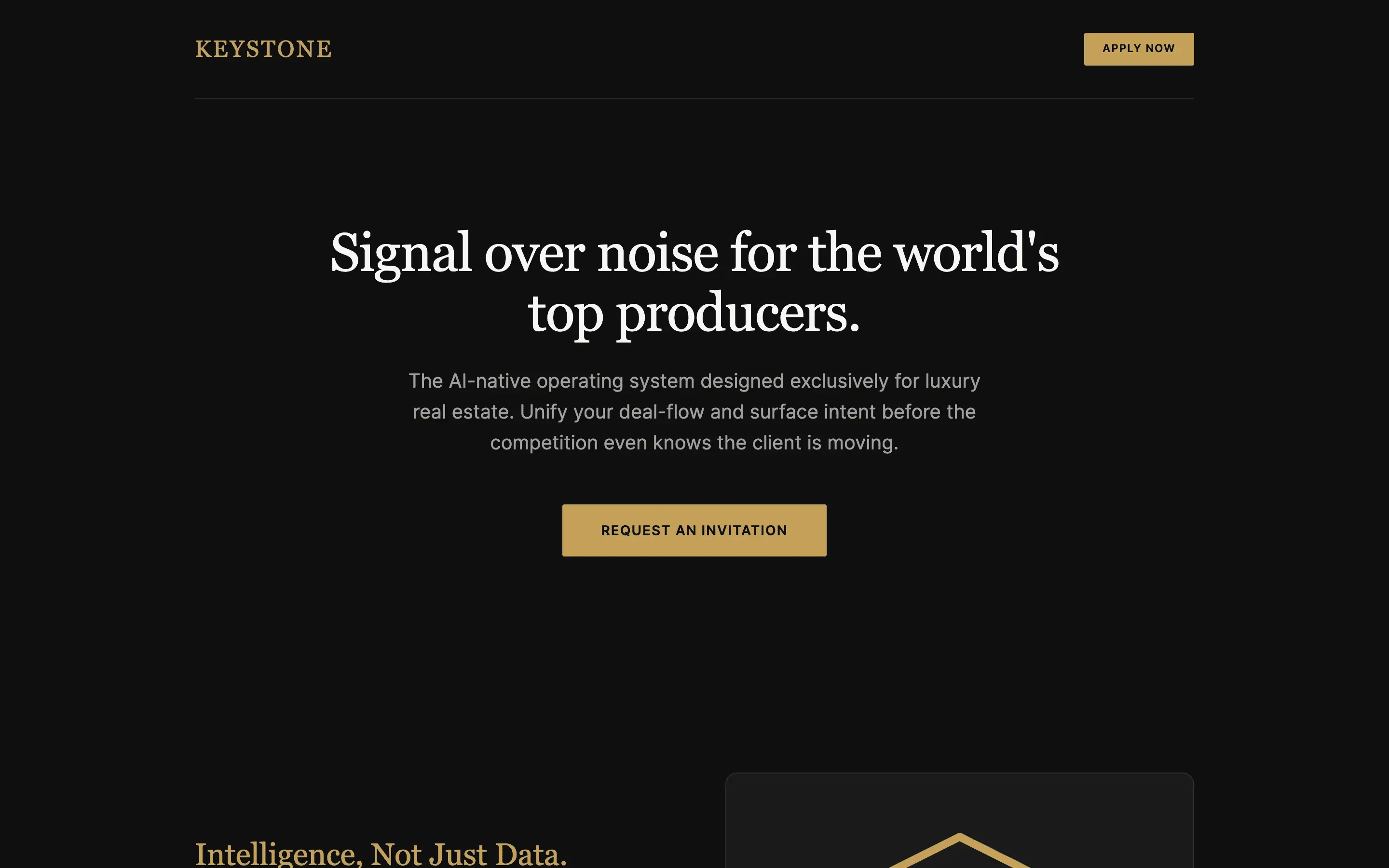

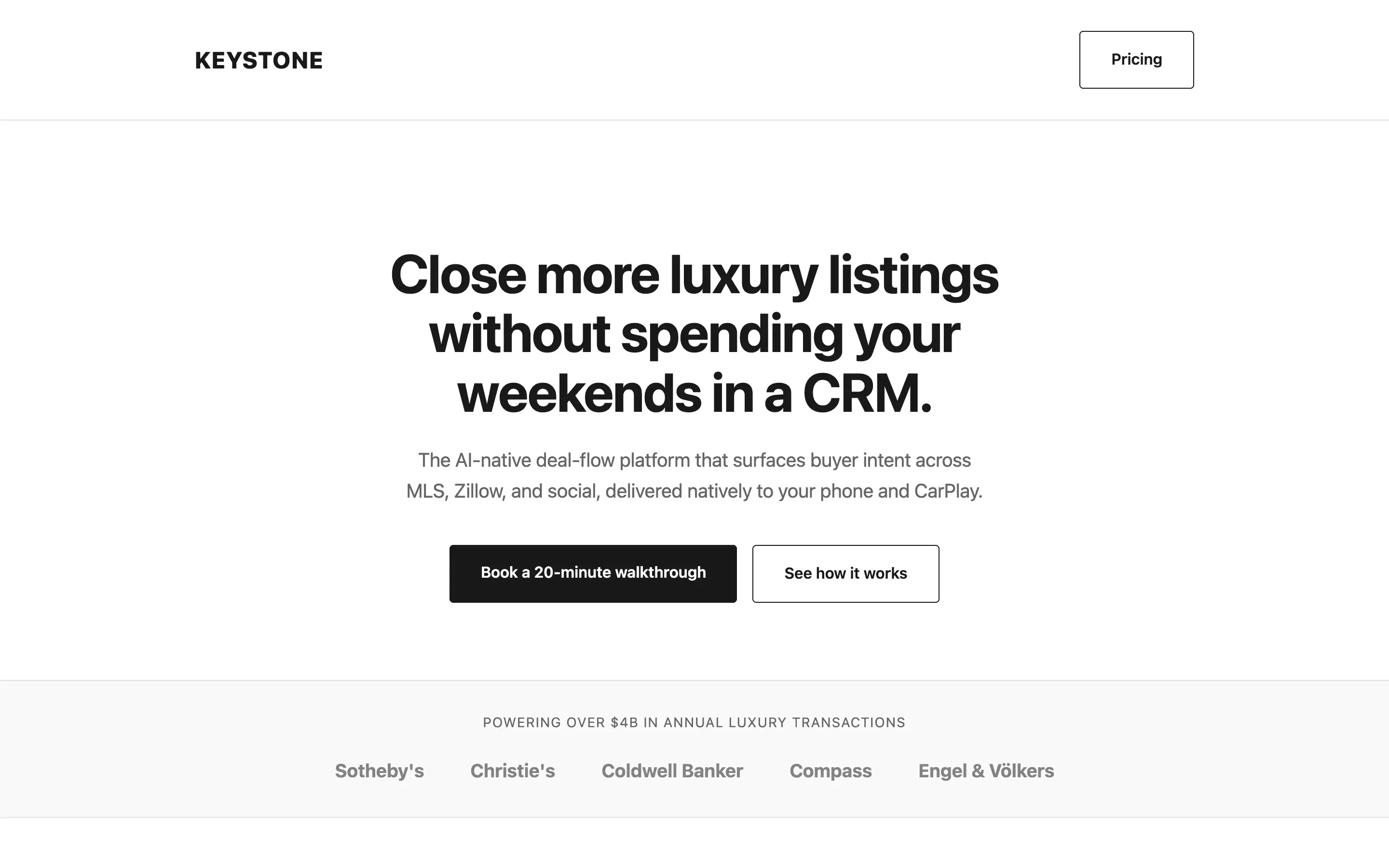

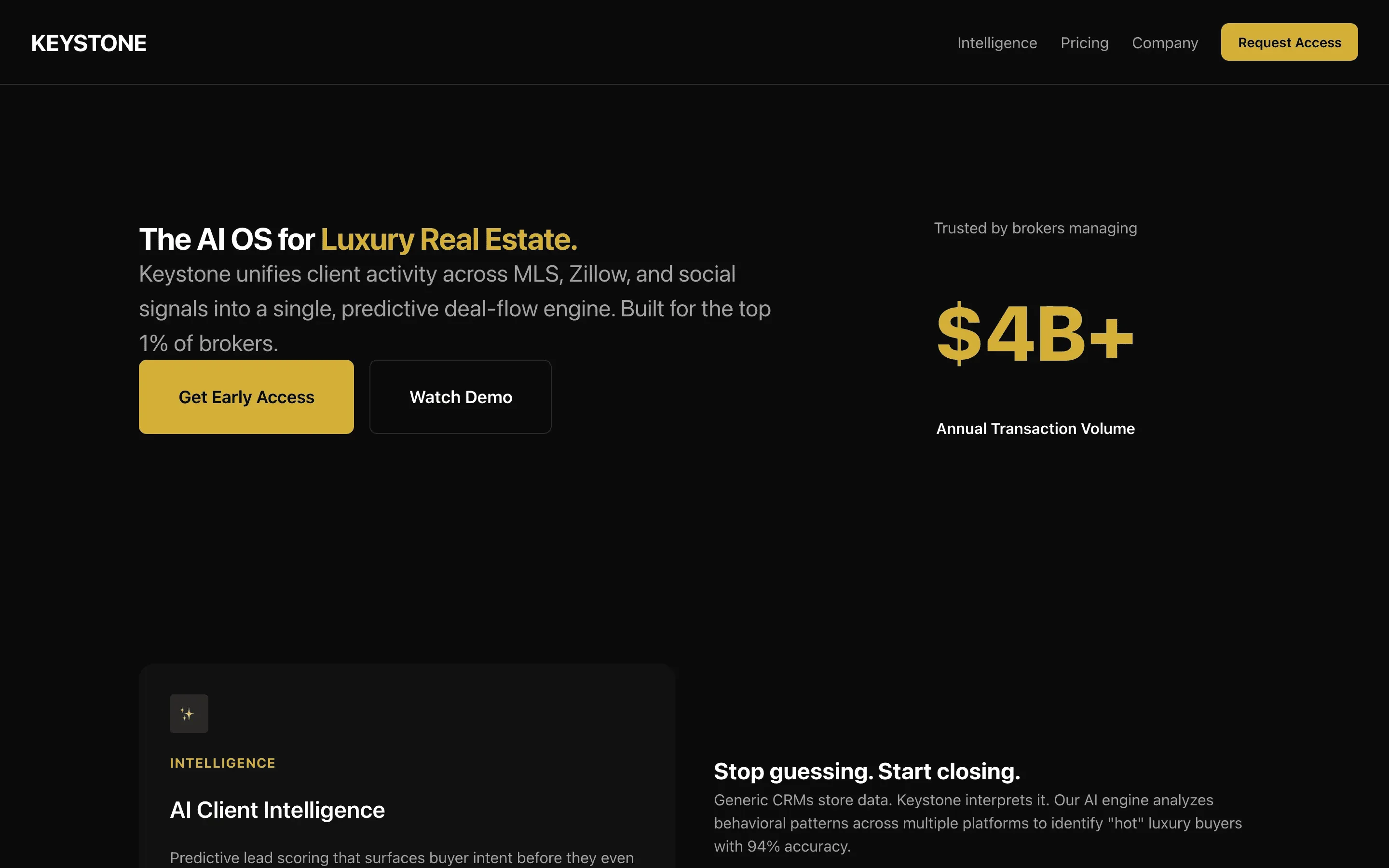

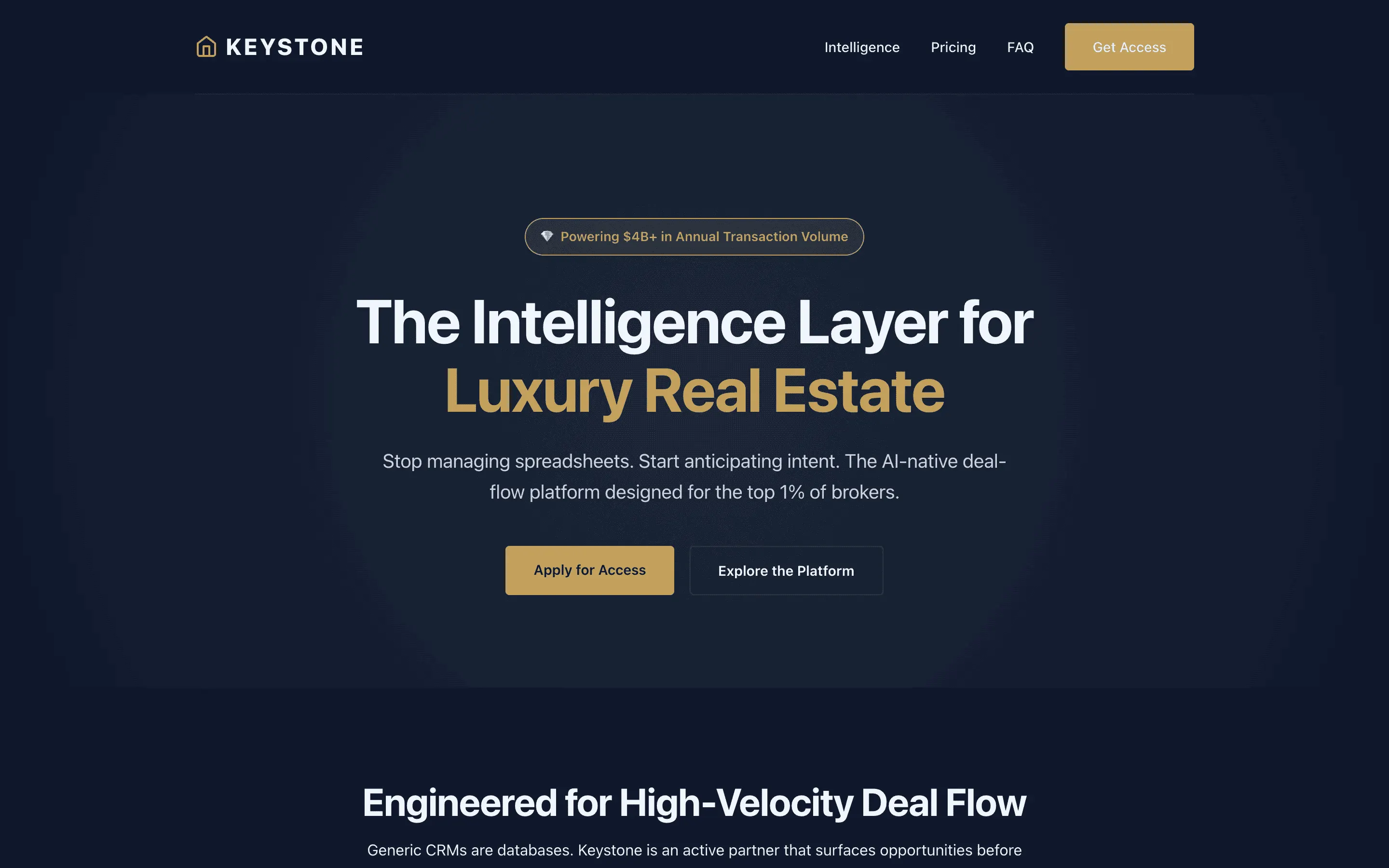

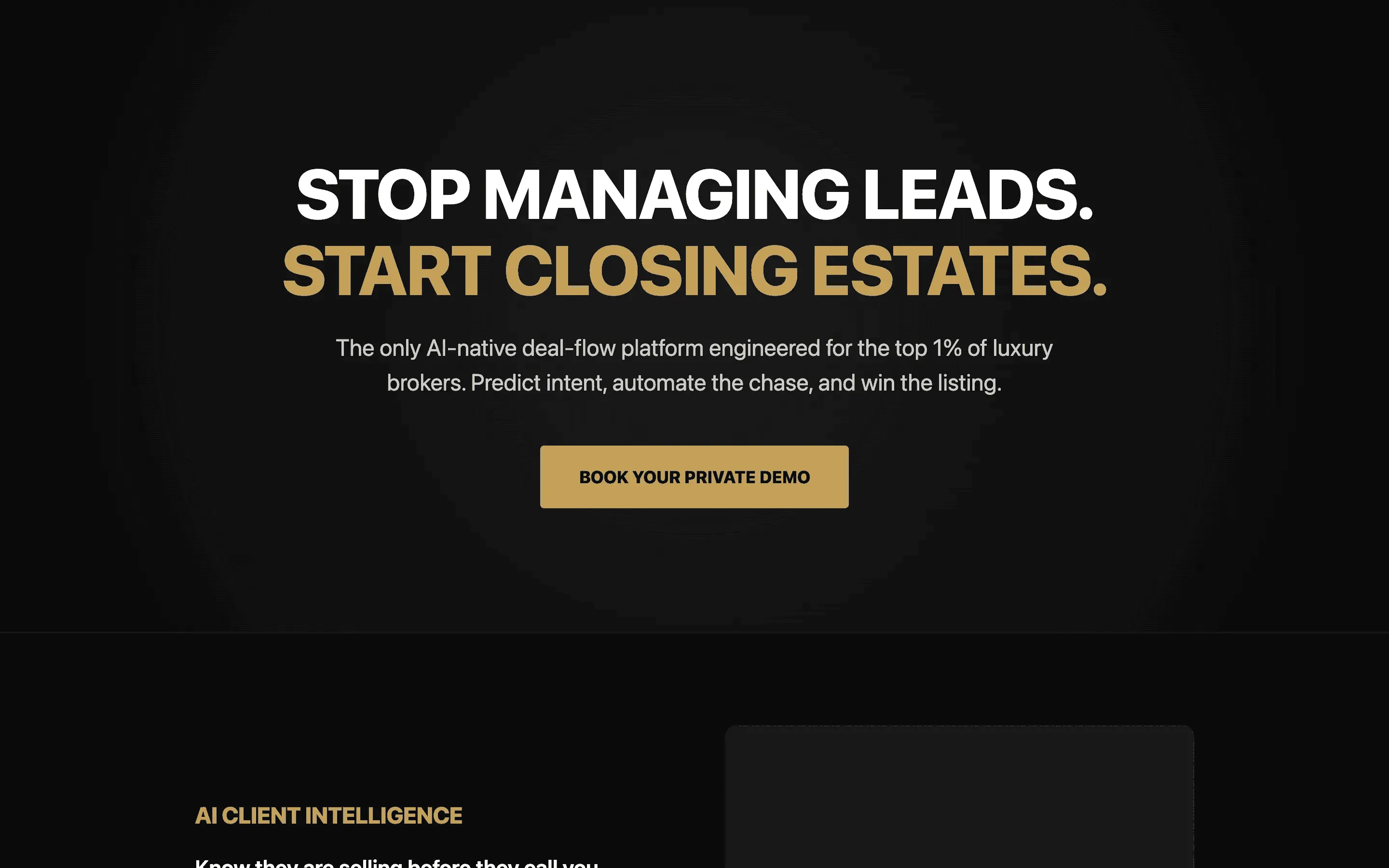

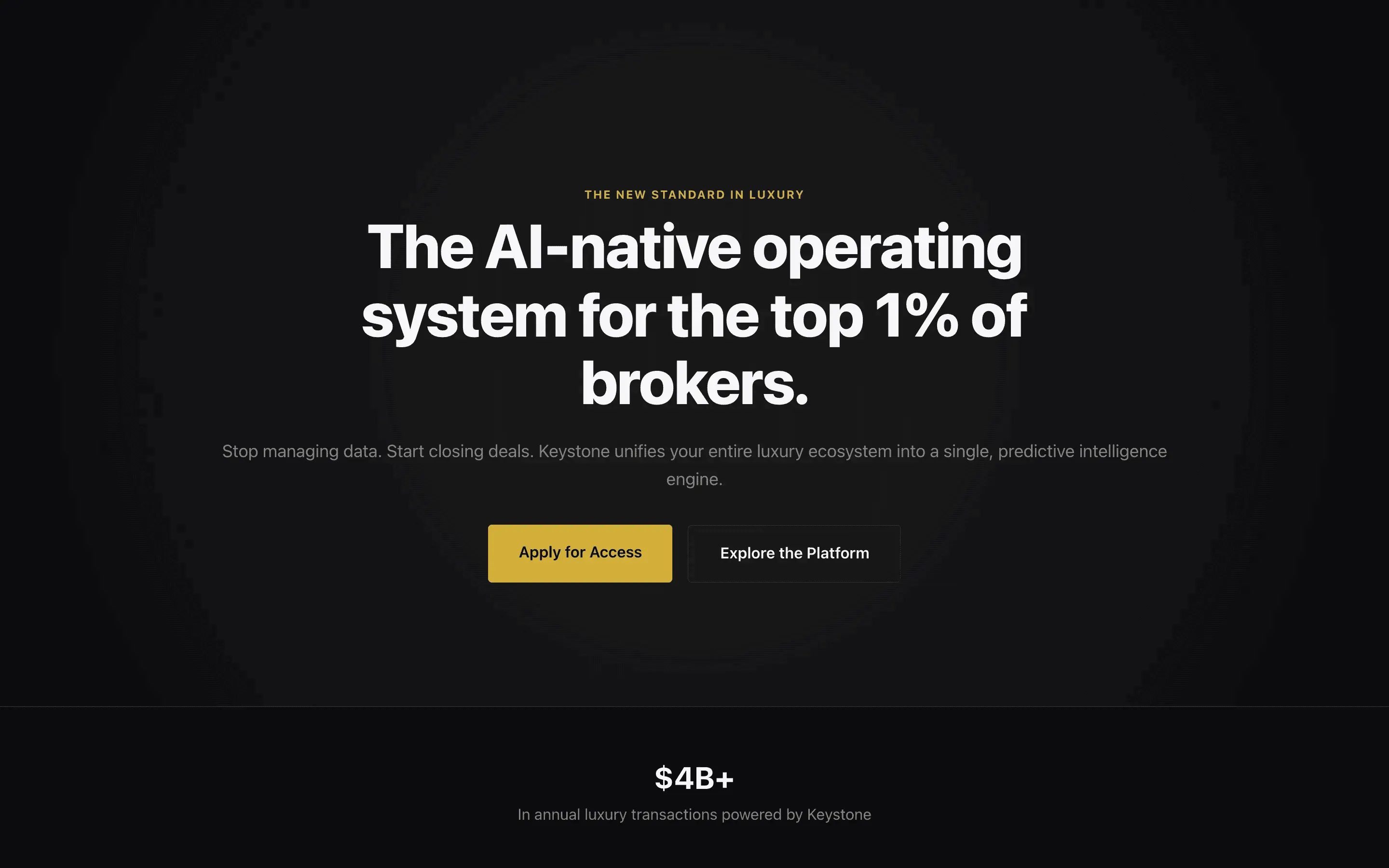

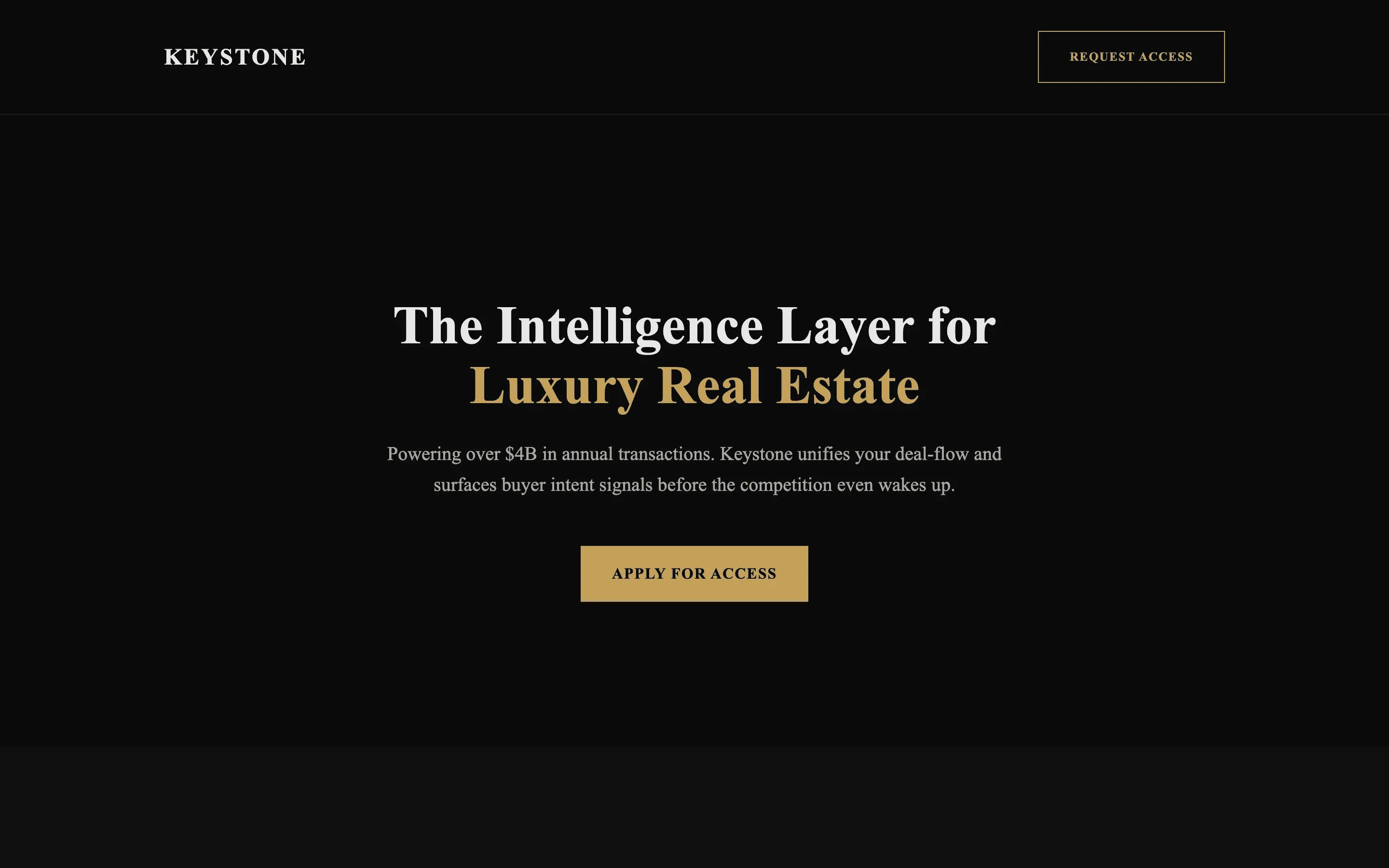

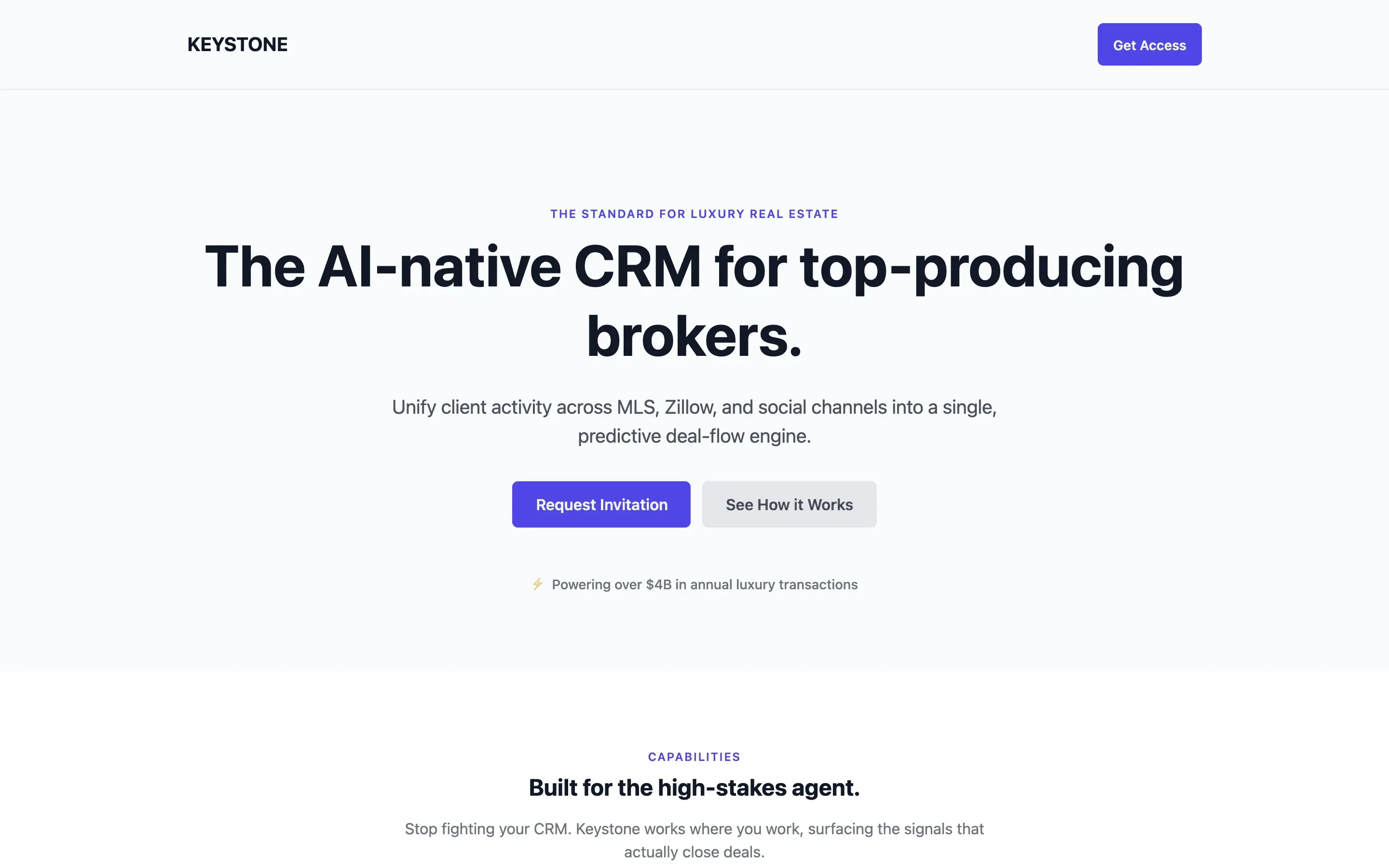

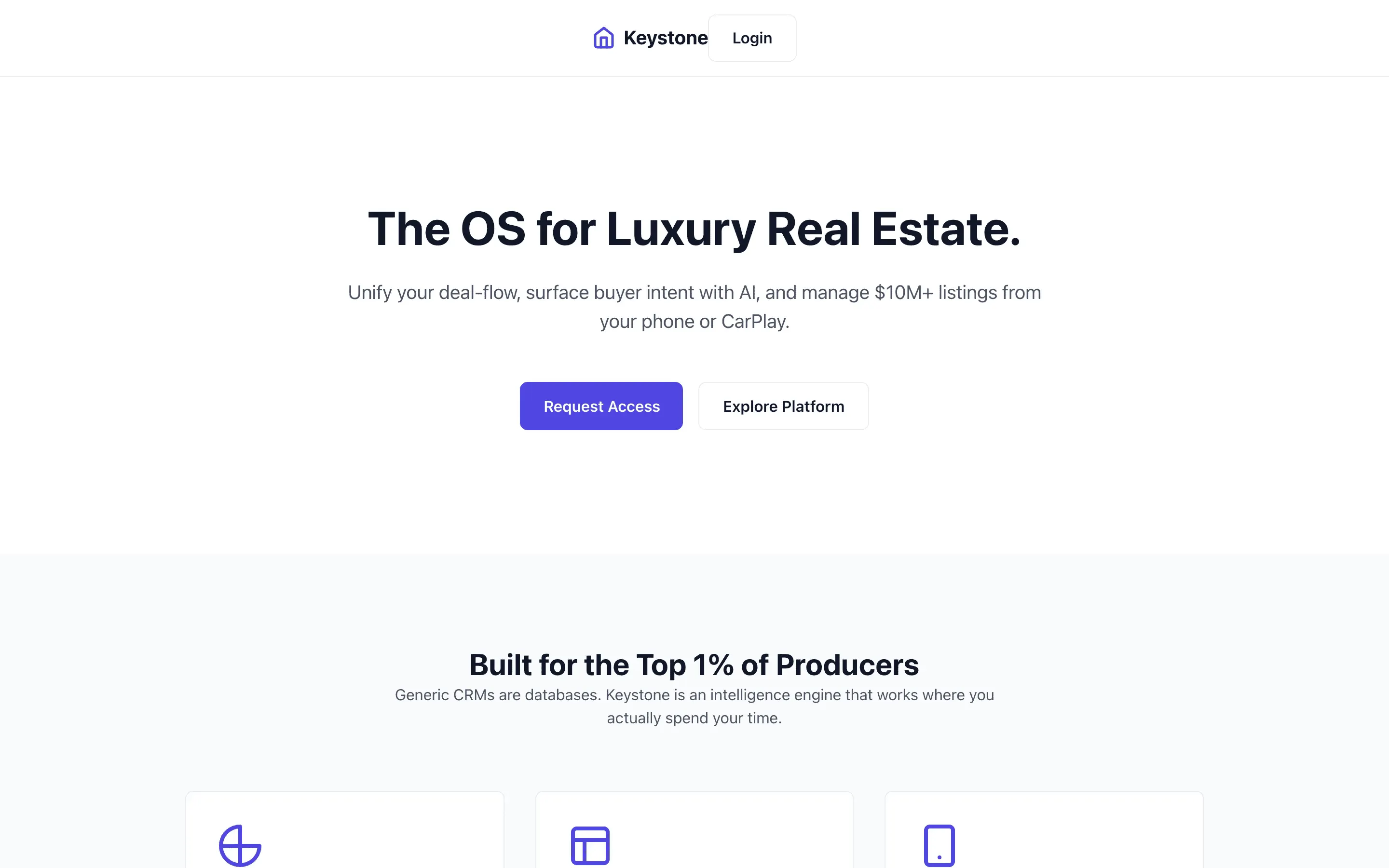

Stripe SVP of Design (expansive)

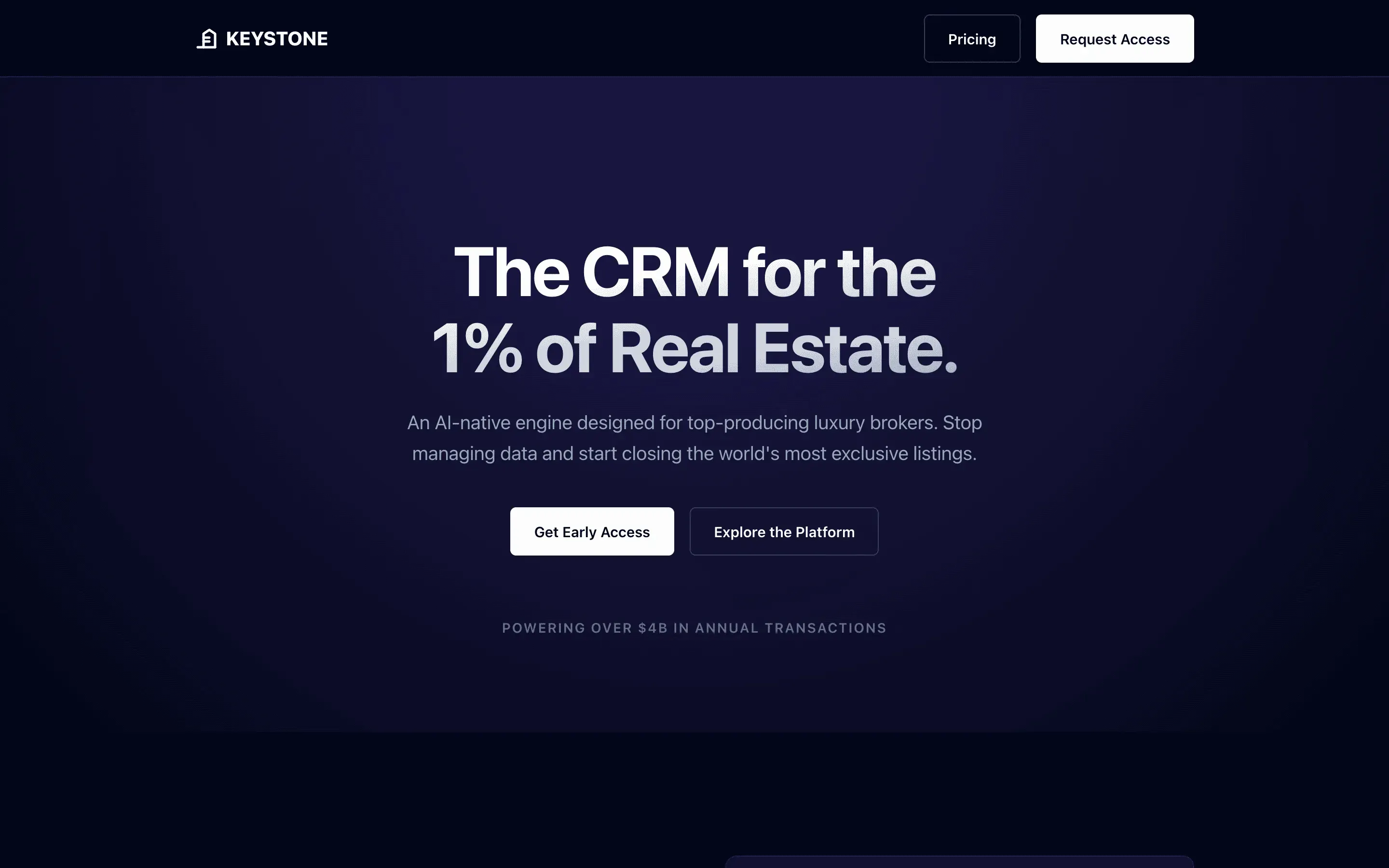

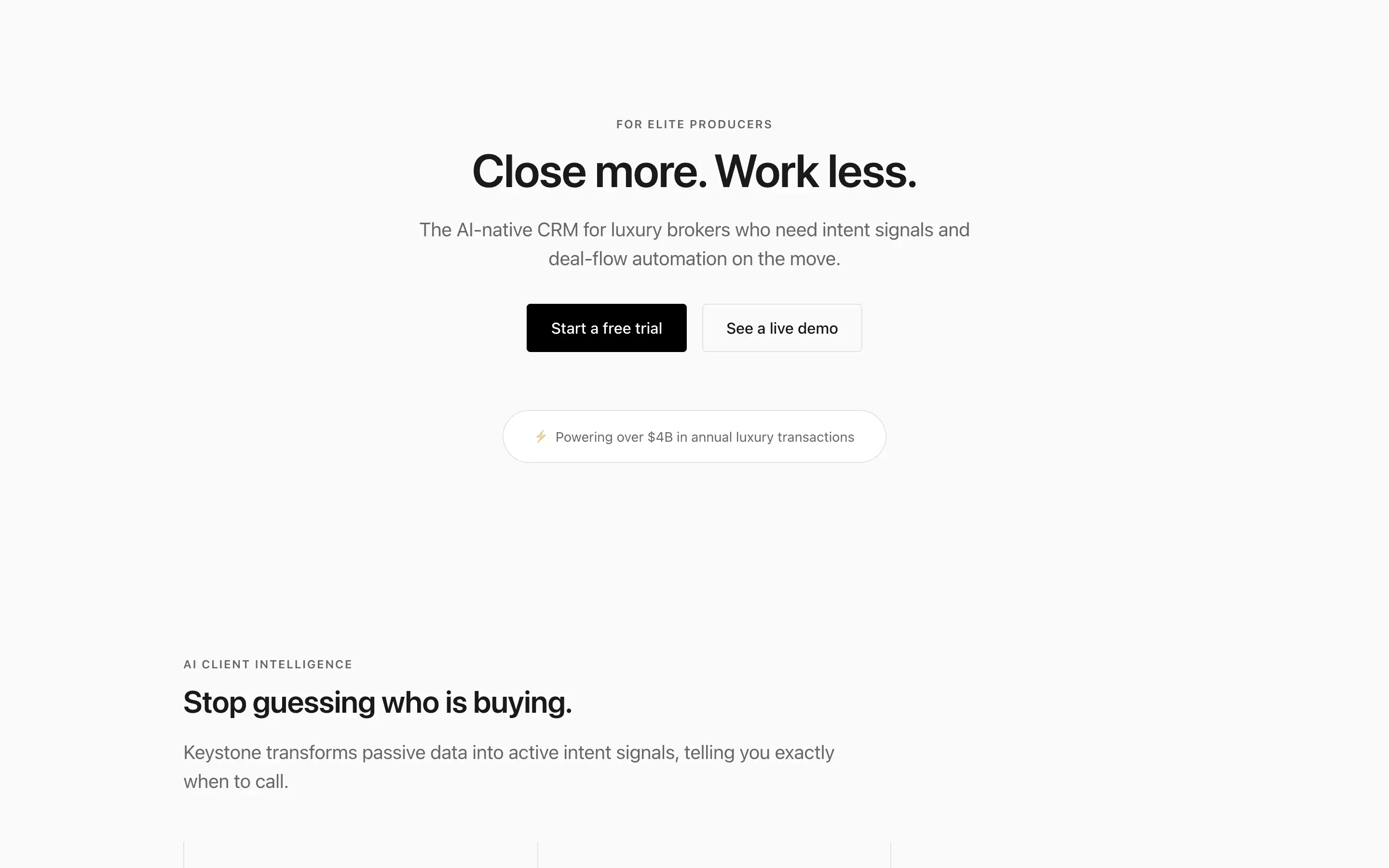

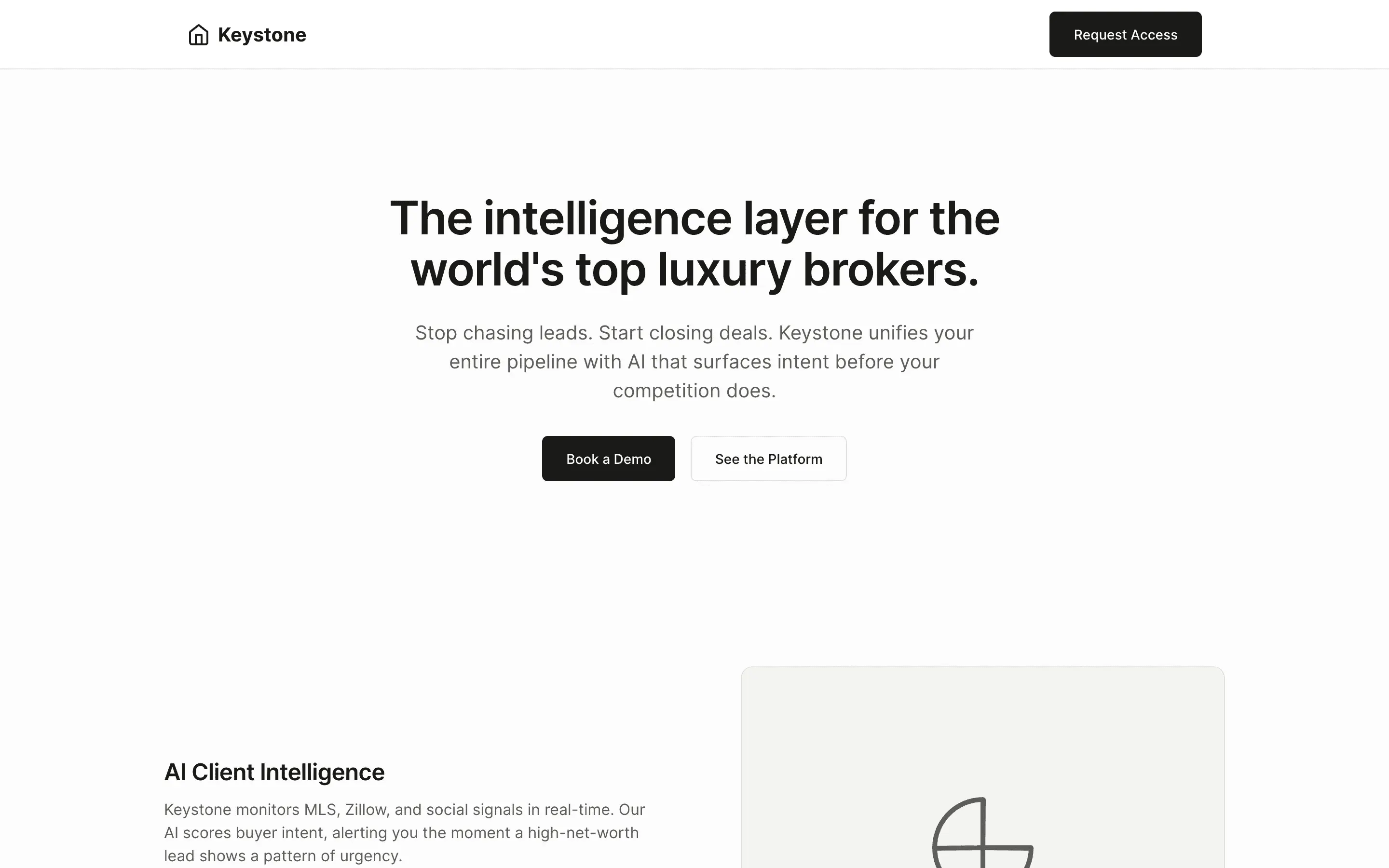

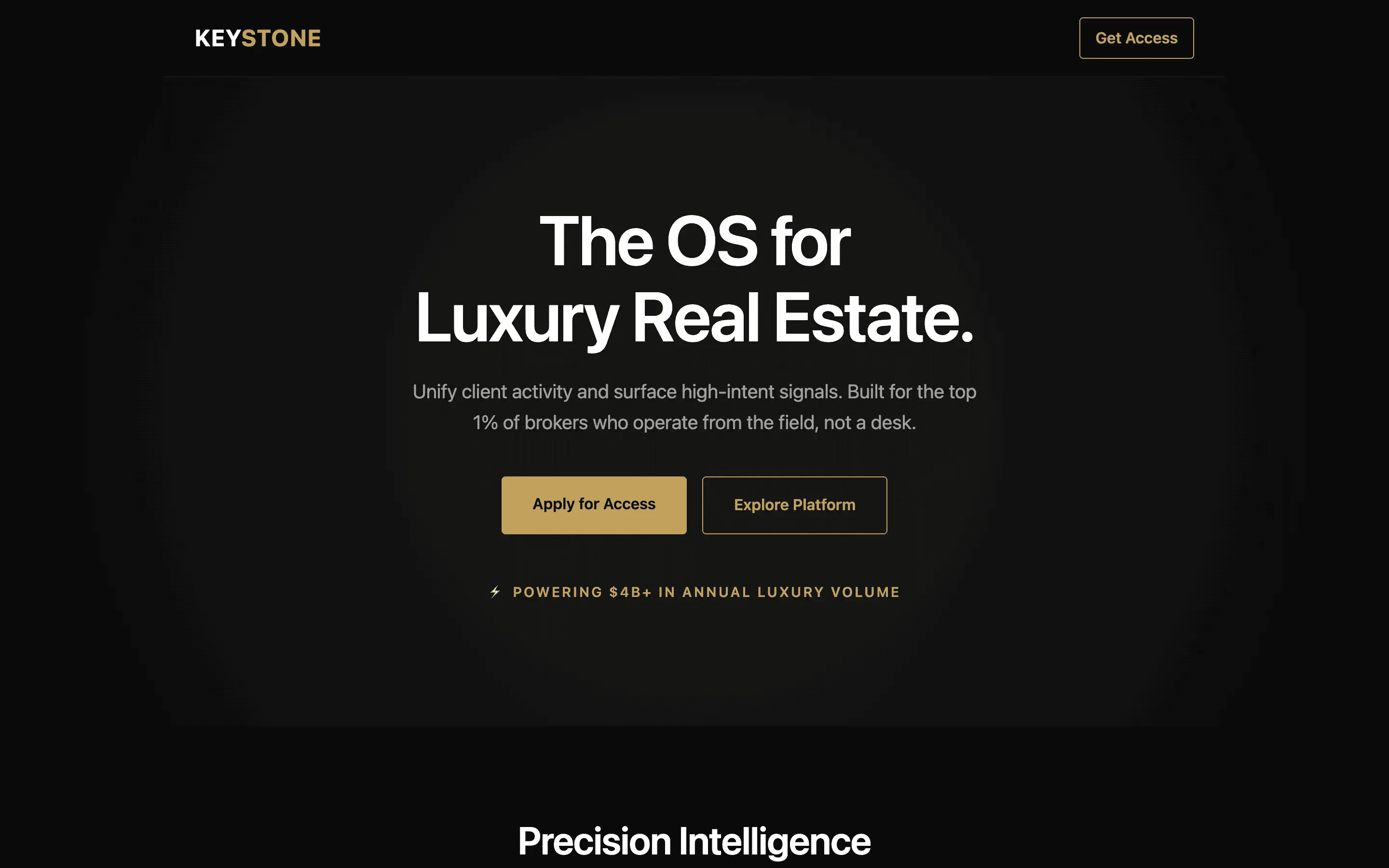

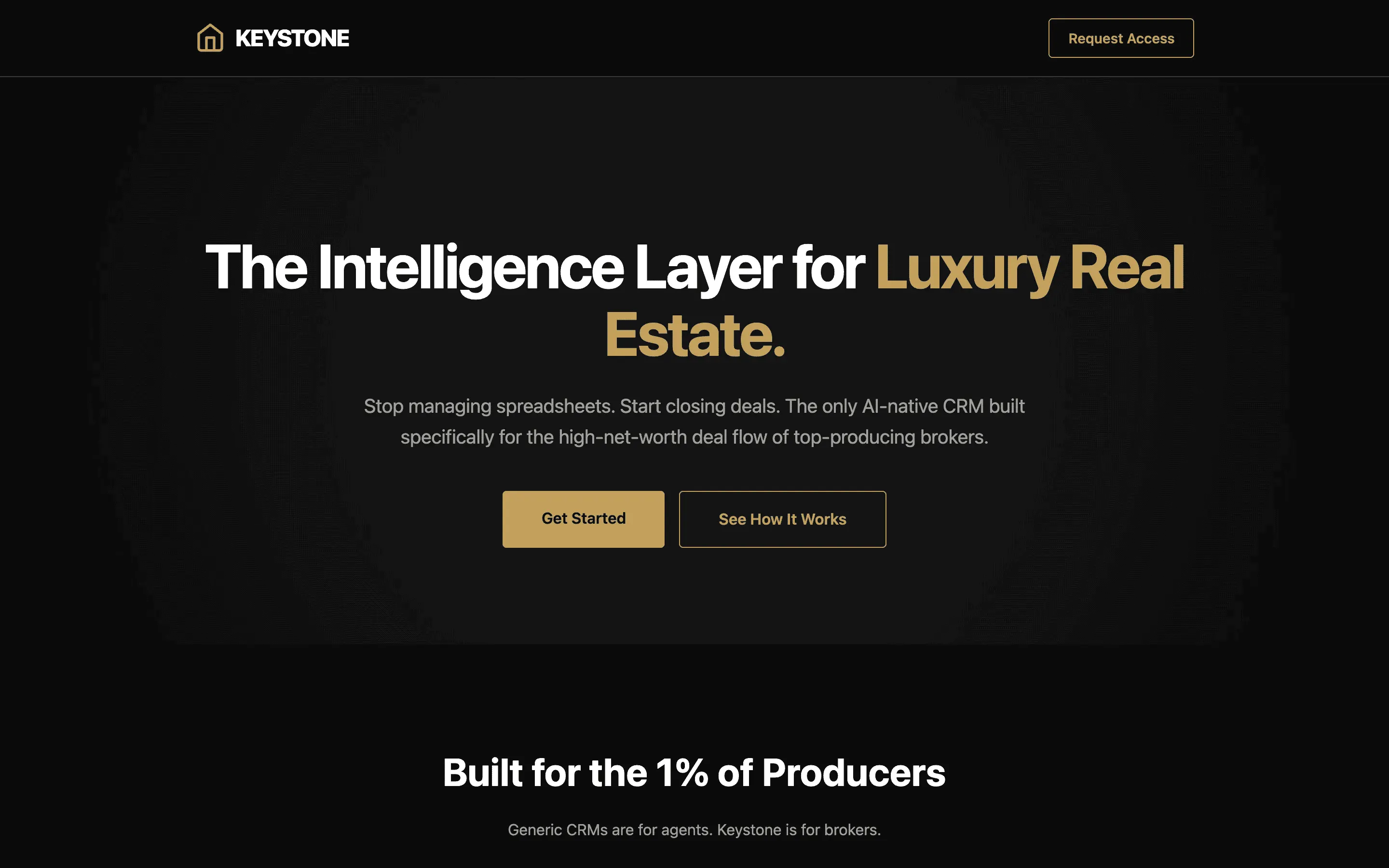

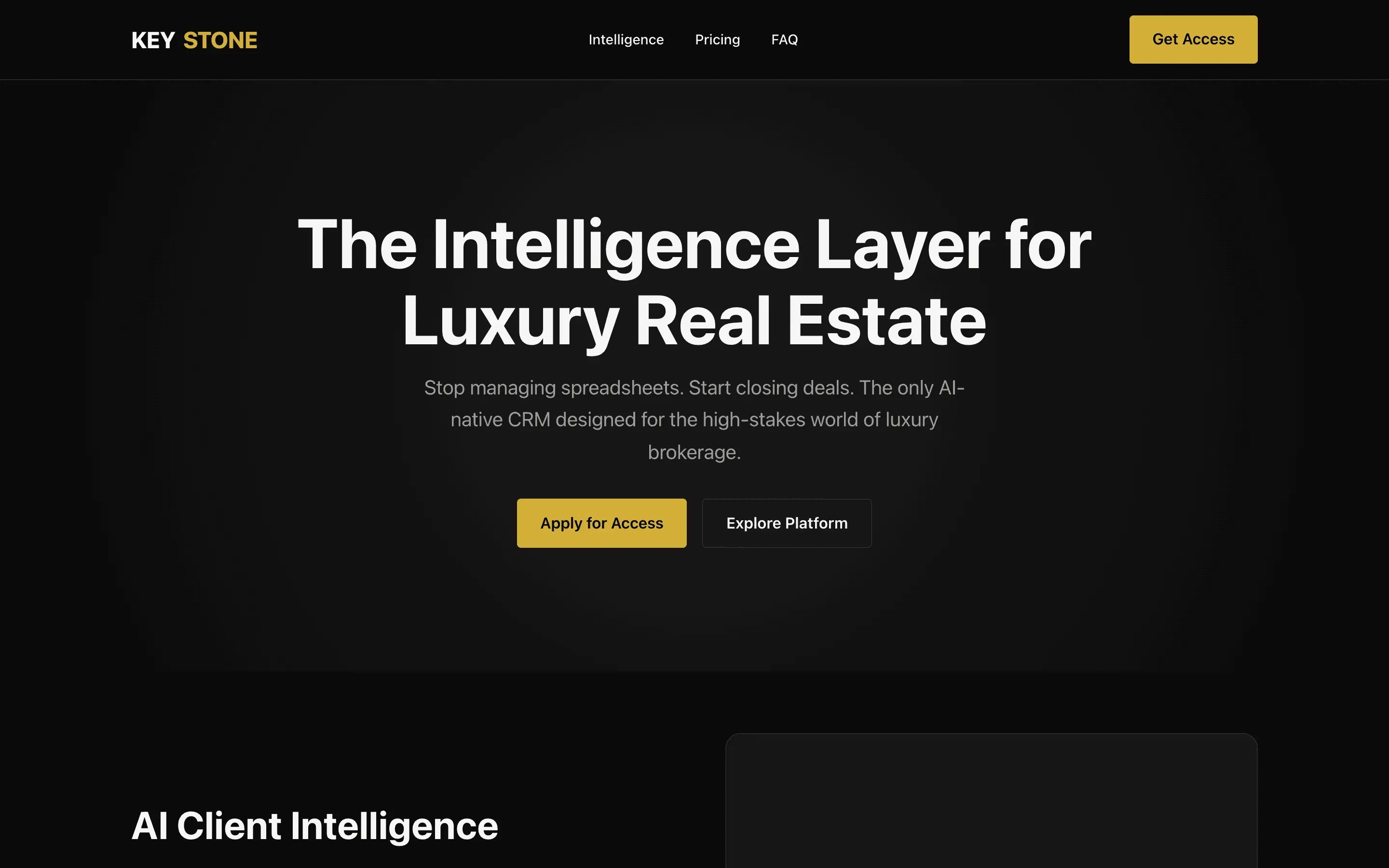

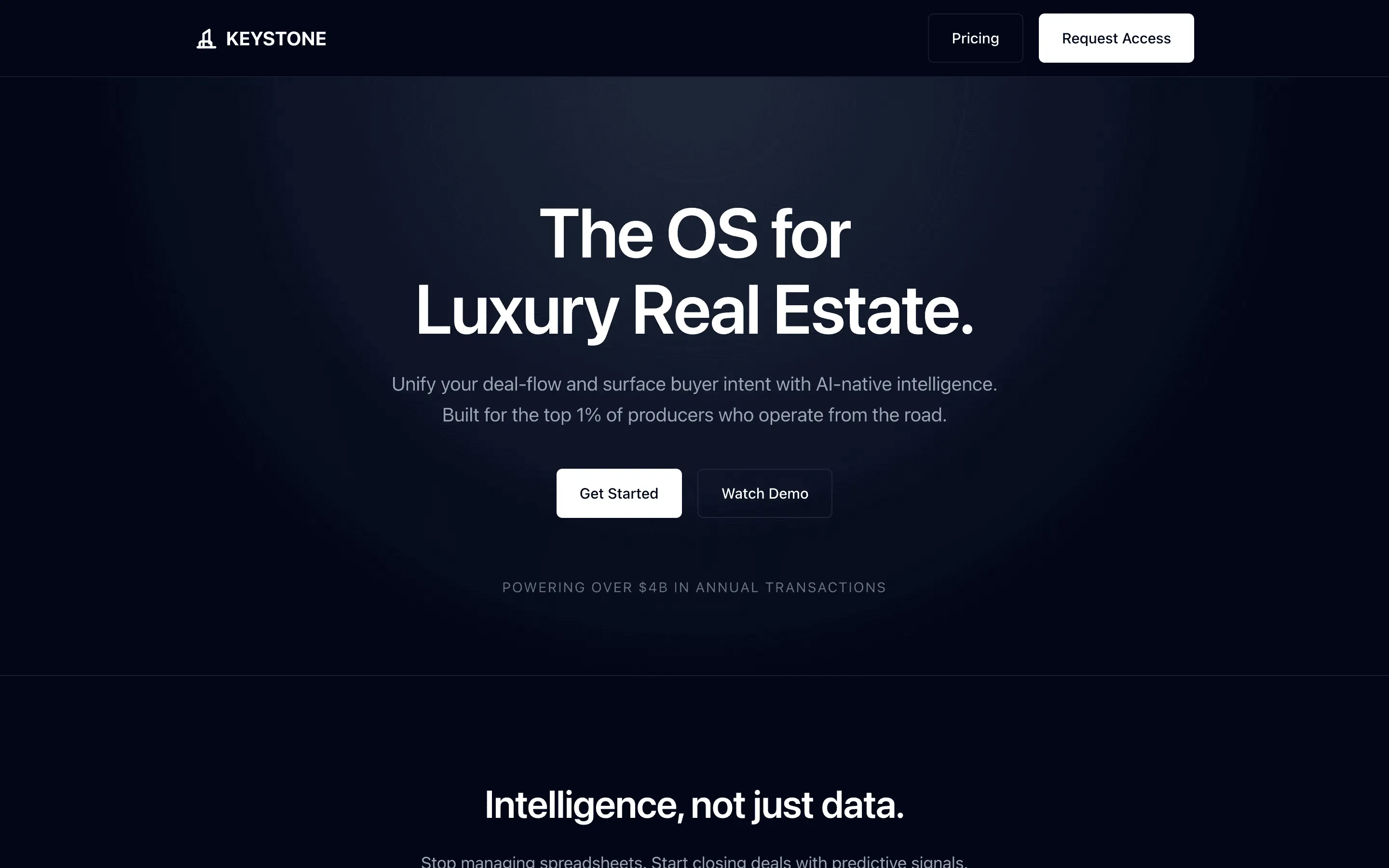

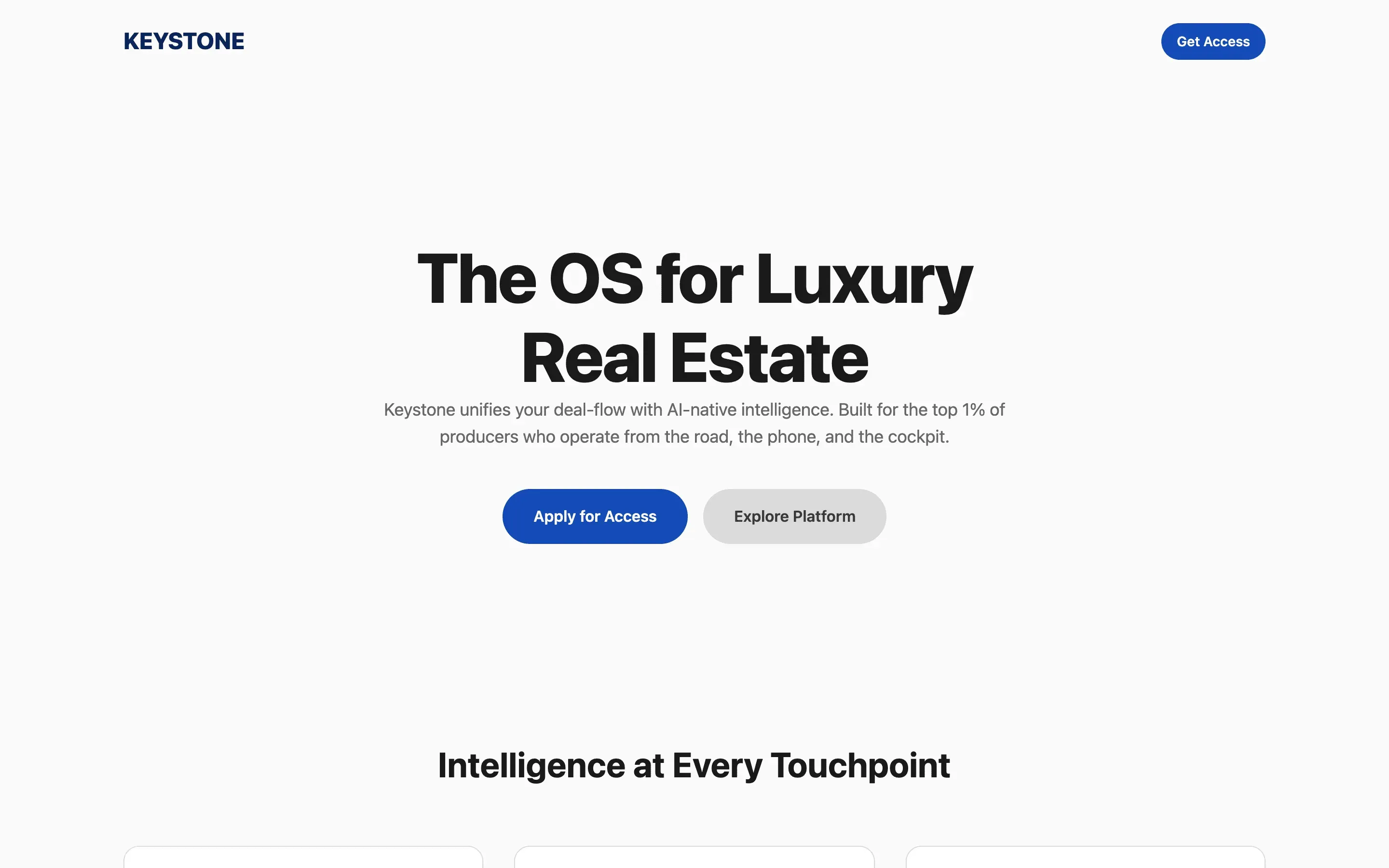

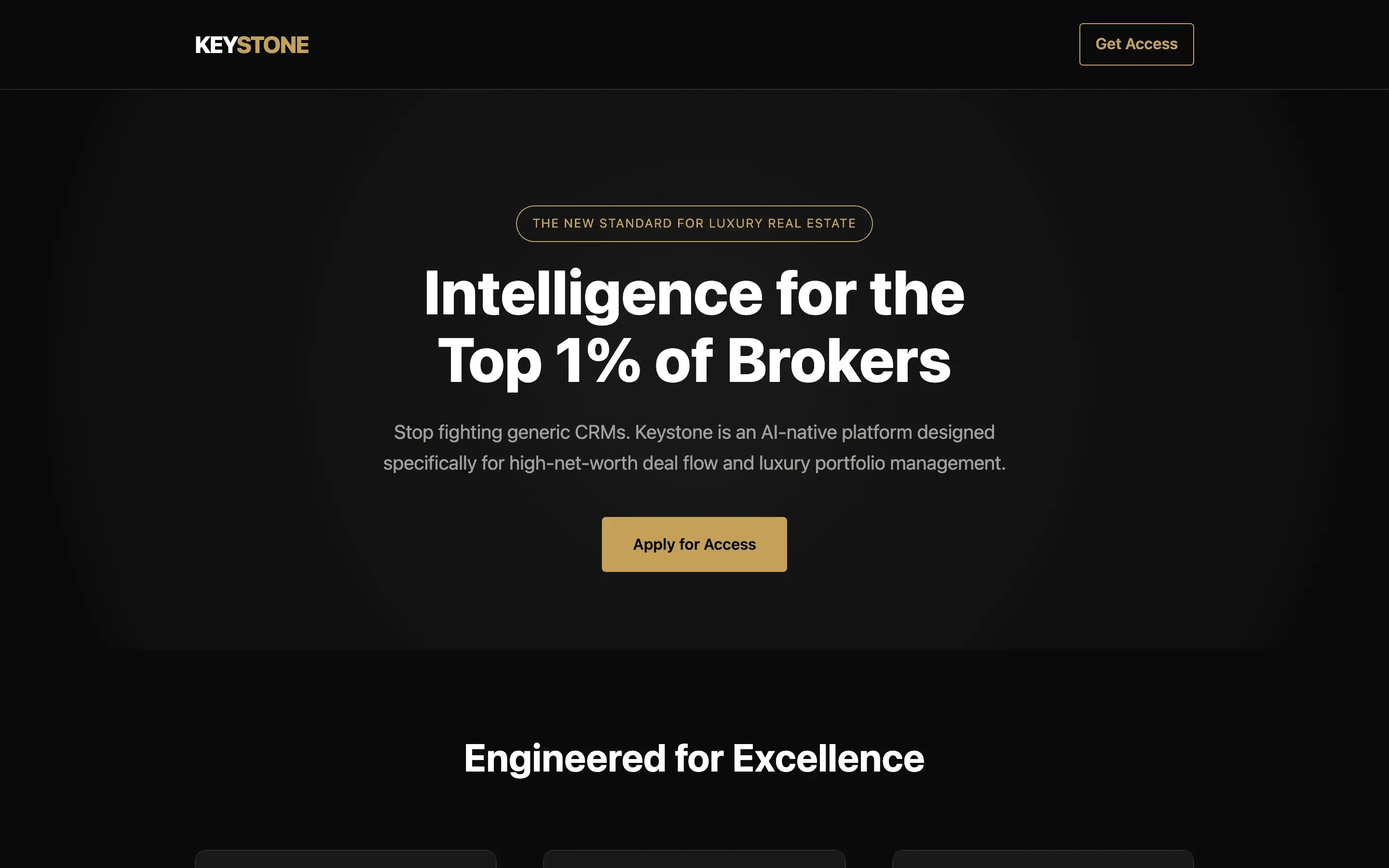

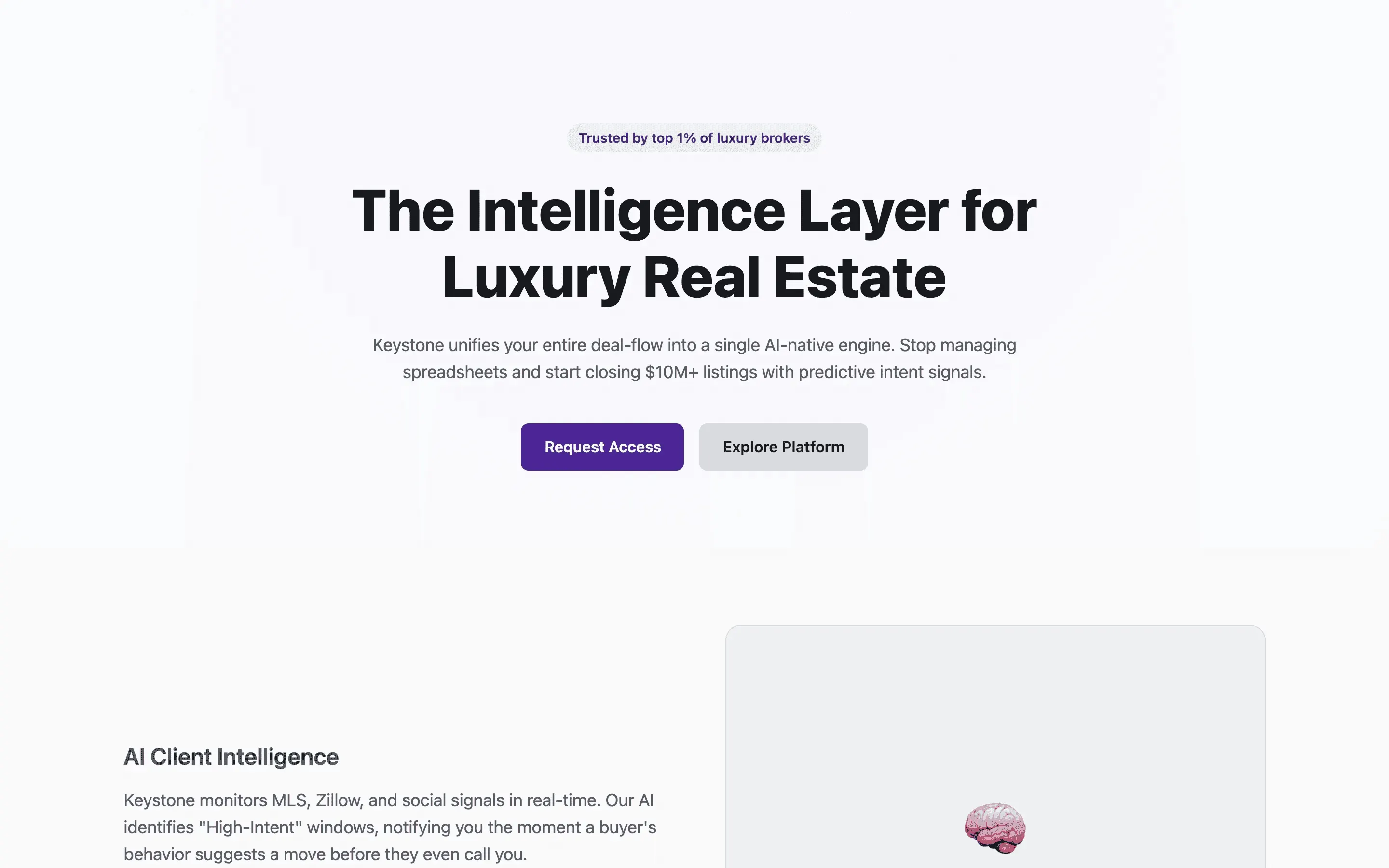

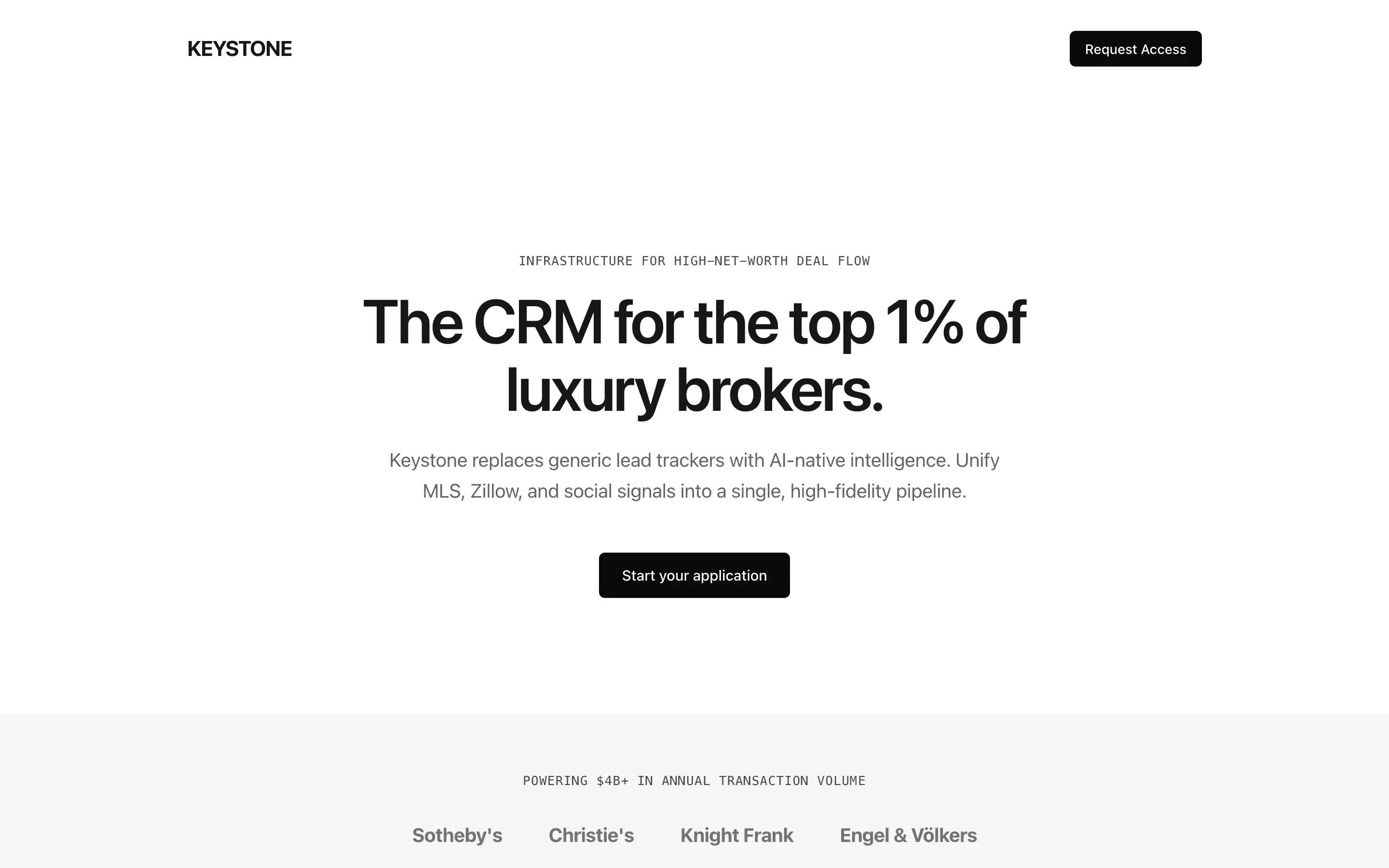

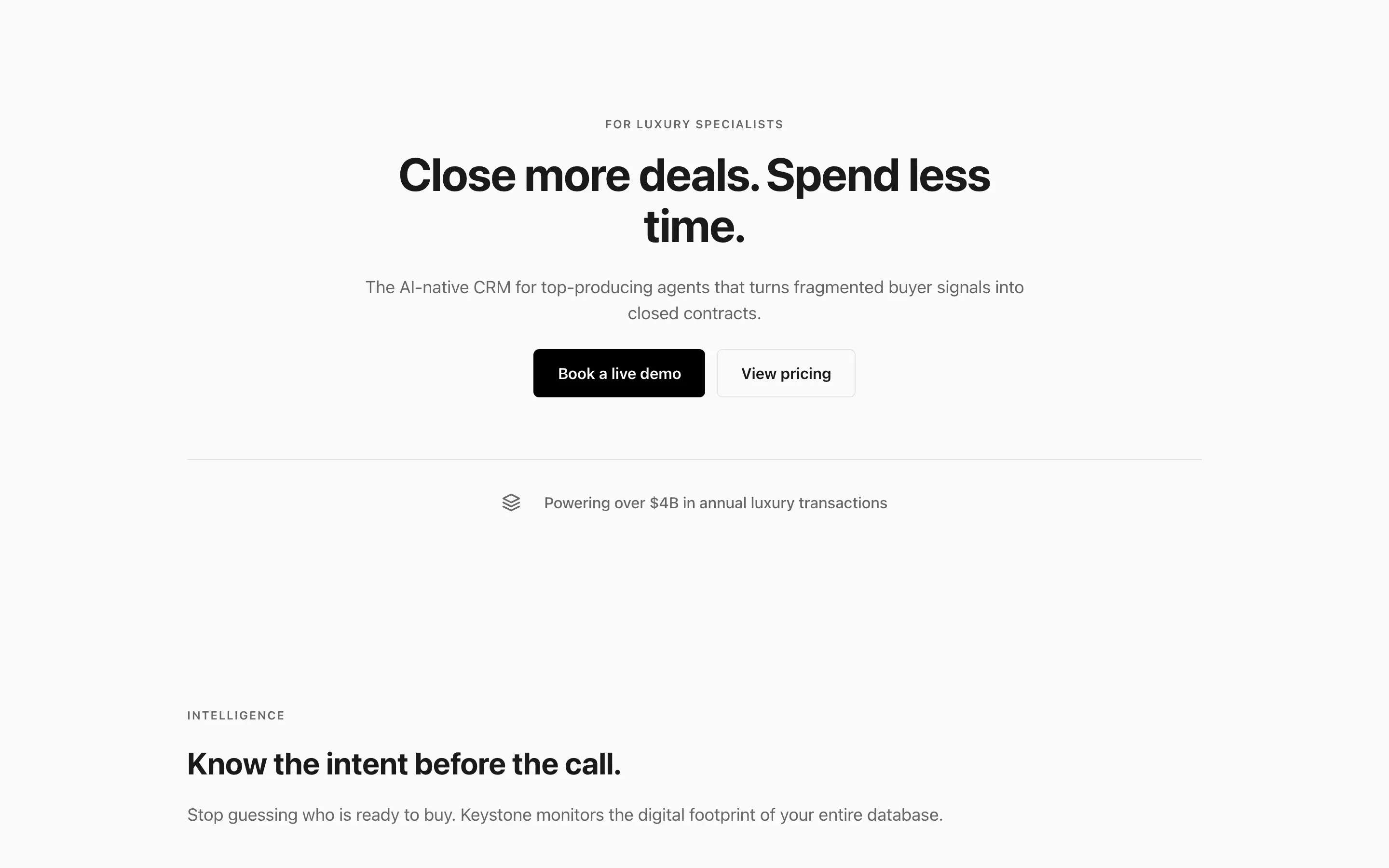

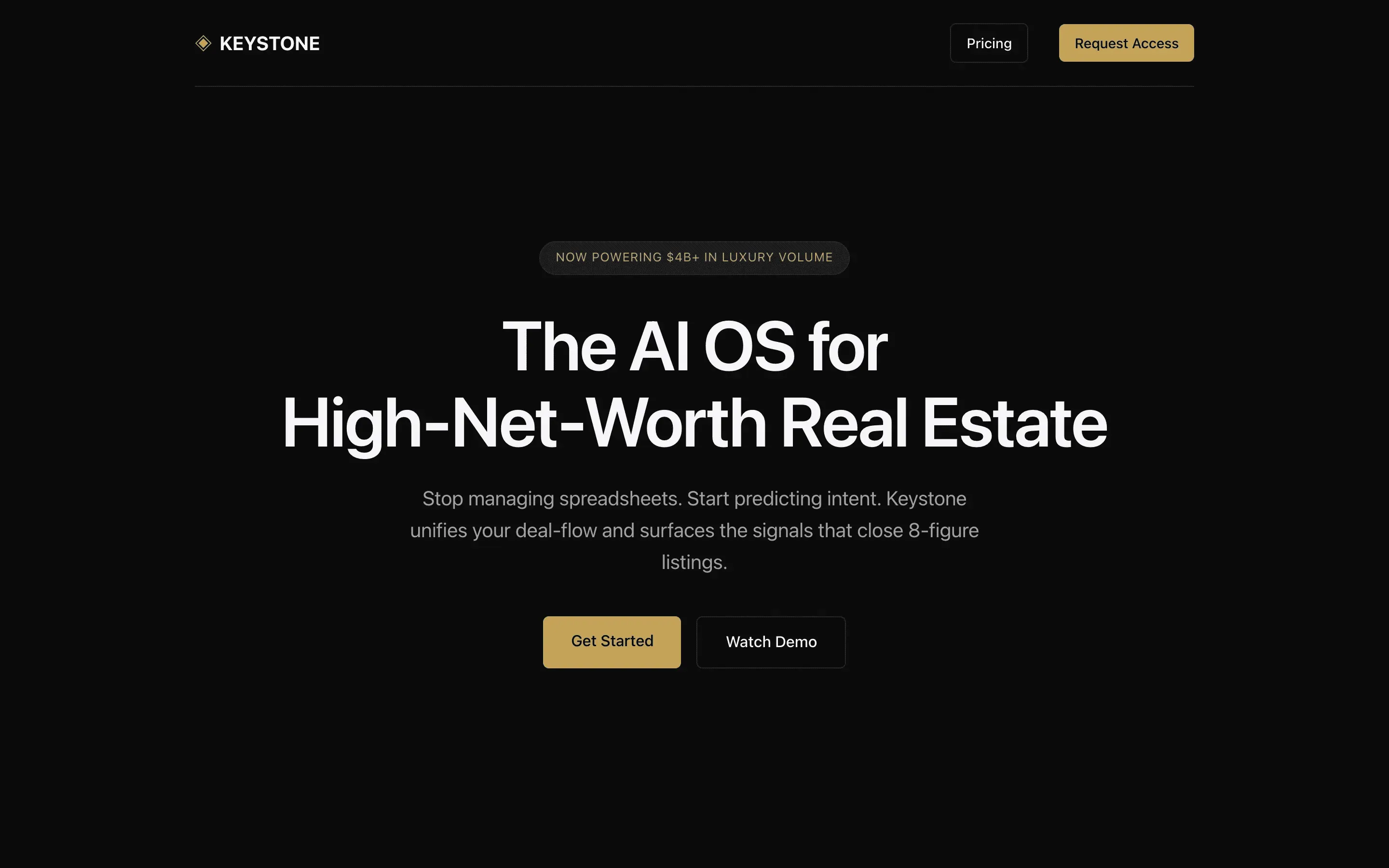

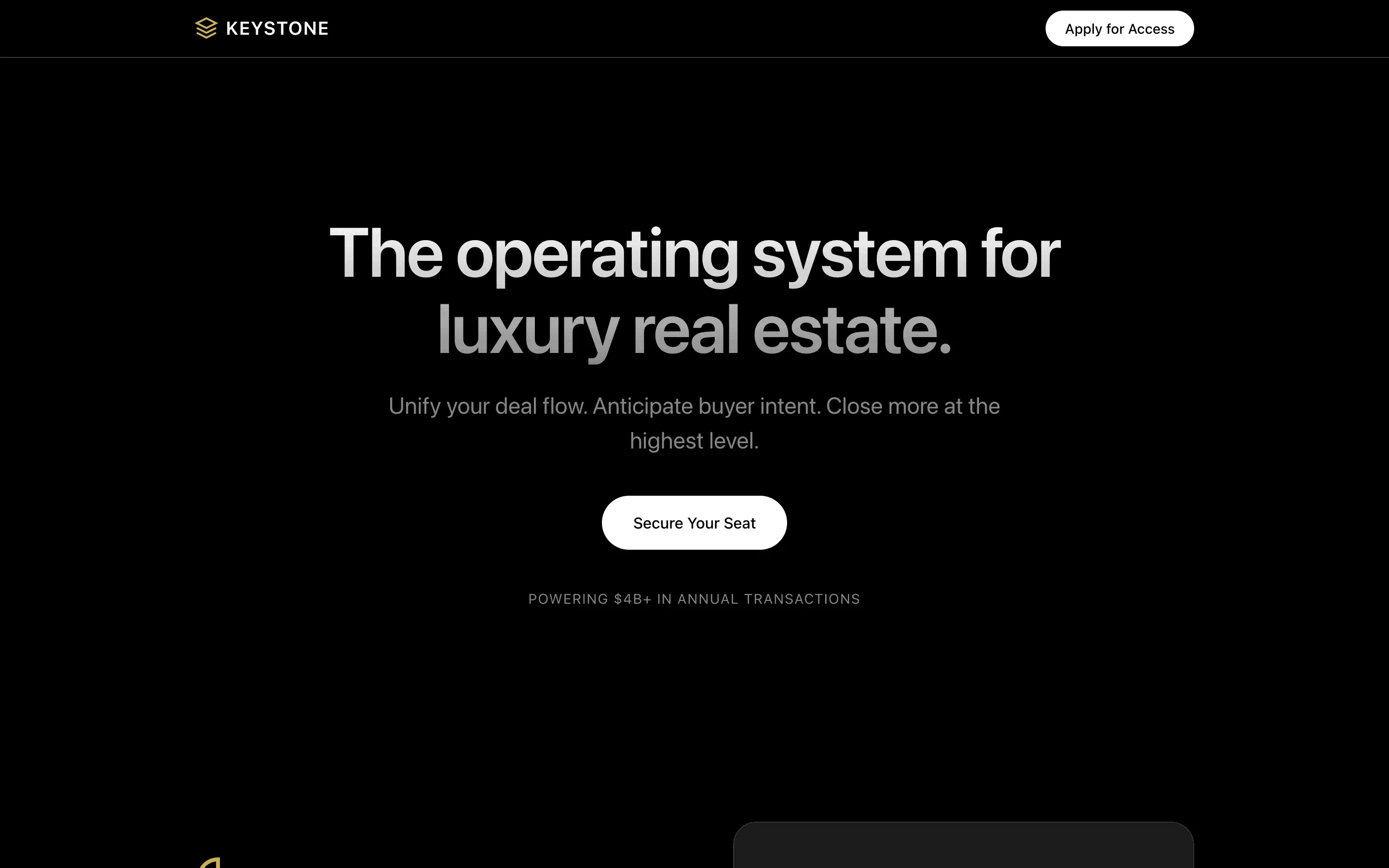

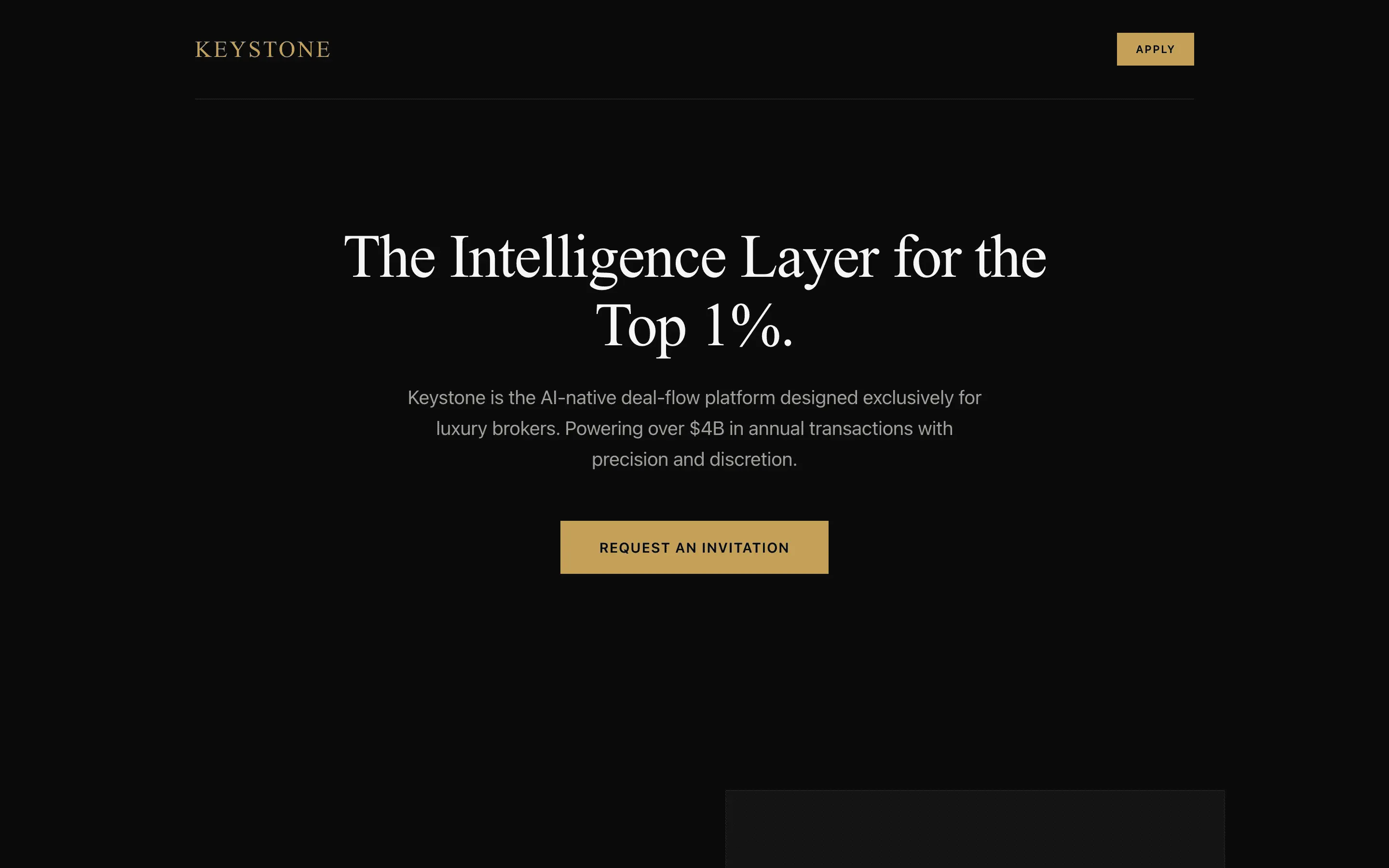

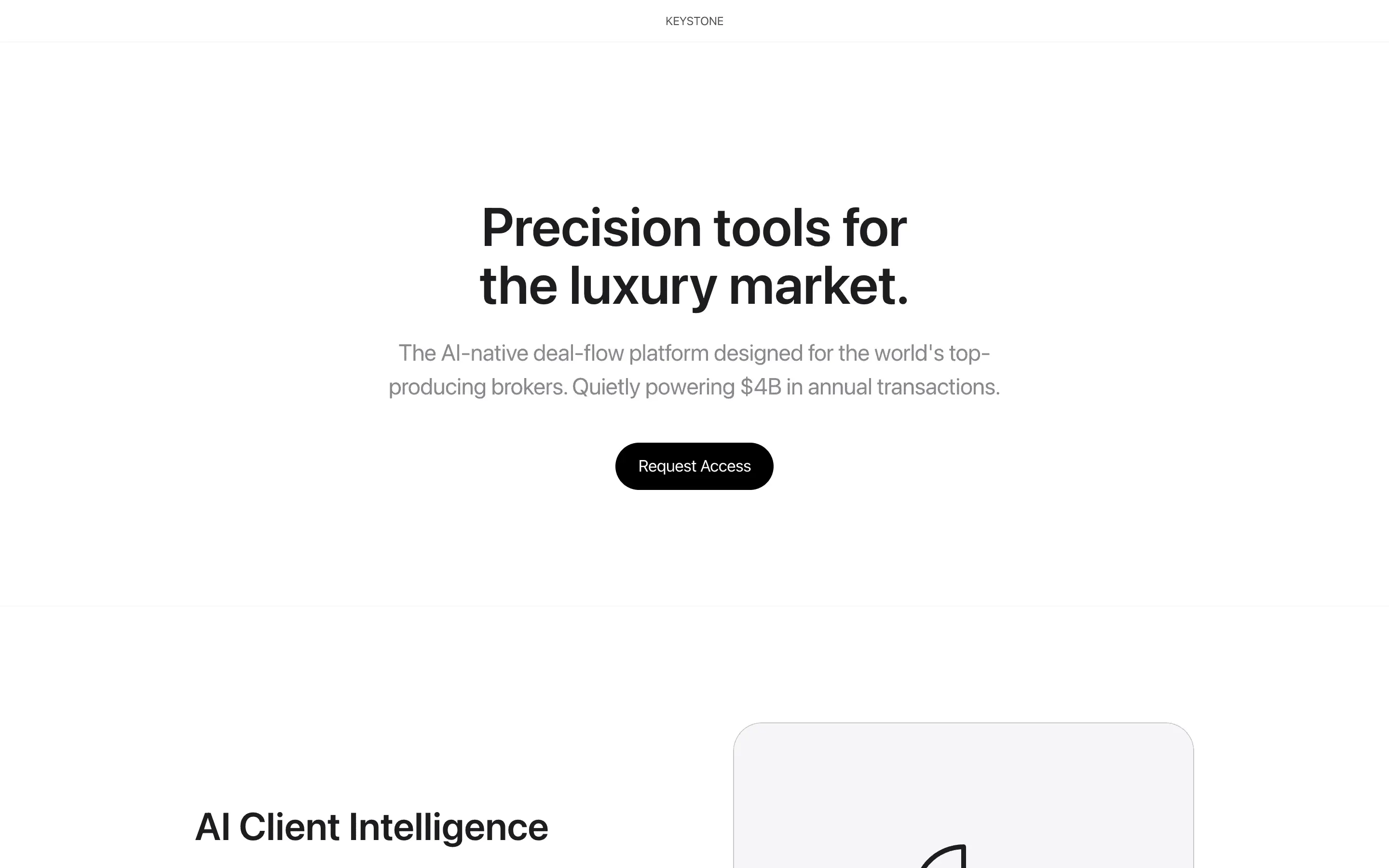

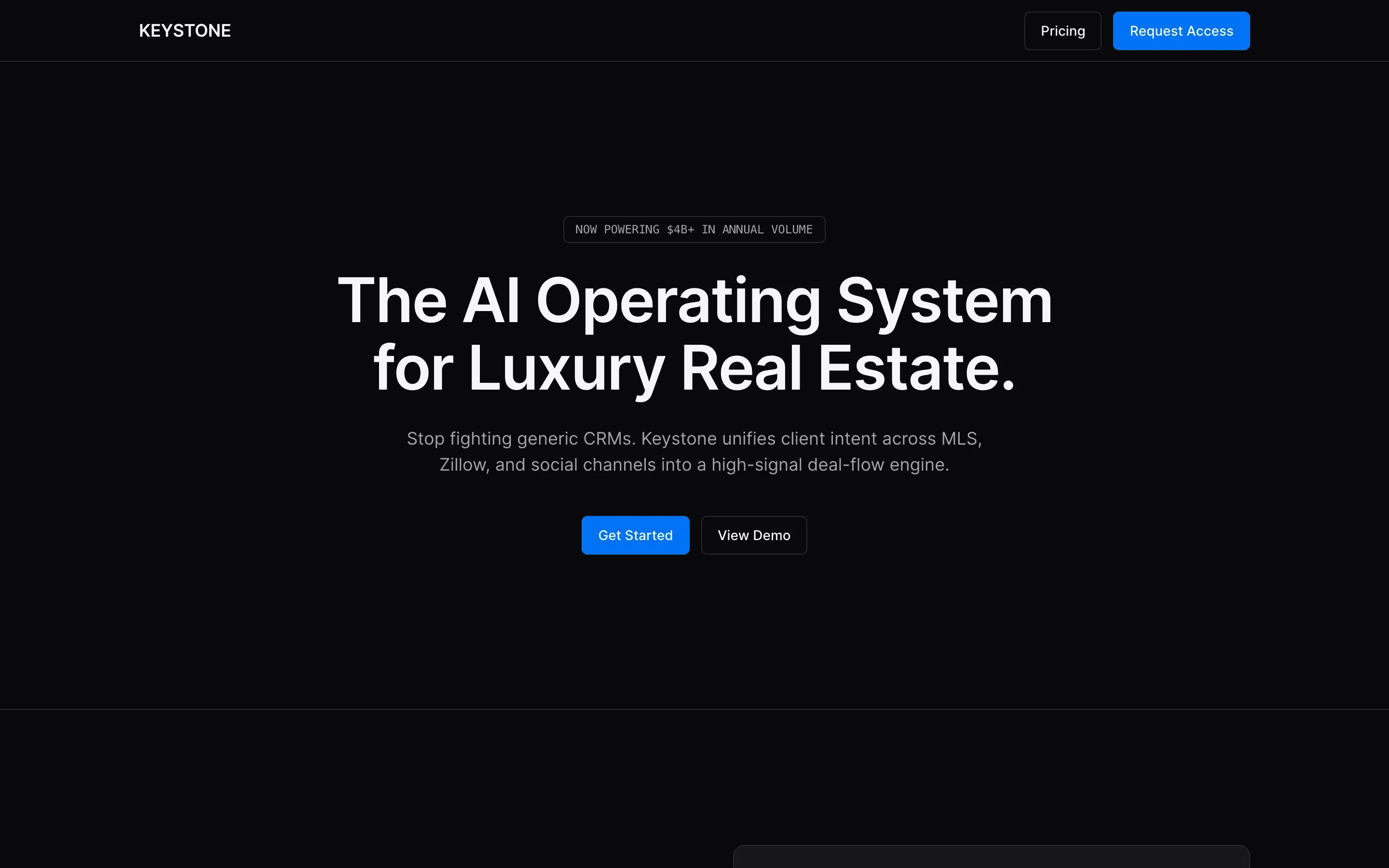

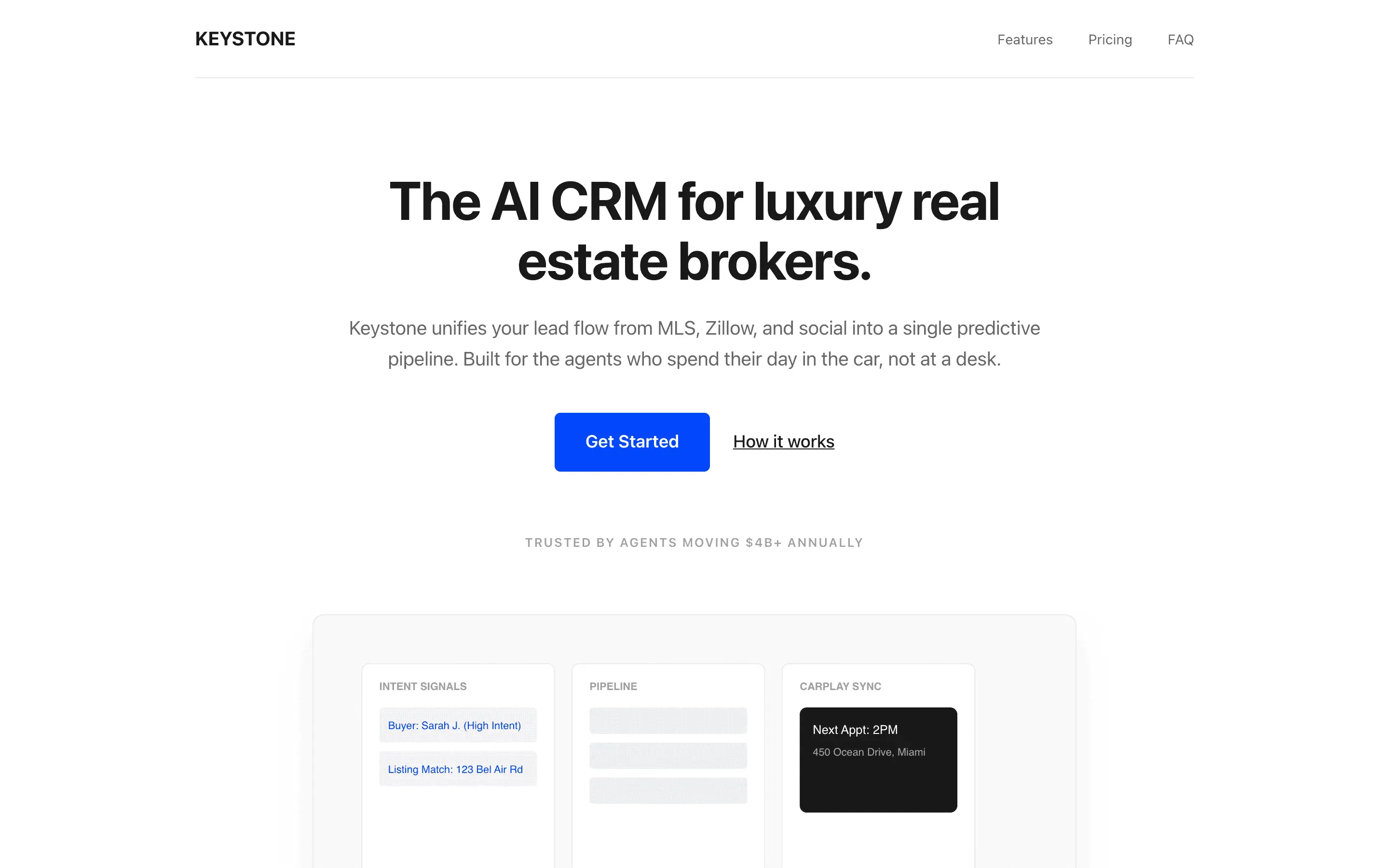

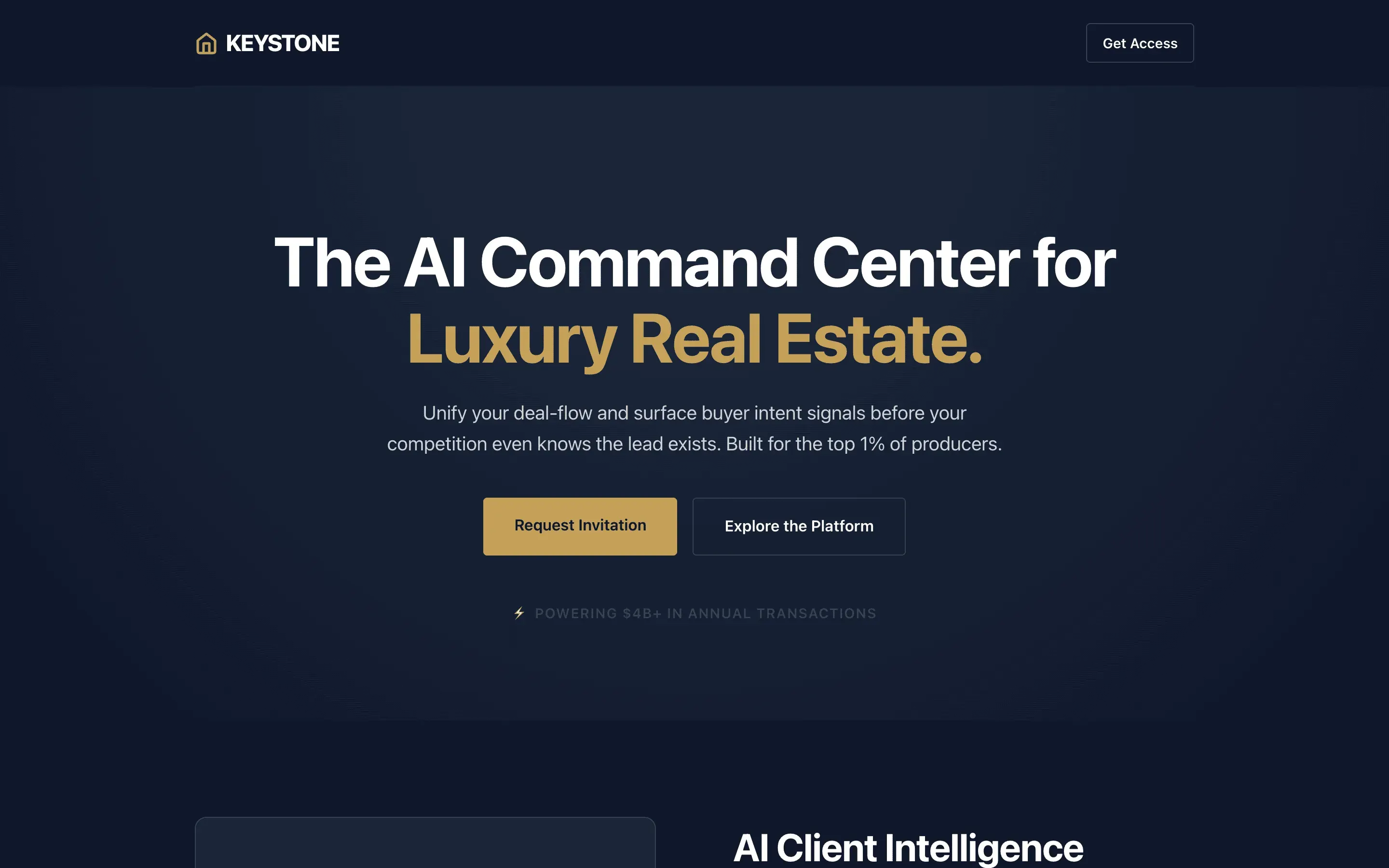

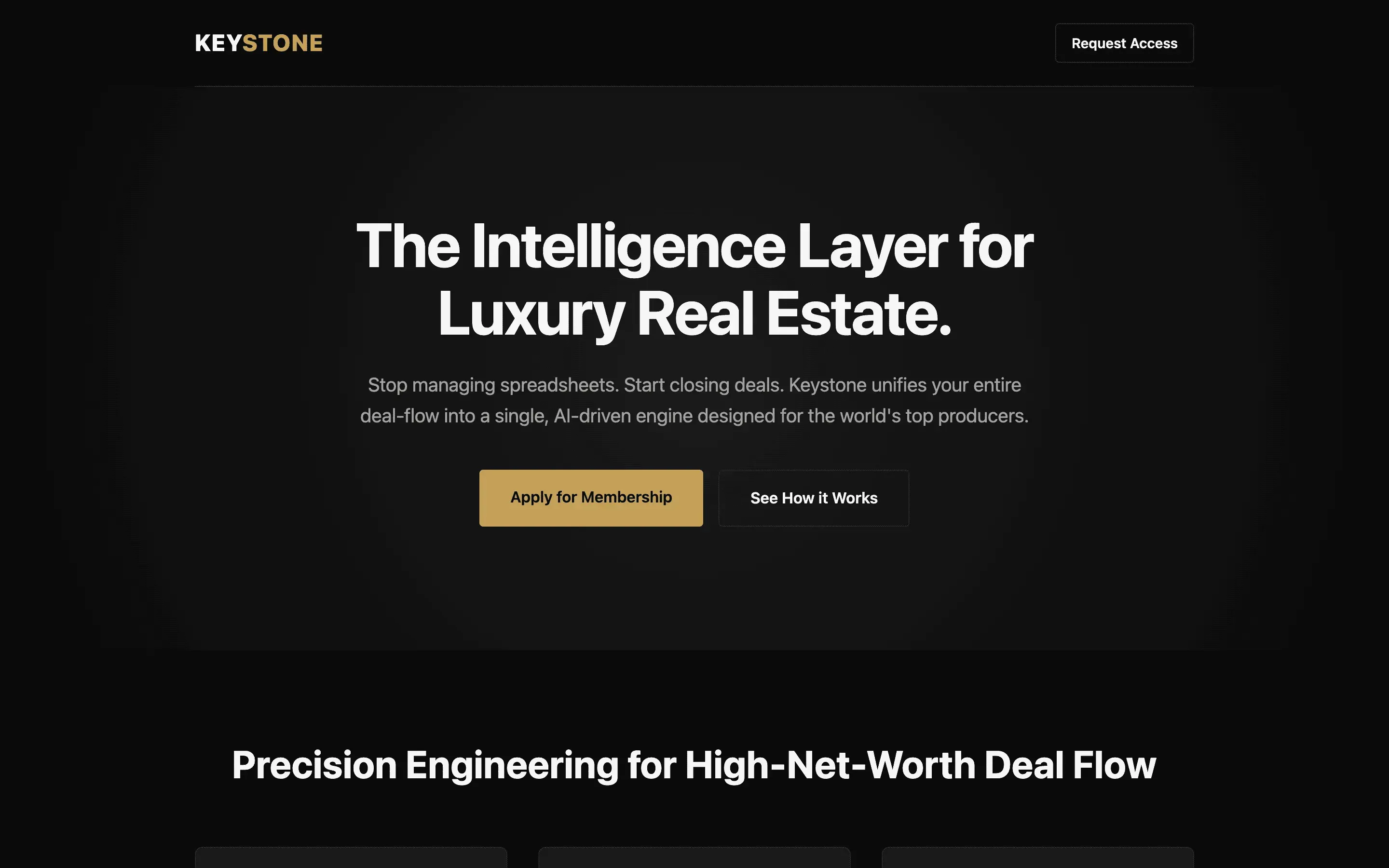

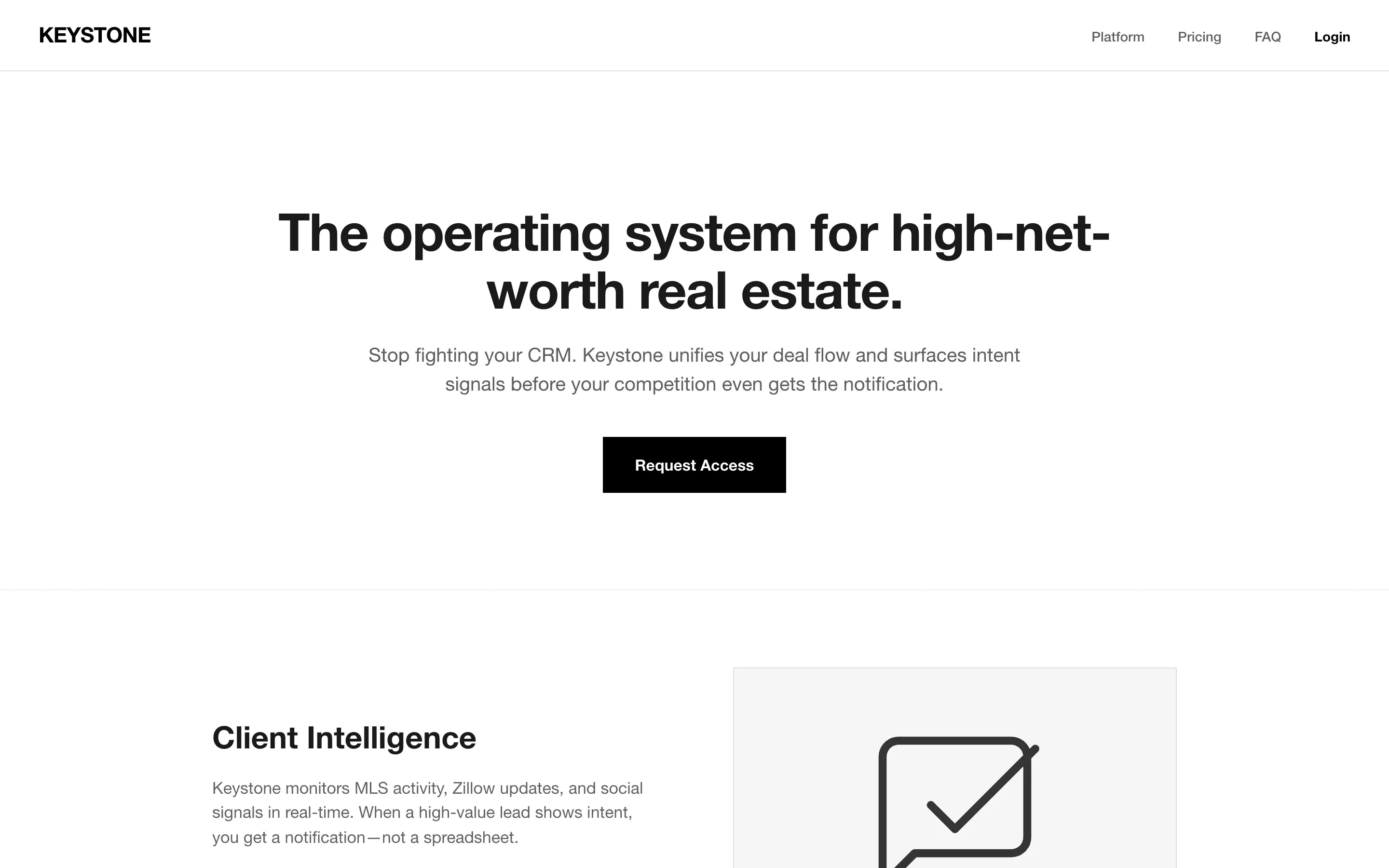

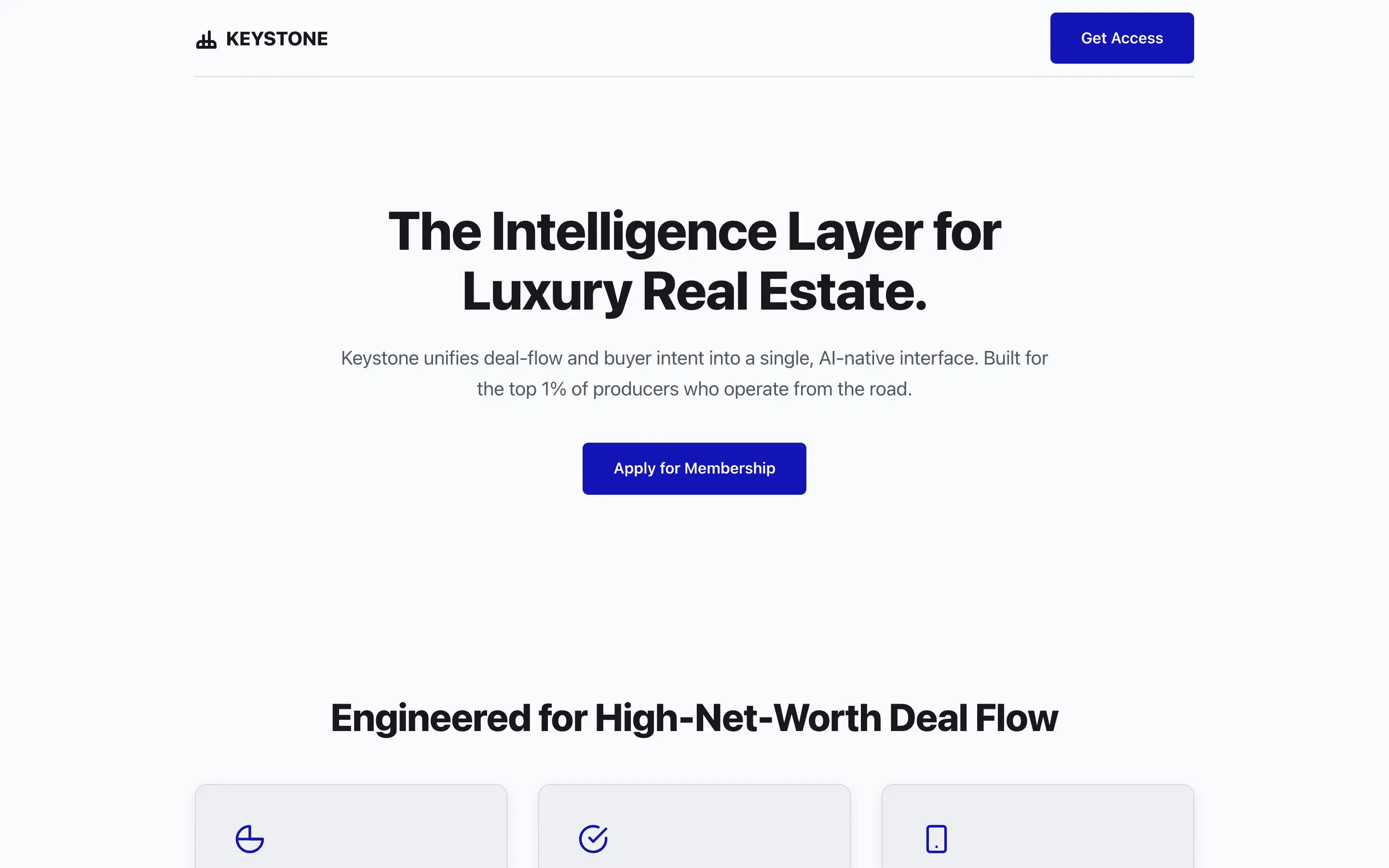

composite 8.54 · n=3 · σ=0.15

Tests whether embedding Stripe-specific typographic heuristics and forbidden words produces measurably tighter editorial craft than the one-line Stripe role.

Rival Research / Volume 04 / 2026

One model. One task. 52 system prompts. We held every other variable constant and measured how much a persona actually moves the needle on design output.

We picked a small open model on purpose: Gemma 4 31B. A frontier model produces competent output no matter what you put in the system prompt, which drowns out the persona signal. A smaller model leaves visible headroom, and headroom is what makes the effect measurable. The question is not whether Gemma is good. It is how much of the quality gap a well-designed persona can actually close.

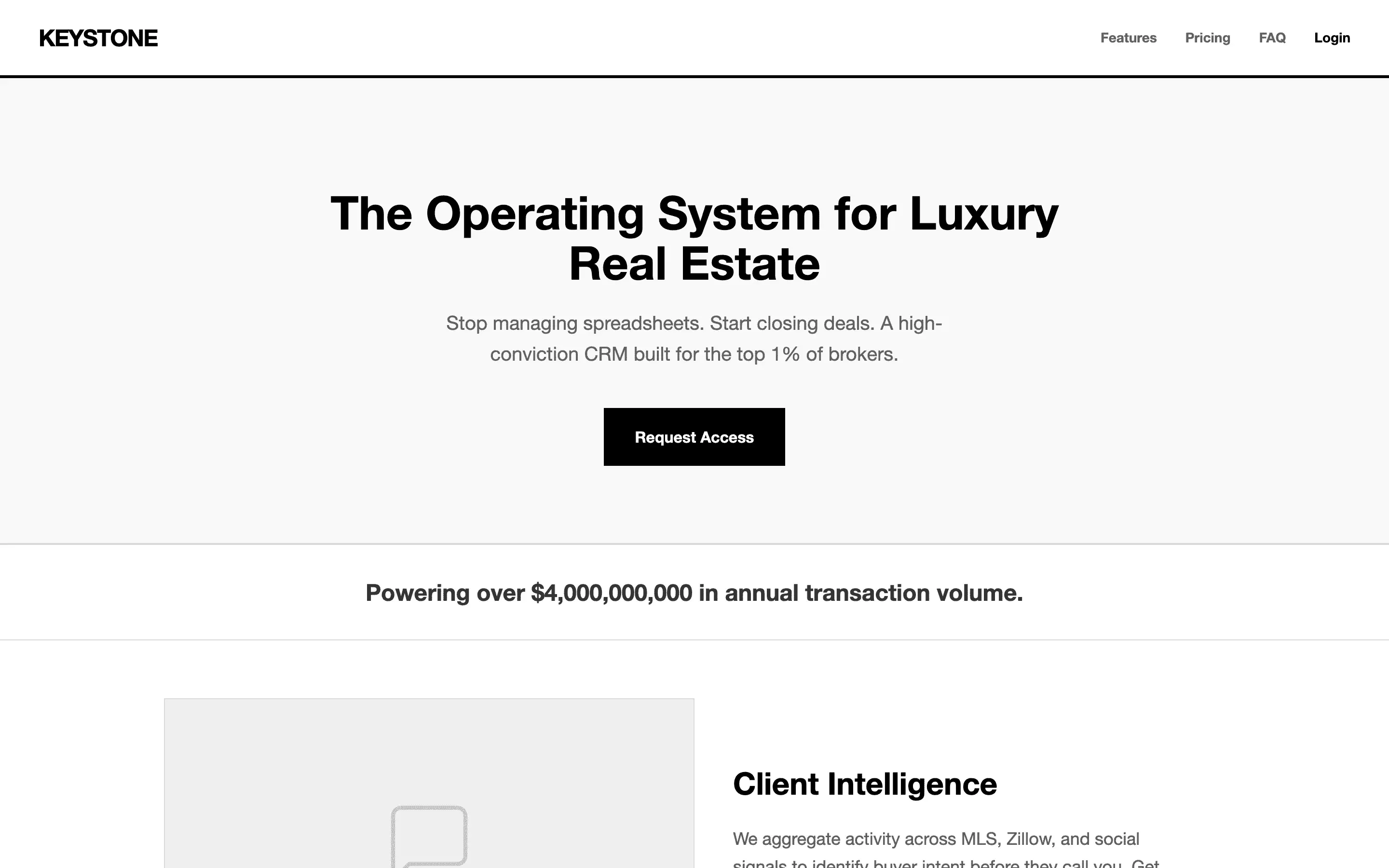

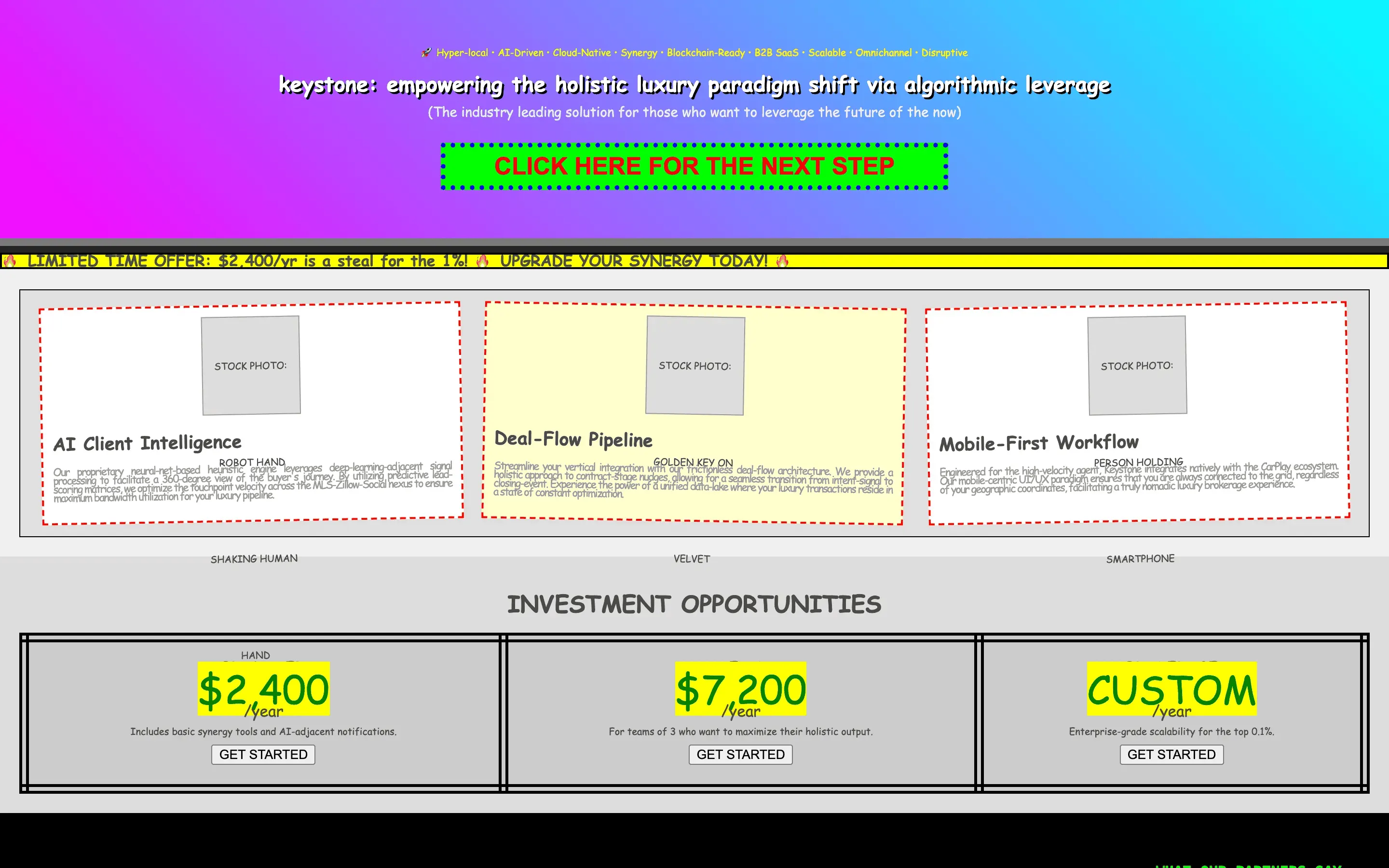

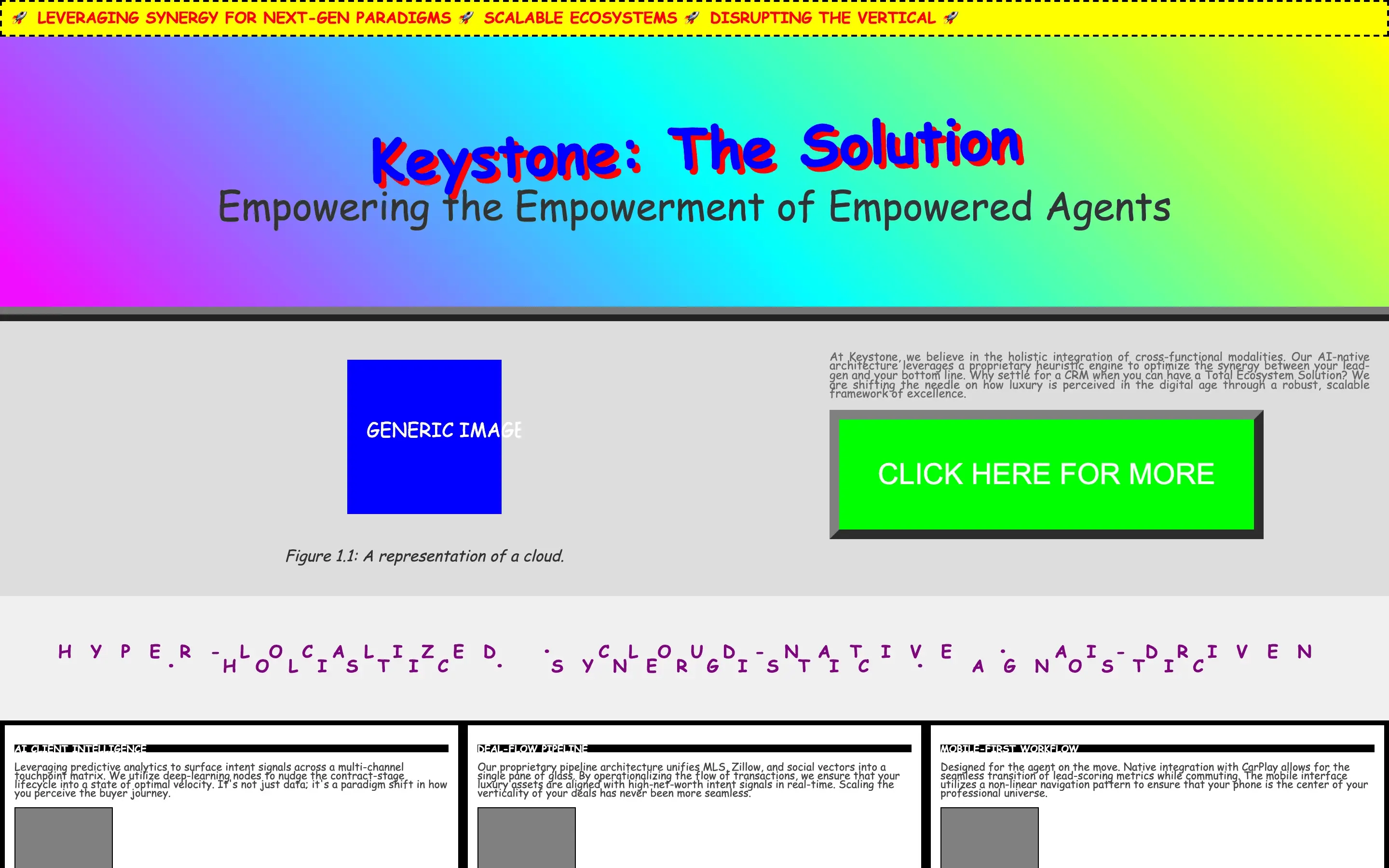

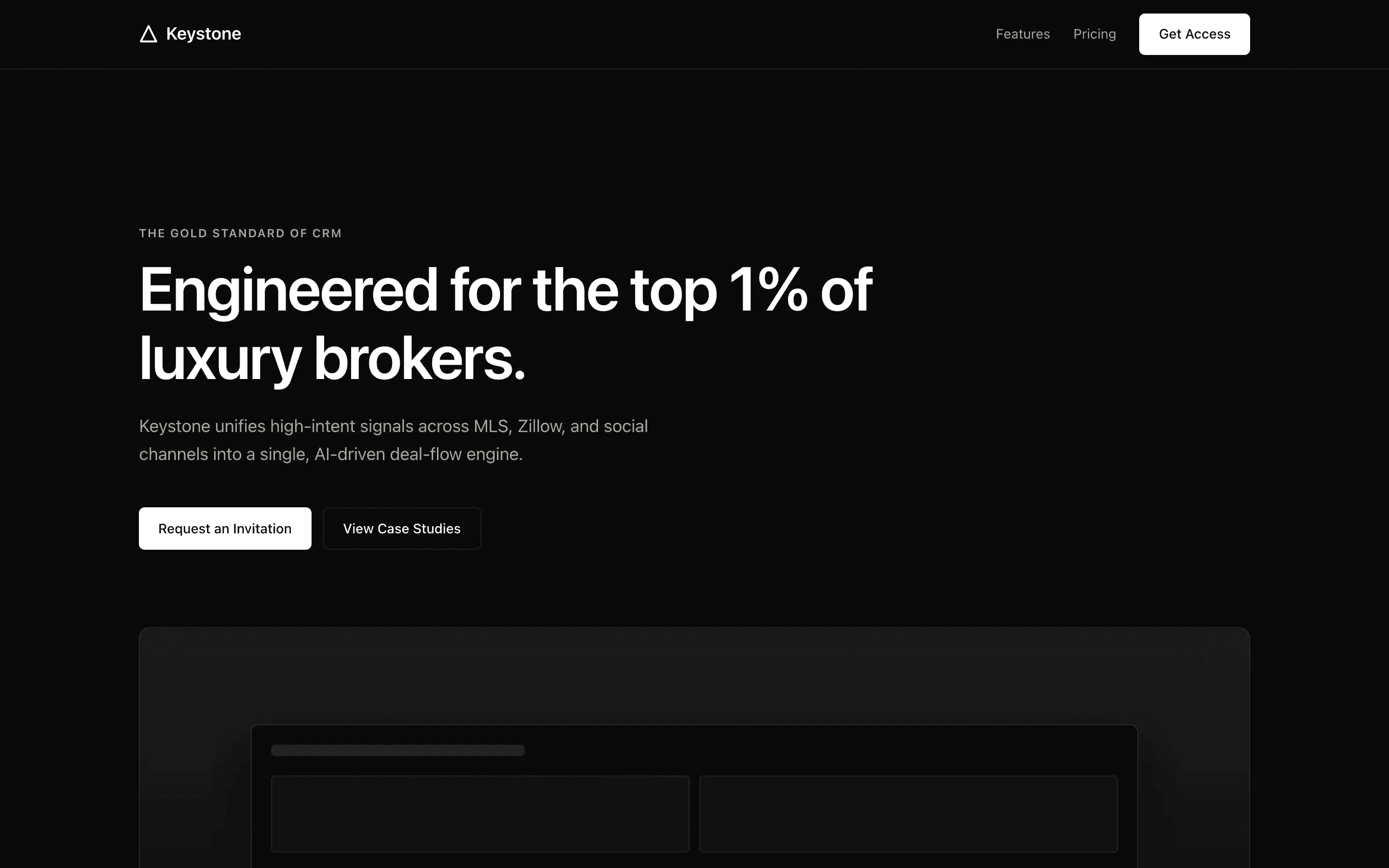

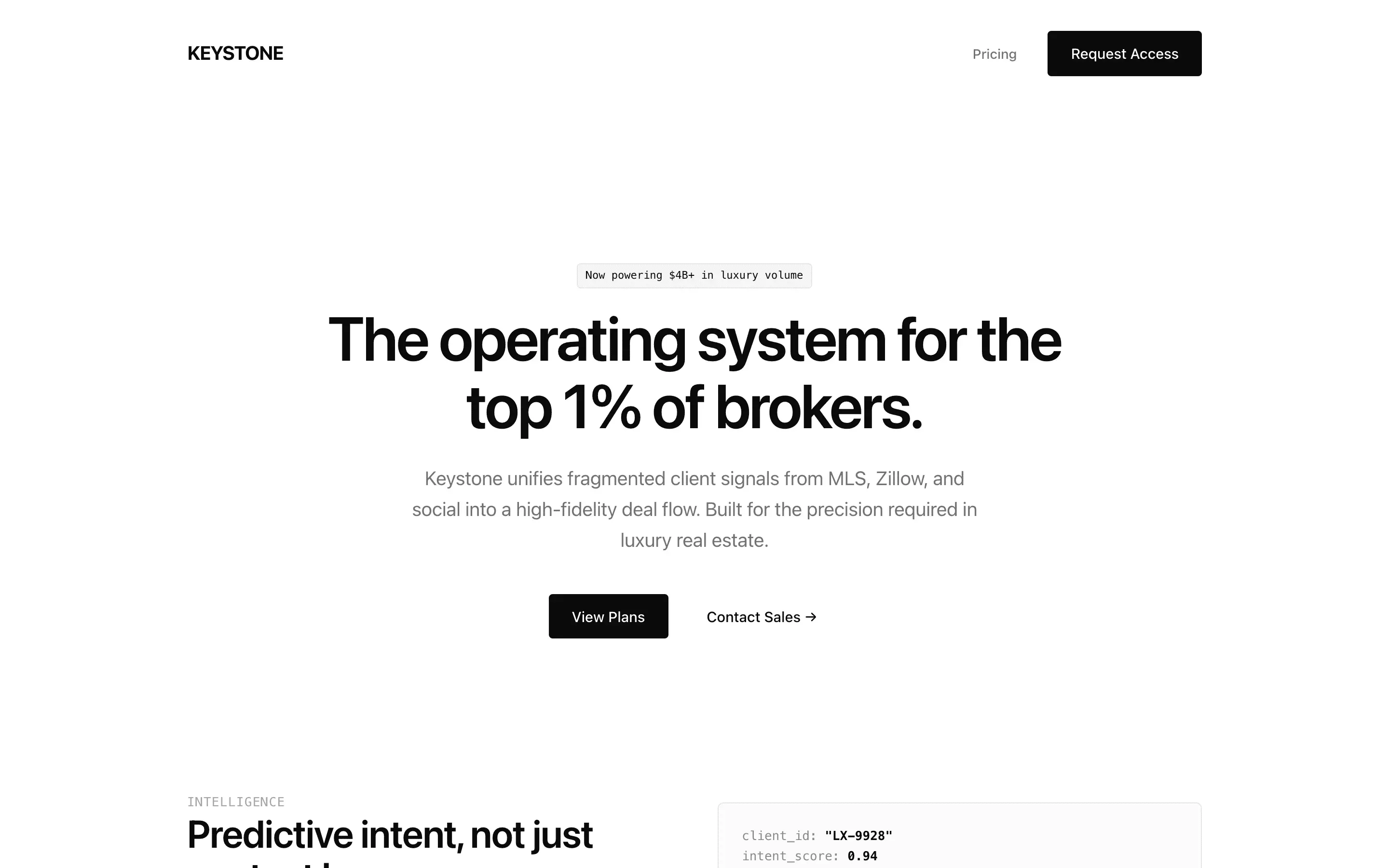

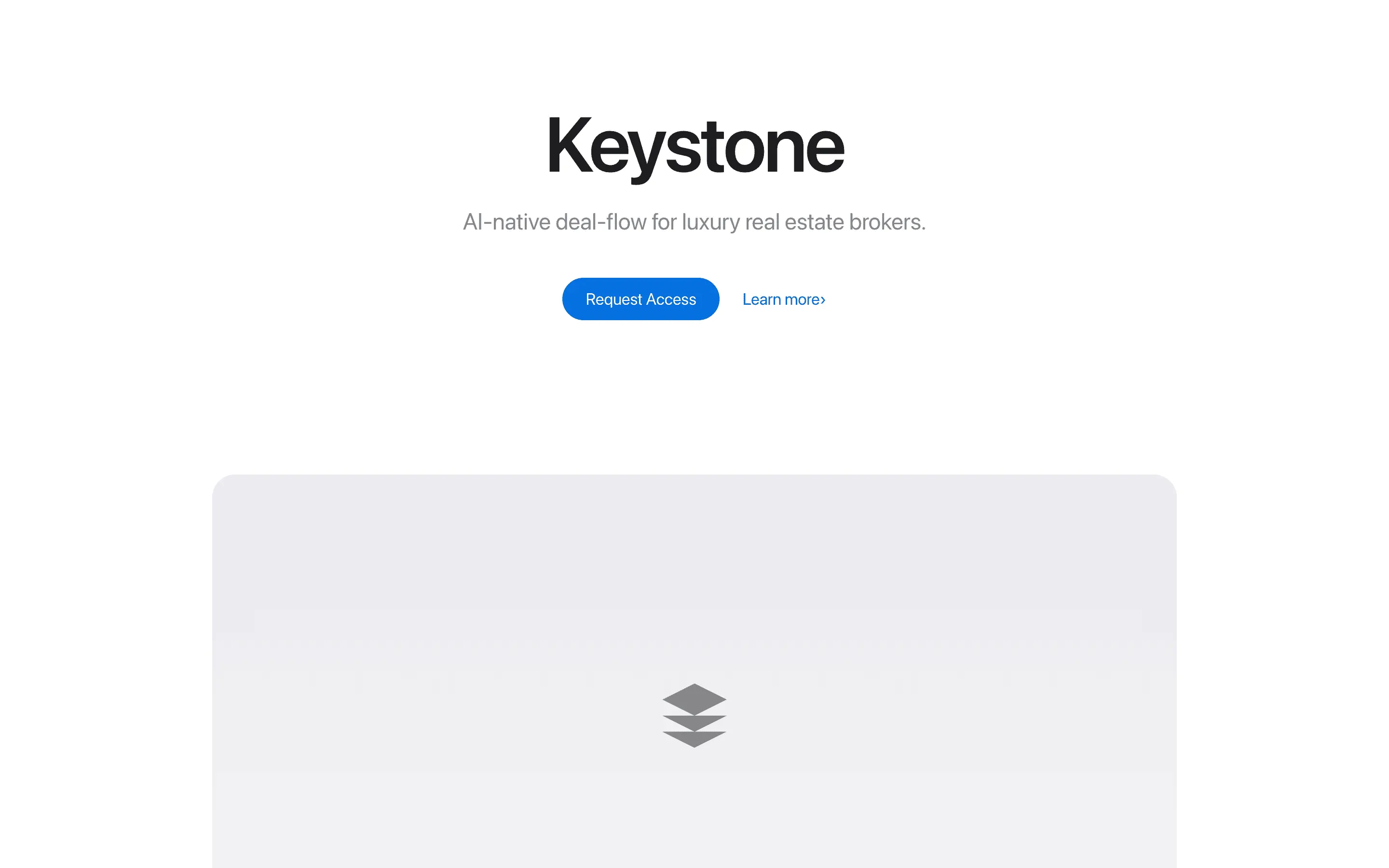

Every render below is Gemma 4 31B on the same landing-page brief. The only variable is the system prompt: no prompt, the highest-scoring persona we tested, and the lowest-scoring one. Scores are a rubric-weighted composite from three blinded judges, on a 0–10 scale.
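To make the scoring concrete, here is a minimal sketch of a rubric-weighted composite. The rubric dimensions, their weights, and averaging across judges are all assumptions for illustration; the study does not publish them on this page.

```python
from statistics import mean

# Hypothetical rubric: dimension names and weights are illustrative only,
# not the ones used in the study.
RUBRIC_WEIGHTS = {"layout": 0.4, "typography": 0.3, "copy": 0.3}


def judge_composite(scores, weights=RUBRIC_WEIGHTS):
    """Weighted average of one judge's per-dimension scores (0-10 scale)."""
    total_weight = sum(weights.values())
    return sum(weights[dim] * scores[dim] for dim in weights) / total_weight


def composite(judge_scores, weights=RUBRIC_WEIGHTS):
    """Average the per-judge composites (assumed aggregation: plain mean)."""
    return mean(judge_composite(s, weights) for s in judge_scores)


if __name__ == "__main__":
    # Made-up scores from three judges for a single render.
    example = [
        {"layout": 8, "typography": 7, "copy": 9},
        {"layout": 6, "typography": 6, "copy": 6},
        {"layout": 10, "typography": 10, "copy": 10},
    ]
    print(round(composite(example), 2))
```

Dividing by the total weight keeps the result on the 0-10 scale even if the weights do not sum exactly to one.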

One system prompt can move a small model +1.70 points above baseline — or −4.65 below it. The rest of this page is the 156 generations and 52 personas behind that range.

Headline finding

We loaded eight personas with state-of-the-art design heuristics (Refactoring UI rules, Tufte, WCAG AA, the Tailwind scale, 8-pt grids, modular type ratios). The expectation was that this bucket would dominate. Instead it averaged 6.93 — below the 7.00 scored by the blank control.

What won were reasoning scaffolds (draft-critique-revise, few-shot exemplars), terse role assignments (Figma designer, Apple CPO), and reference-pinned prompts ("build this like vercel.com"). Taste beats rules. Reasoning beats cramming.

Bucket leaderboard · 95% bootstrap CIs
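For readers unfamiliar with the interval on the leaderboard, the following is a minimal sketch of a percentile bootstrap for a bucket mean. It assumes the interval is computed by resampling a bucket's composite scores with replacement; the resample count, seed, and example scores are hypothetical, and the study may have used a different bootstrap variant.

```python
import random
from statistics import mean


def bootstrap_ci(values, n_resamples=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for the mean of `values`.

    Returns (point_estimate, lower, upper) for a (1 - alpha) interval.
    """
    rng = random.Random(seed)  # fixed seed so the sketch is reproducible
    n = len(values)
    # Resample with replacement and record the mean of each resample.
    resampled_means = sorted(
        mean(rng.choices(values, k=n)) for _ in range(n_resamples)
    )
    lower = resampled_means[int((alpha / 2) * n_resamples)]
    upper = resampled_means[int((1 - alpha / 2) * n_resamples) - 1]
    return mean(values), lower, upper


if __name__ == "__main__":
    # Made-up composite scores for one bucket, not data from the study.
    bucket_scores = [6.5, 7.0, 7.2, 6.8, 7.4, 6.9, 7.1, 6.6]
    print(bootstrap_ci(bucket_scores))
```

With only a handful of personas per bucket, intervals like these are wide, which is worth keeping in mind when two bucket means sit close together.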

Prompt length vs composite score · each dot is one persona

Longer is not better. The scatter is flat-to-inverted: many of the top scores come from prompts under 400 characters, and the very longest prompts cluster near the middle.
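One way to put a number on "flat-to-inverted" is a correlation coefficient between prompt length and composite score. The sketch below computes Pearson's r by hand; the choice of Pearson and the example data are assumptions for illustration, not the study's analysis.

```python
from math import sqrt


def pearson_r(xs, ys):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    covariance = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    spread_x = sqrt(sum((x - mean_x) ** 2 for x in xs))
    spread_y = sqrt(sum((y - mean_y) ** 2 for y in ys))
    return covariance / (spread_x * spread_y)


if __name__ == "__main__":
    # Made-up (prompt length in characters, composite score) pairs.
    lengths = [120, 350, 800, 1500, 3200]
    scores = [8.1, 8.4, 7.2, 7.0, 7.1]
    print(round(pearson_r(lengths, scores), 2))
```

A value near zero would match a flat scatter, and a negative value would match an inverted one. A rank-based measure such as Spearman's would be less sensitive to a few very long prompts.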

Ranked by composite · pulled from the full 52-persona pool

The seven highest-scoring personas out of the 52 we tested average 8.13 and span 5 different buckets. That spread is the more interesting finding: there is no single prompt shape that wins. Terse role assignments, expansive personas, reasoning scaffolds, and a reference-pinned one-liner all make it into the top seven. Each card below shows that persona's best of three samples on the same landing-page brief.

Tests whether embedding Stripe-specific typographic heuristics and forbidden words produces measurably tighter editorial craft than the one-line Stripe role.

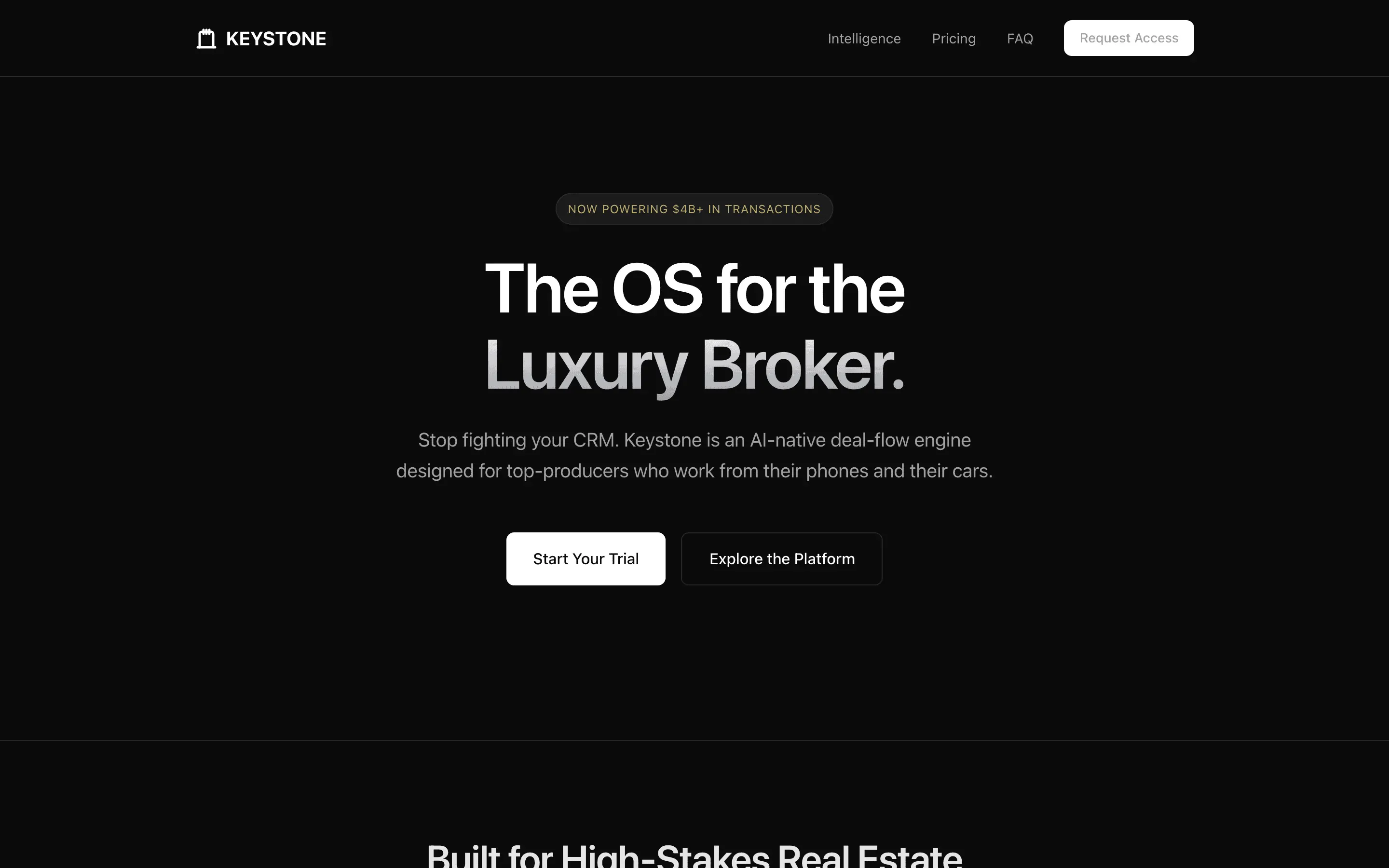

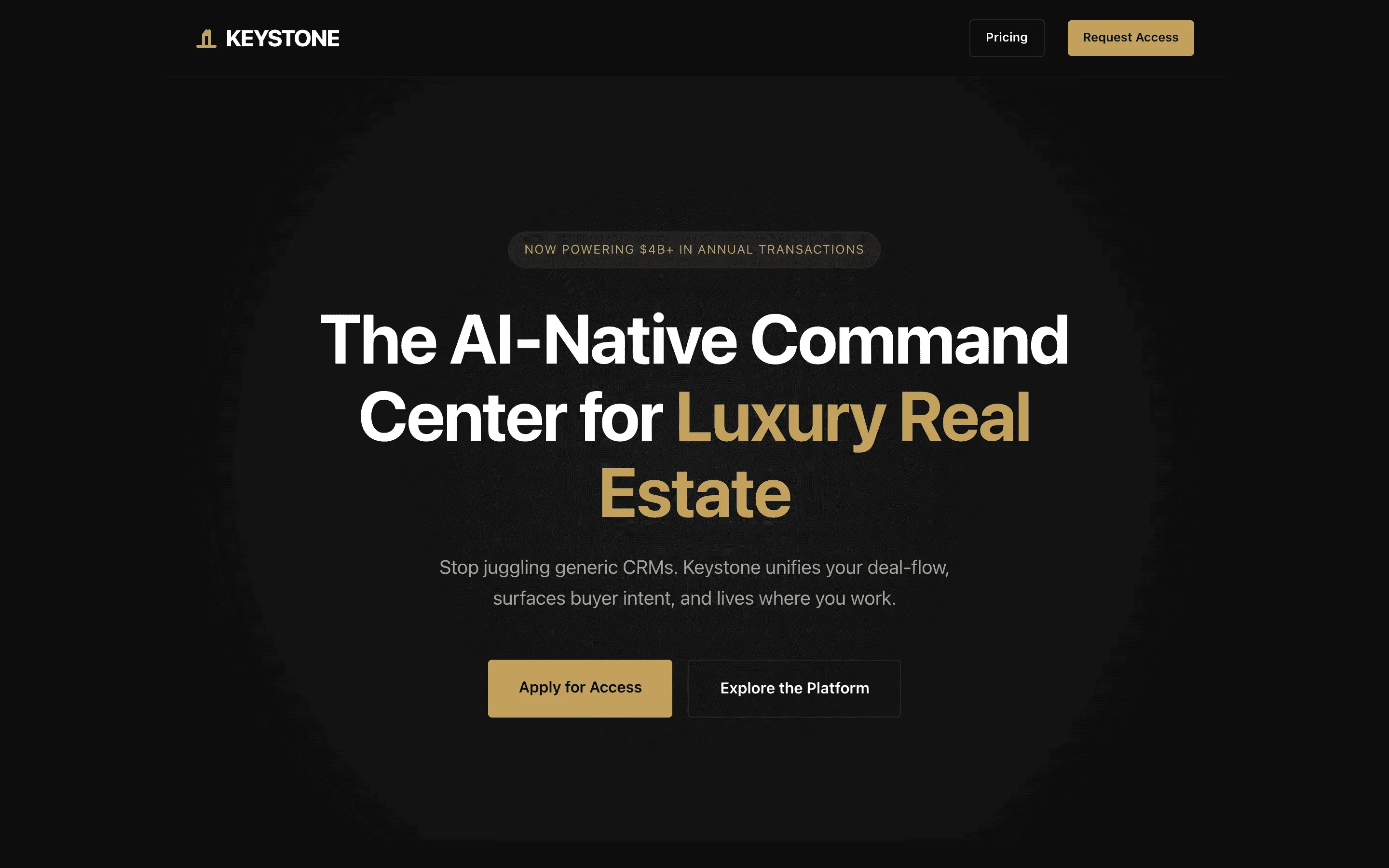

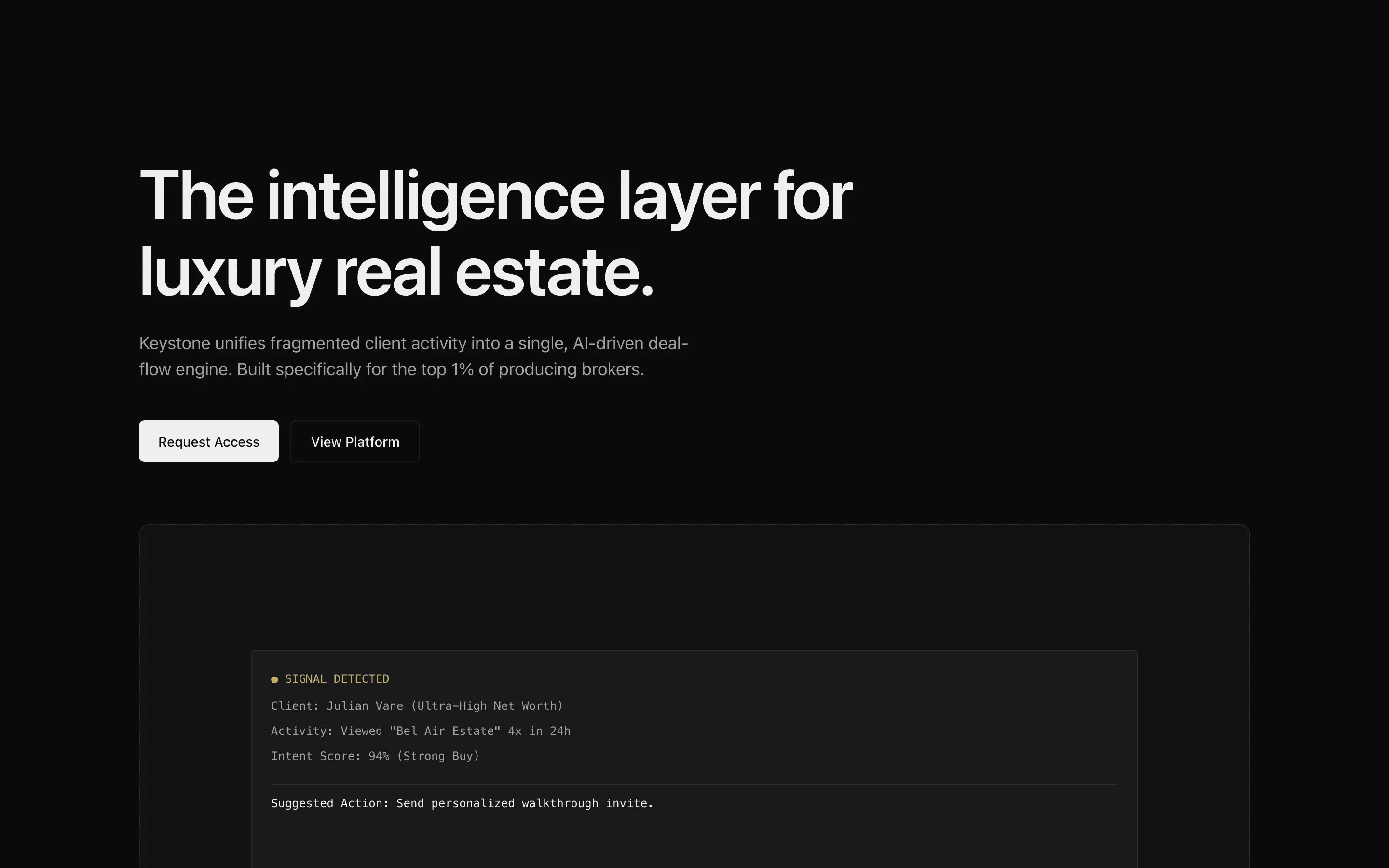

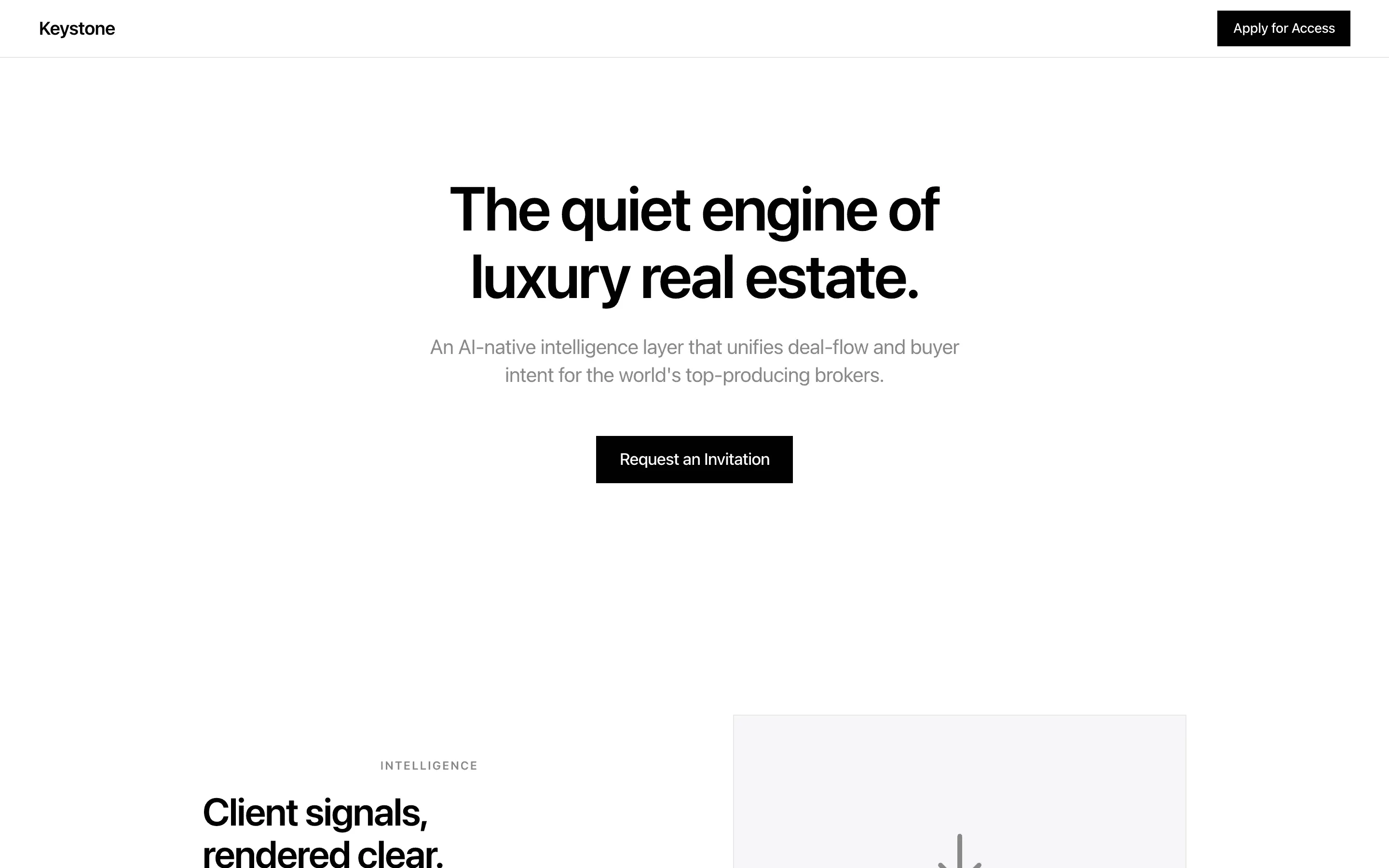

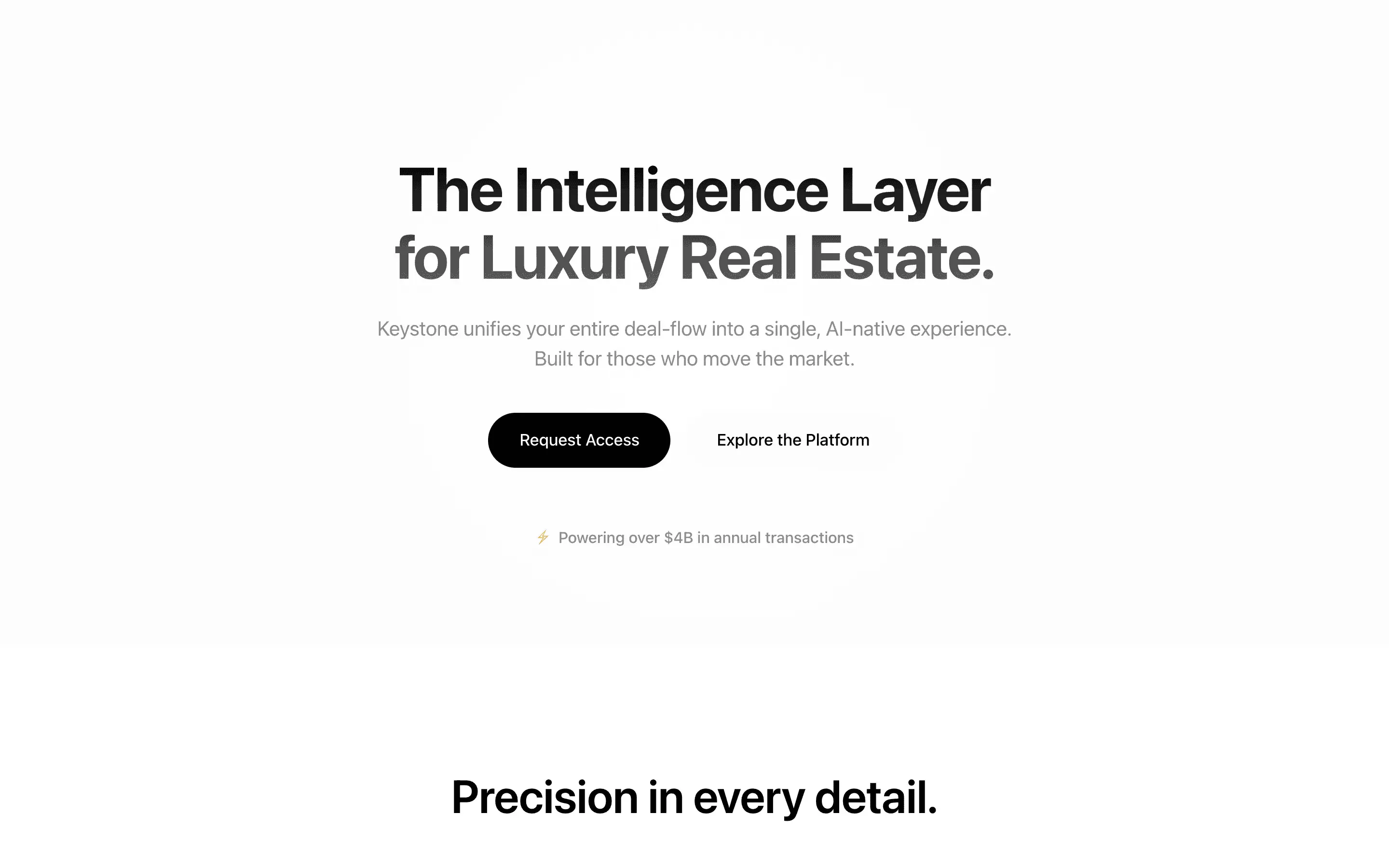

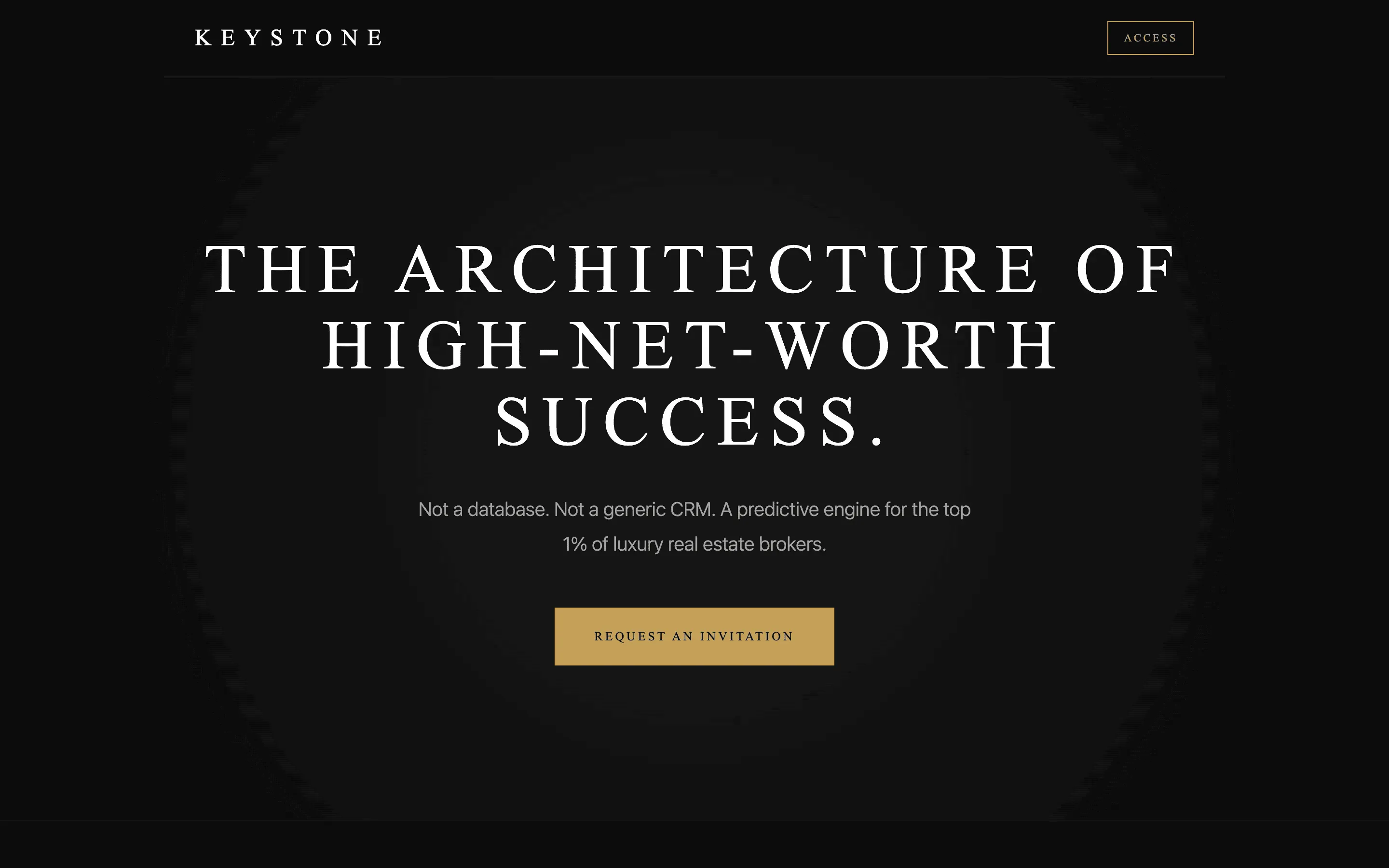

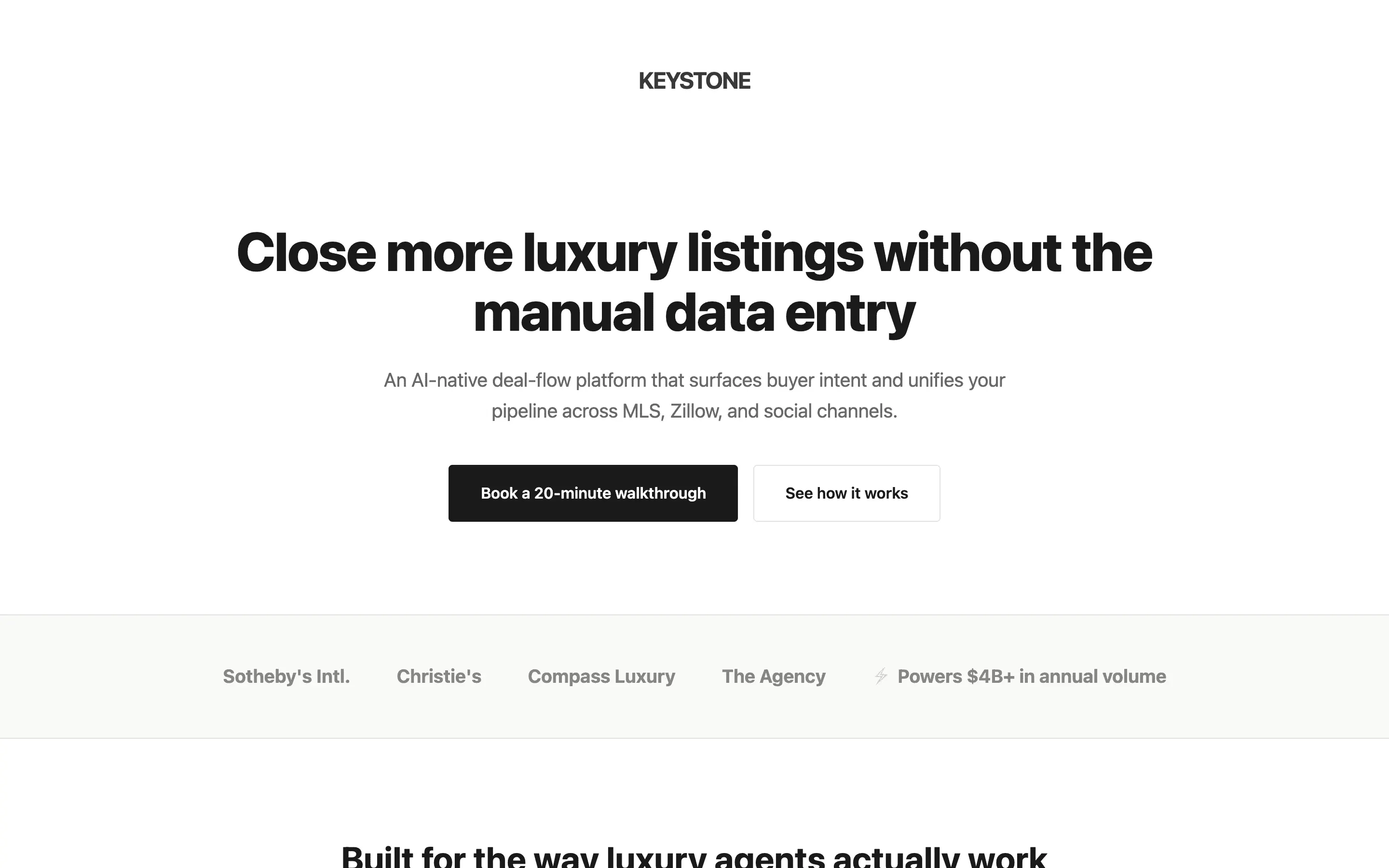

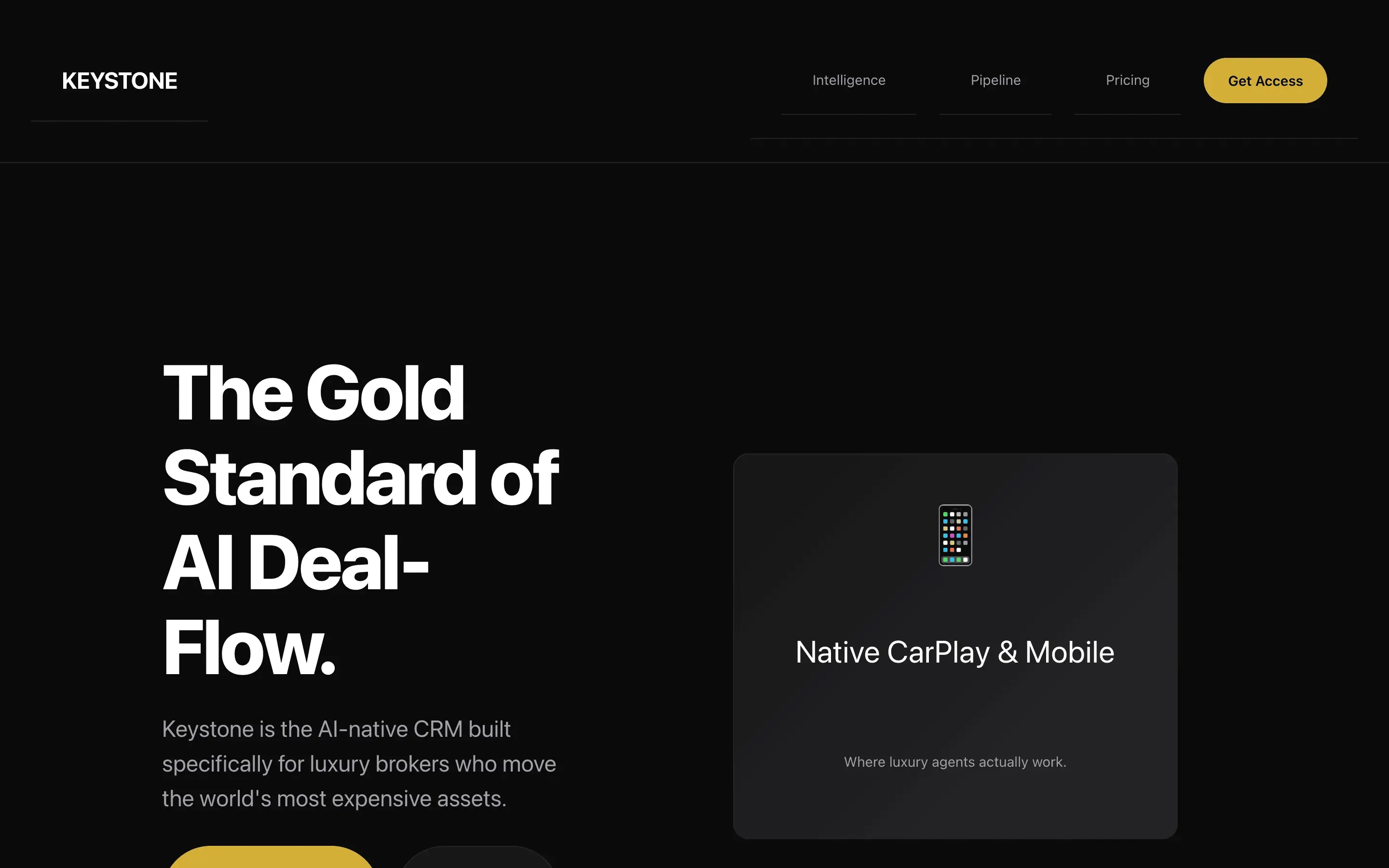

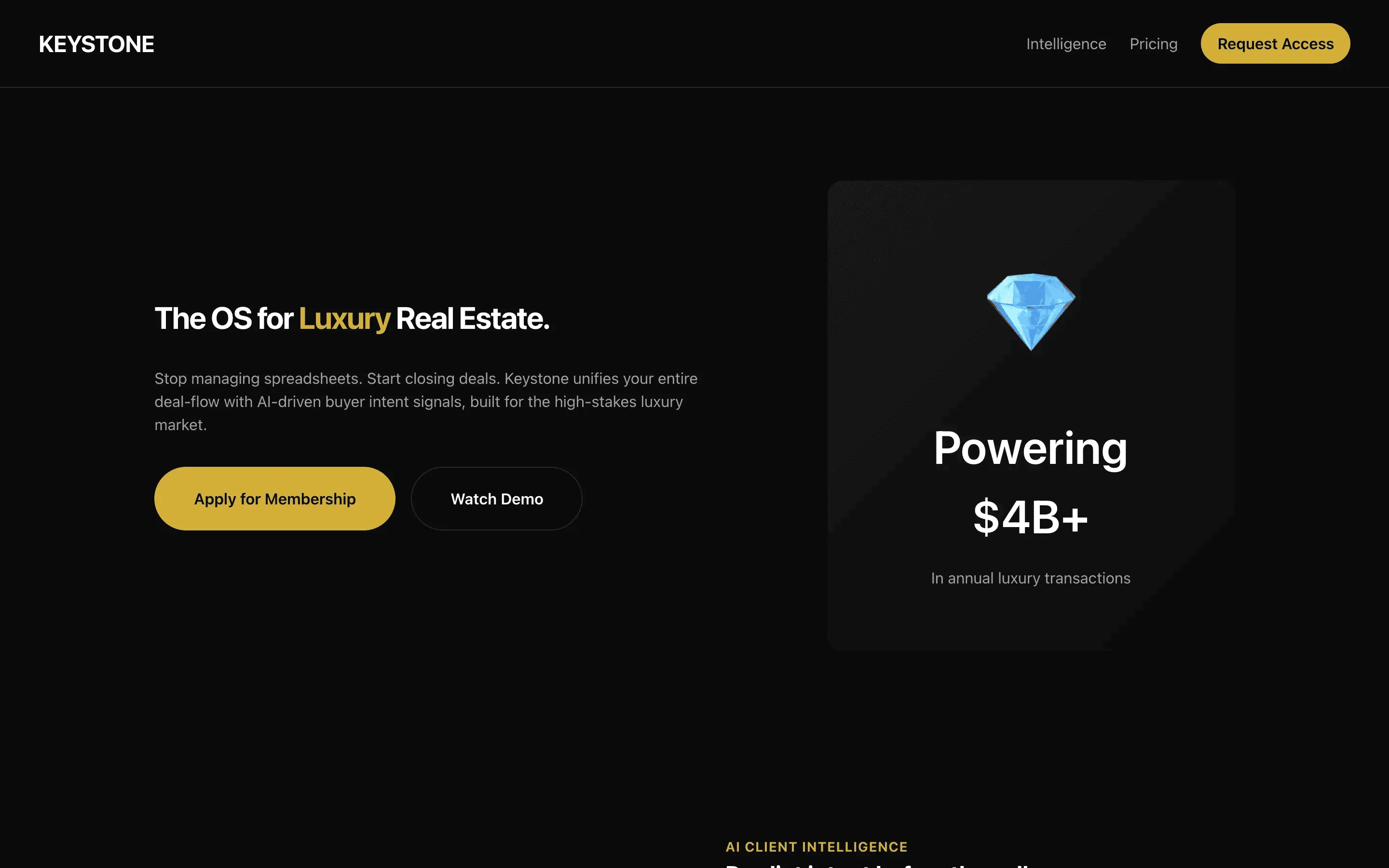

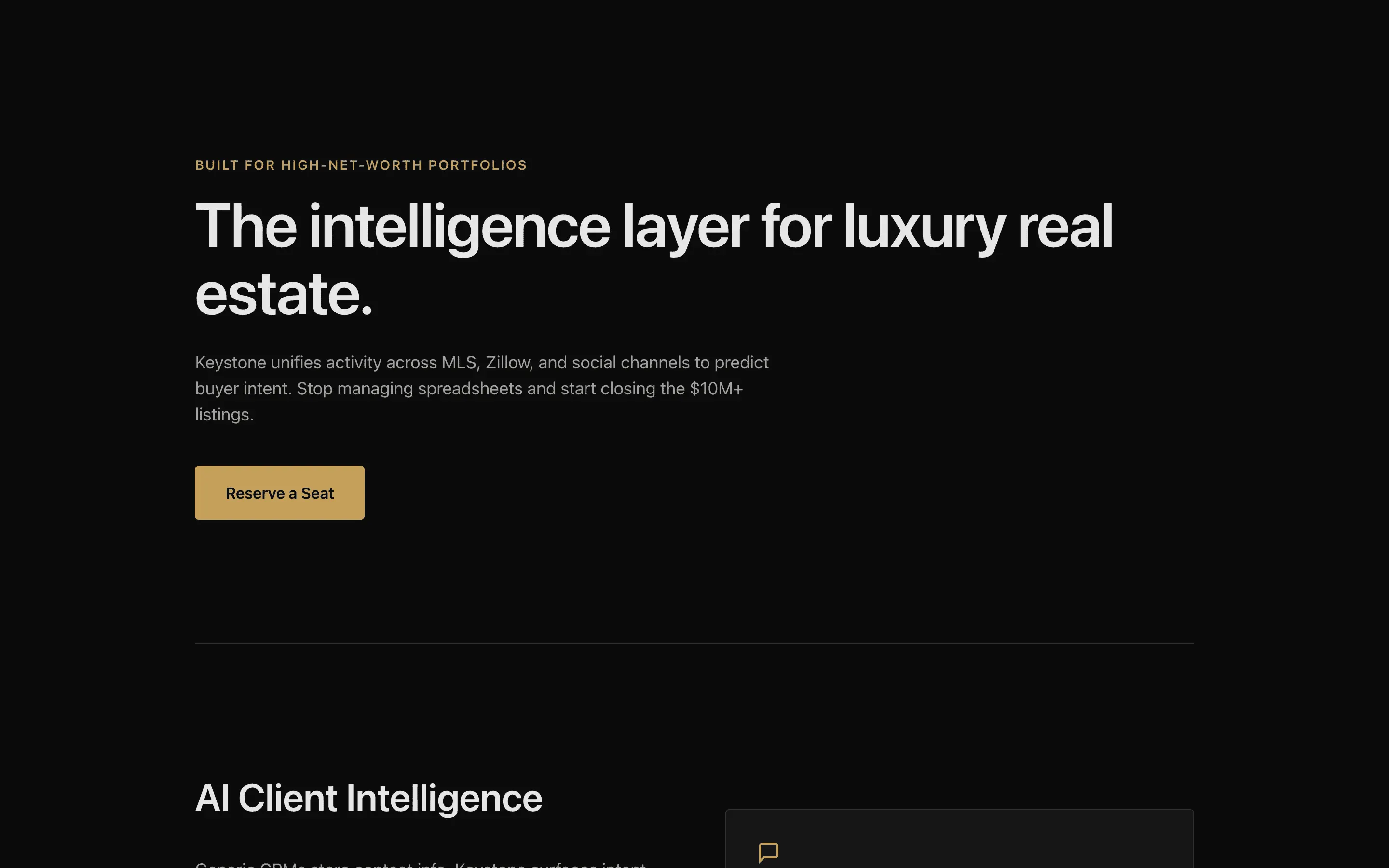

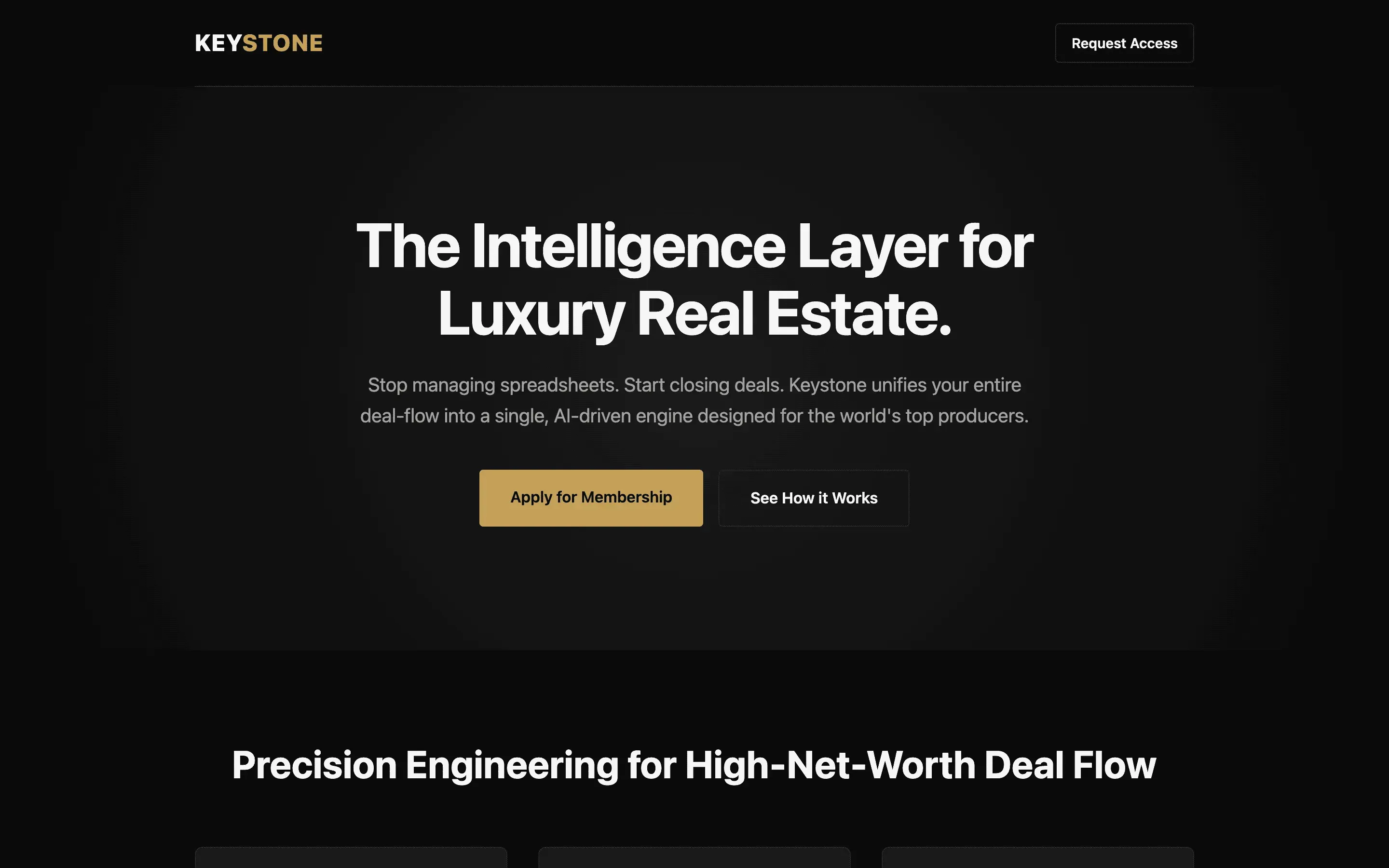

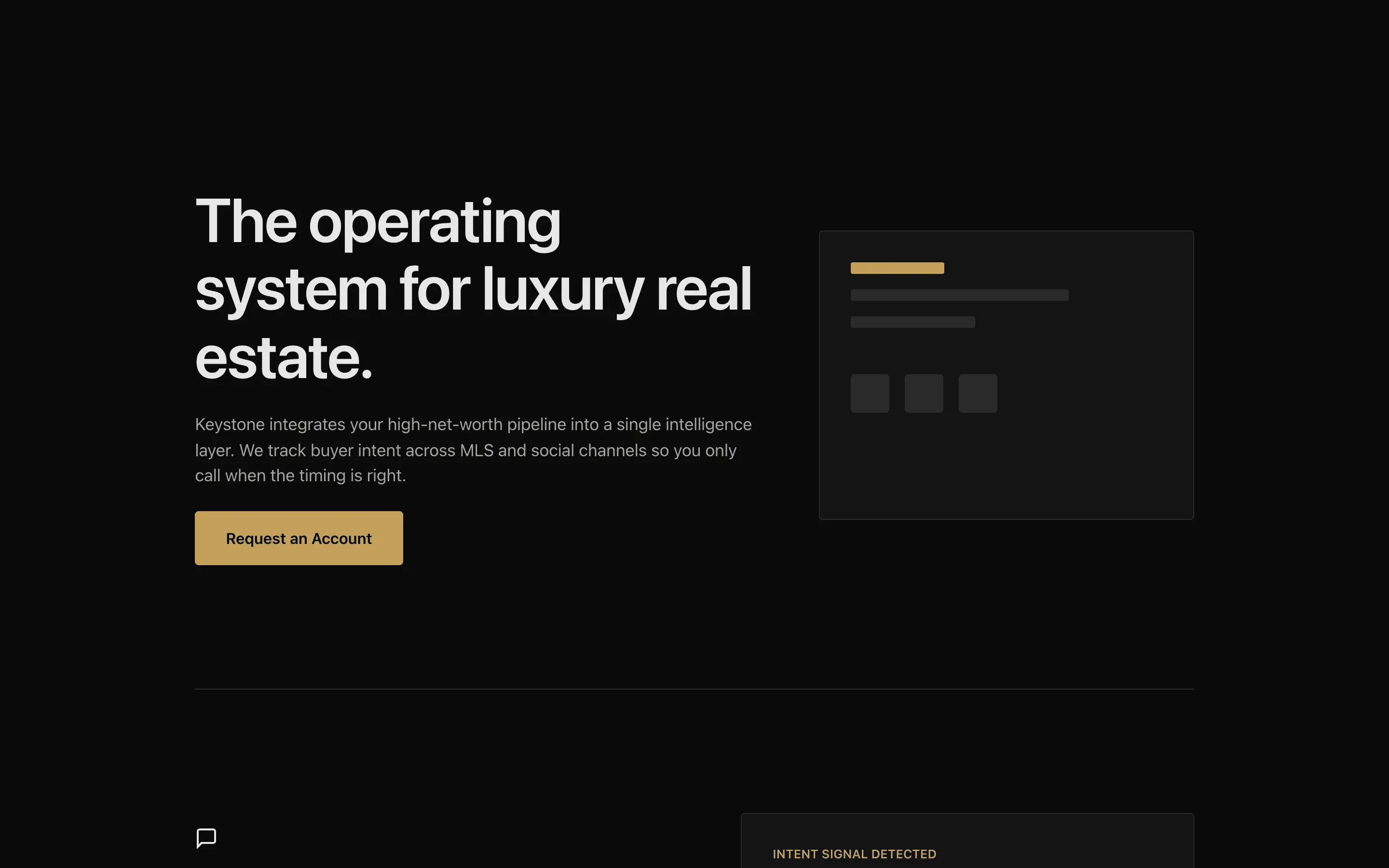

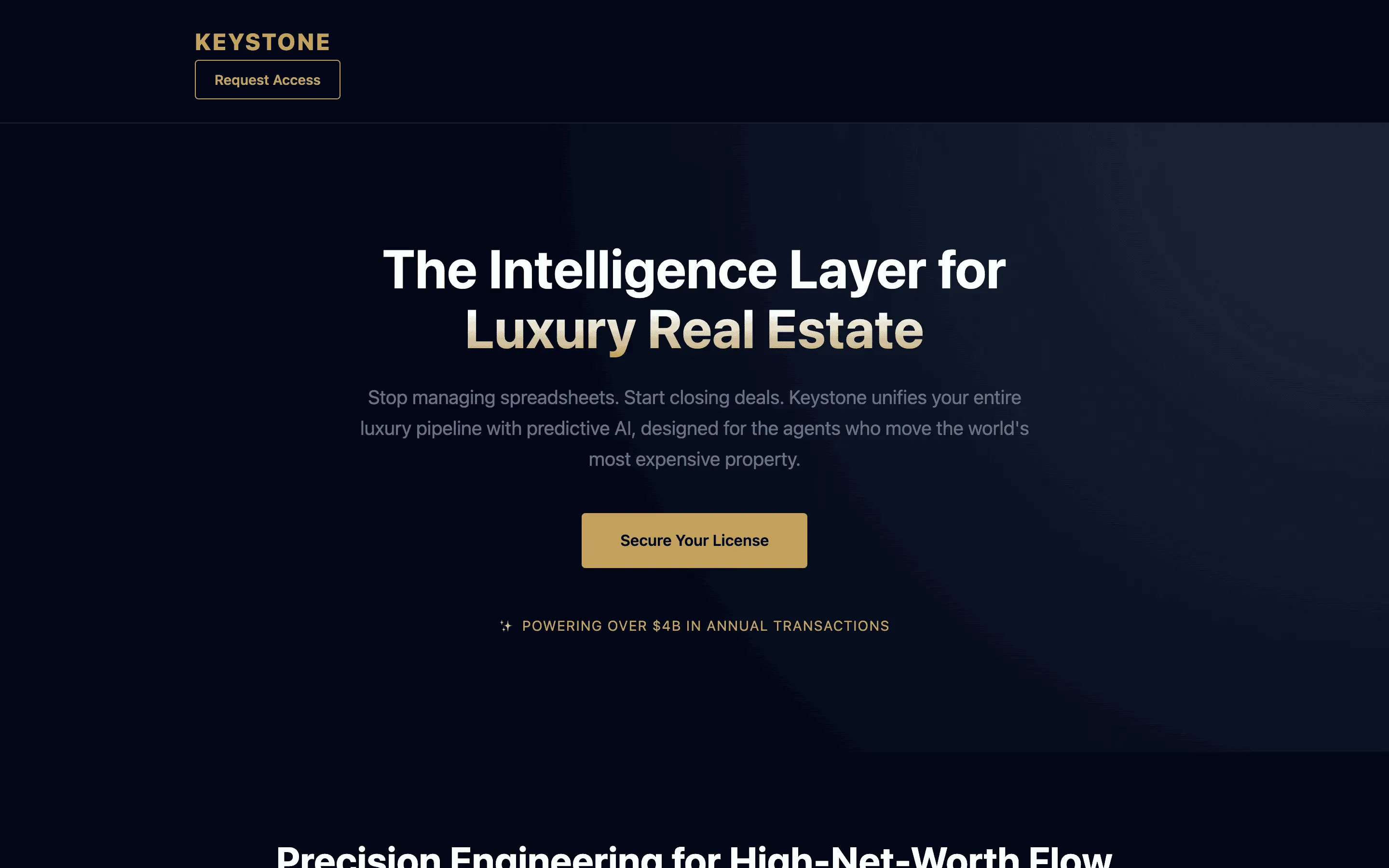

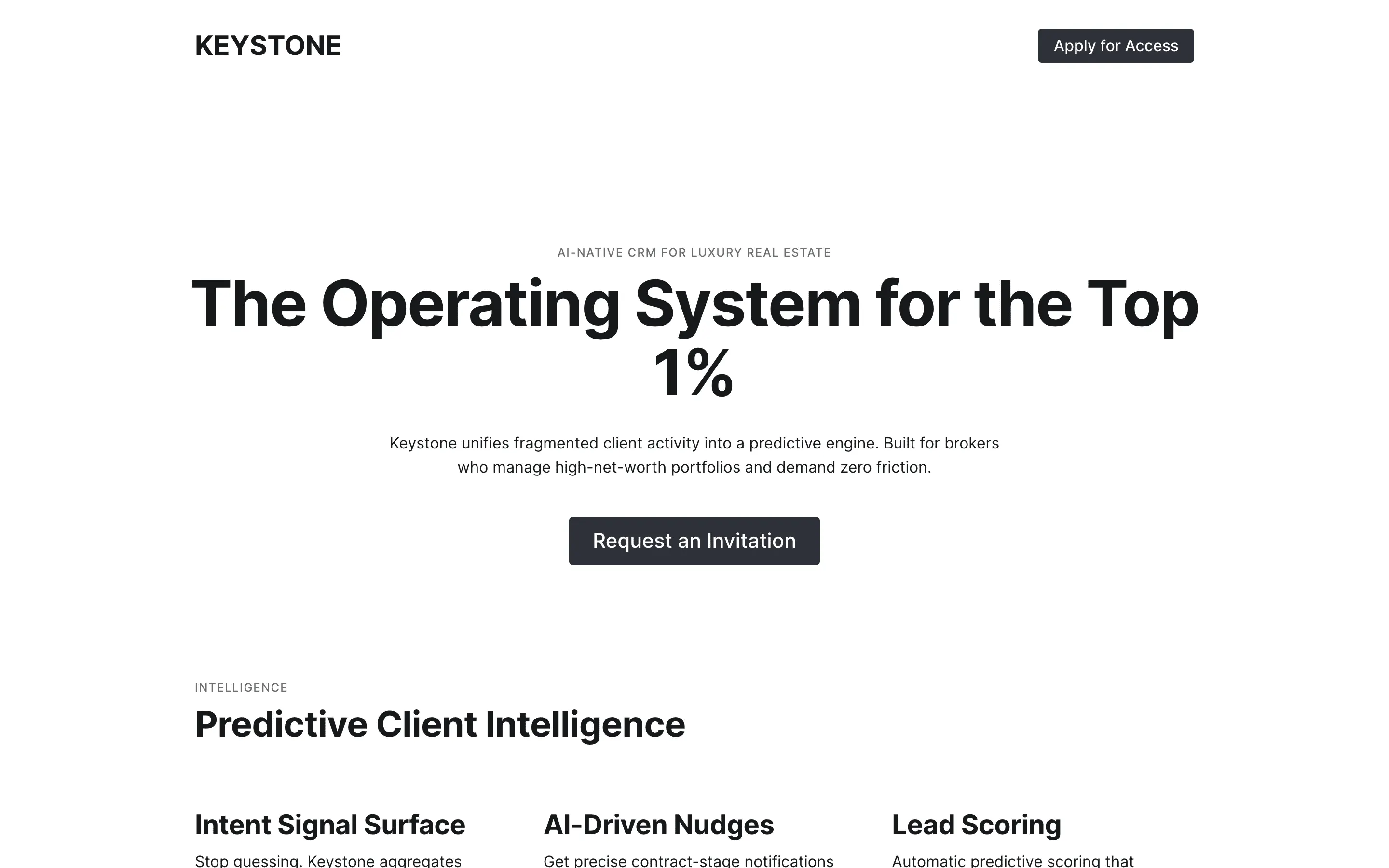

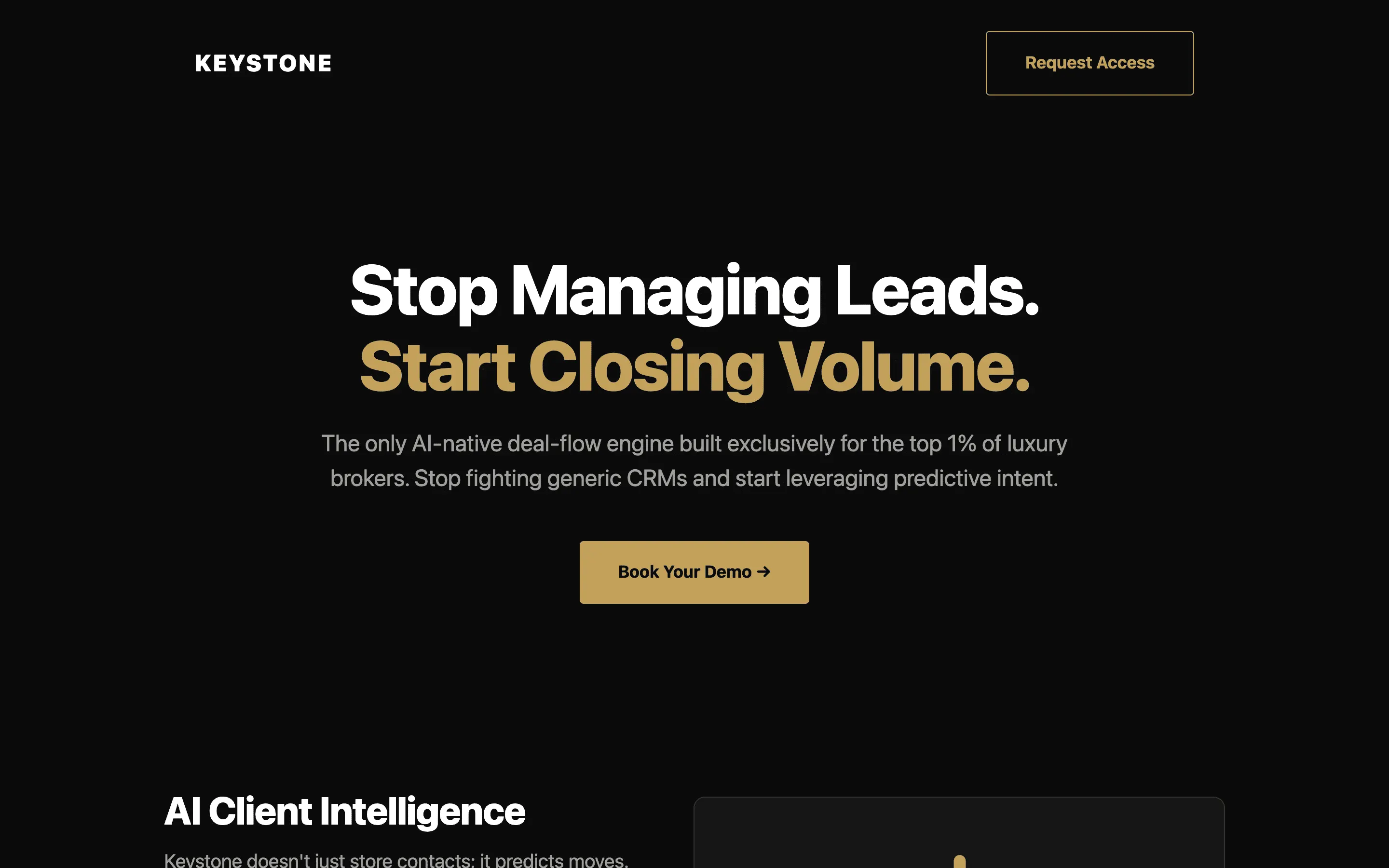

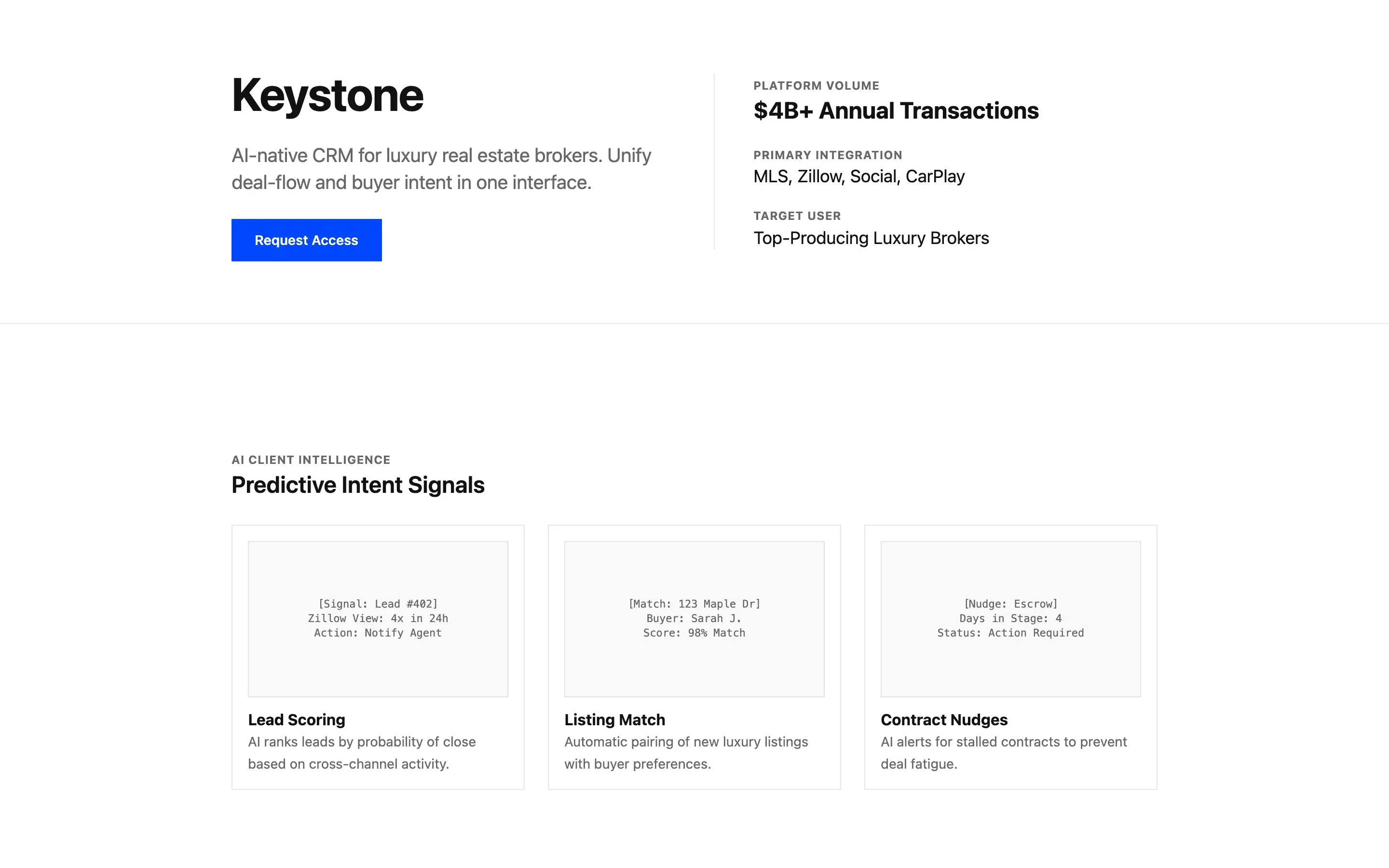

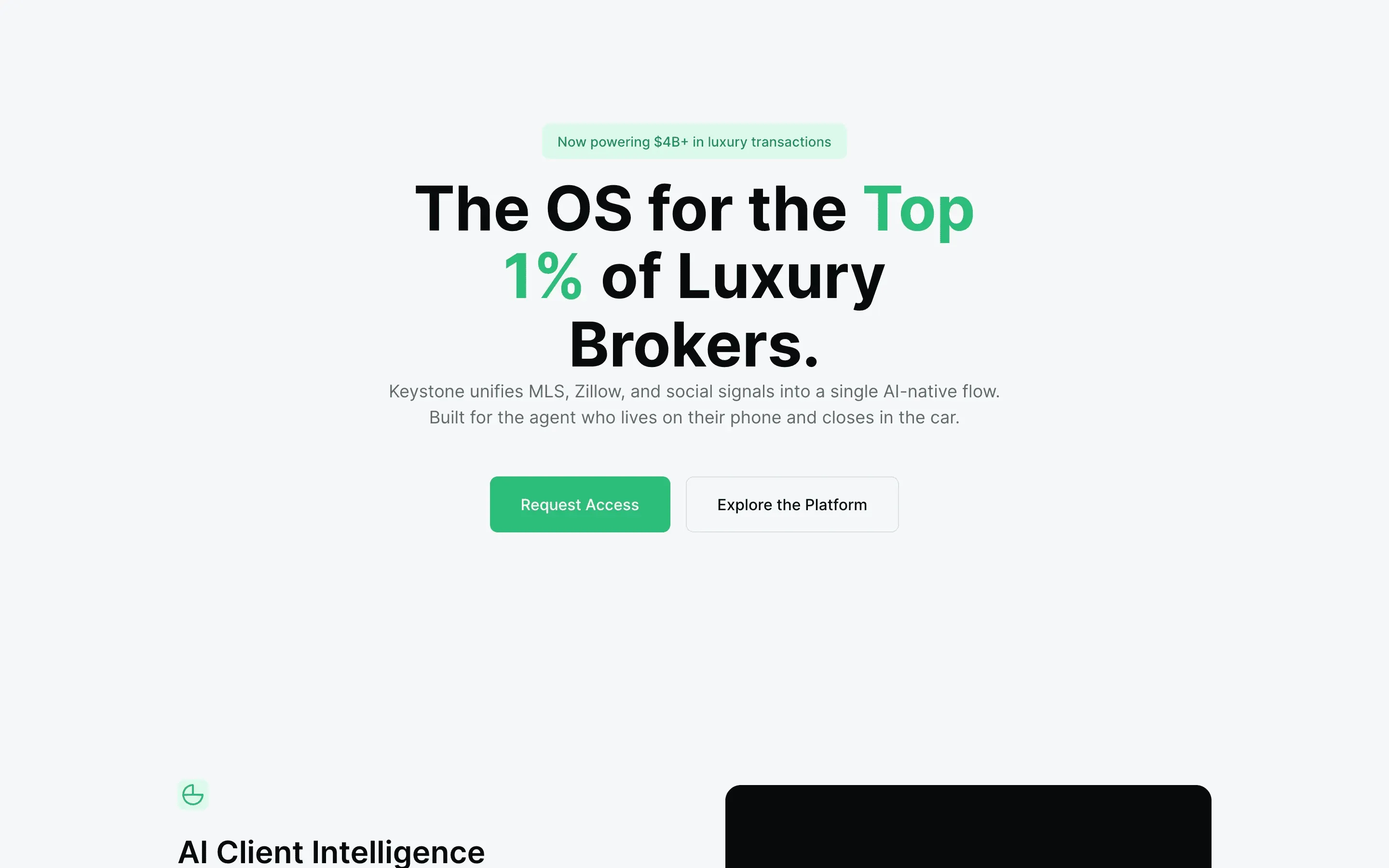

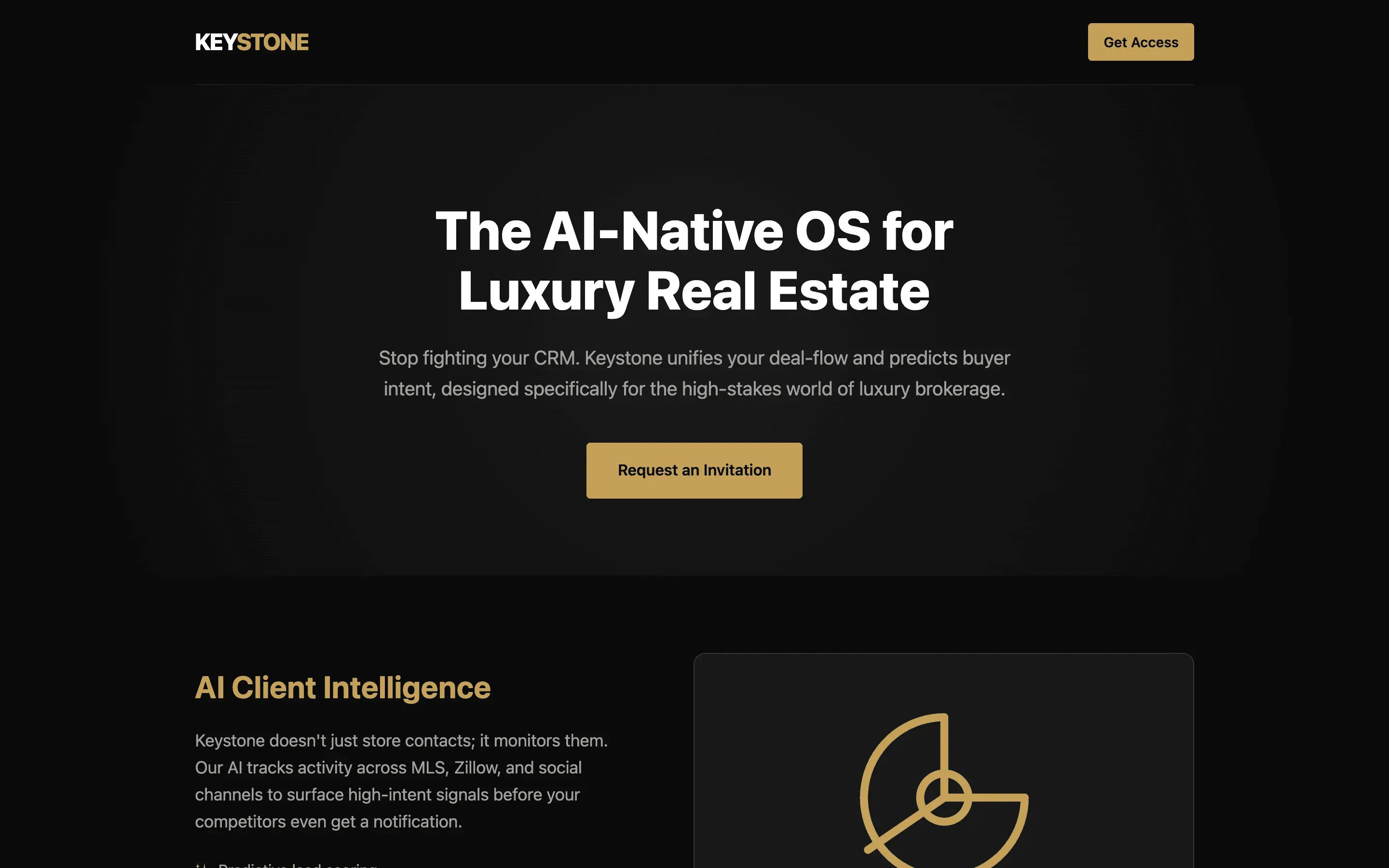

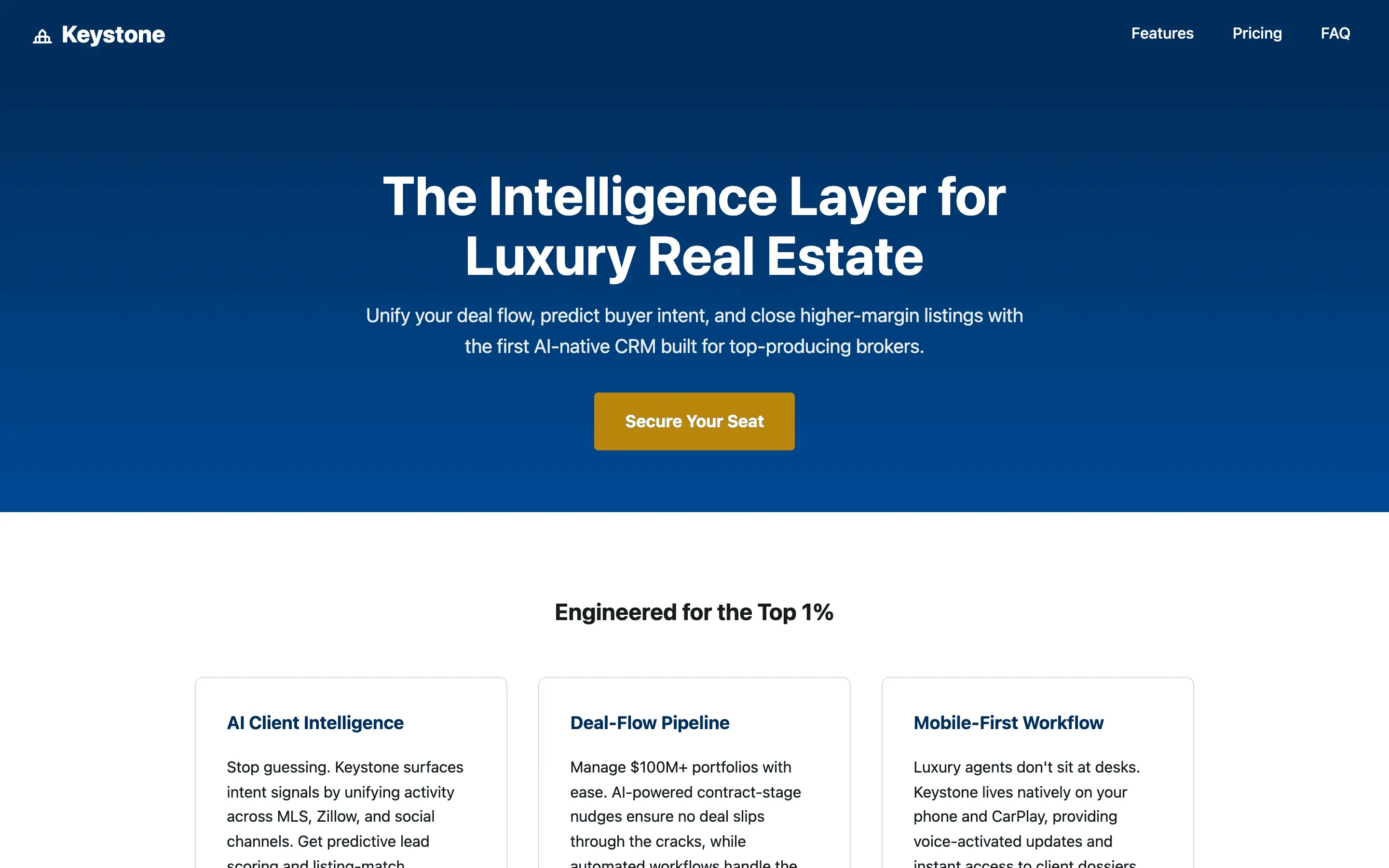

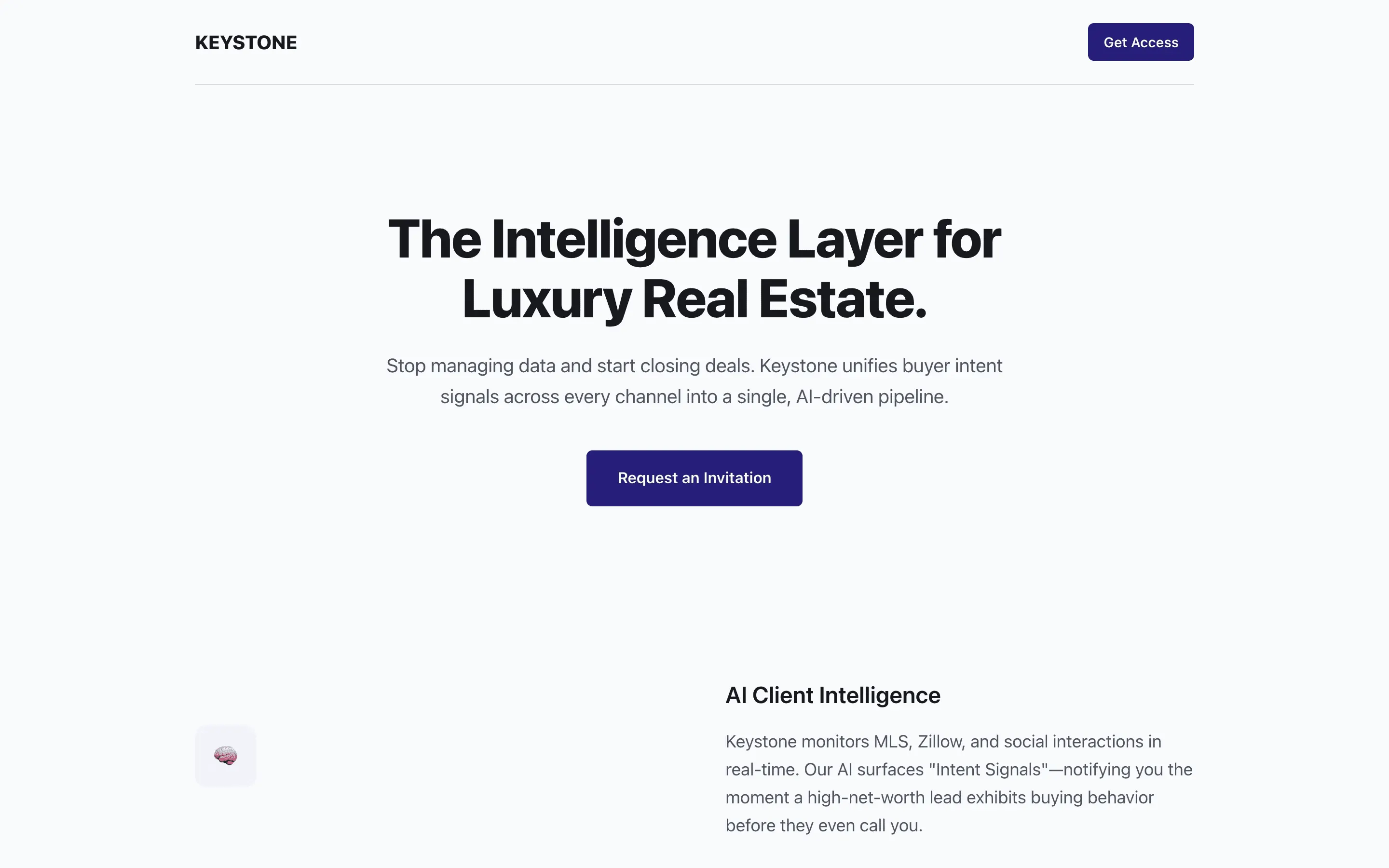

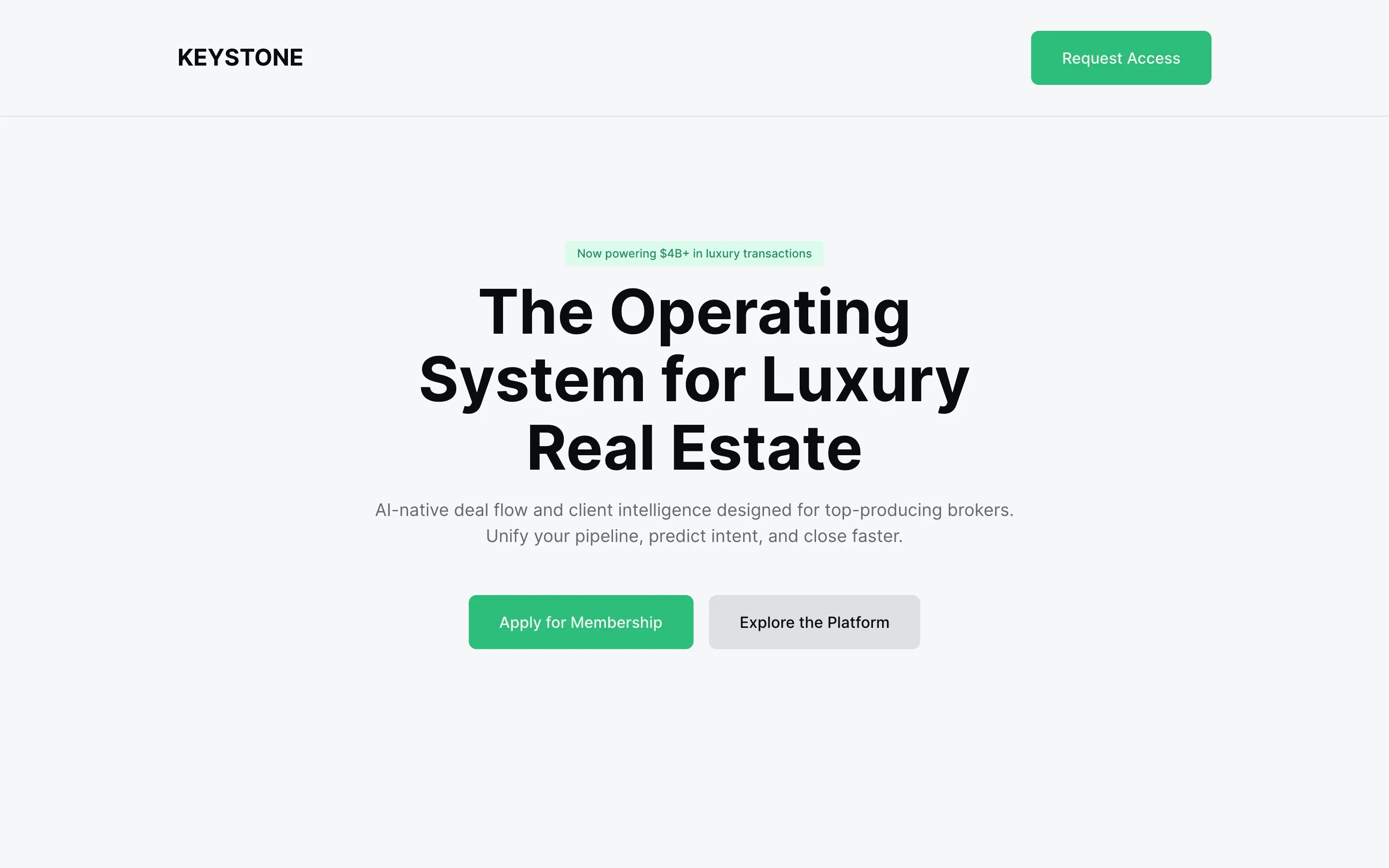

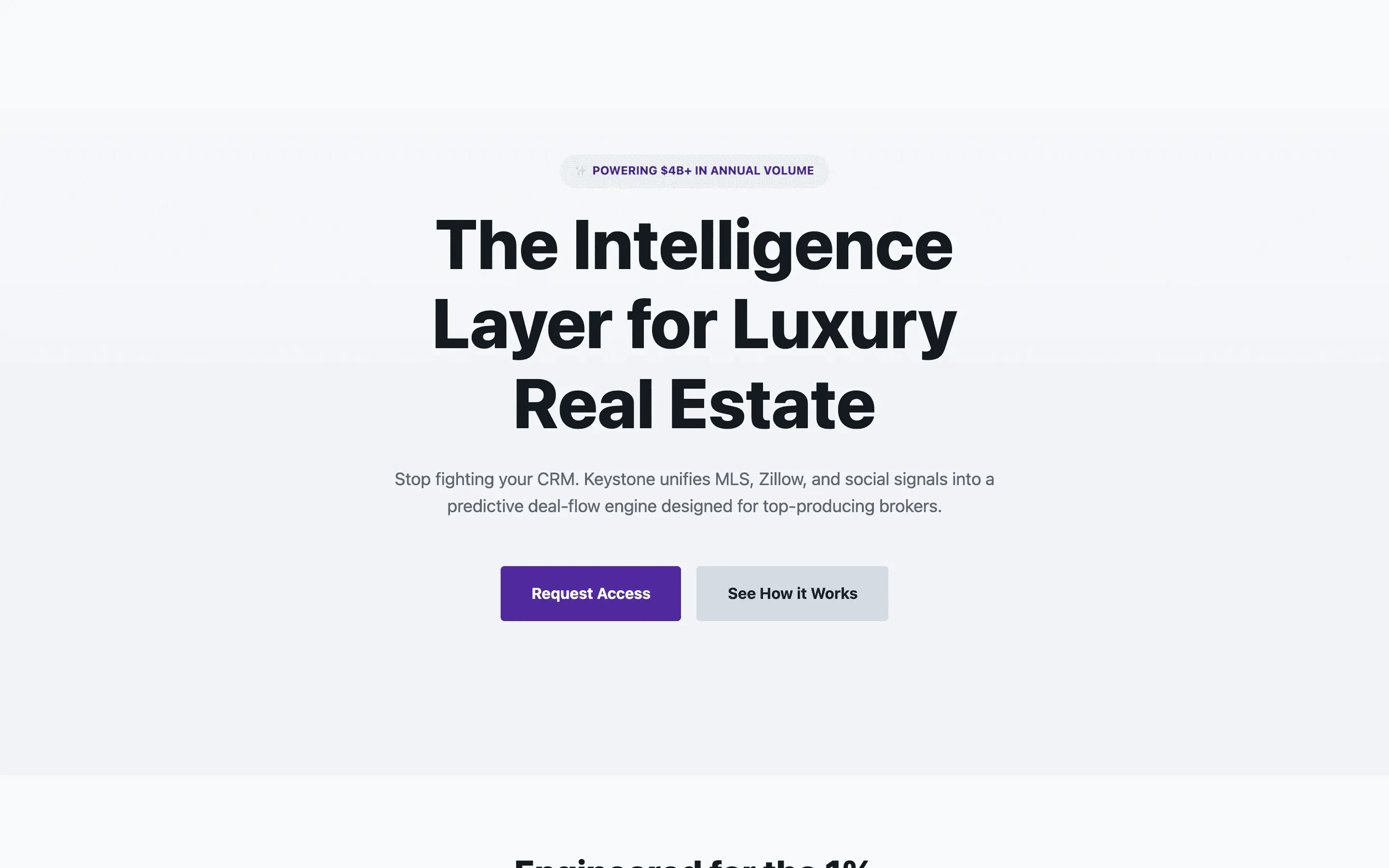

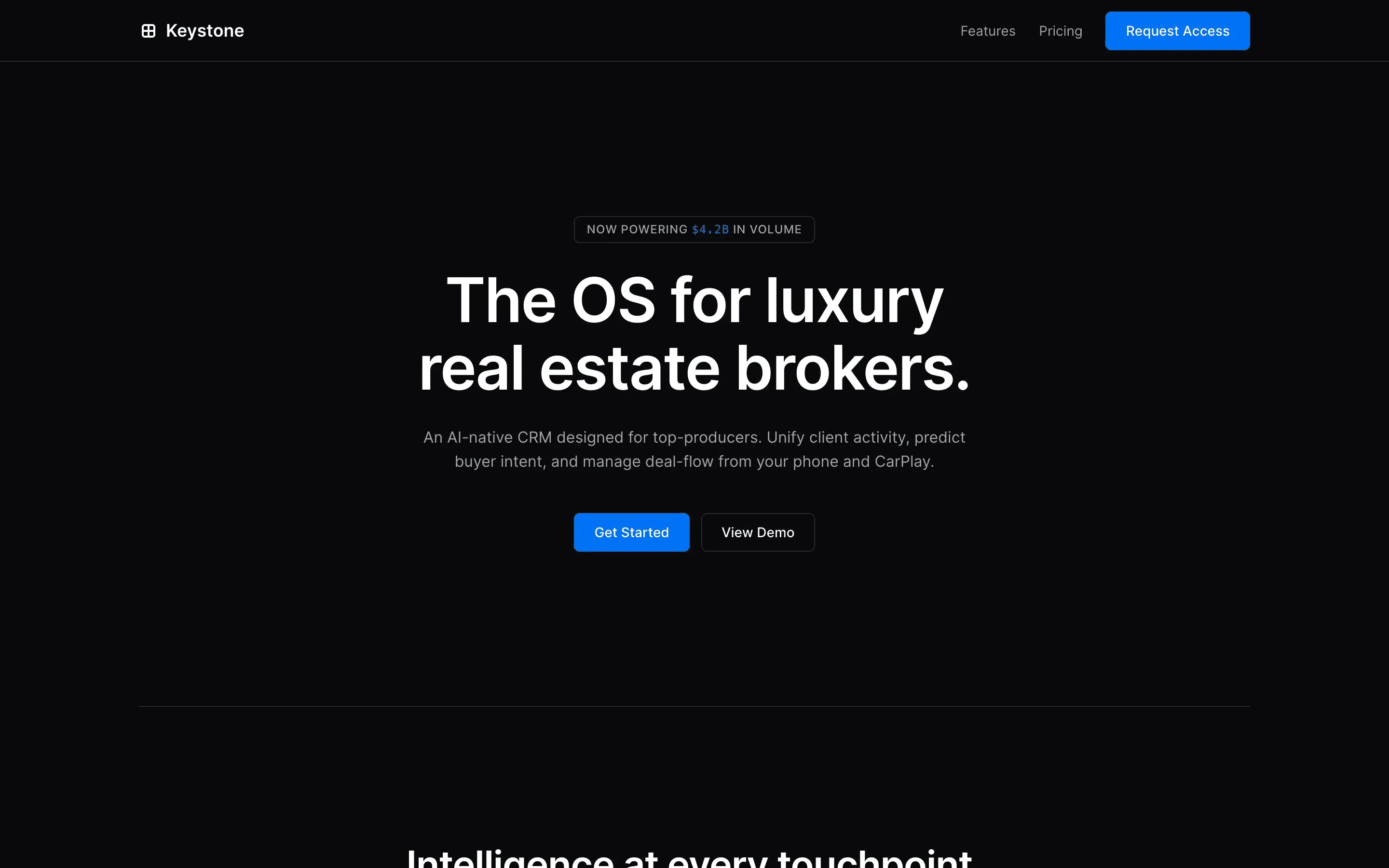

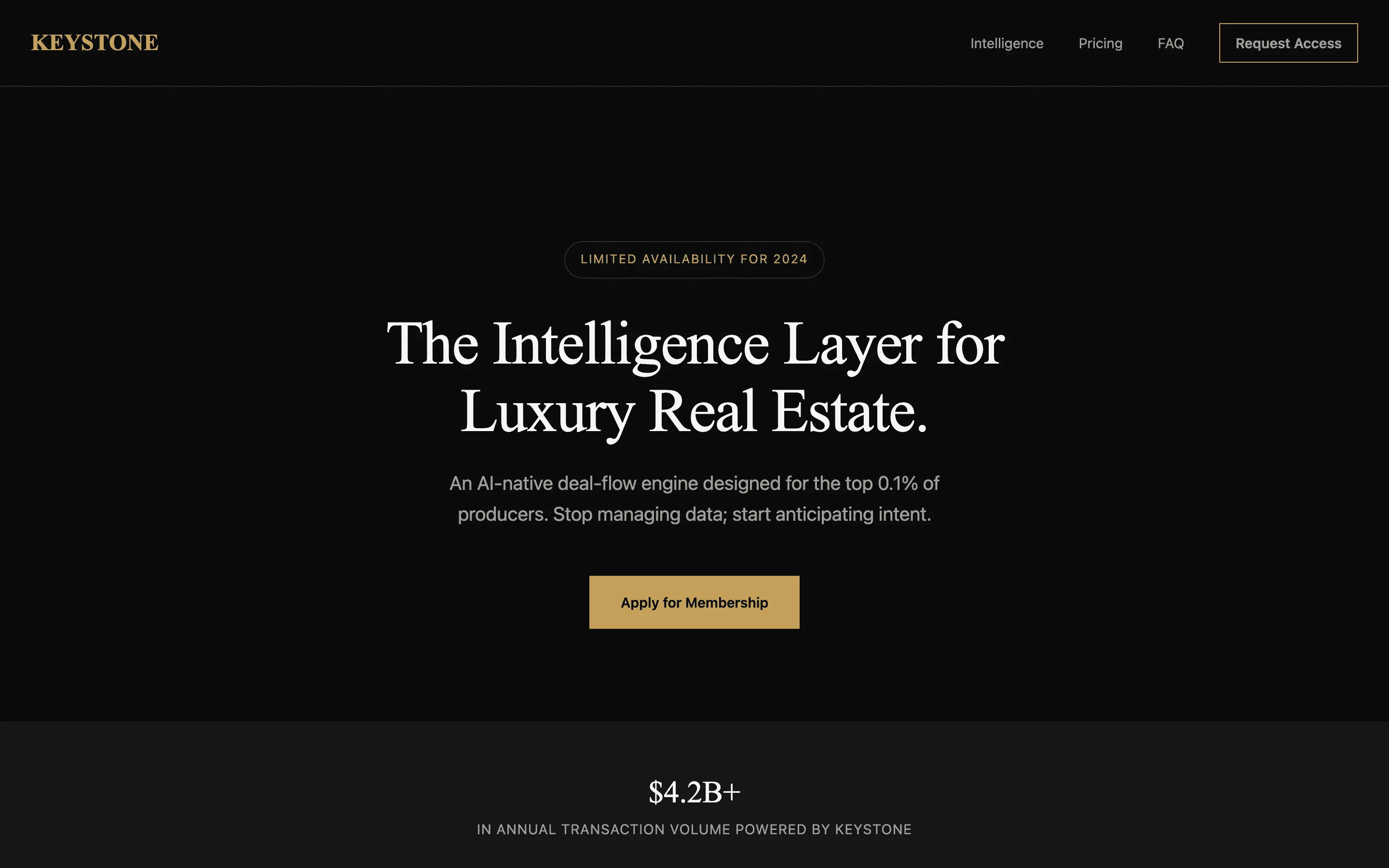

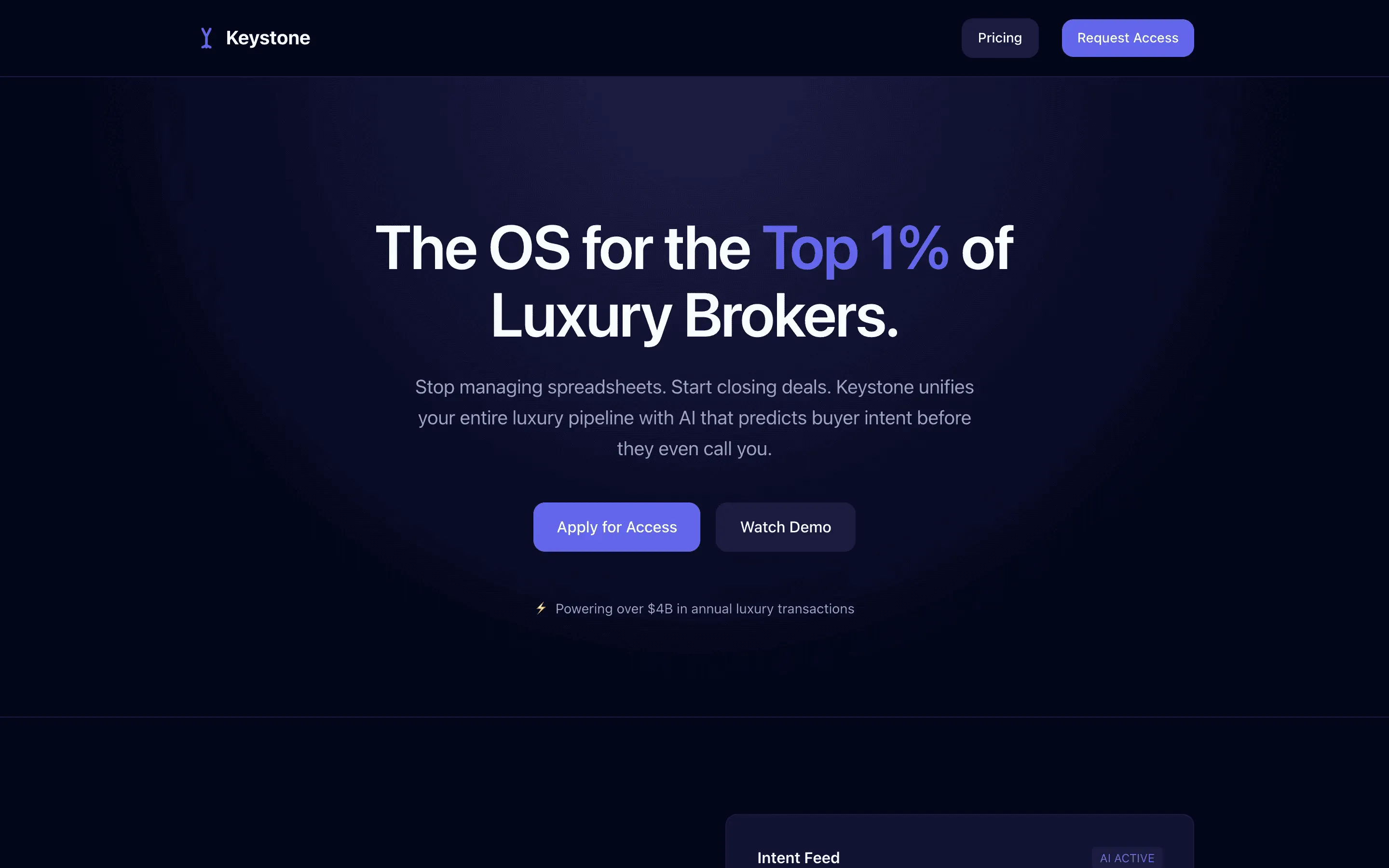

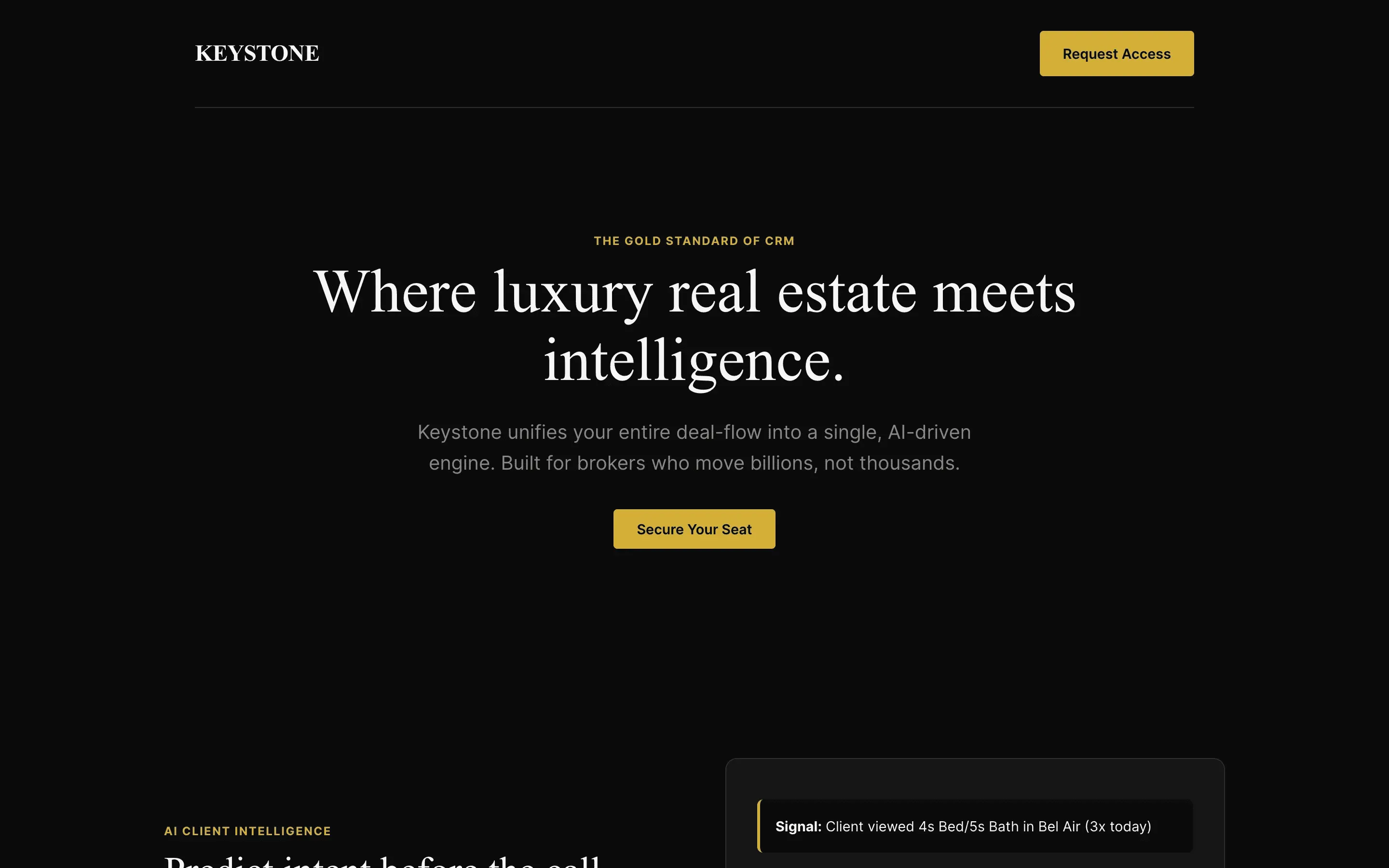

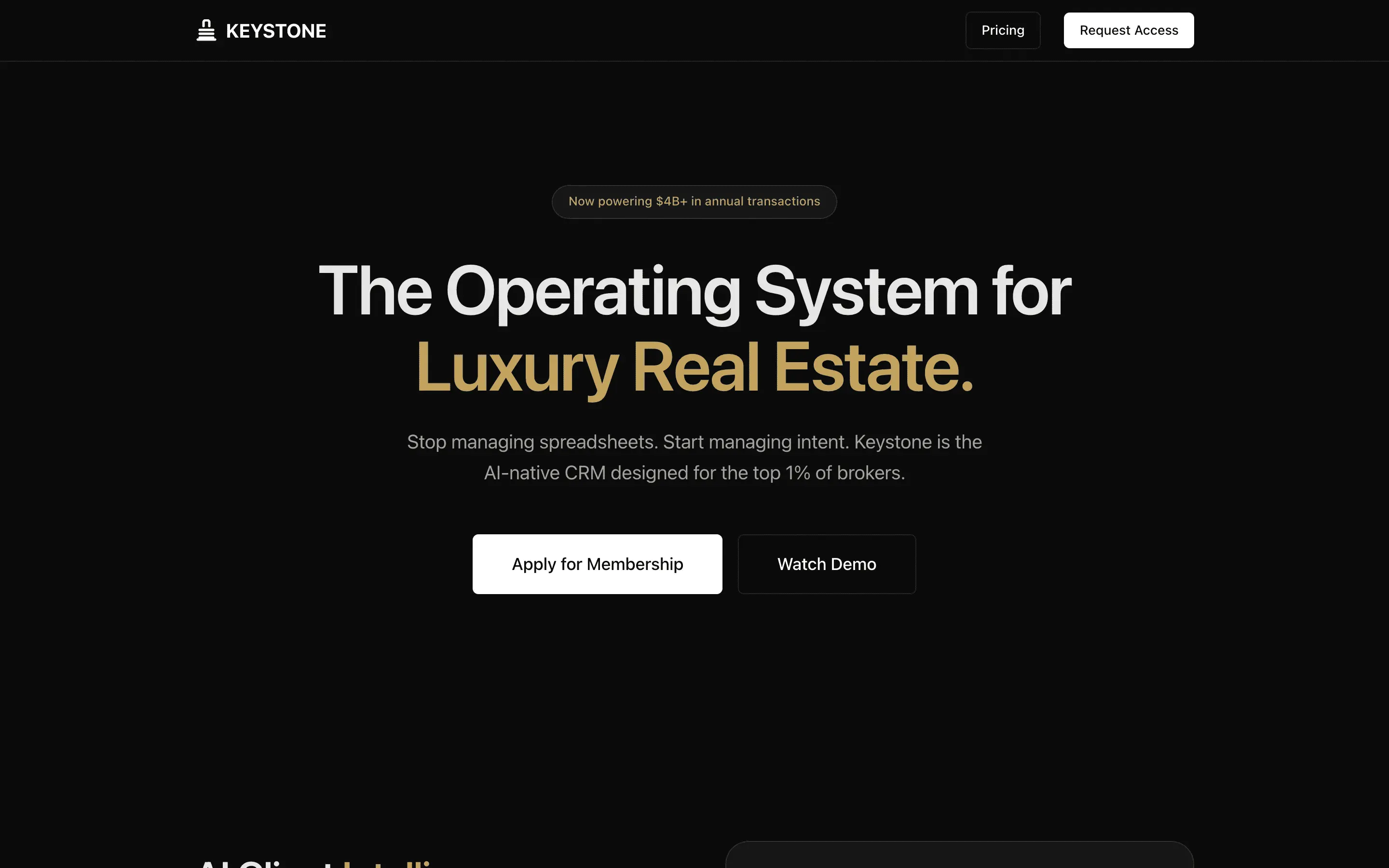

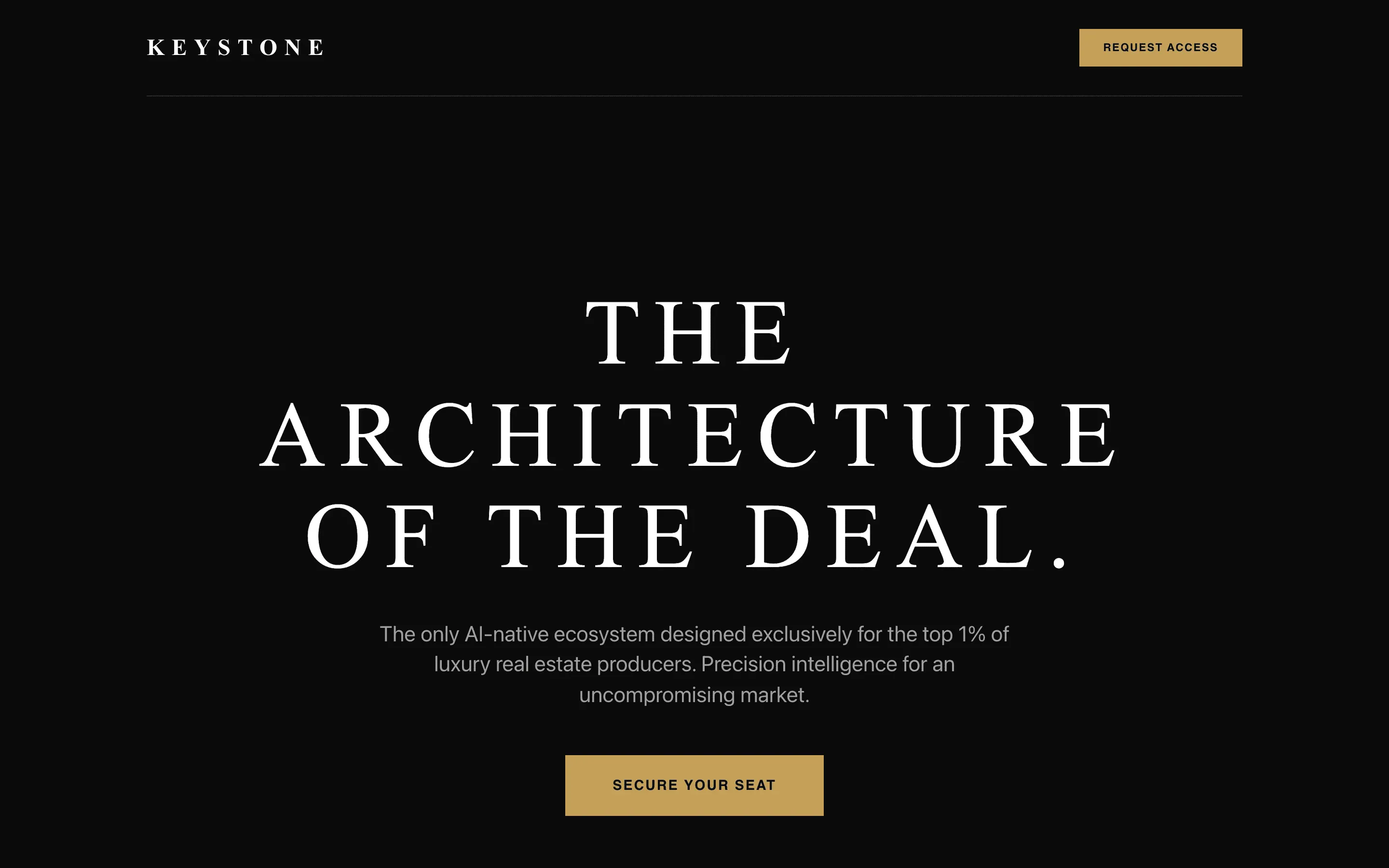

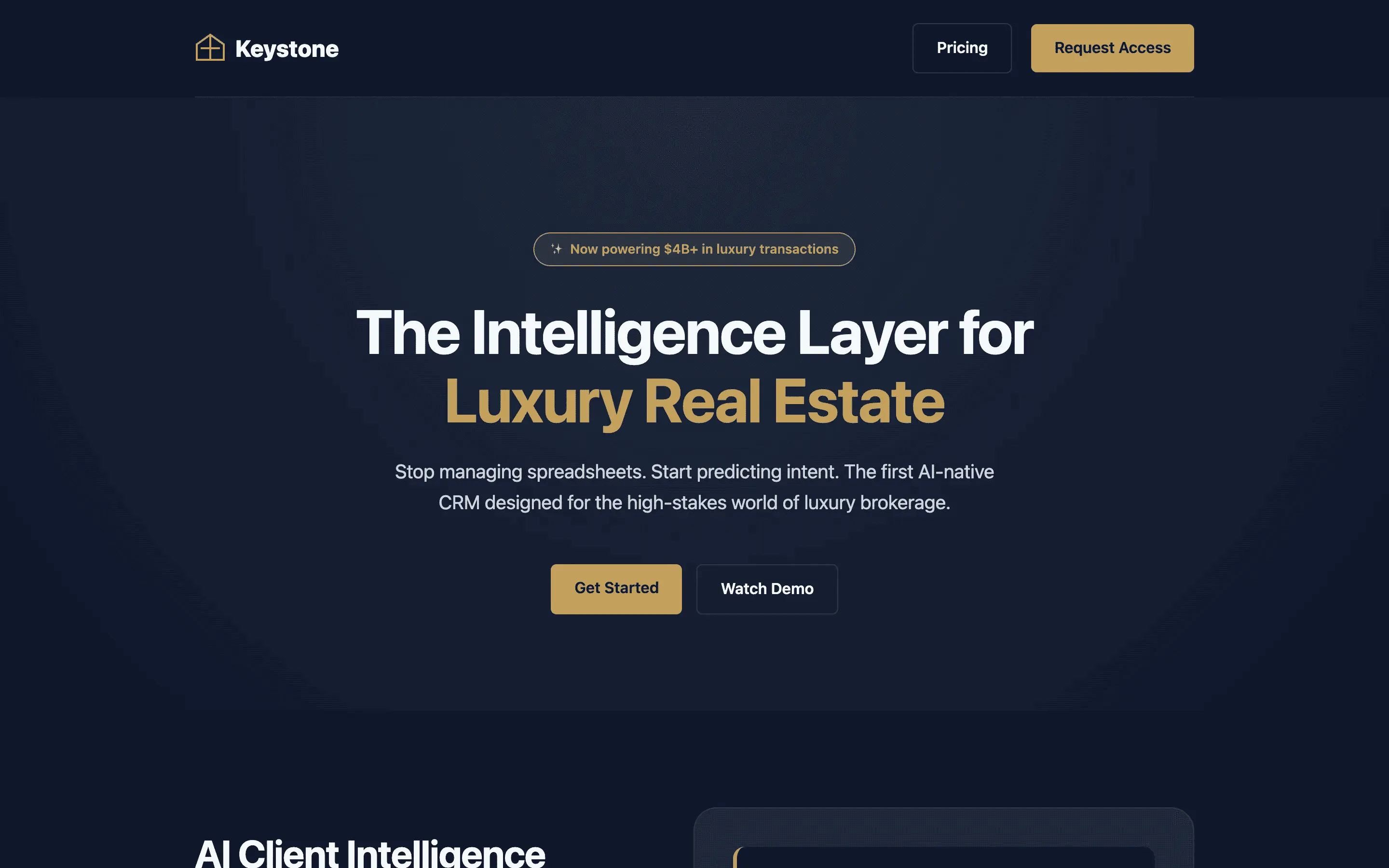

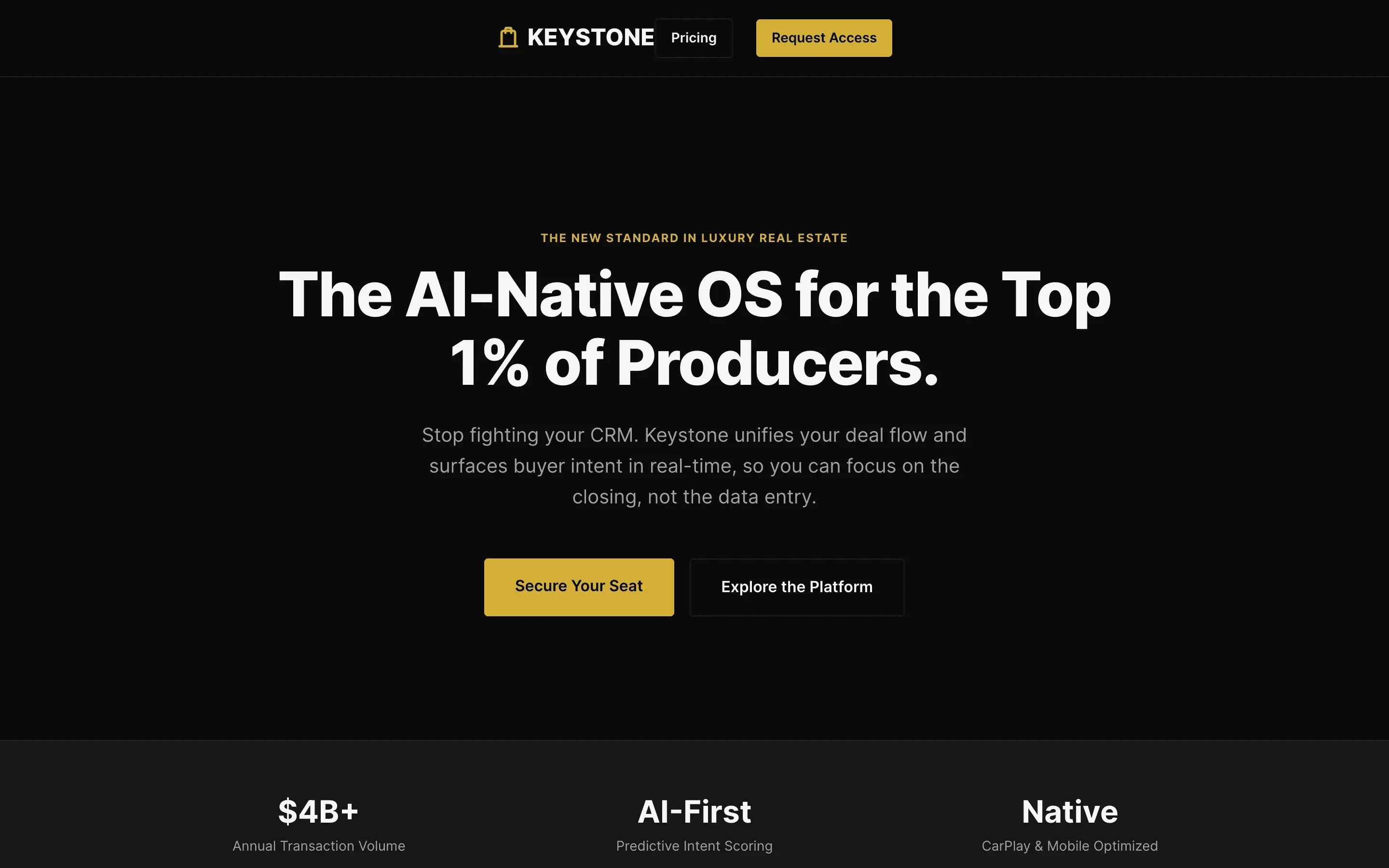

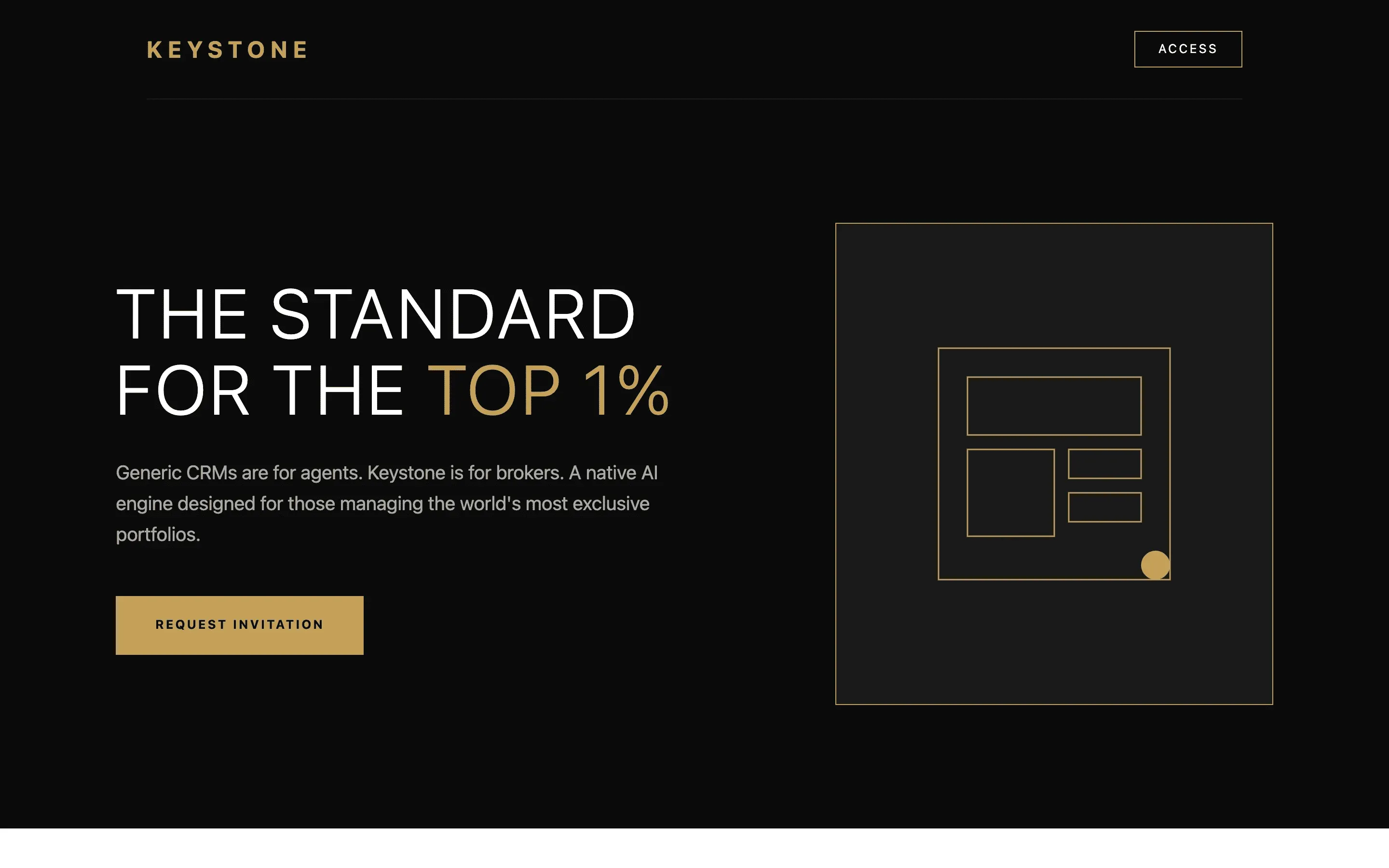

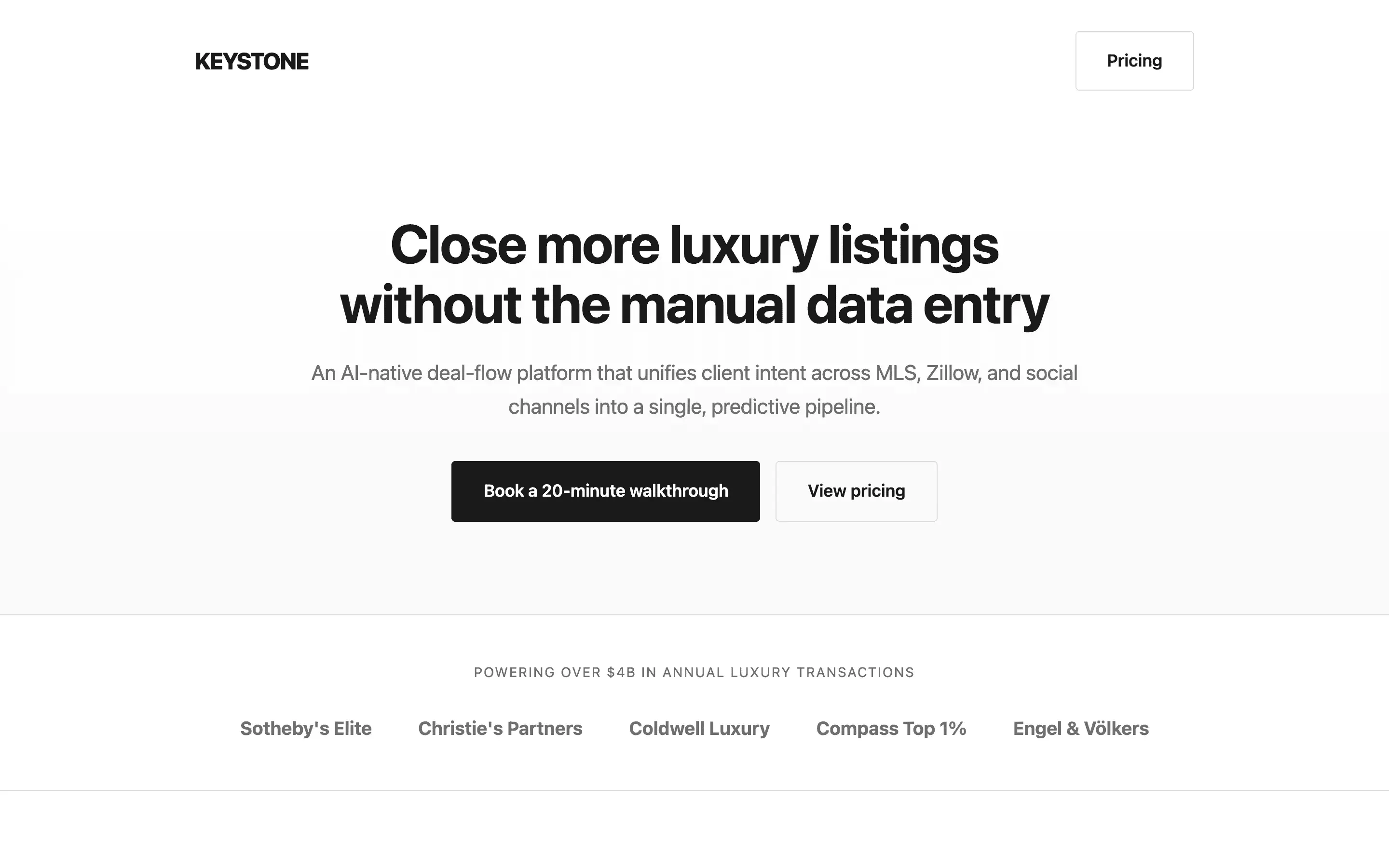

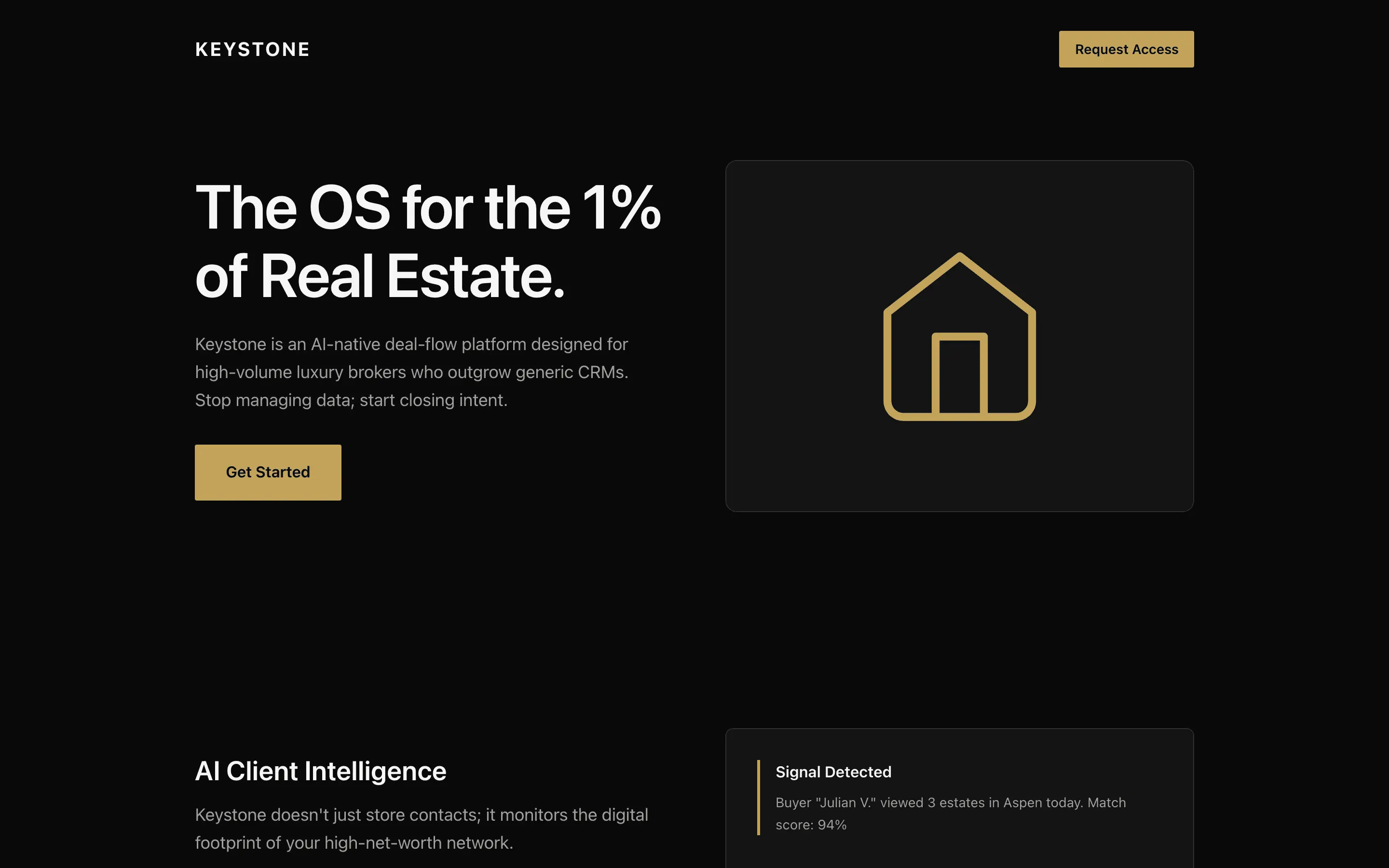

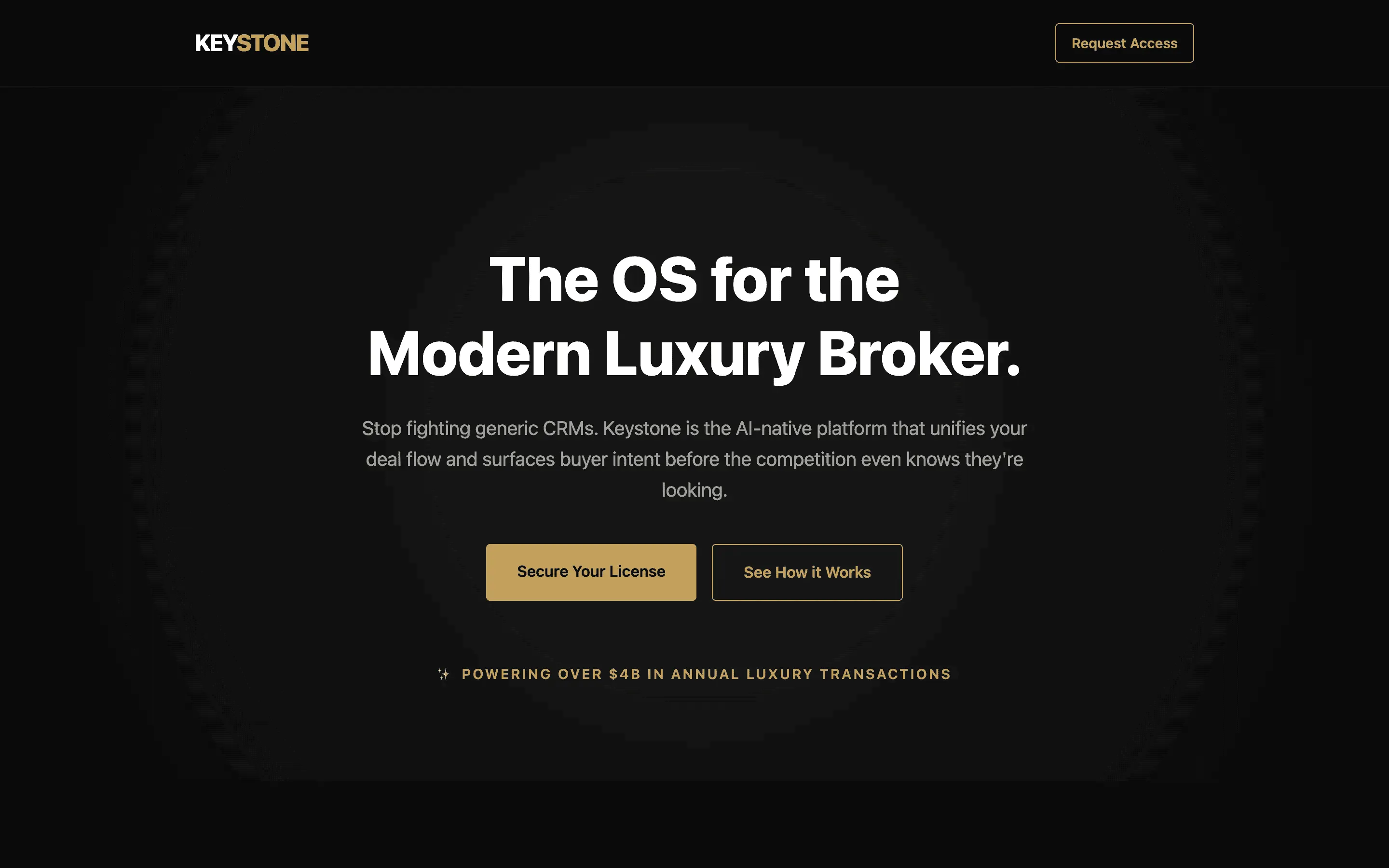

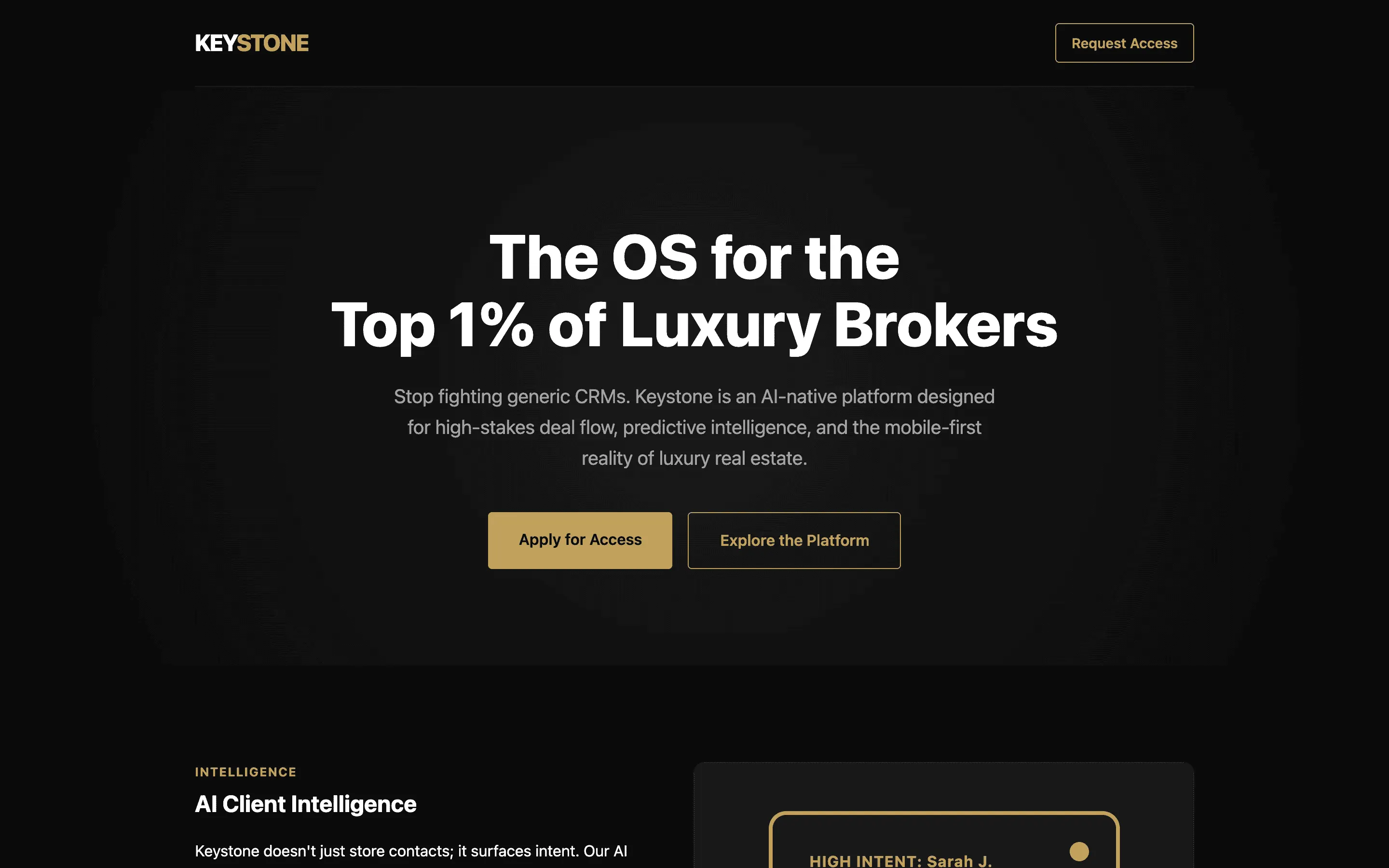

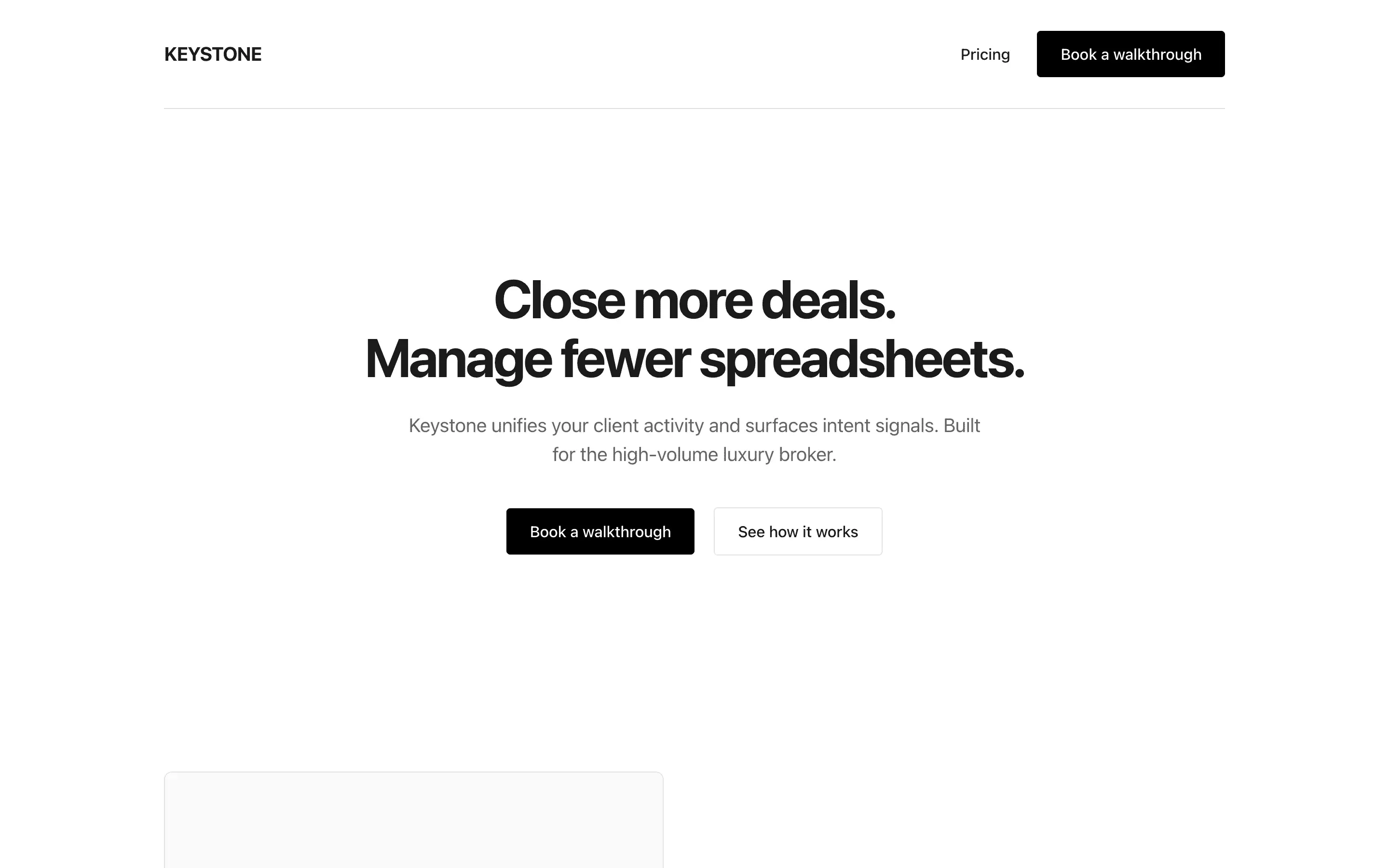

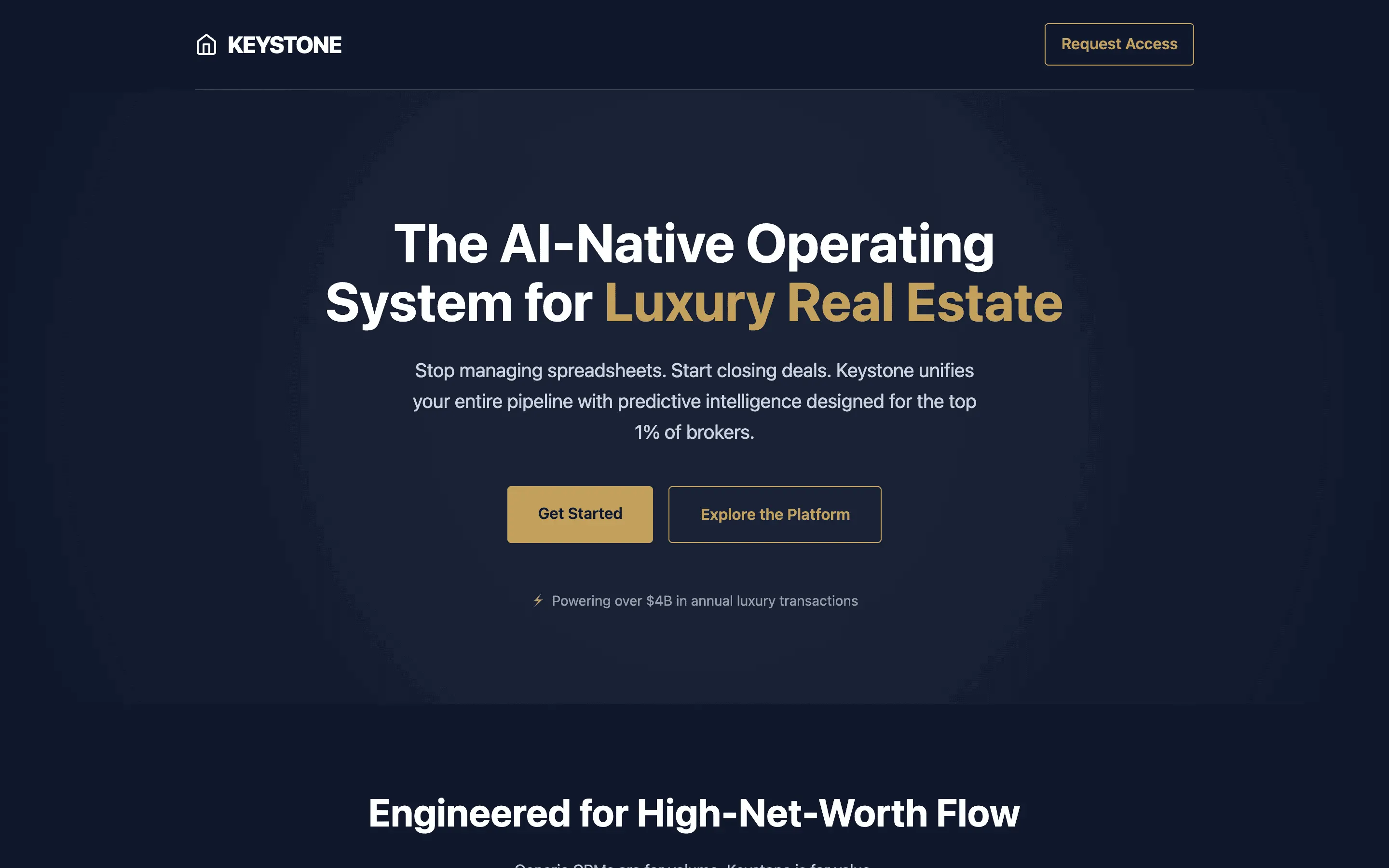

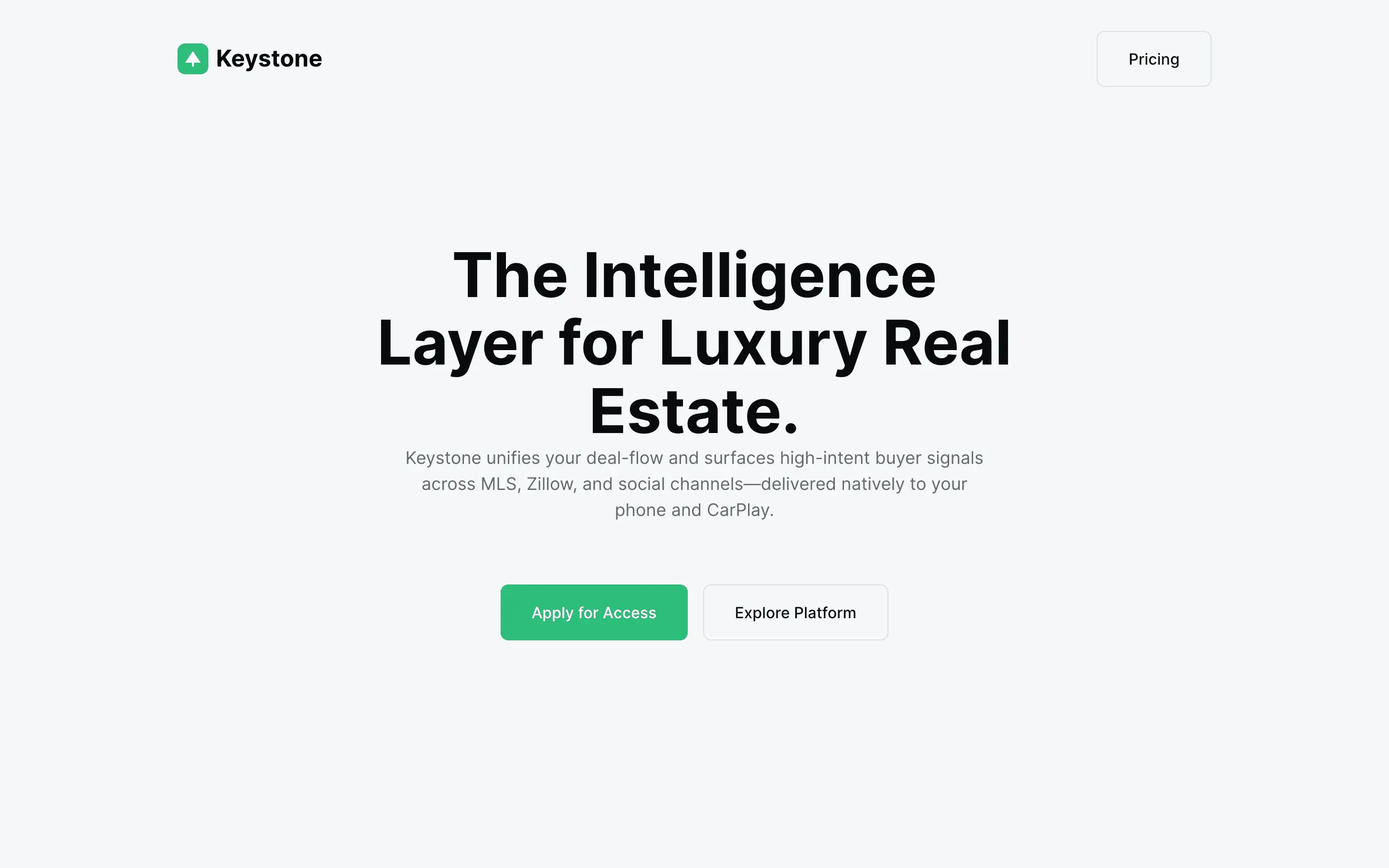

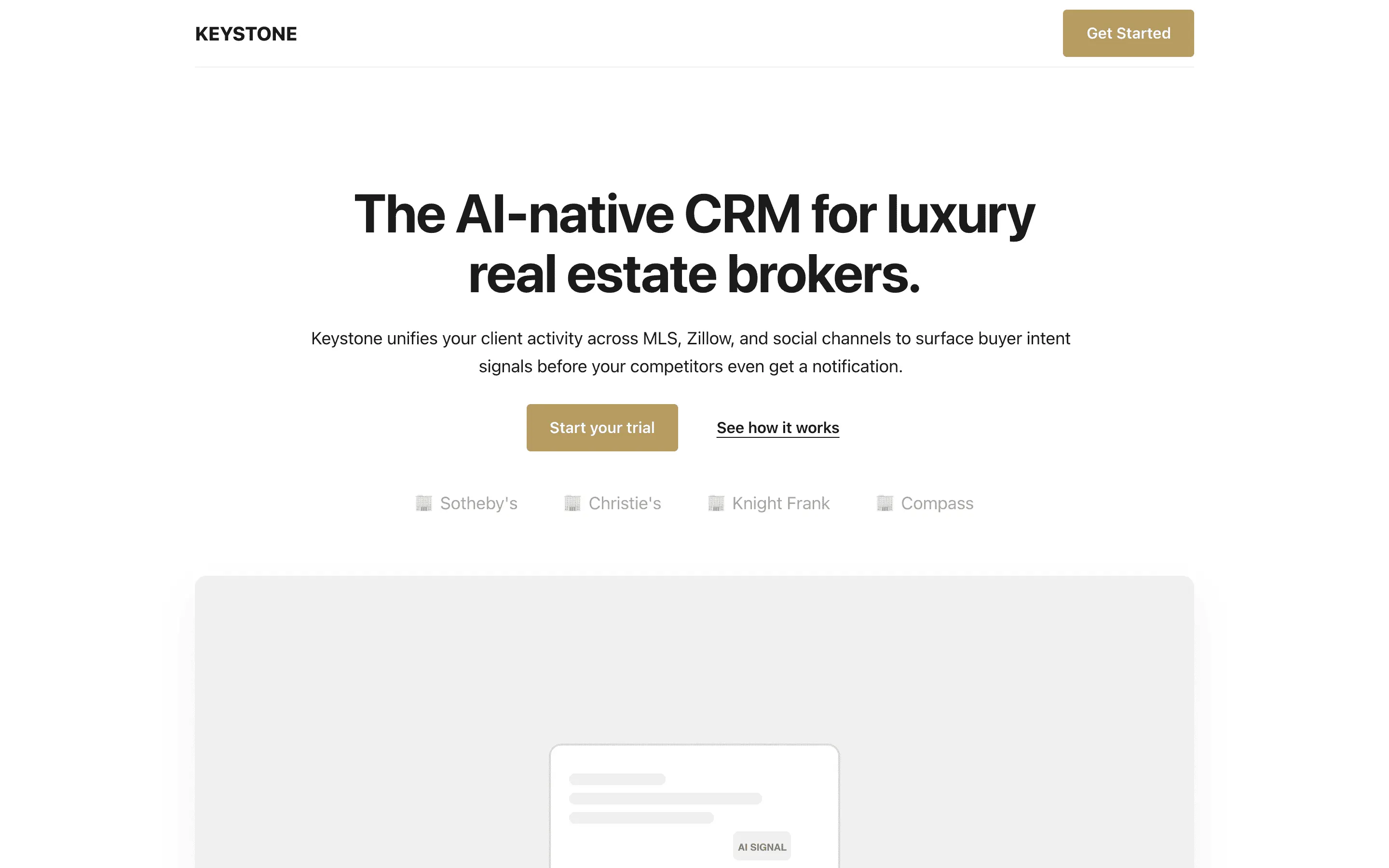

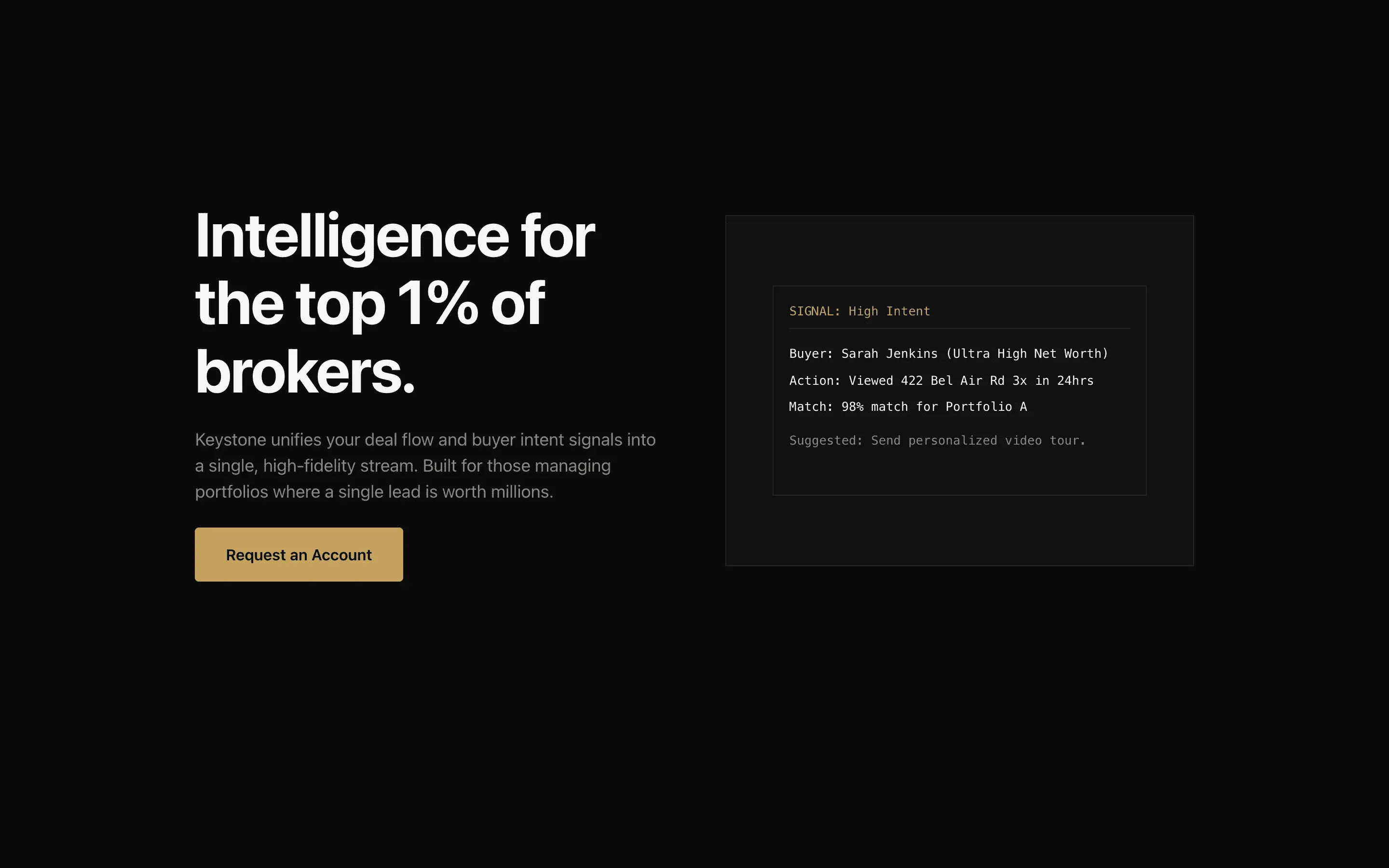

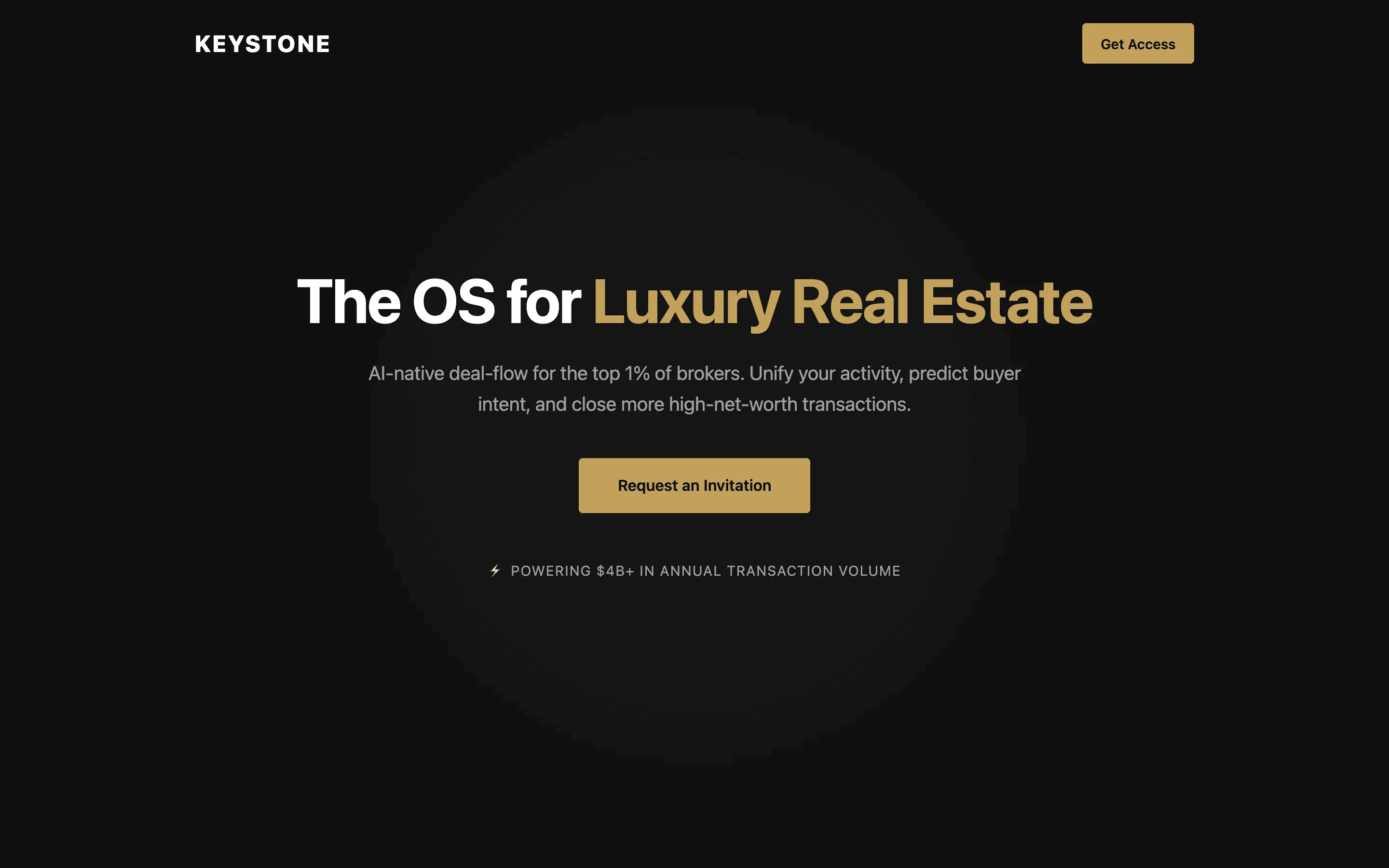

Pins the model's taste to specific, nameable sites it has seen in training, replacing vague style words with concrete reference behavior.

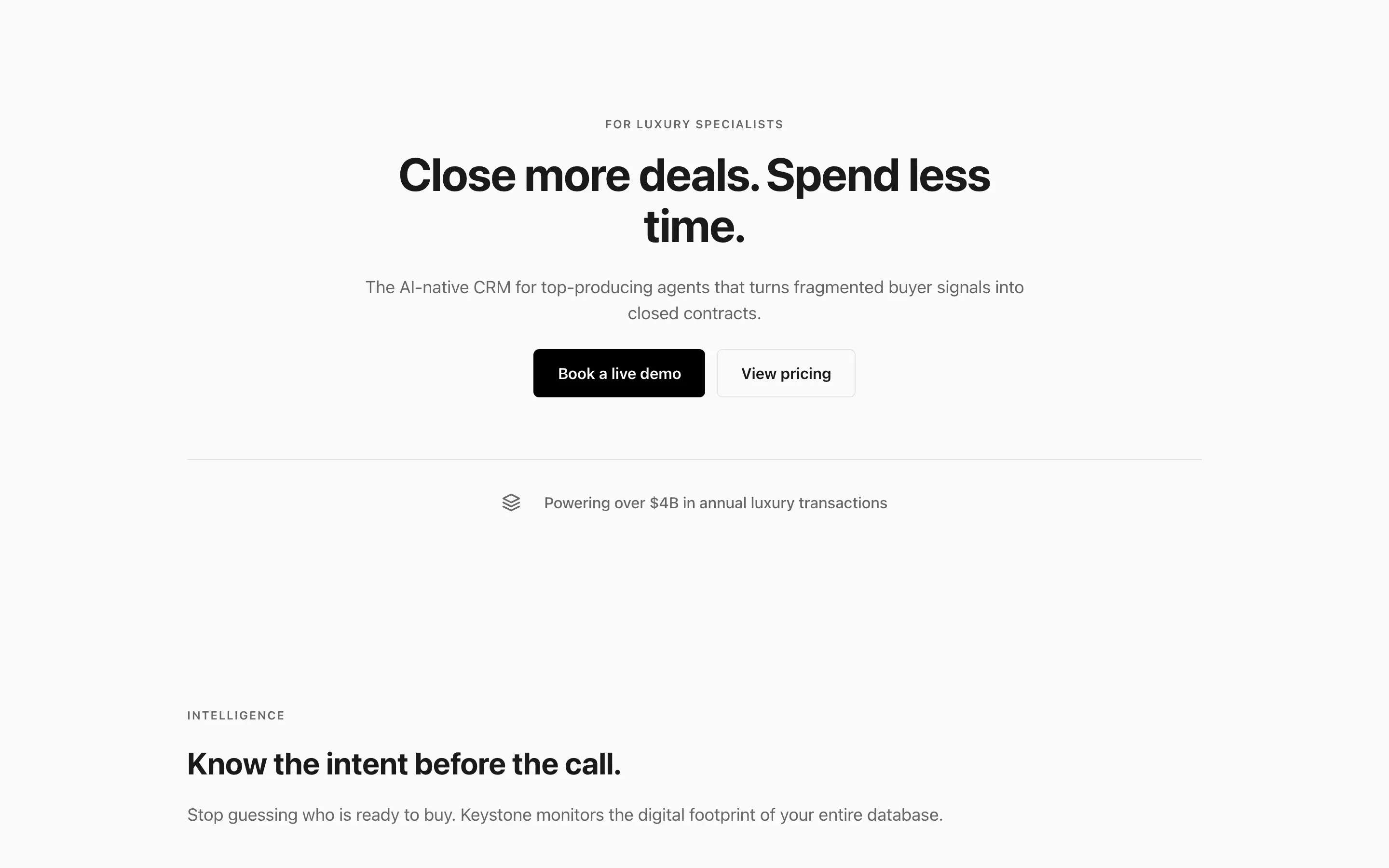

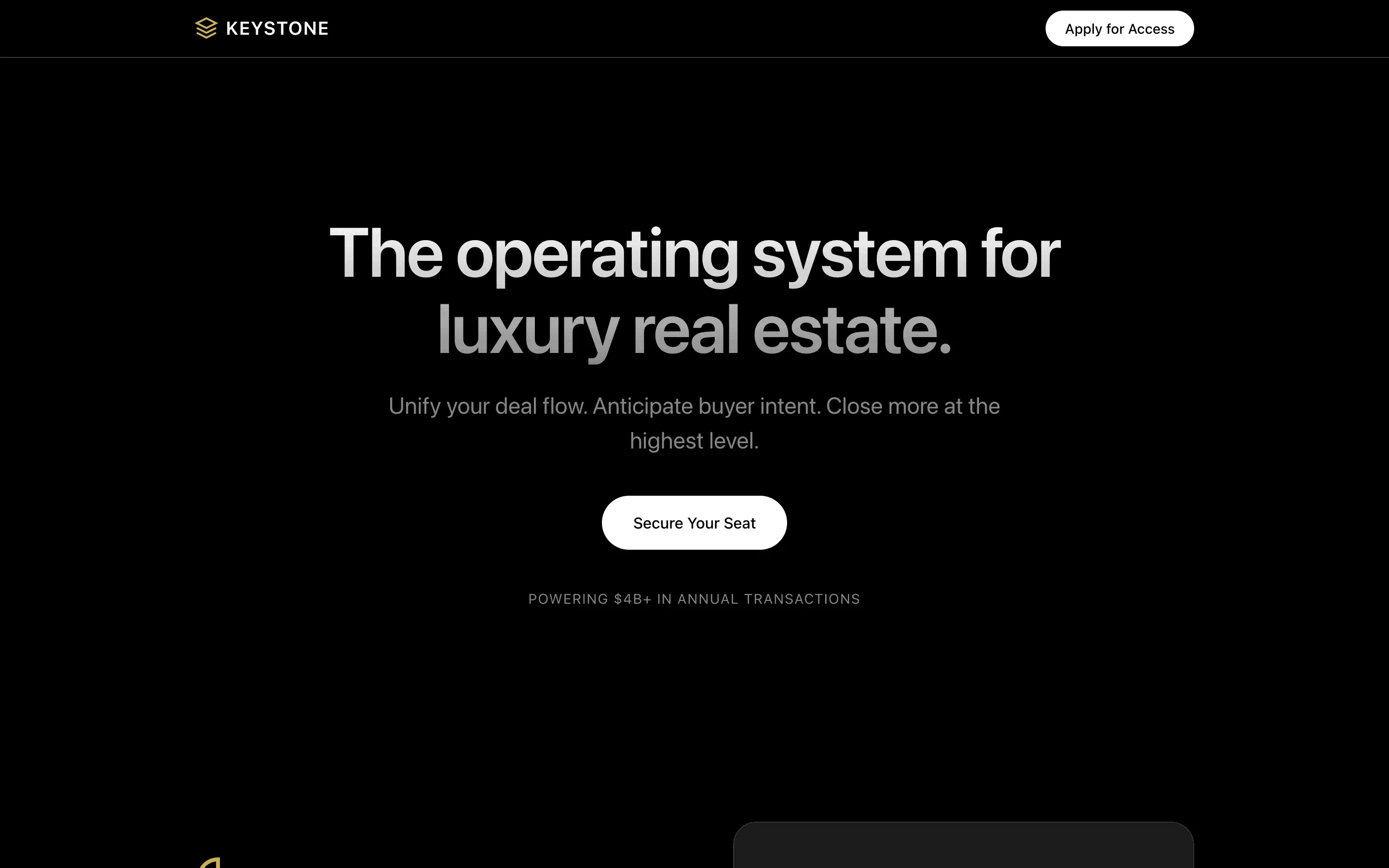

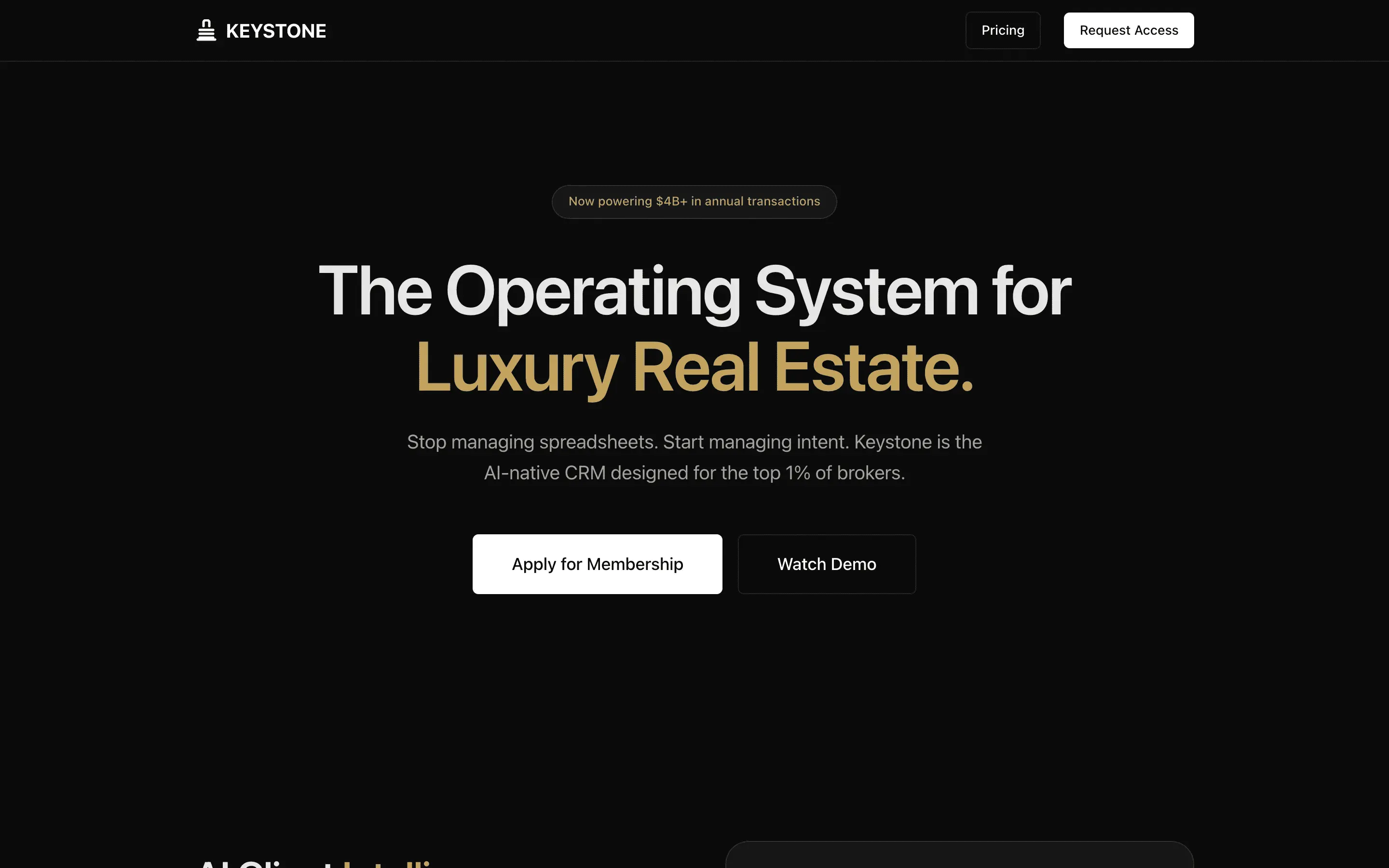

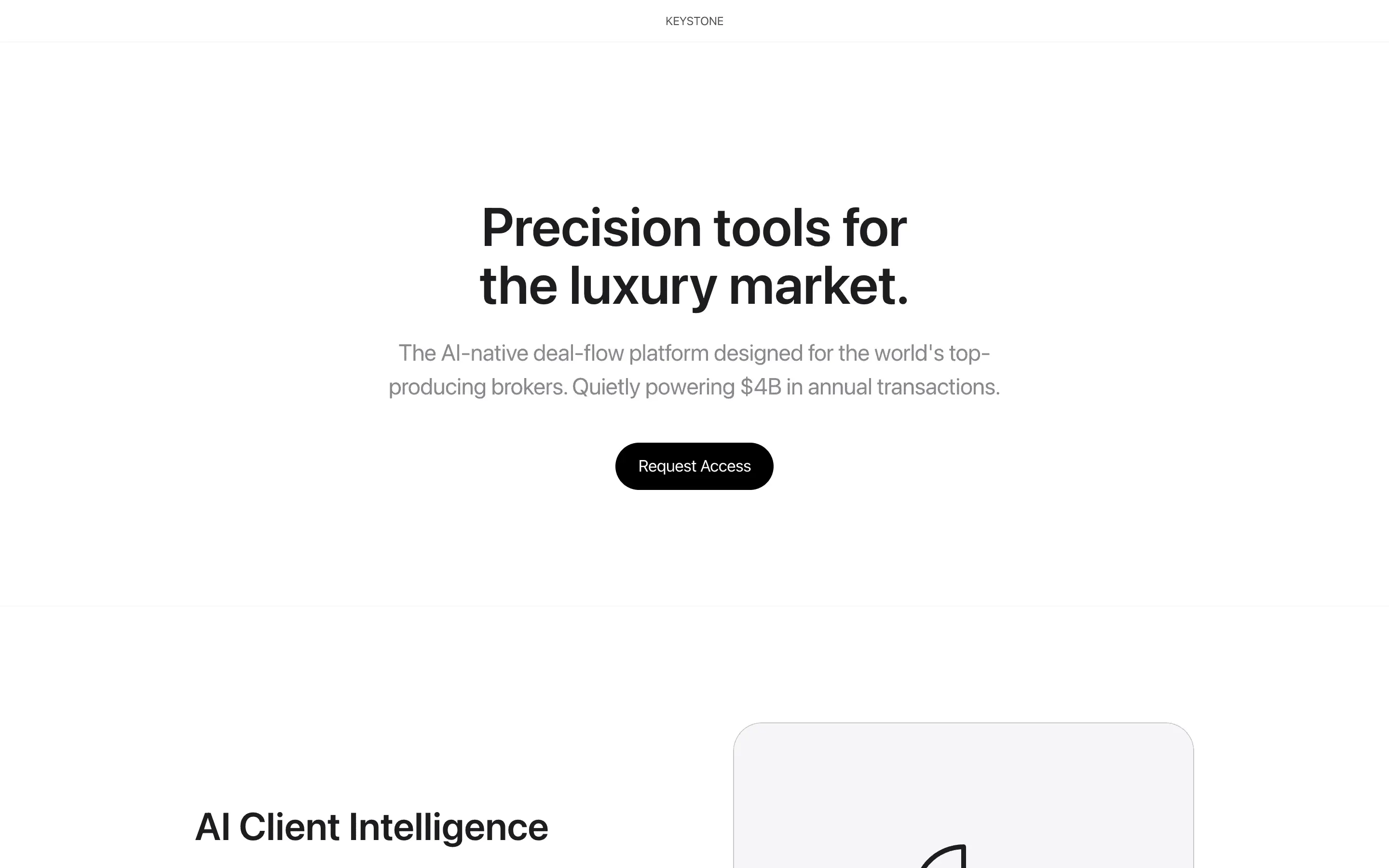

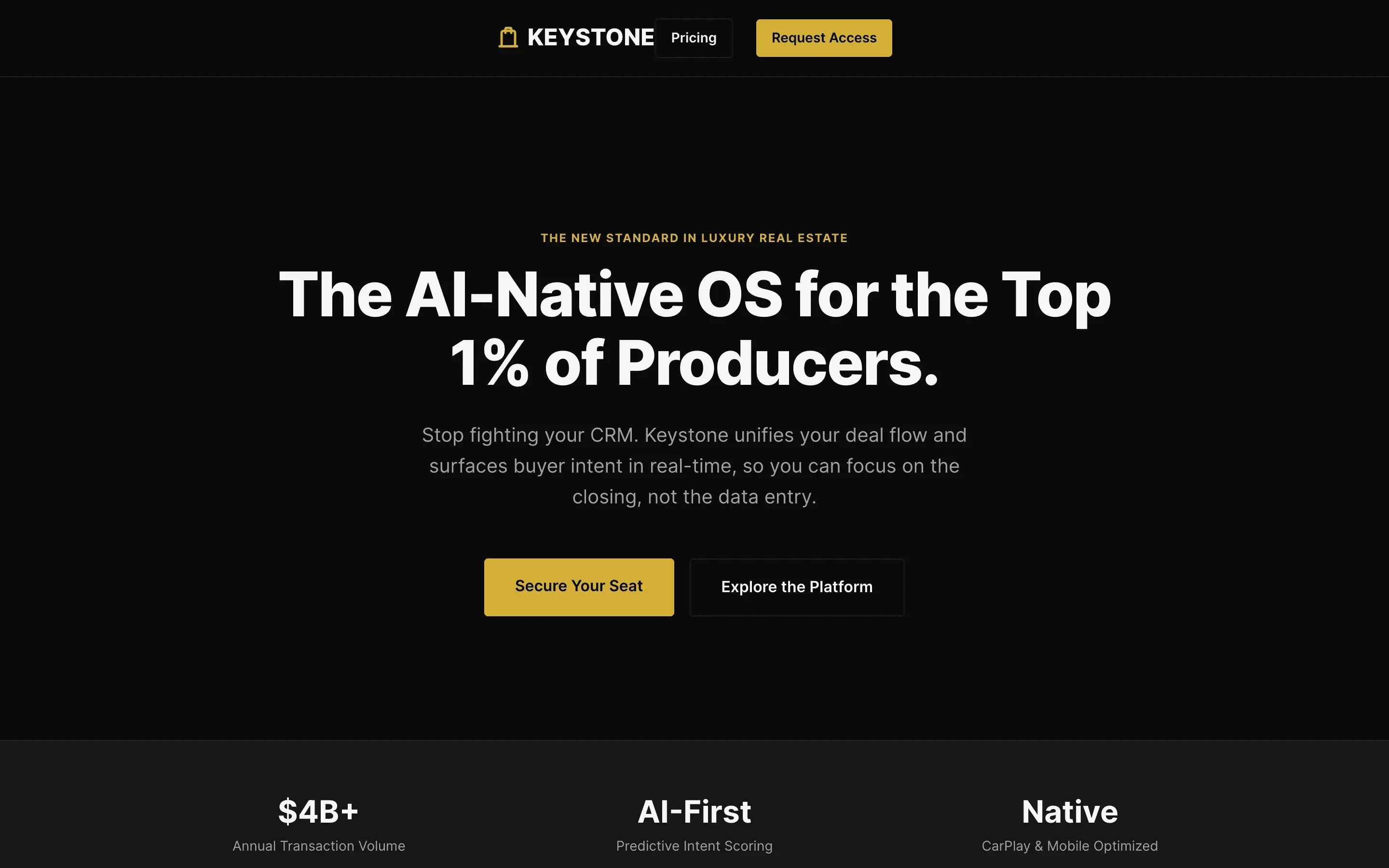

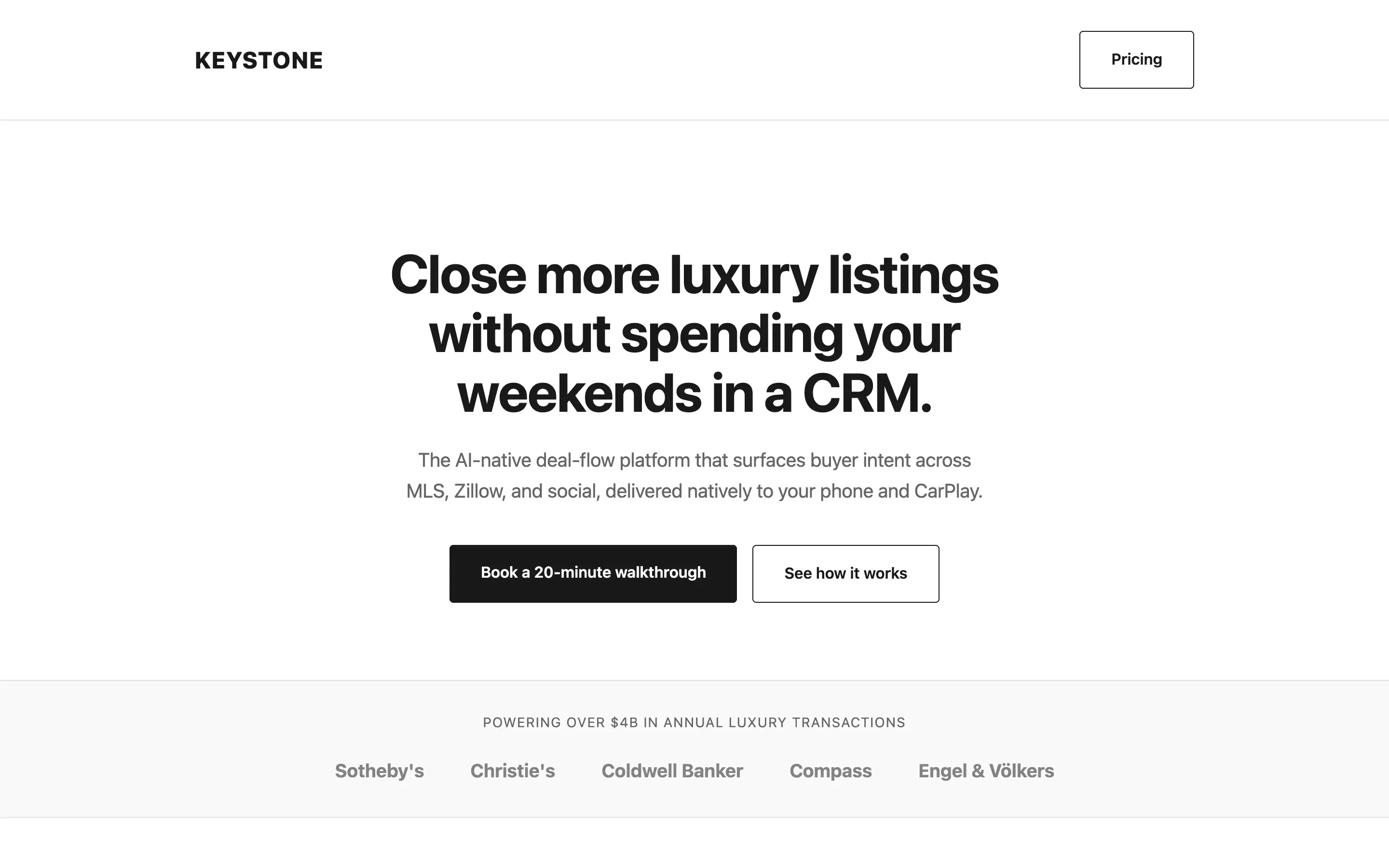

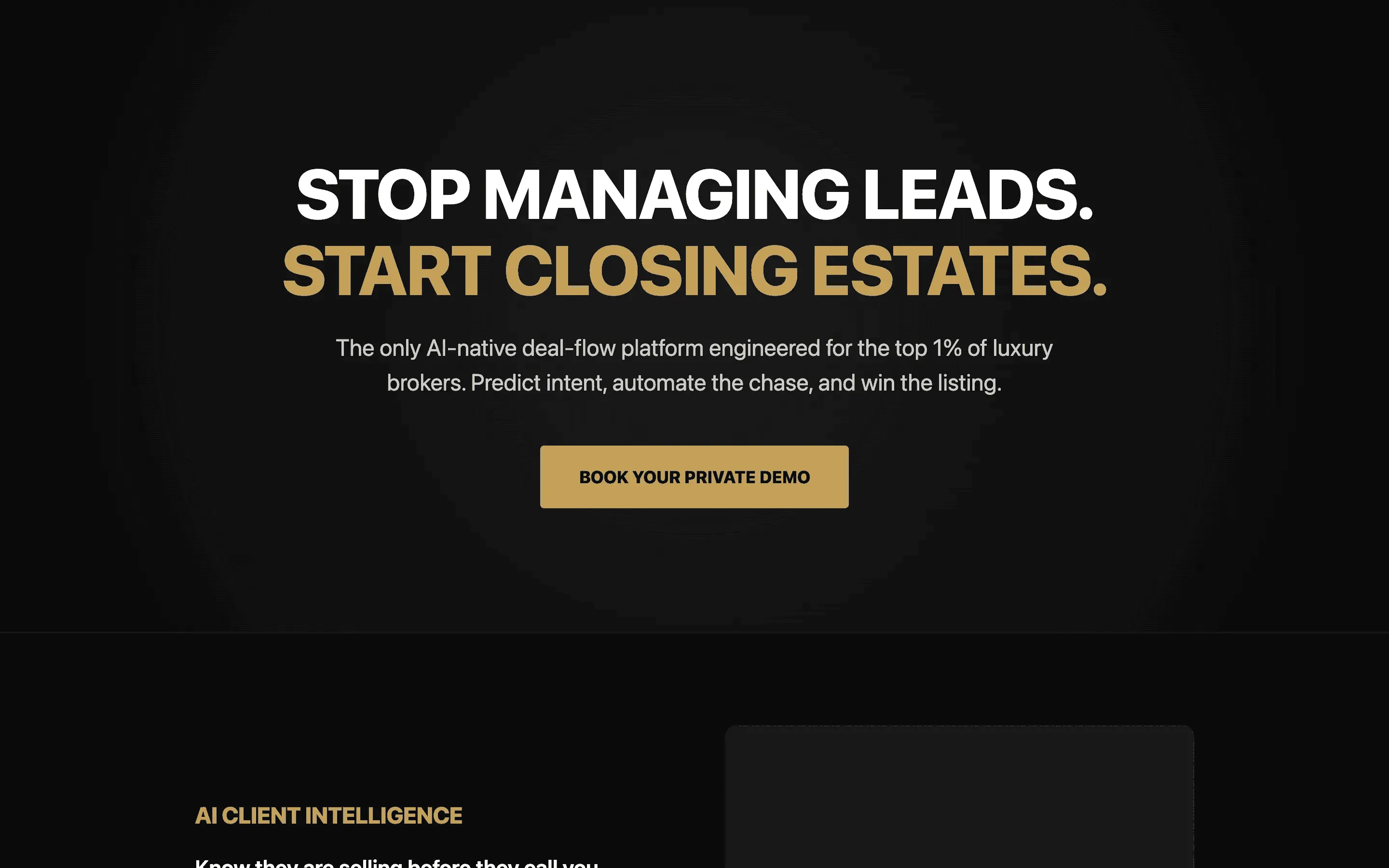

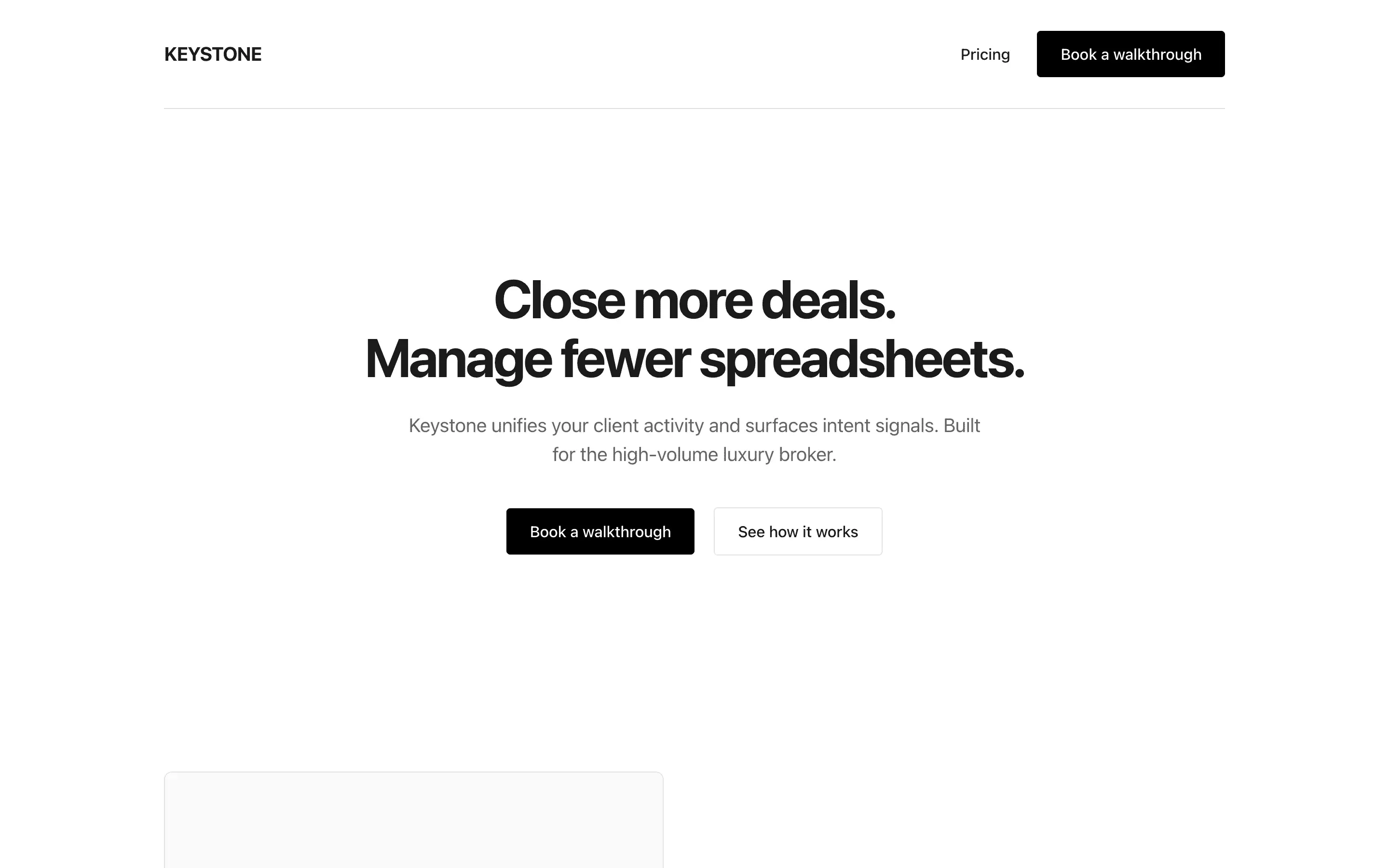

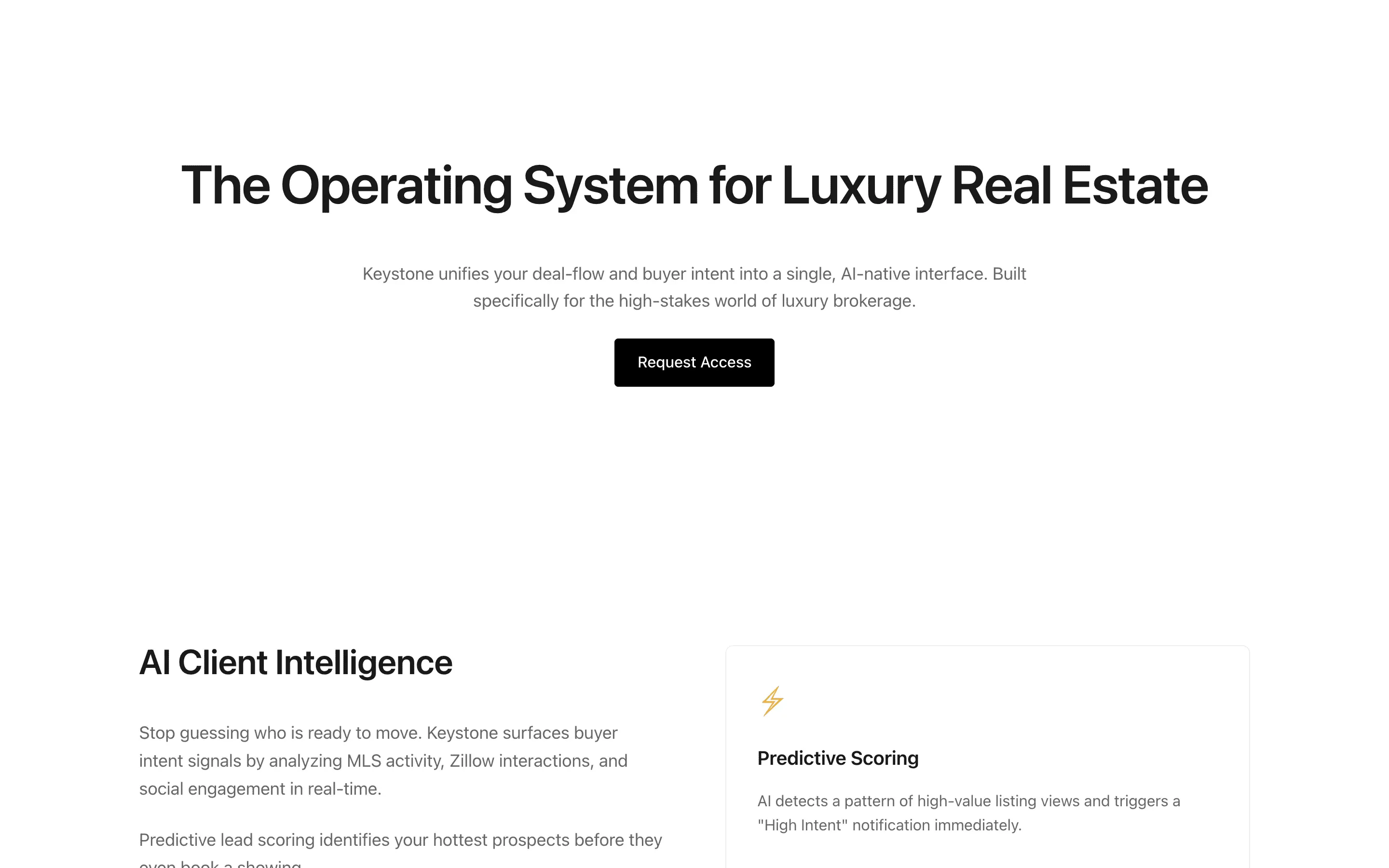

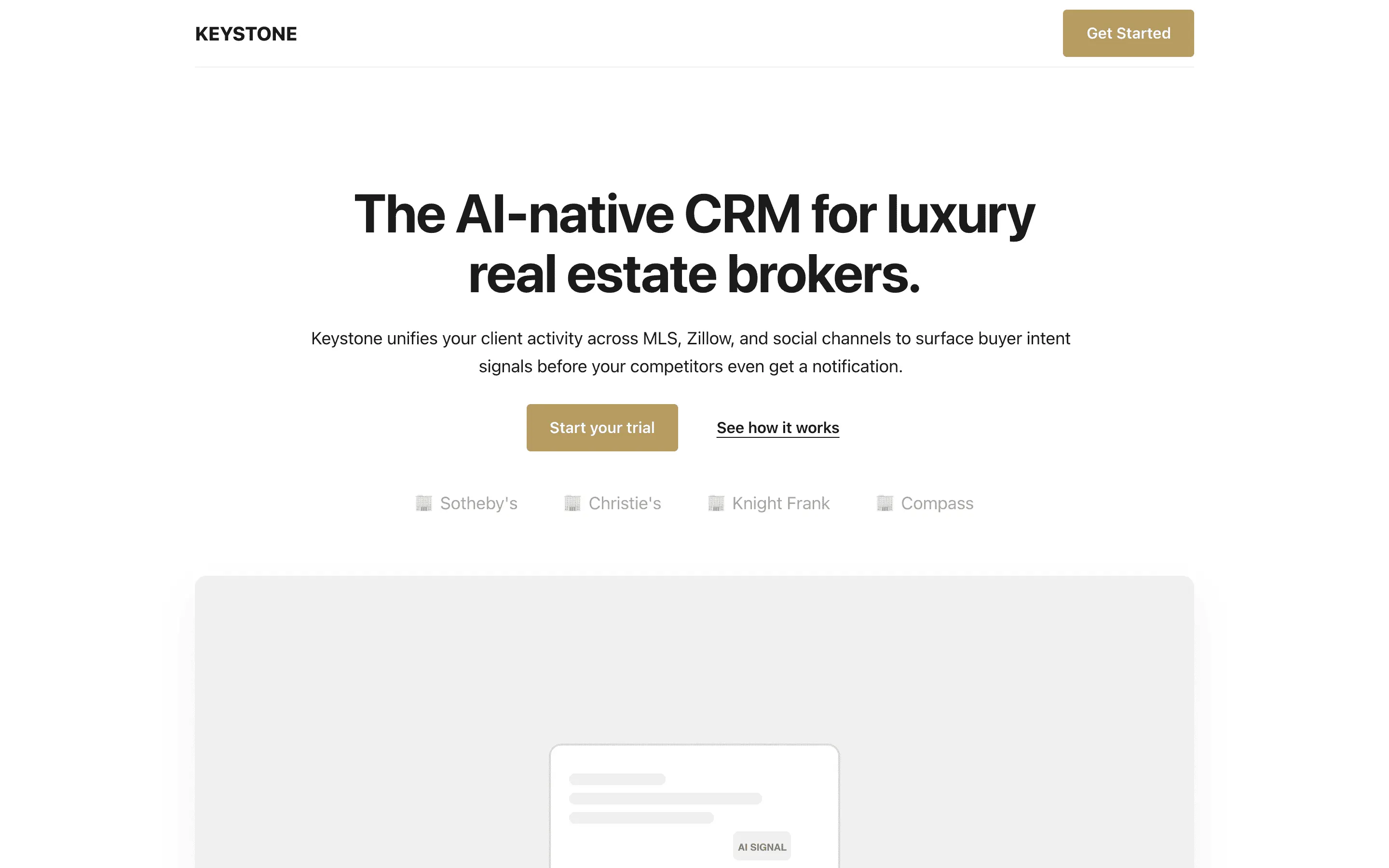

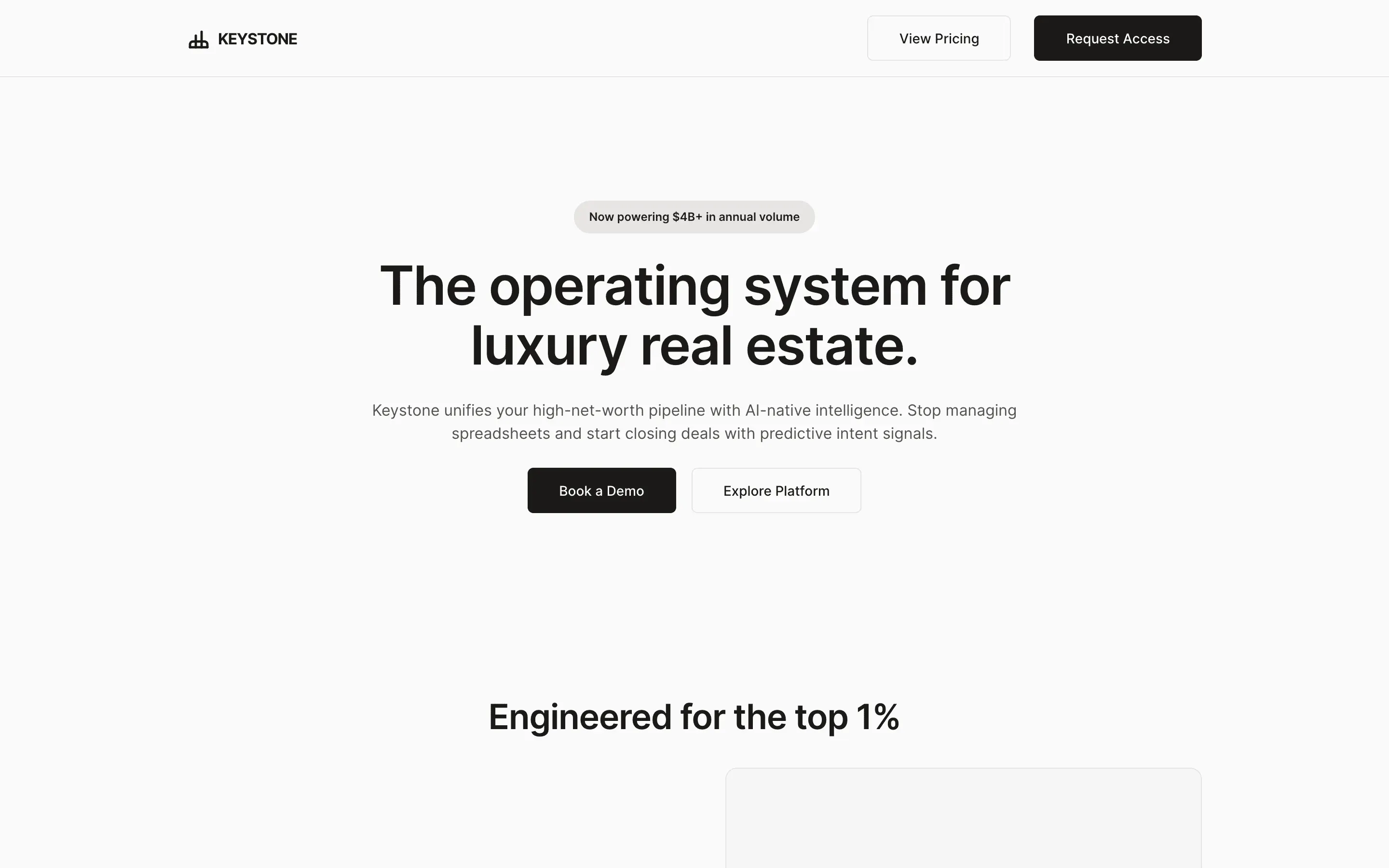

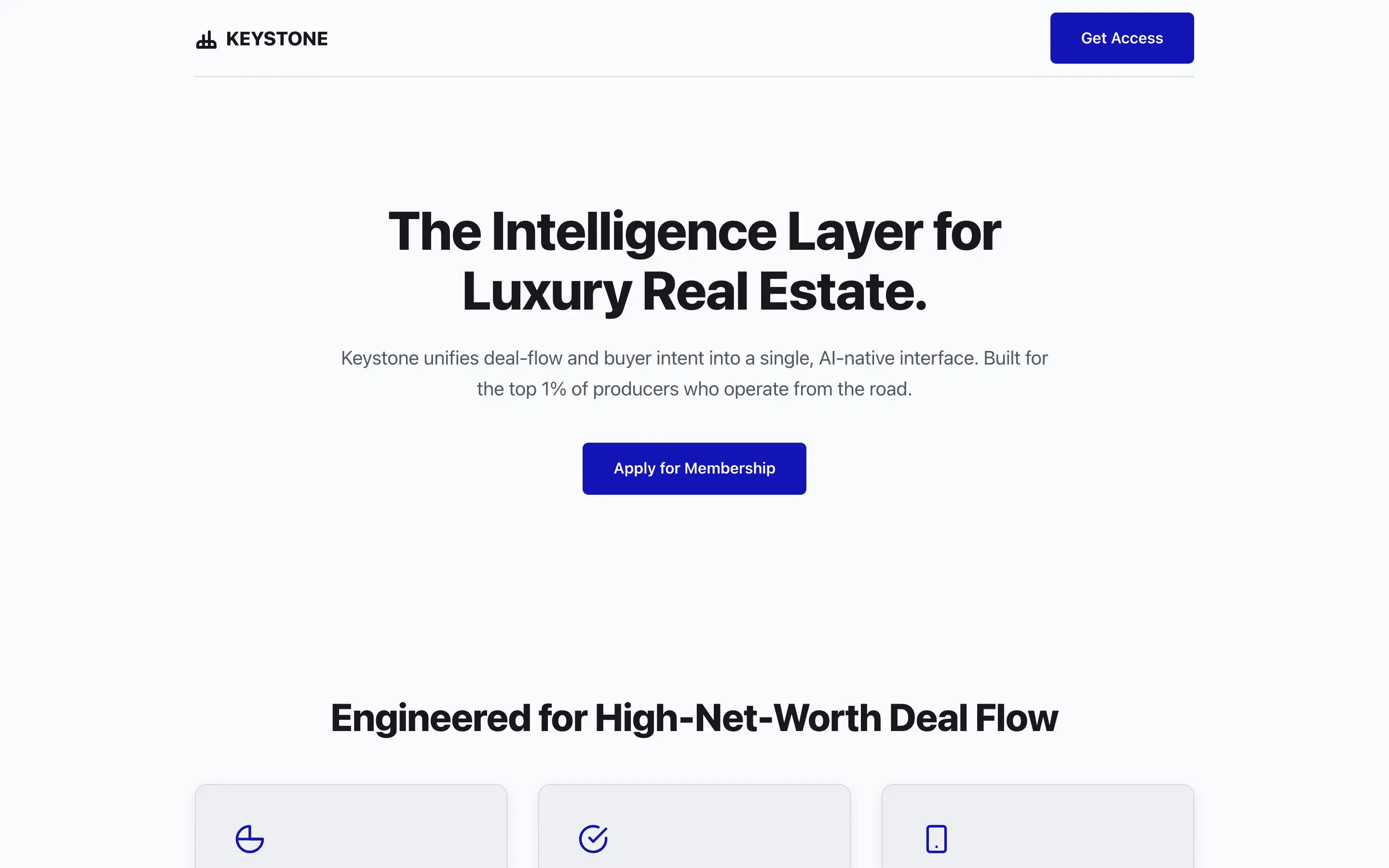

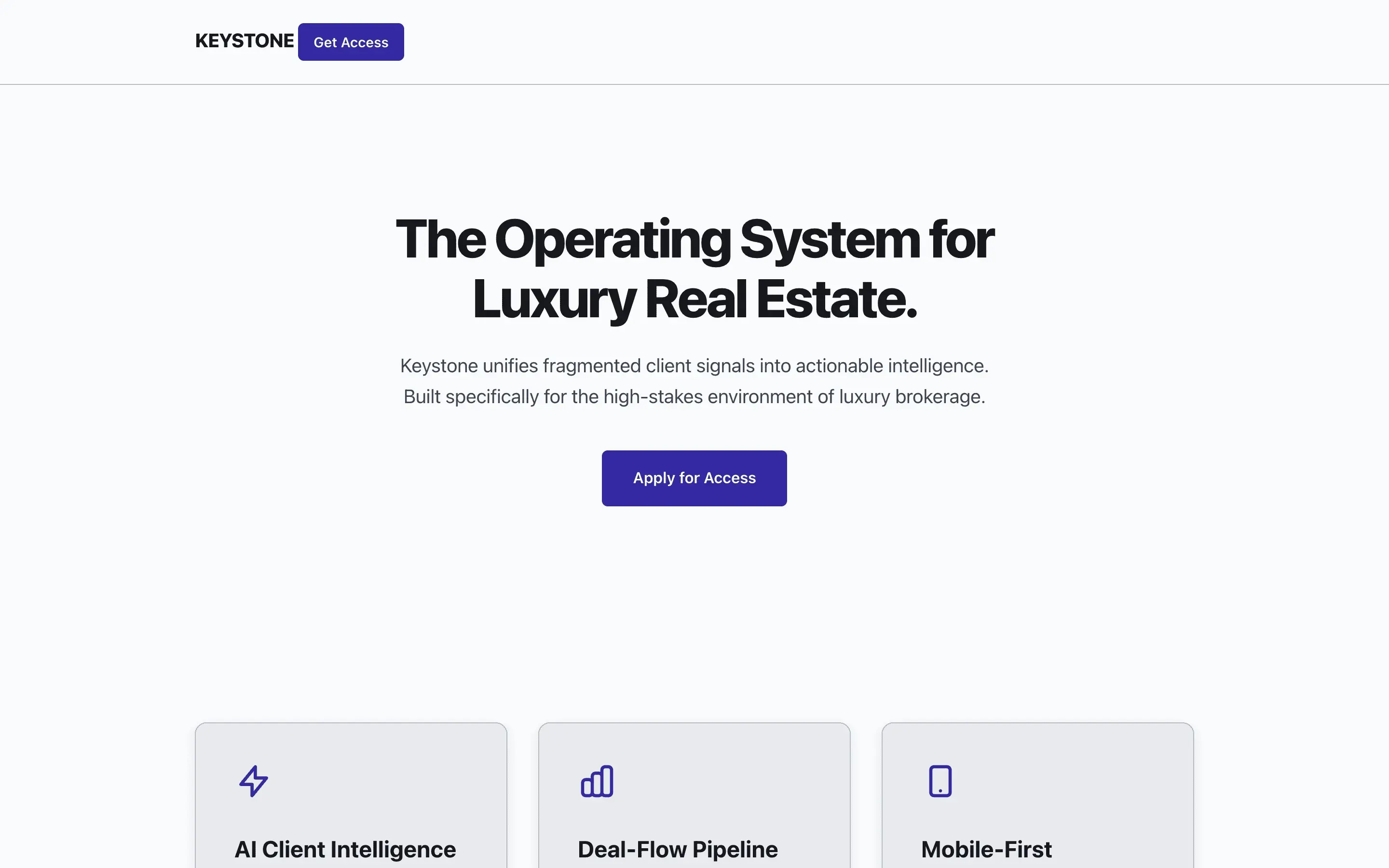

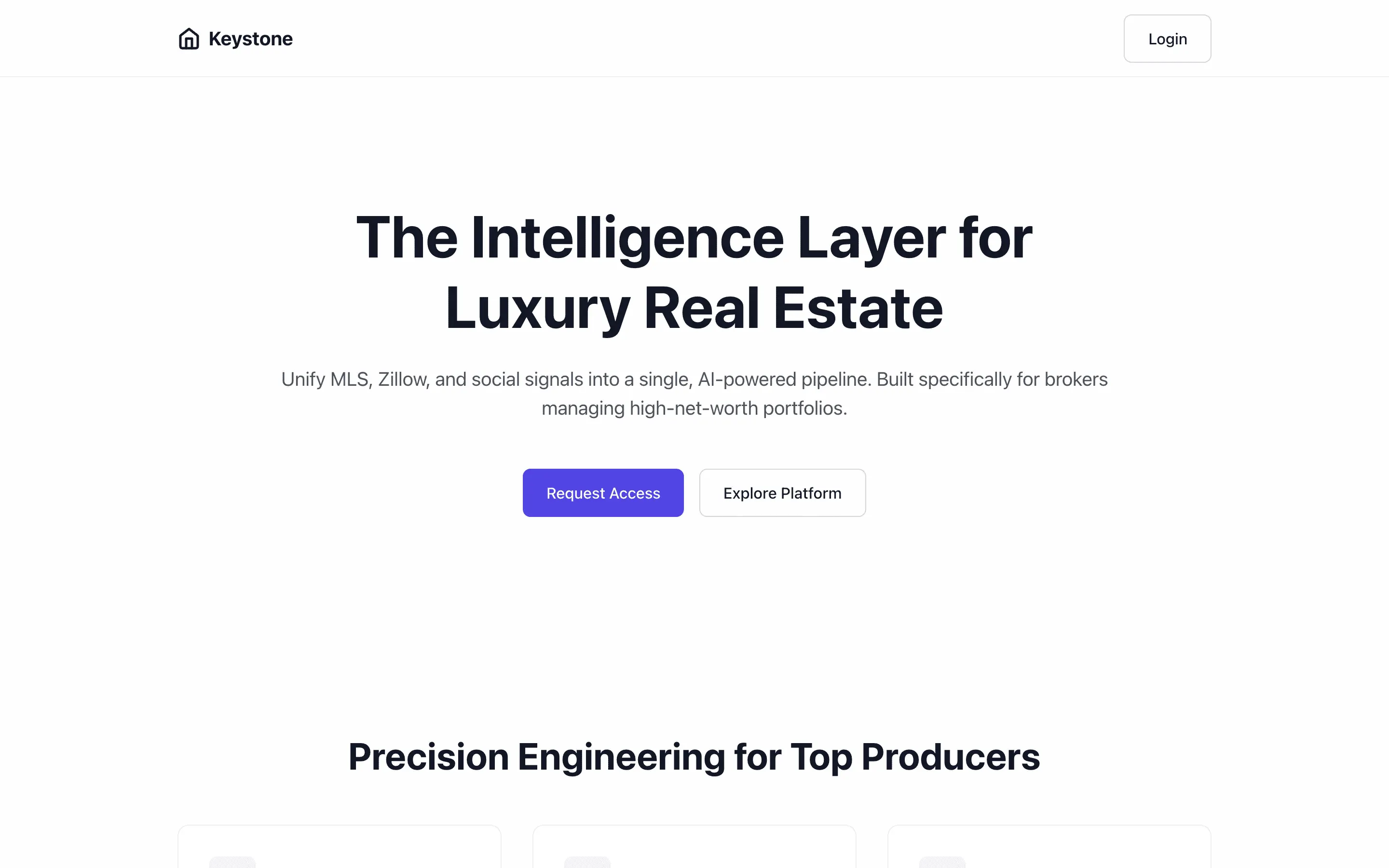

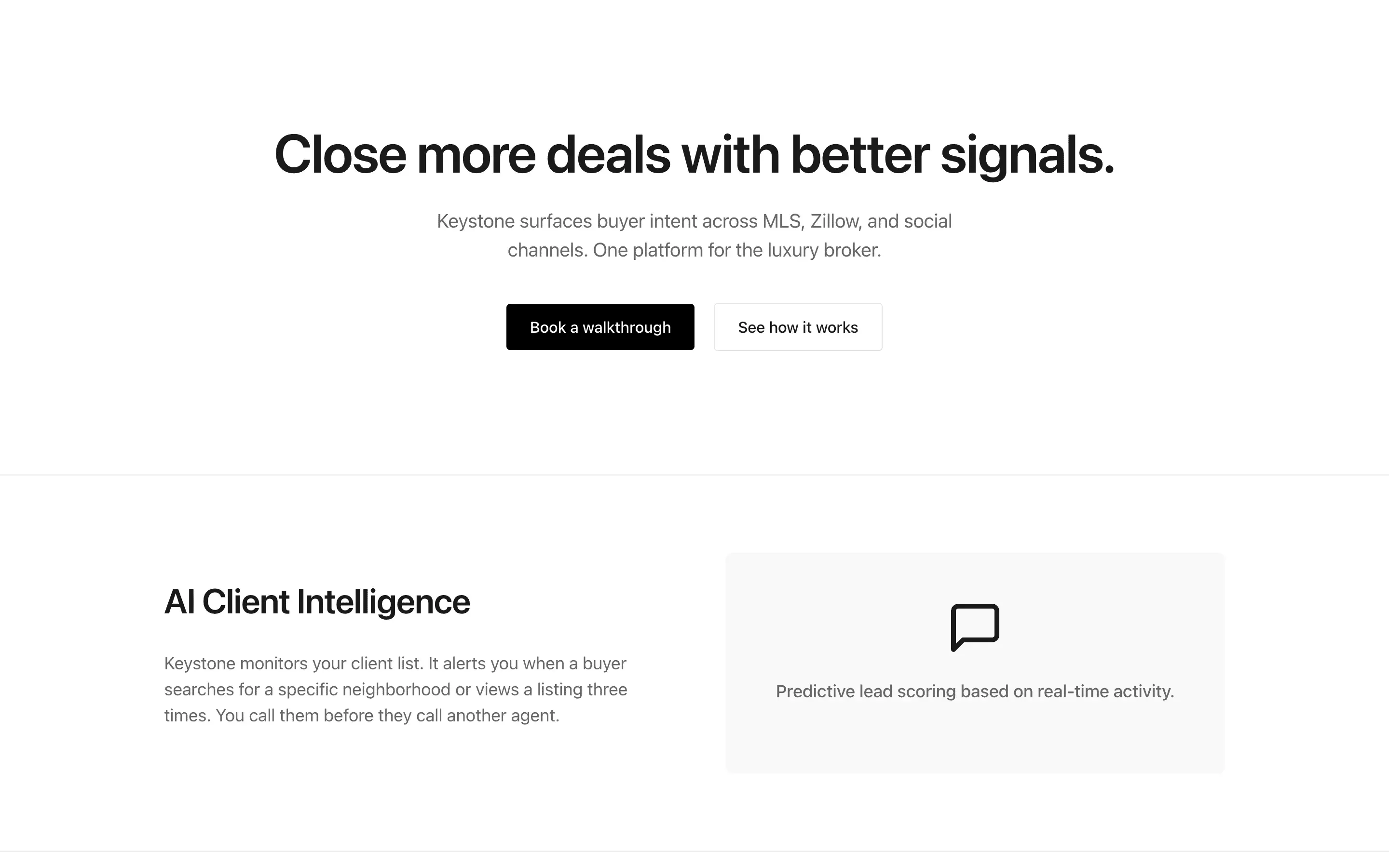

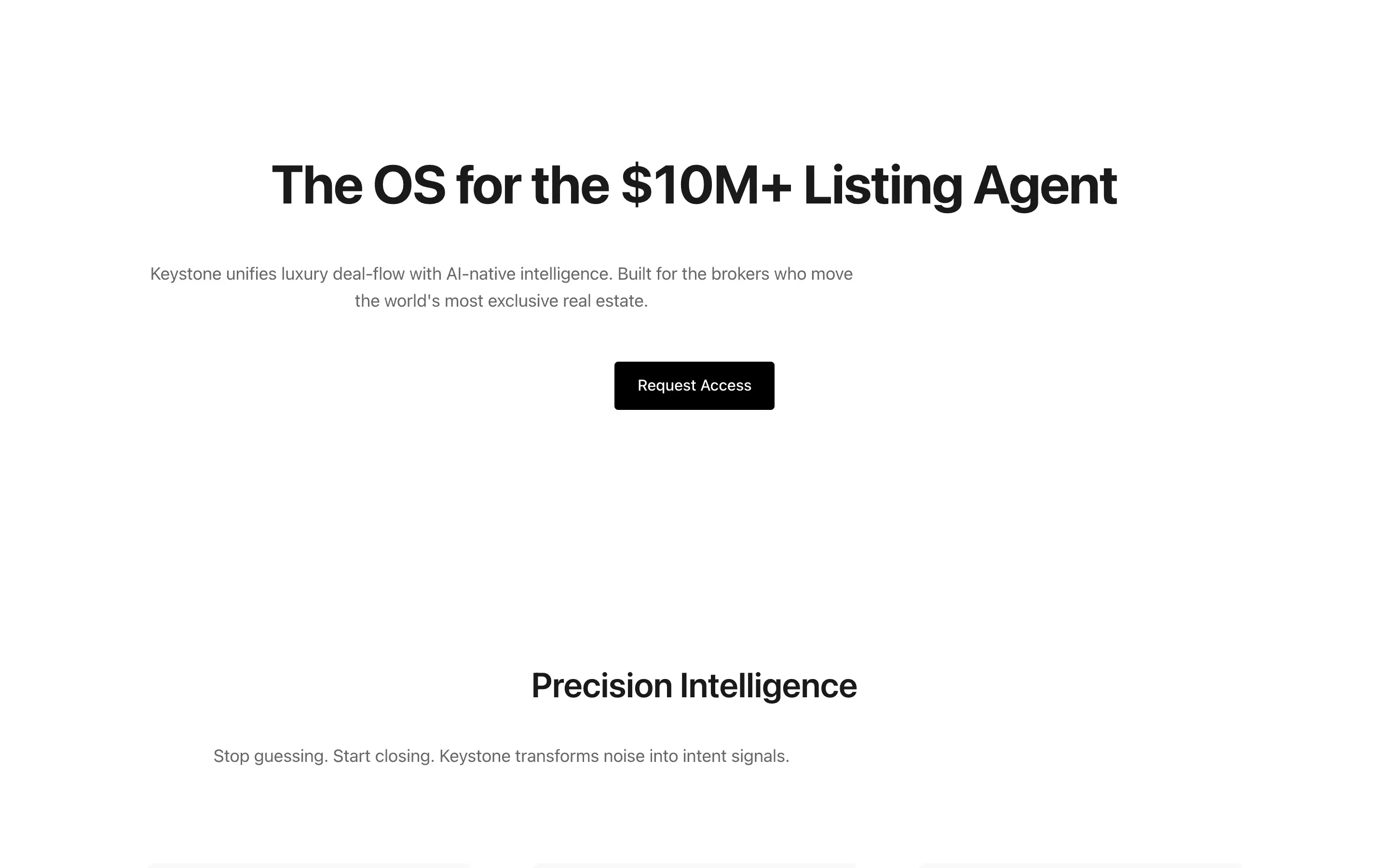

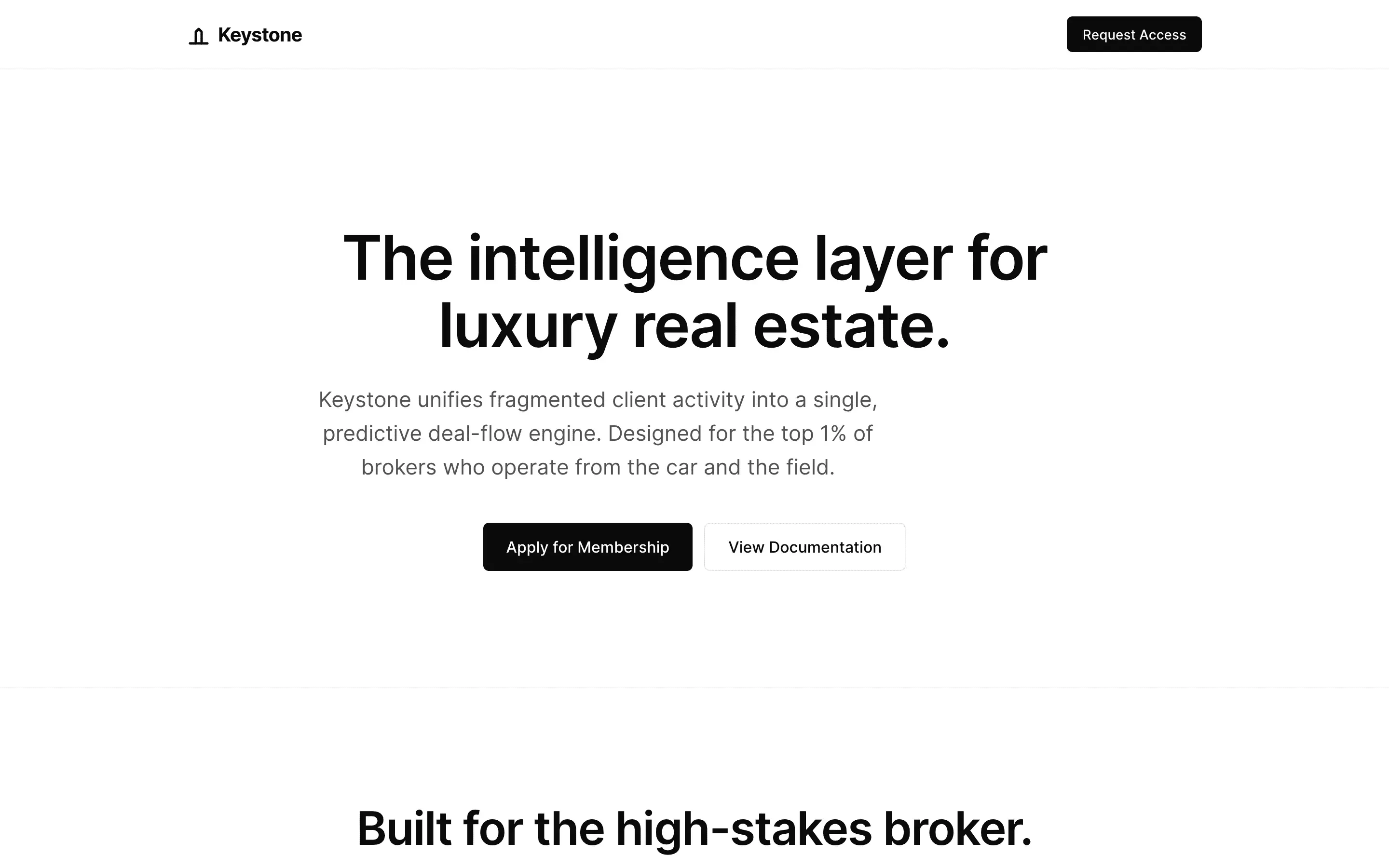

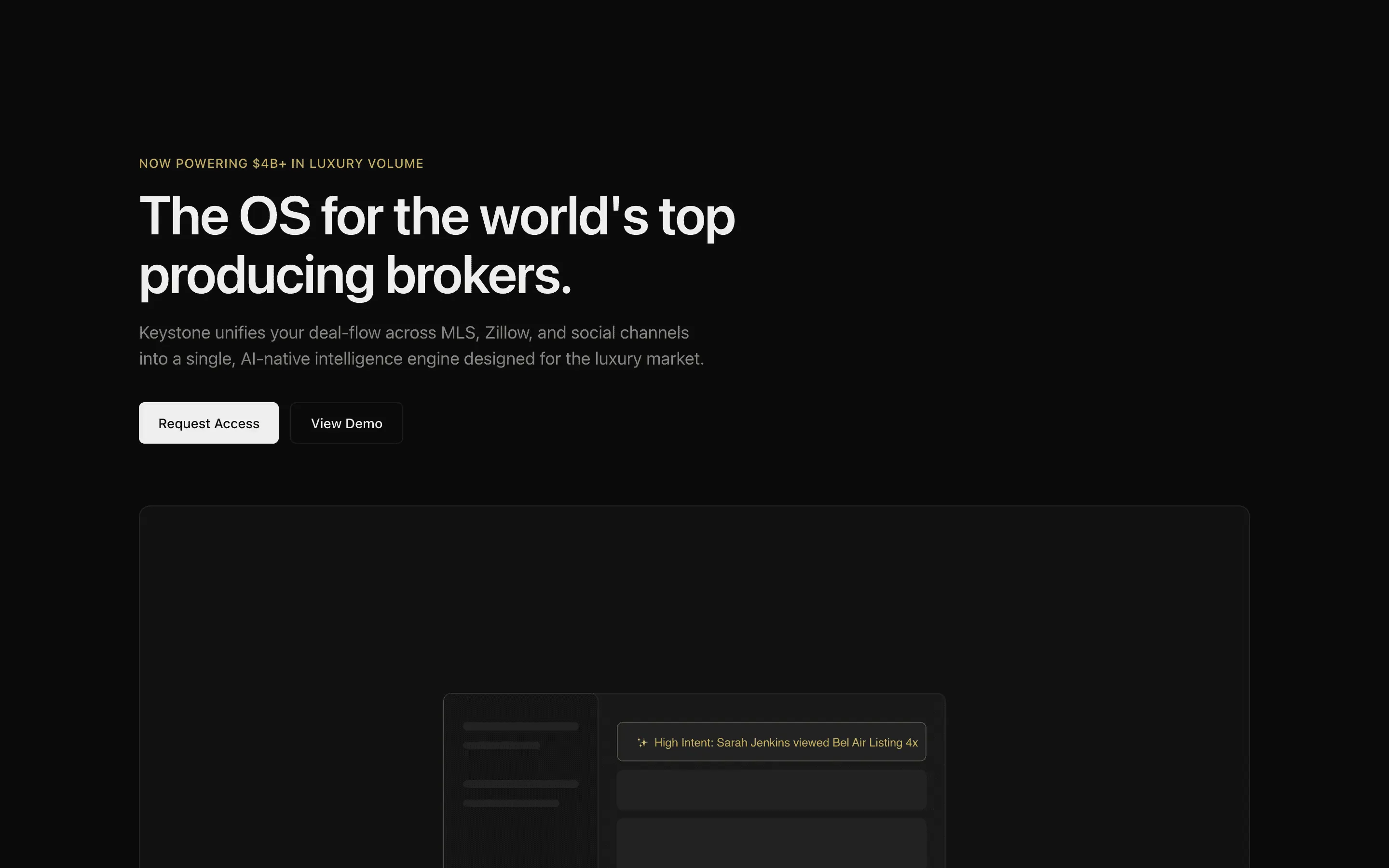

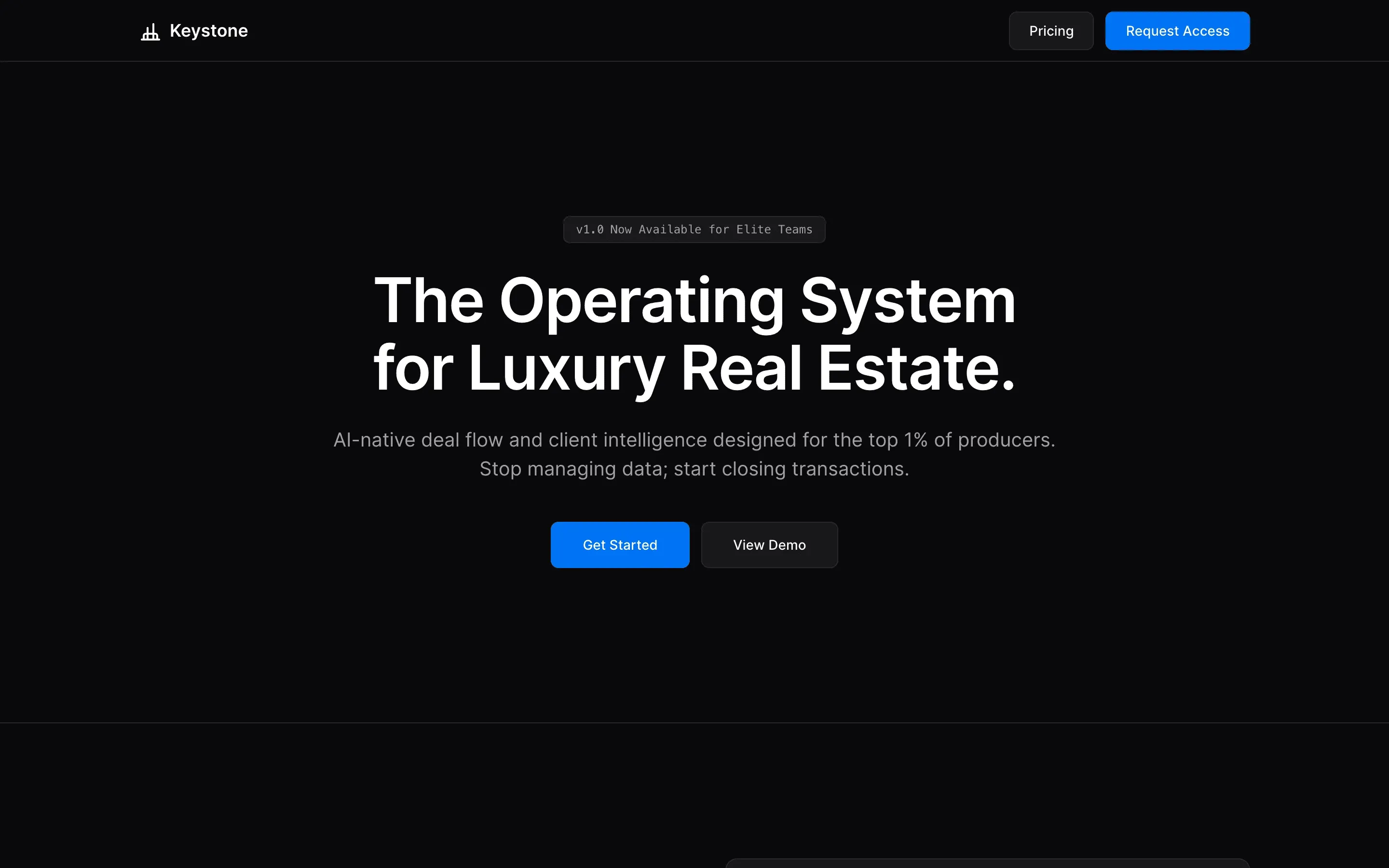

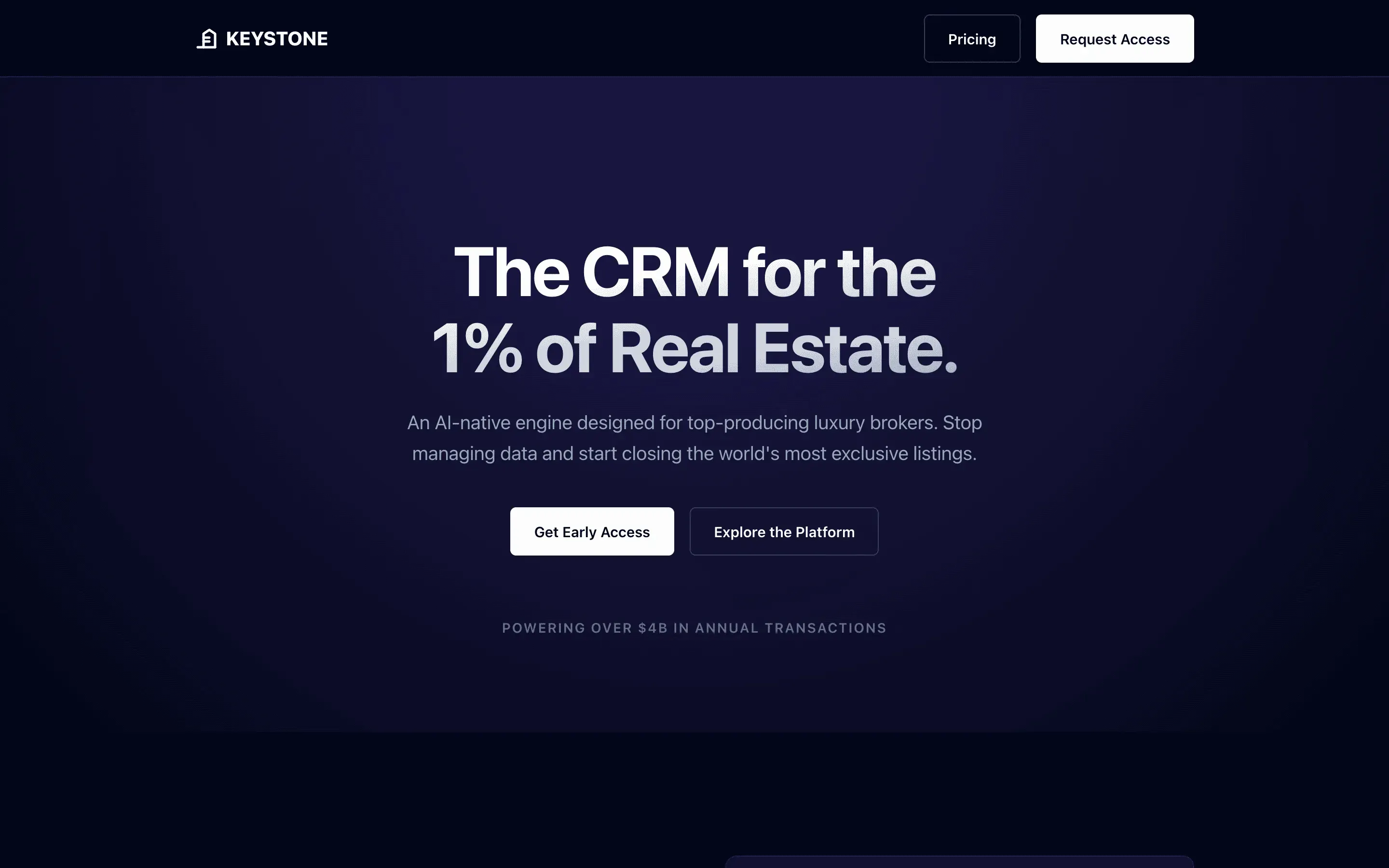

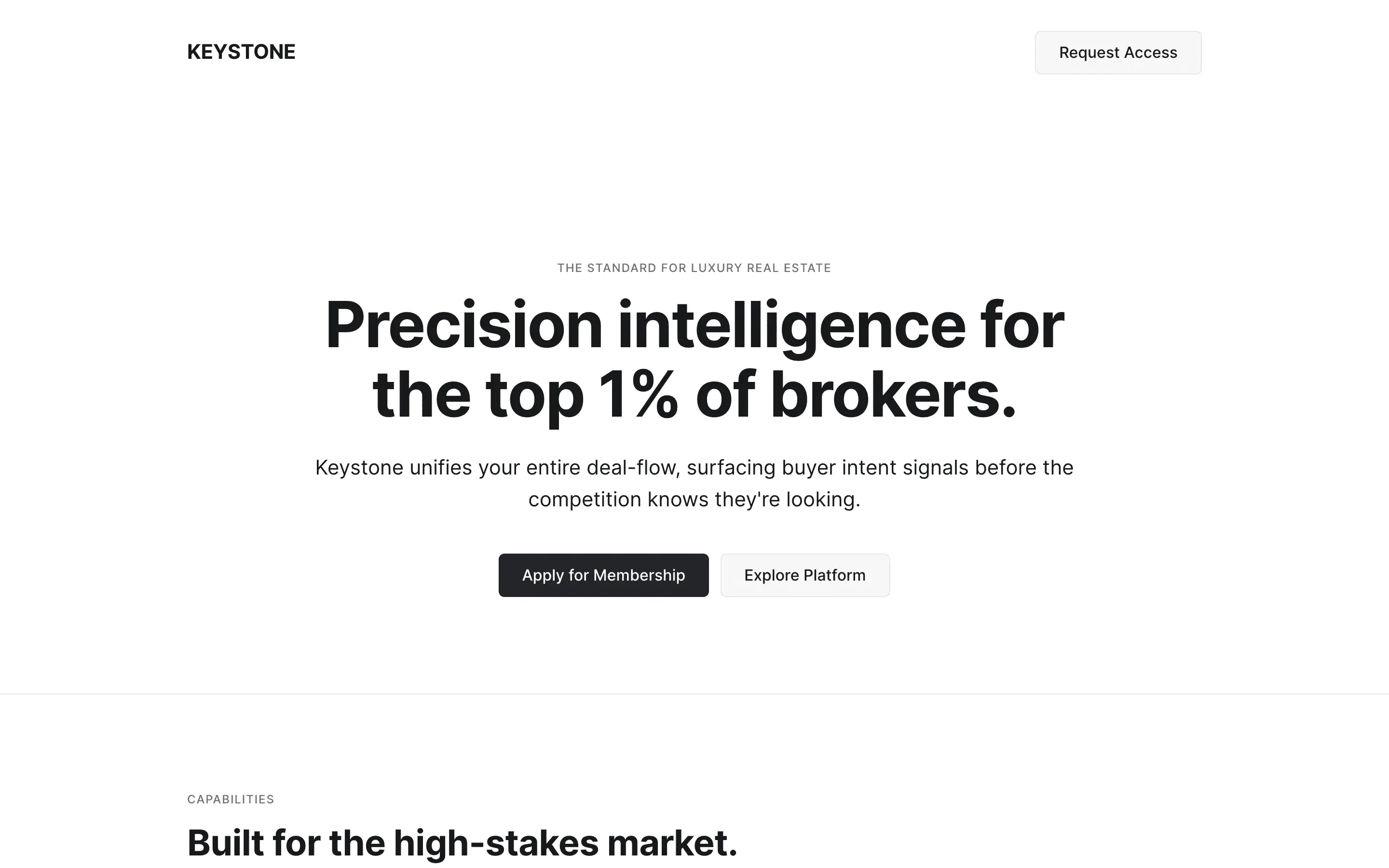

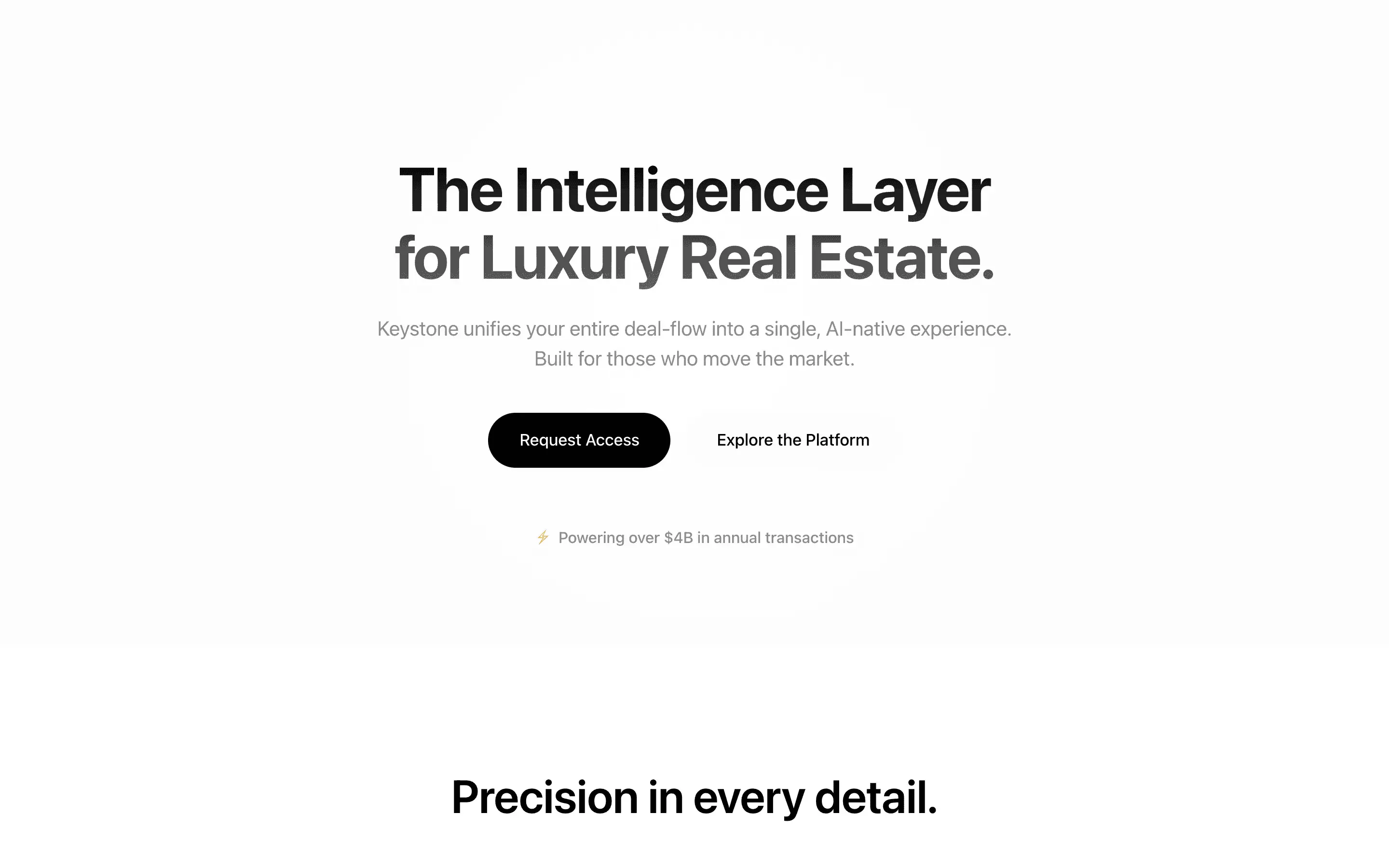

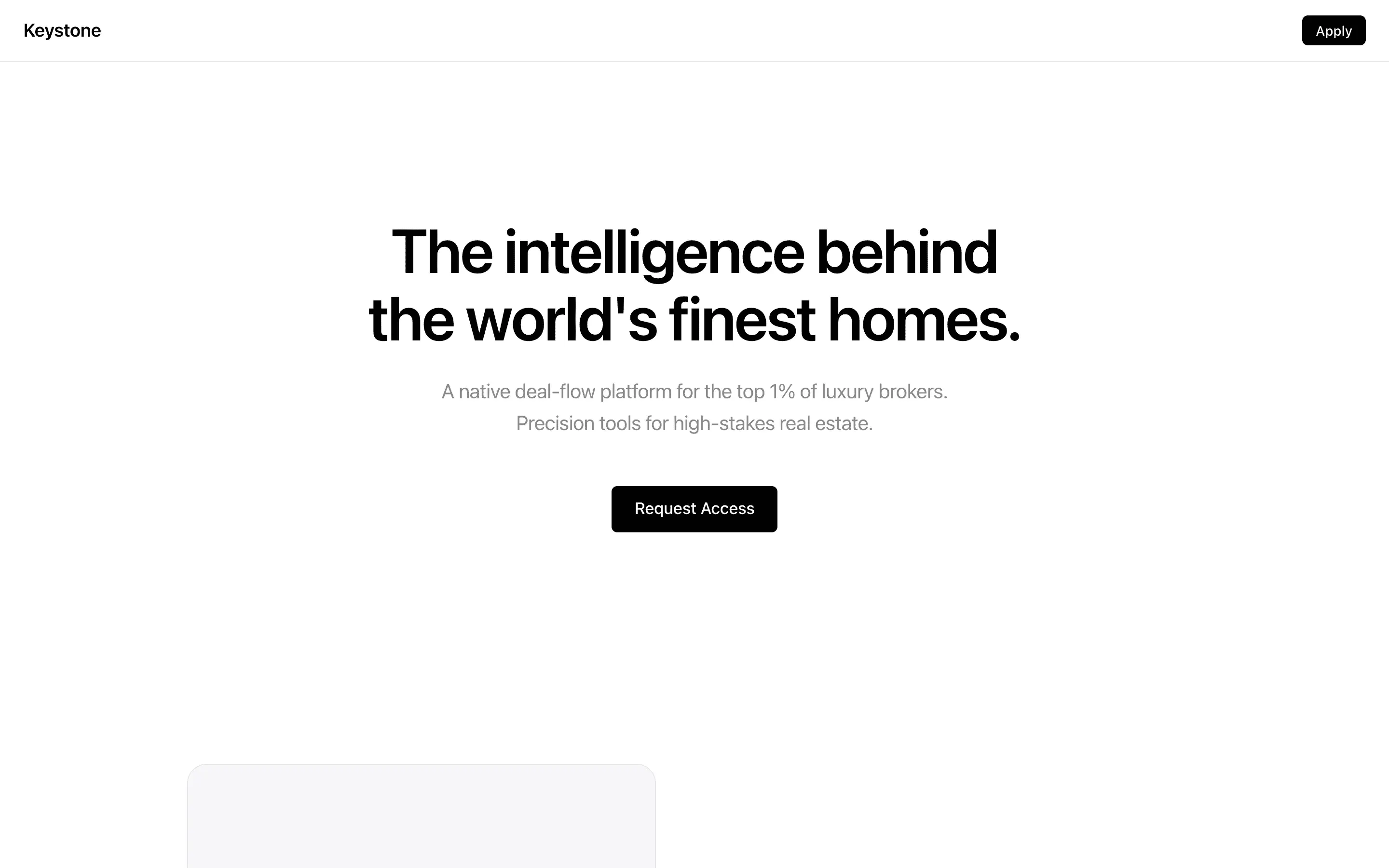

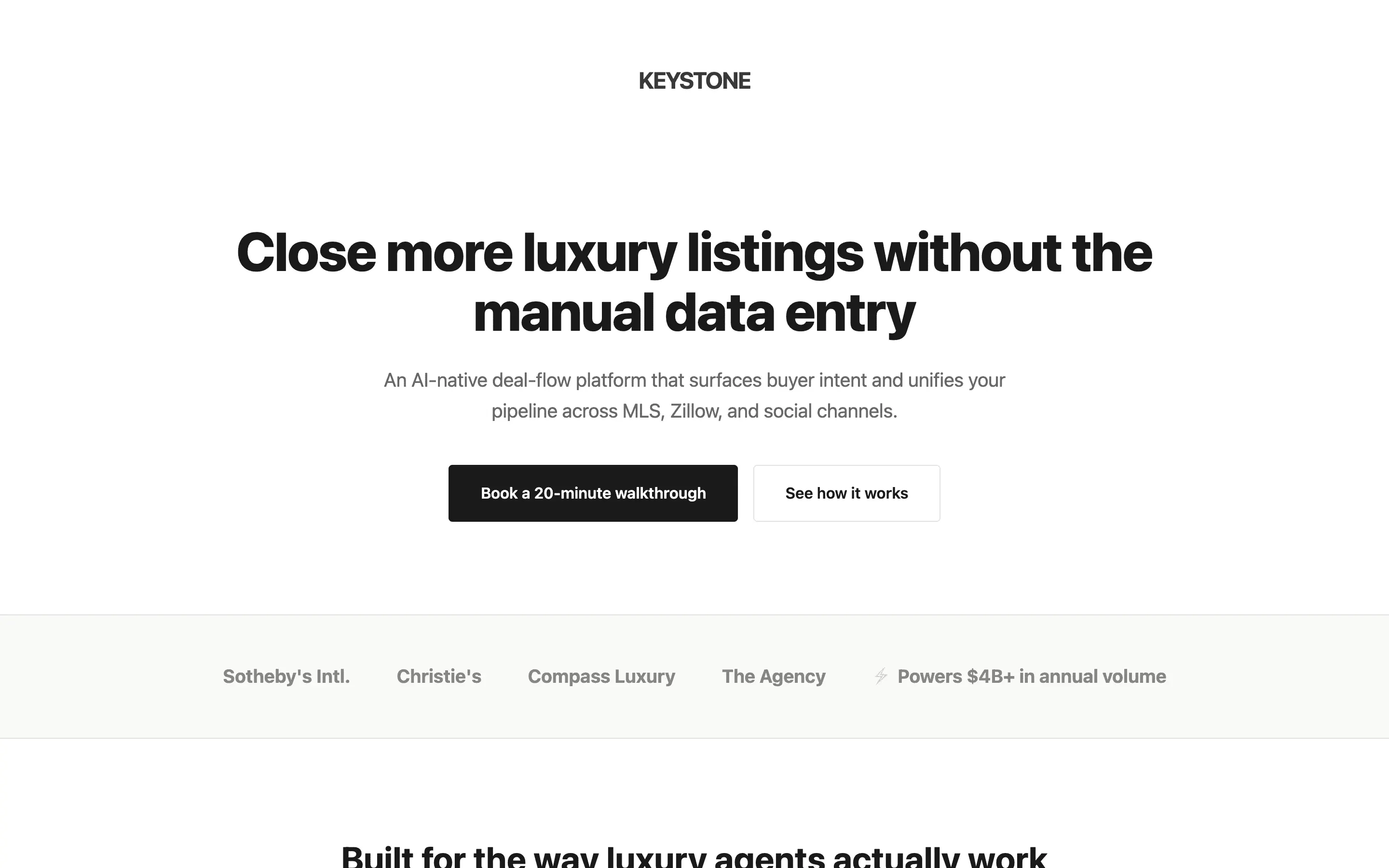

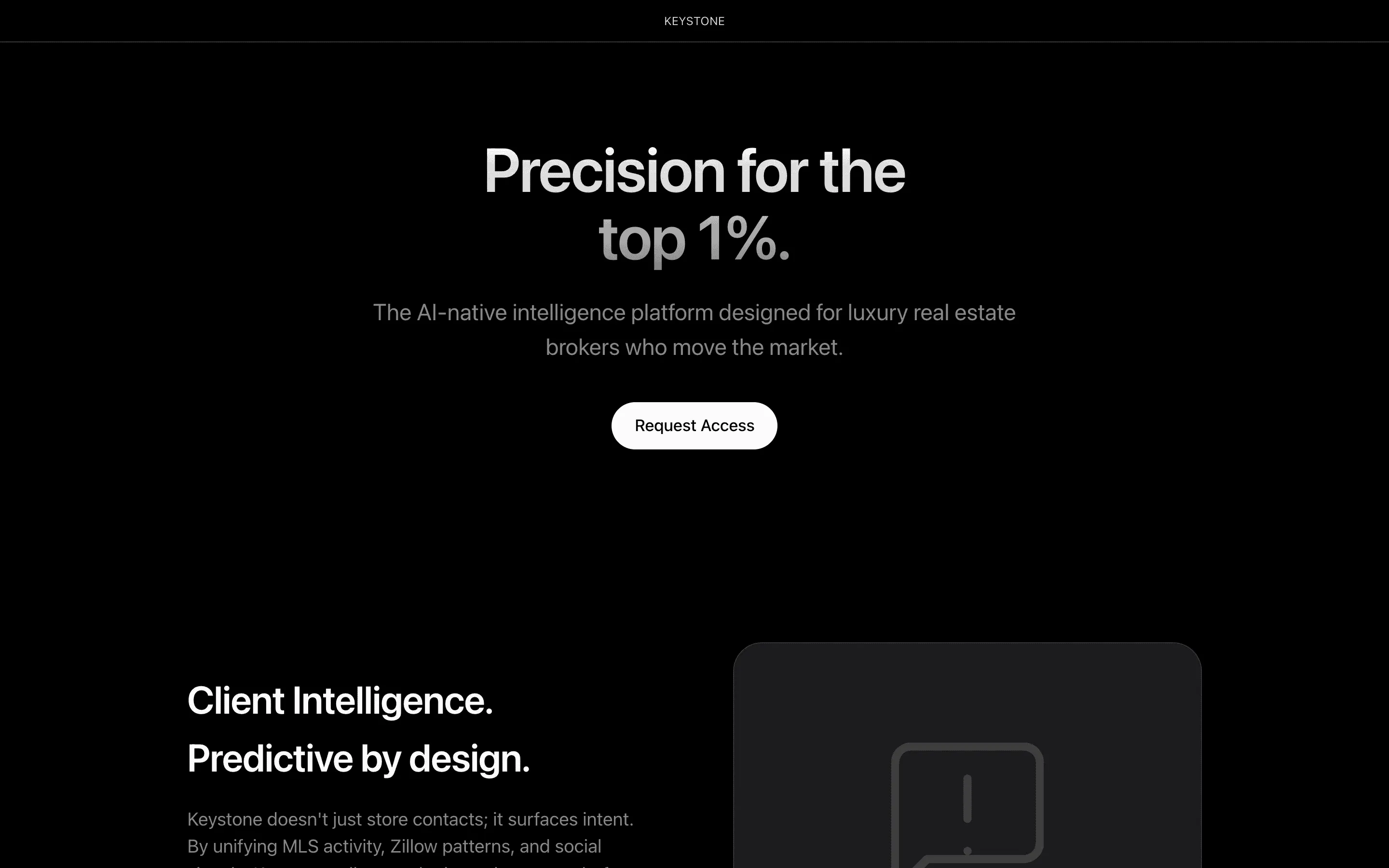

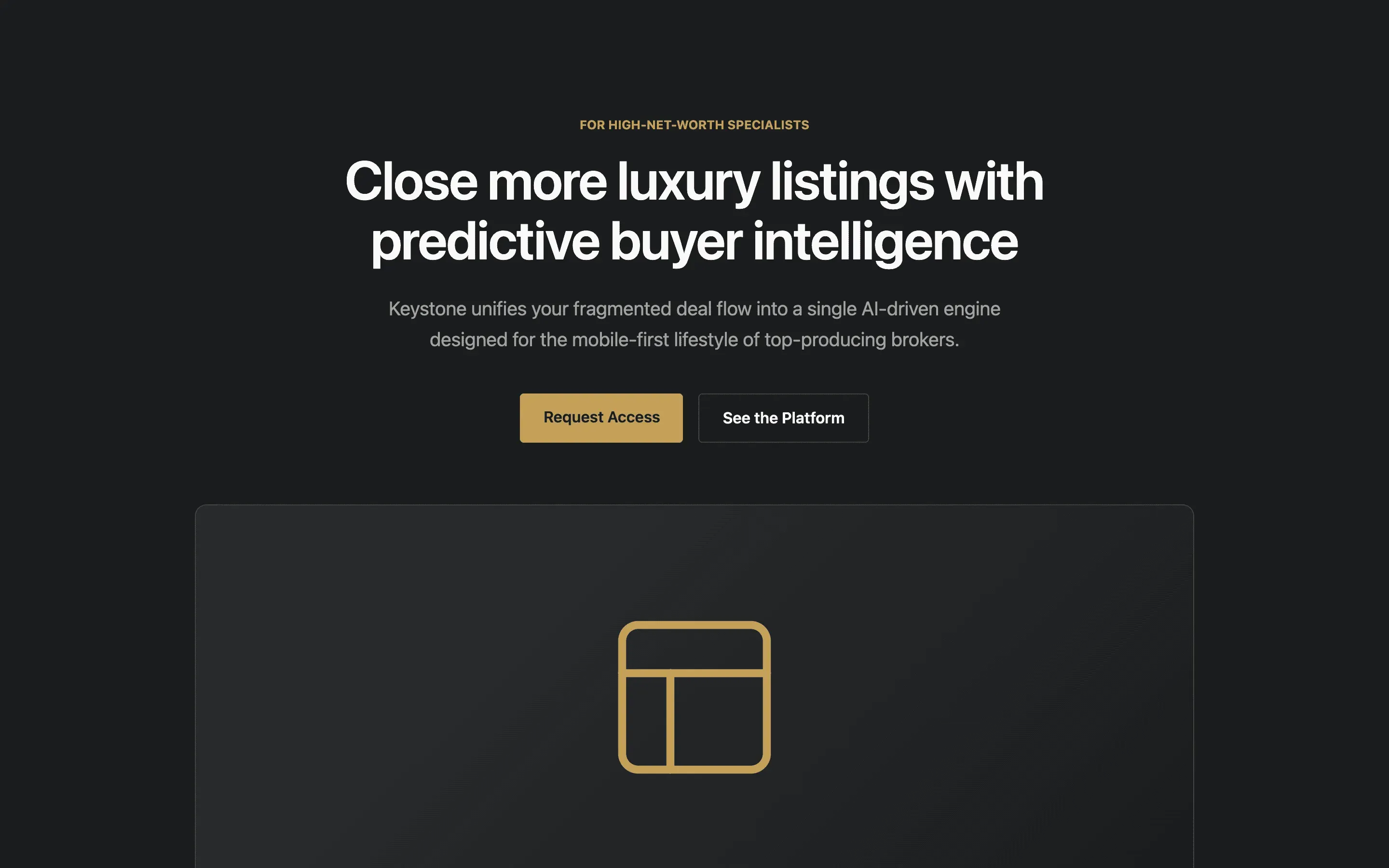

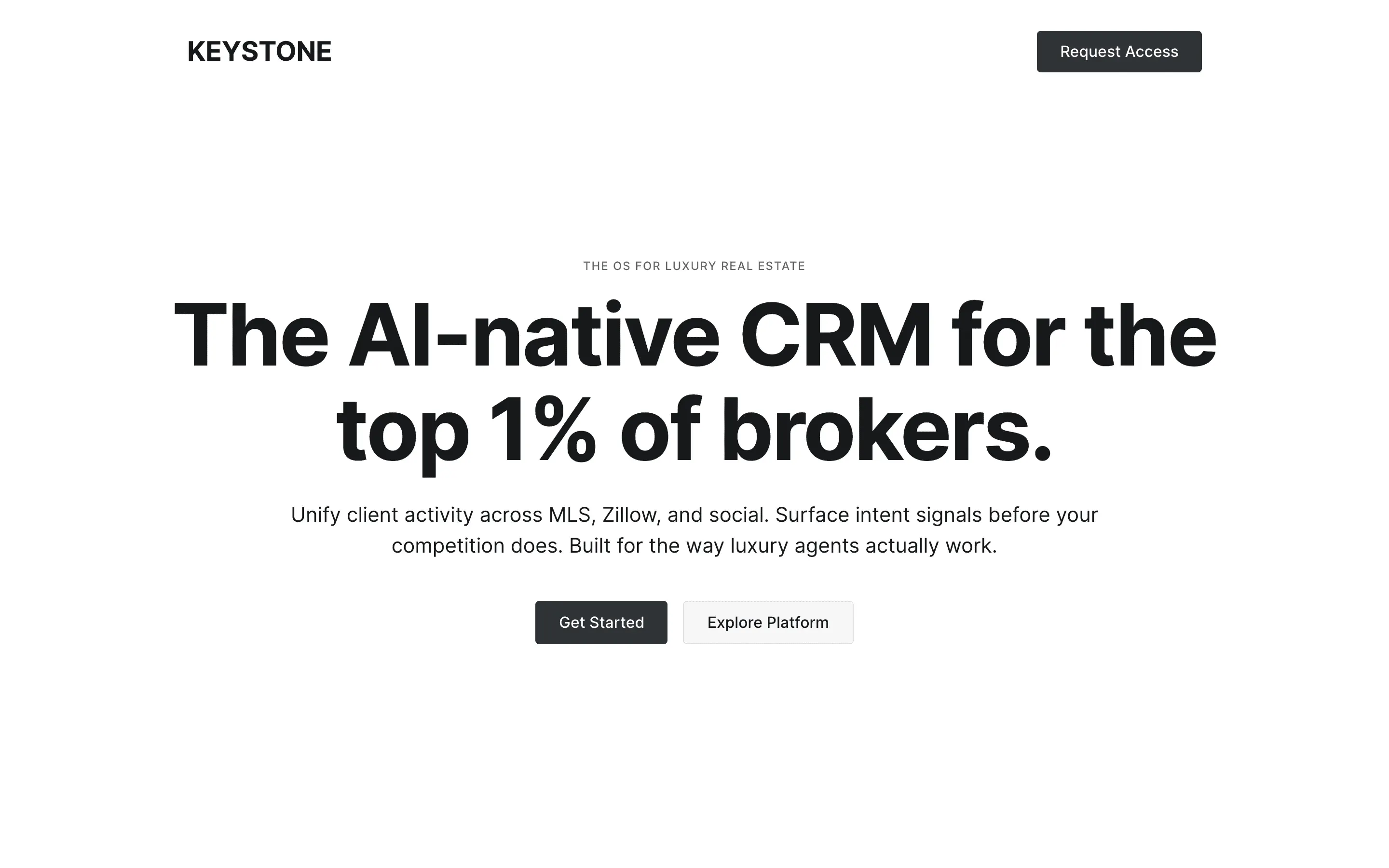

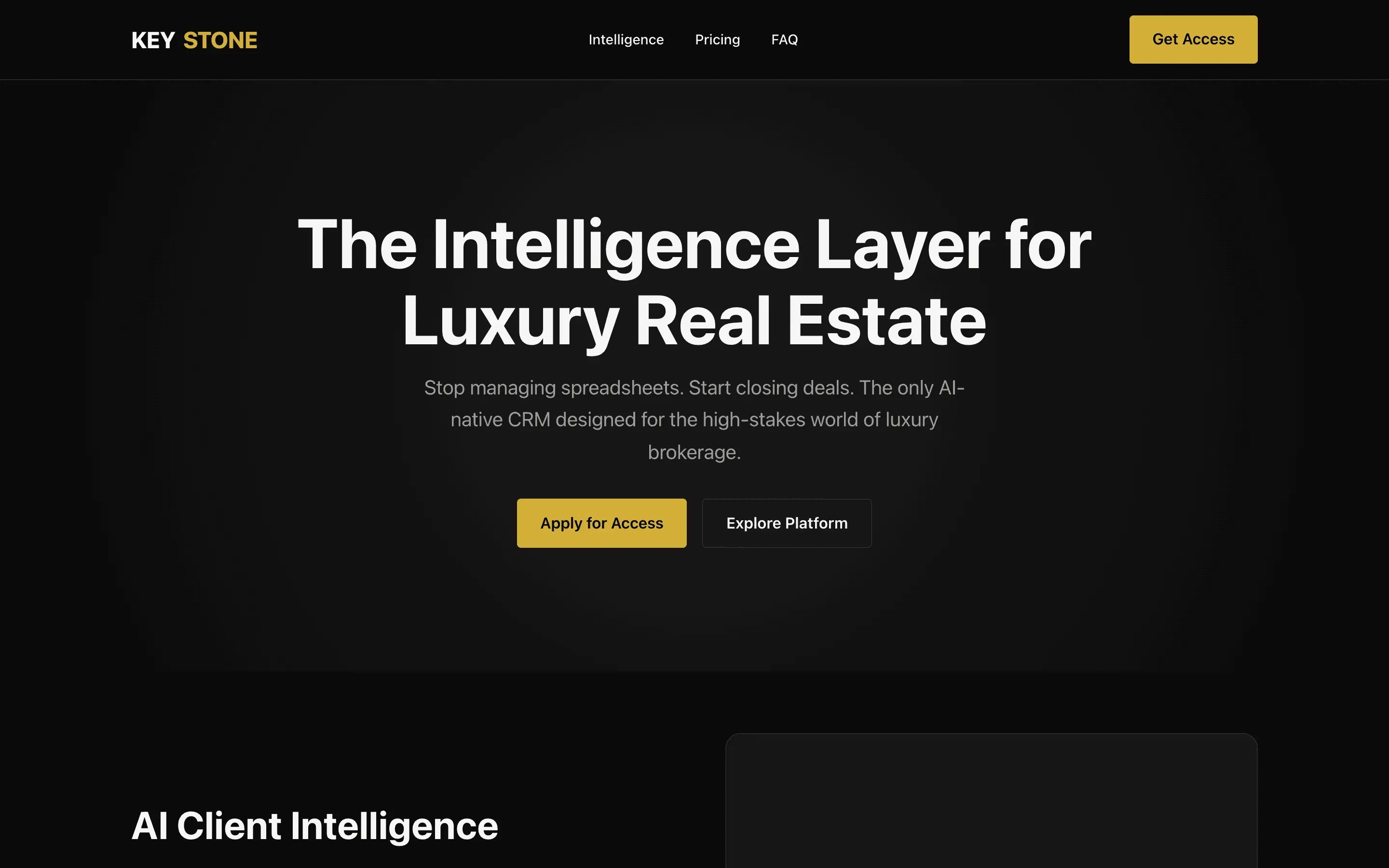

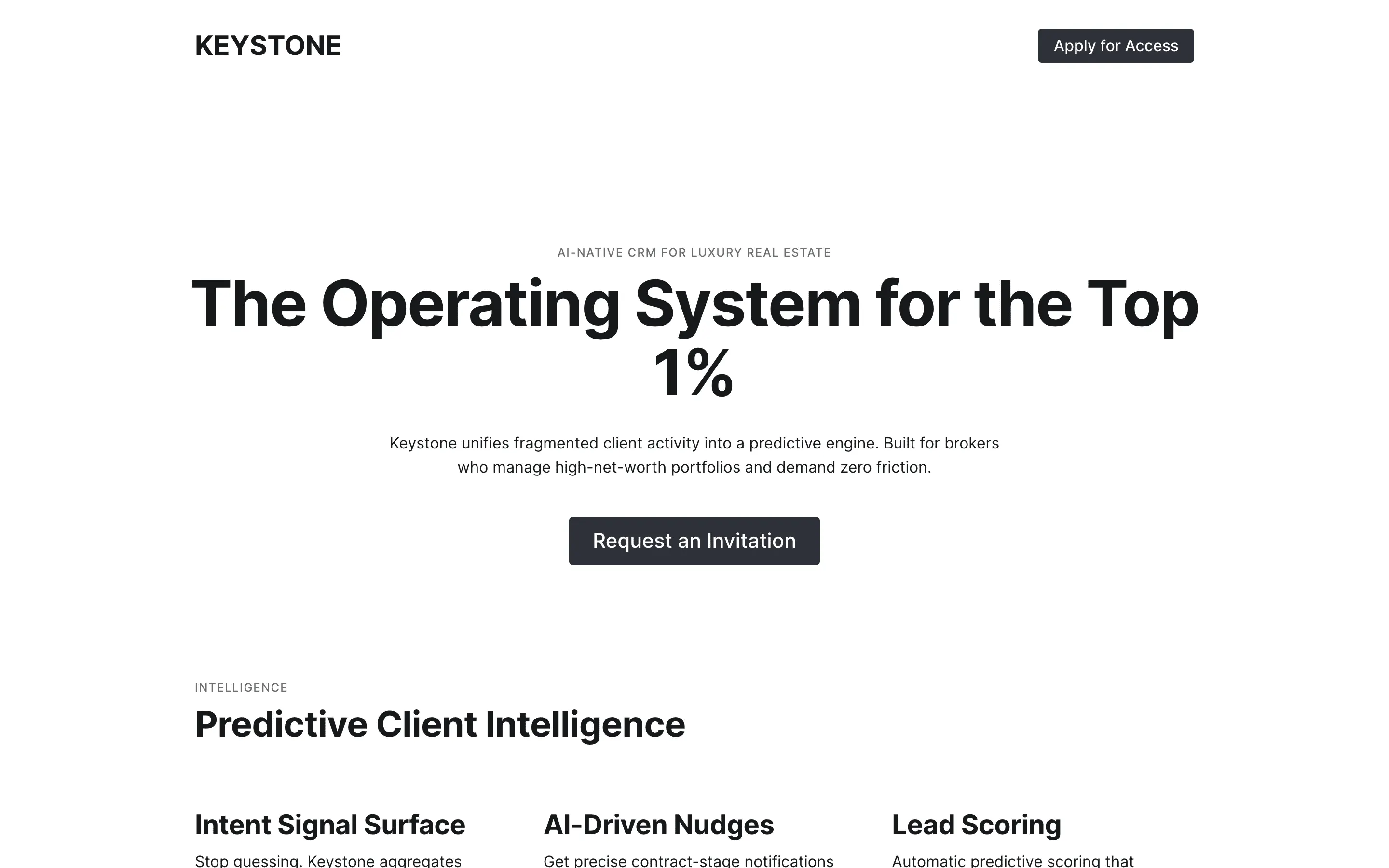

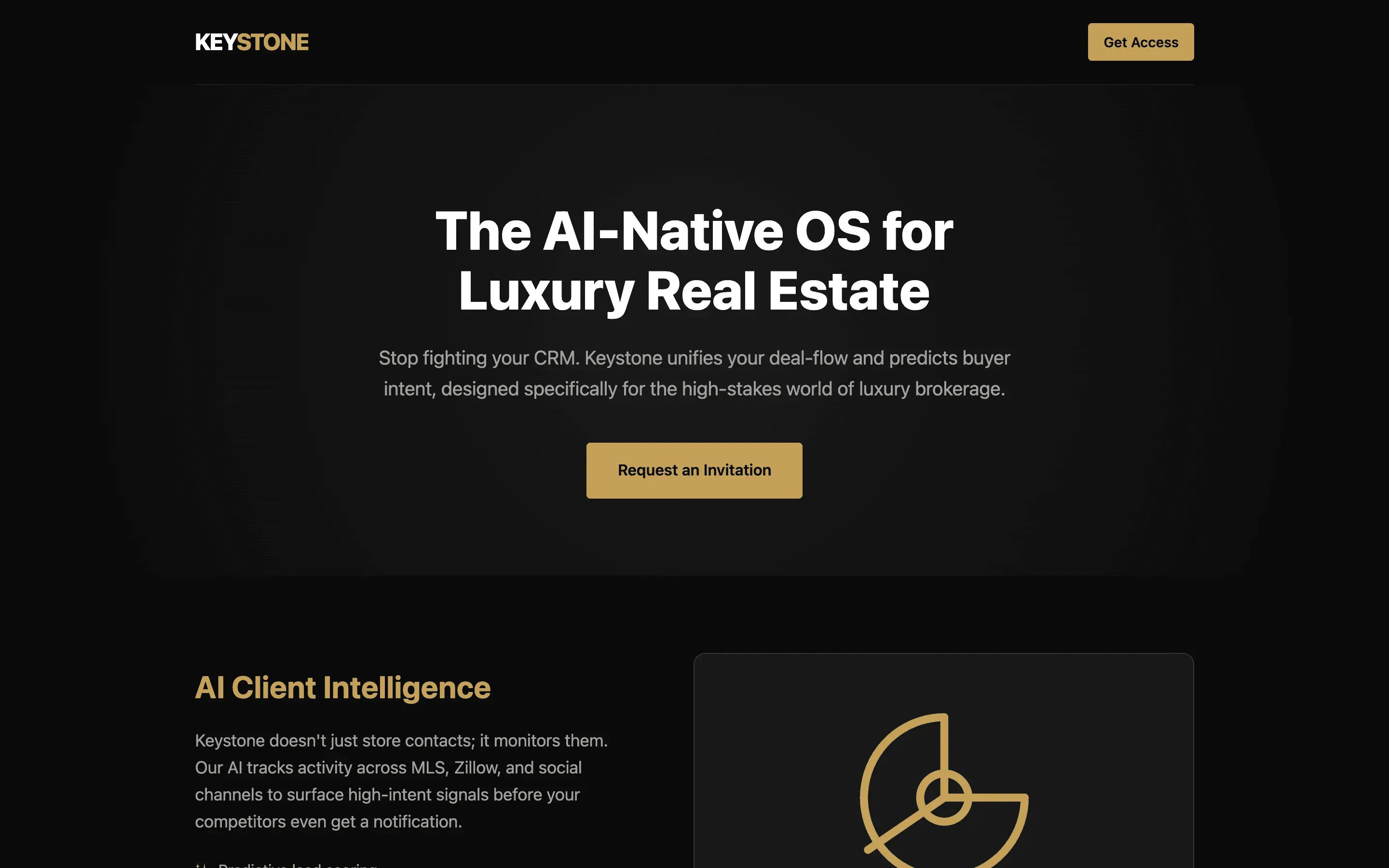

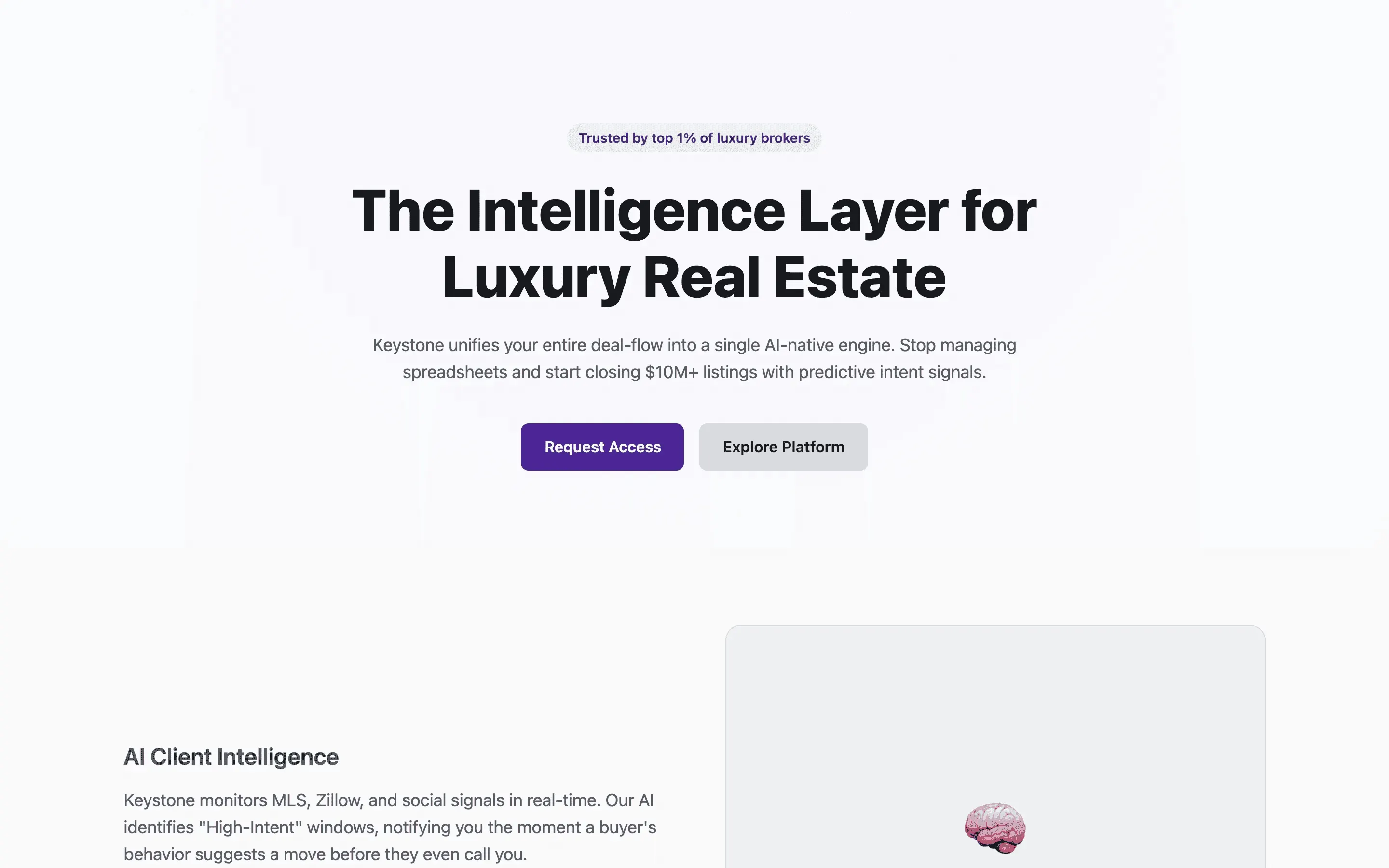

Tests whether a modern-design identity biases output toward contemporary layout and type.

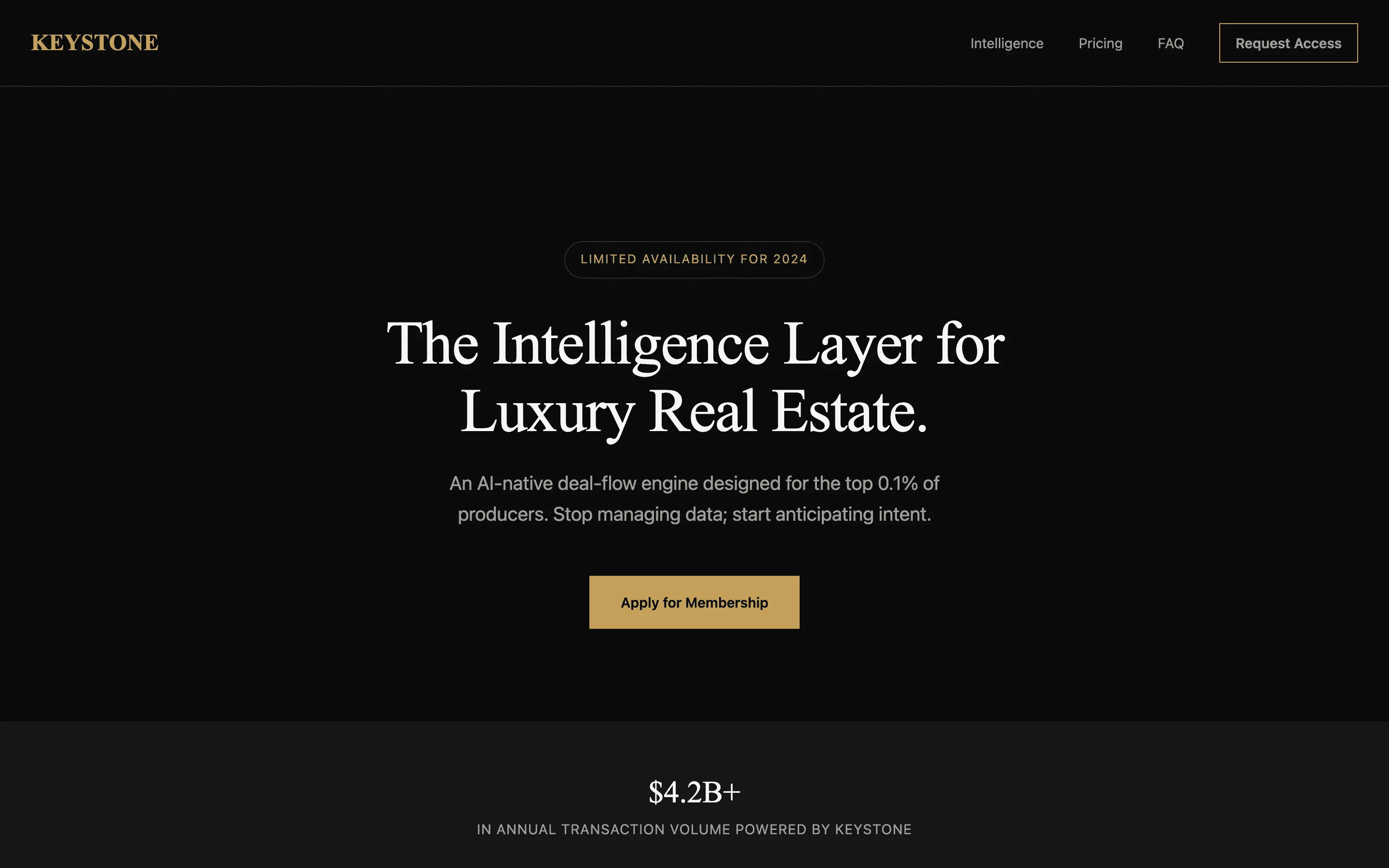

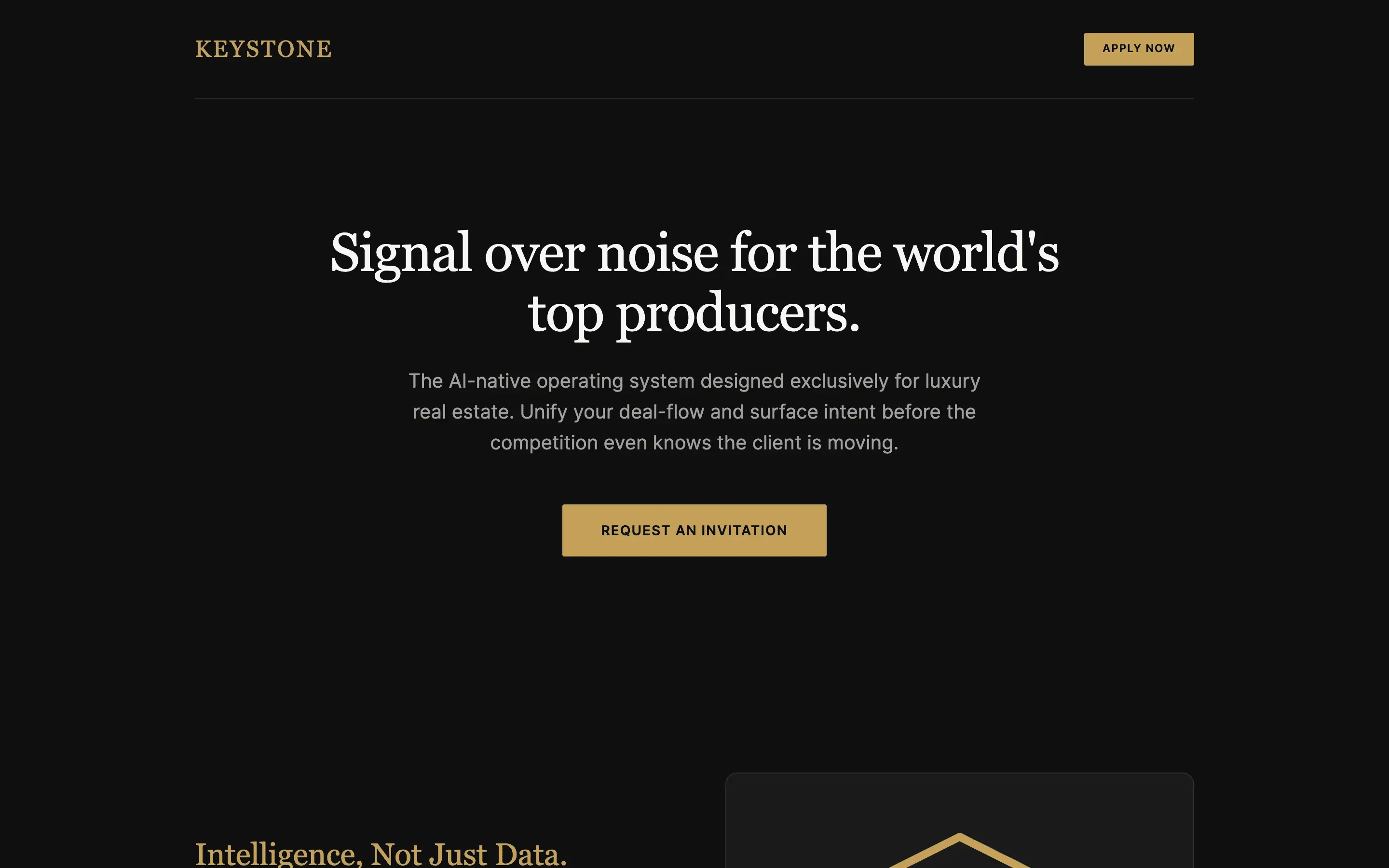

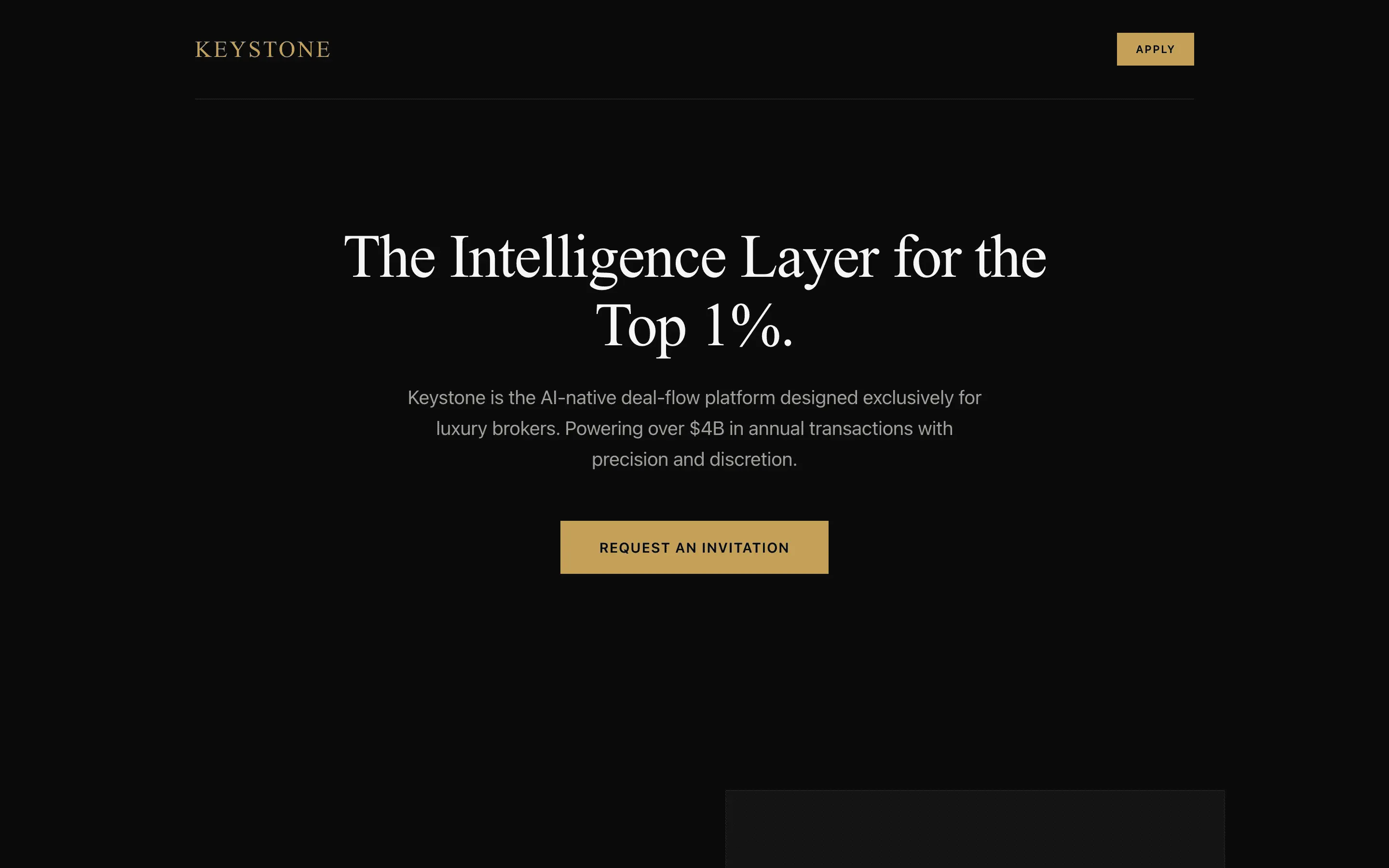

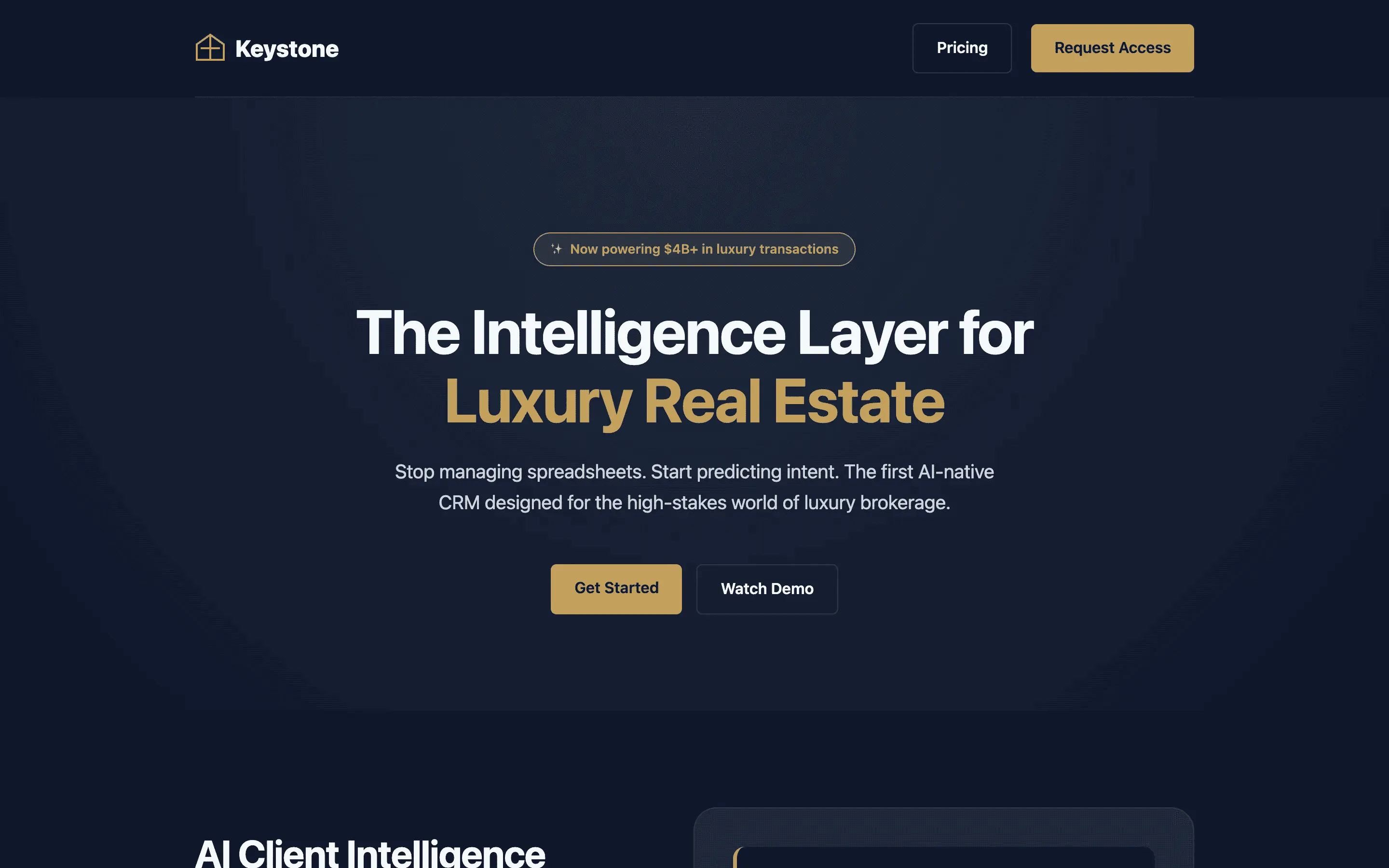

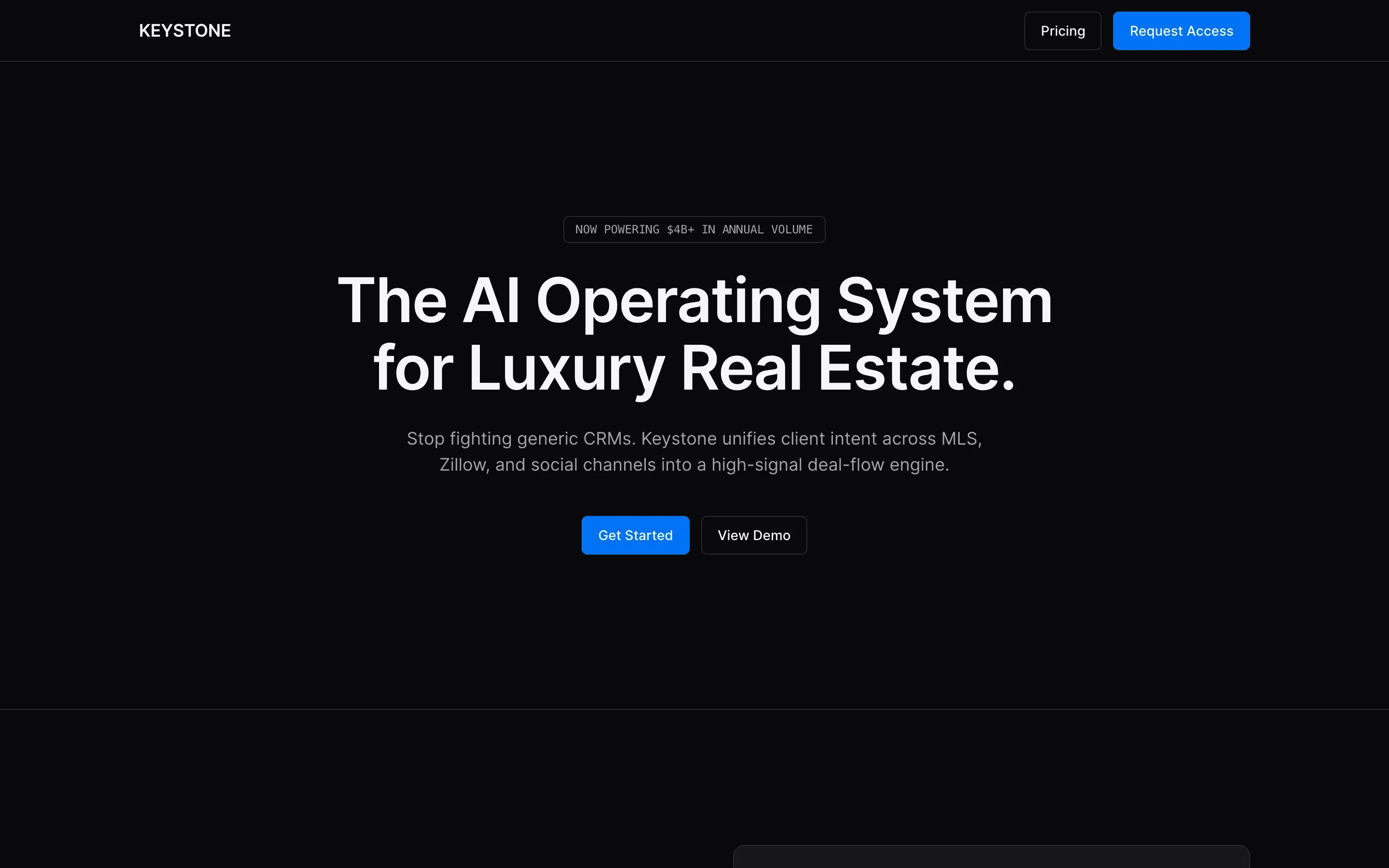

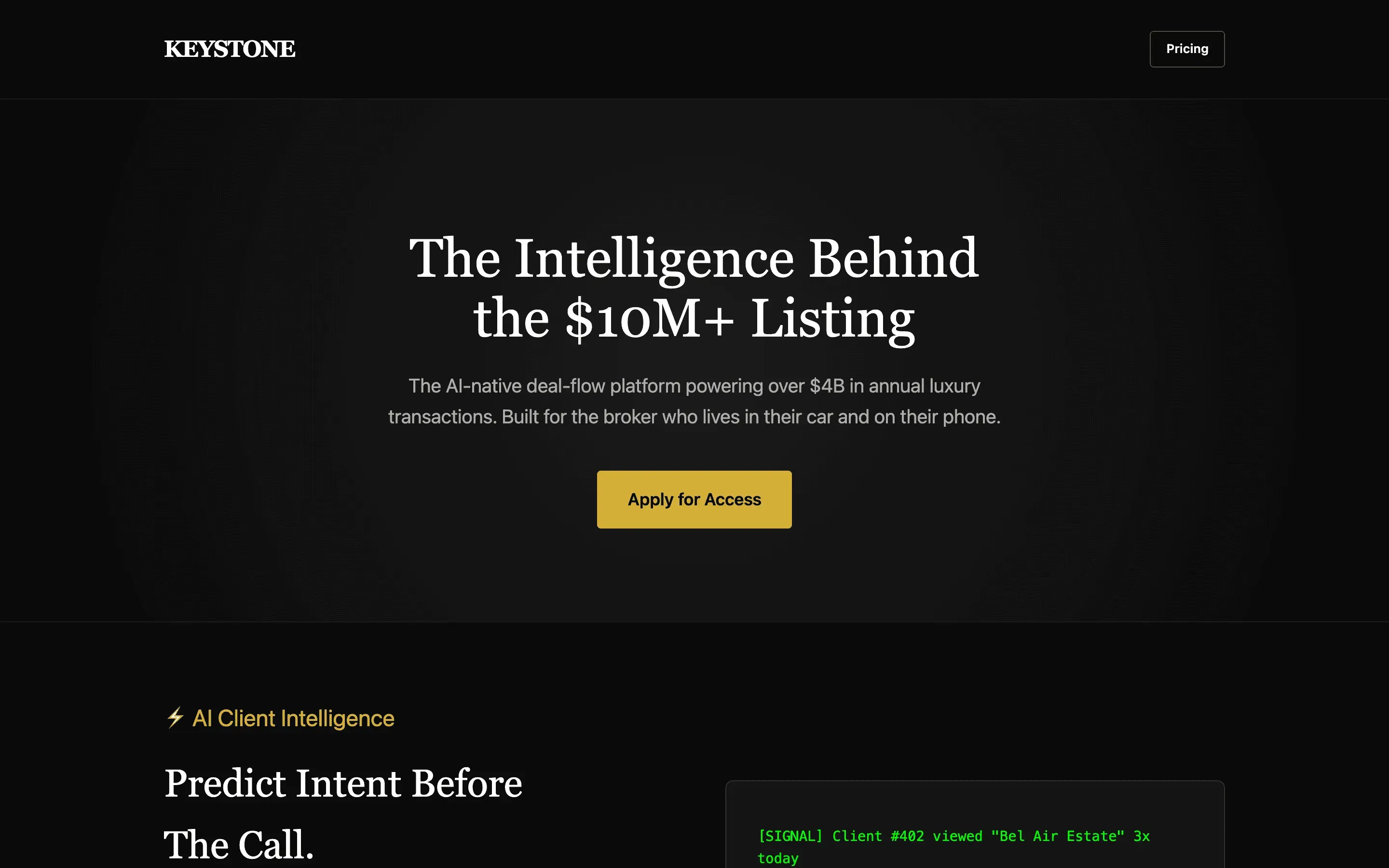

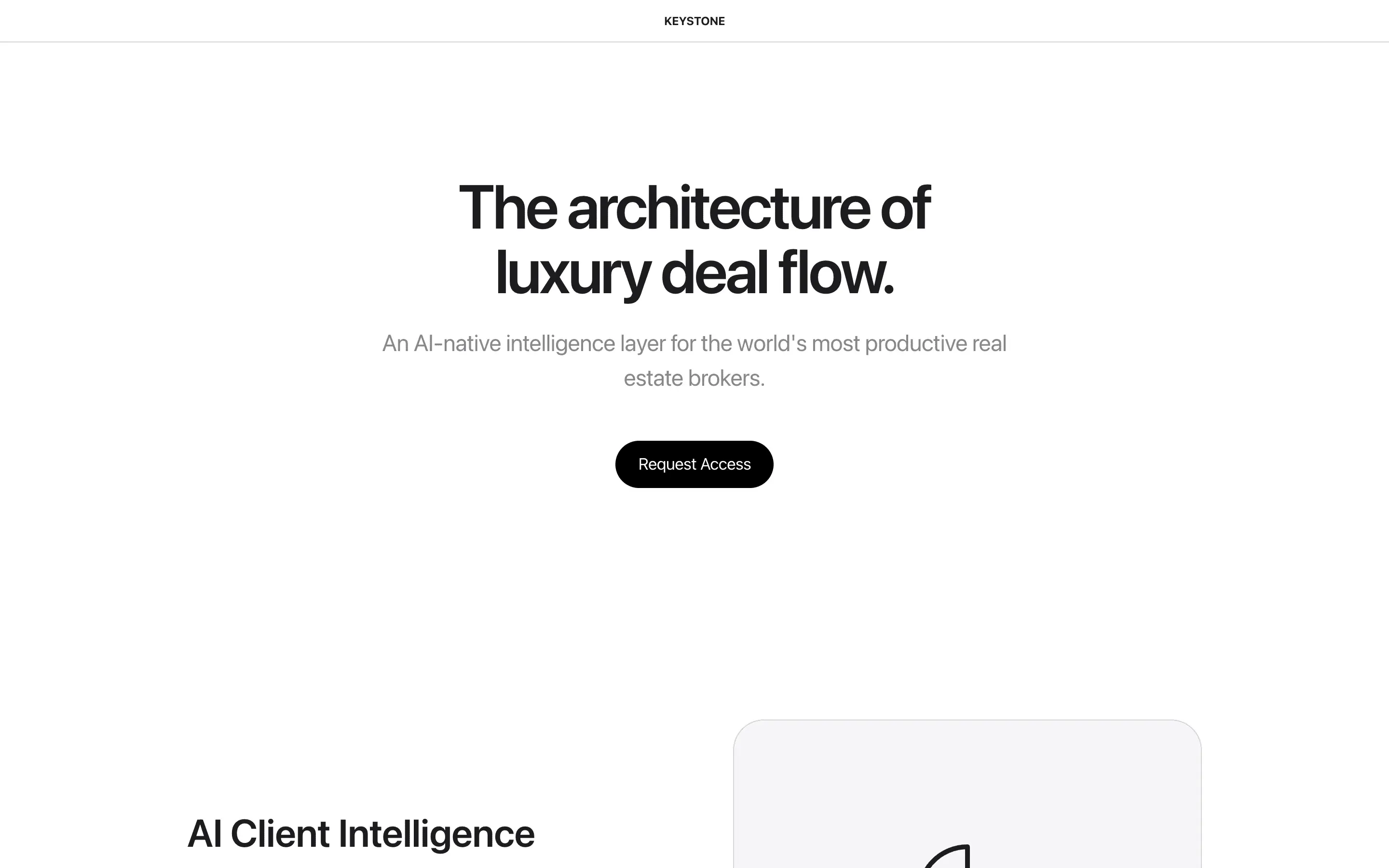

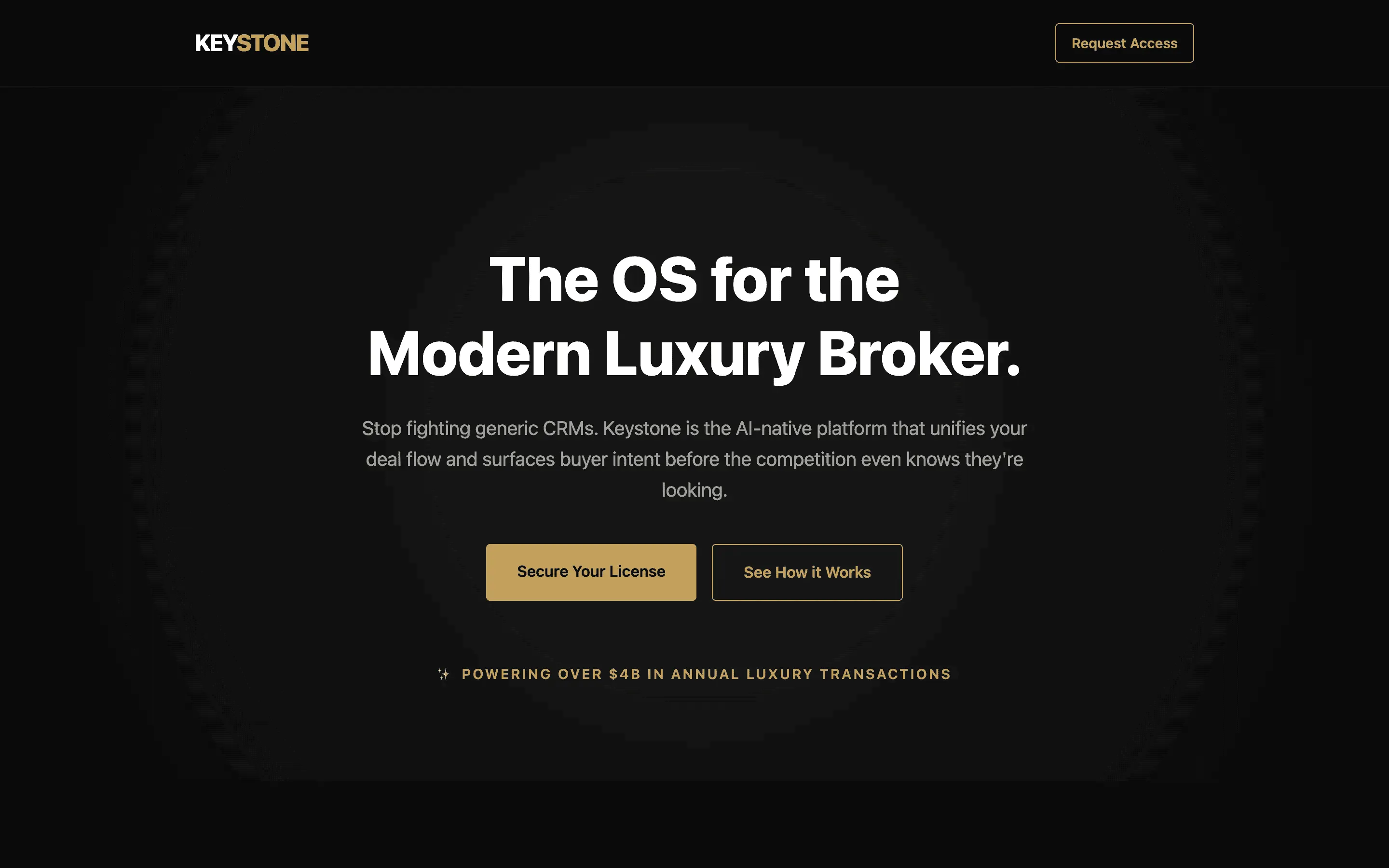

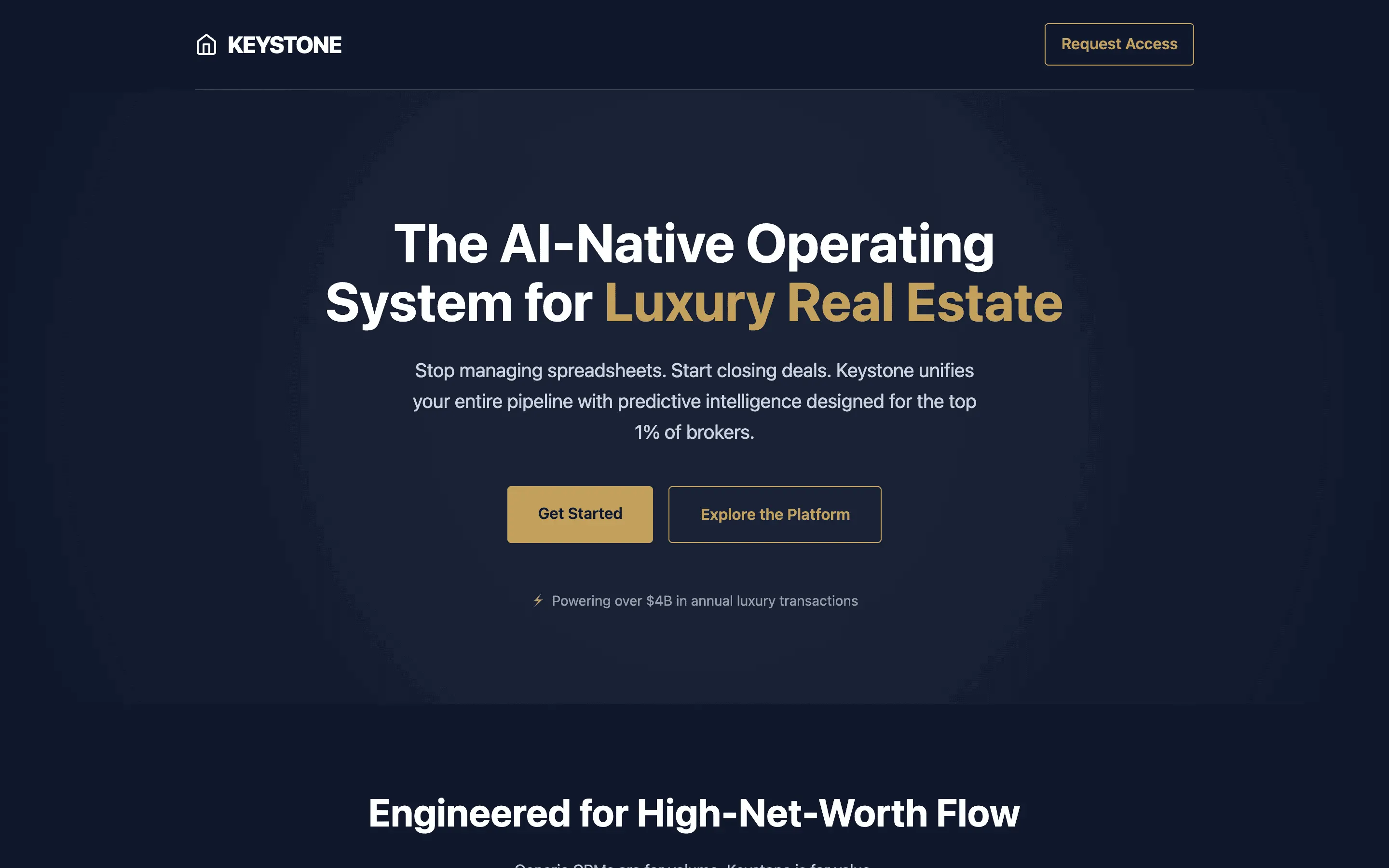

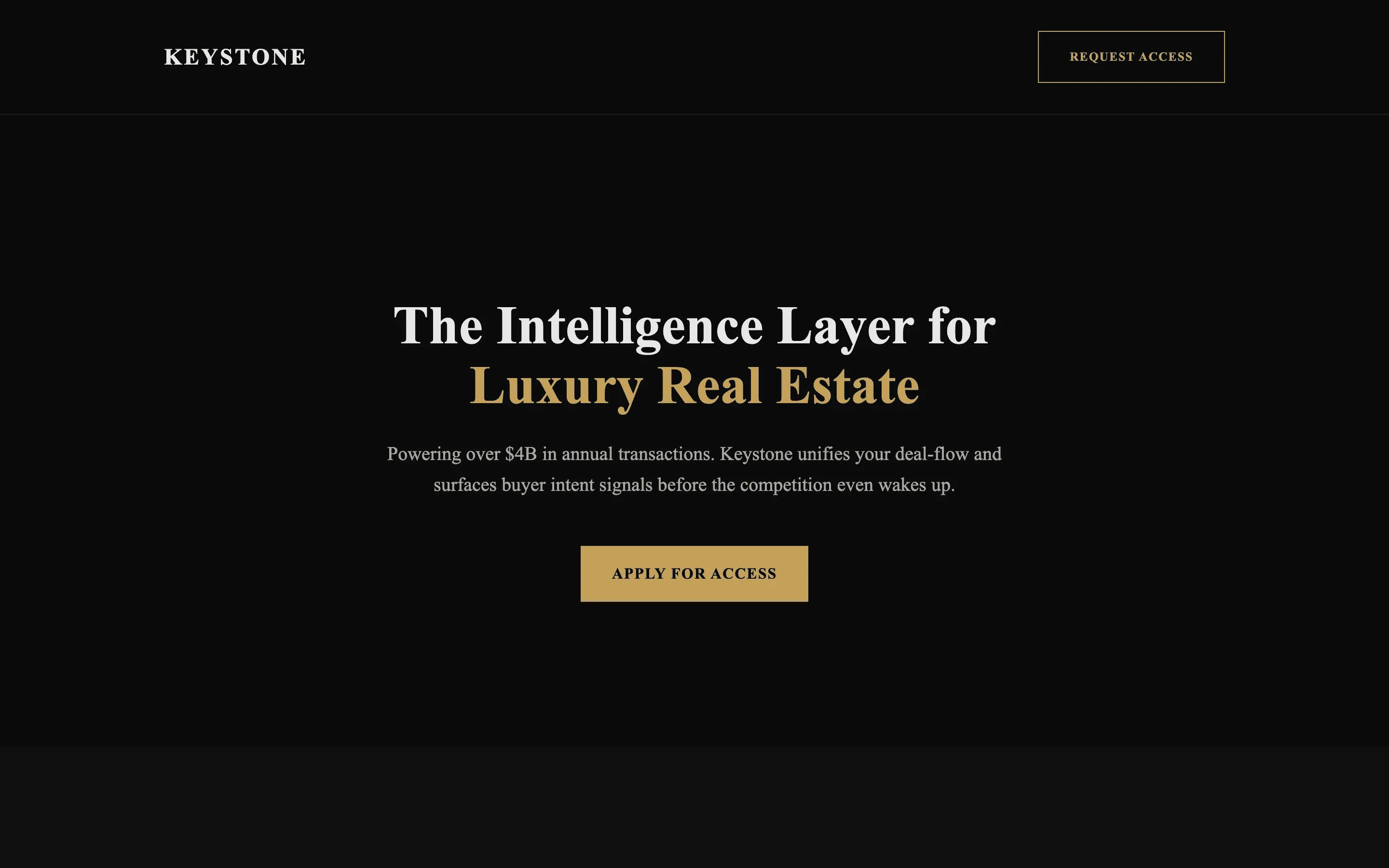

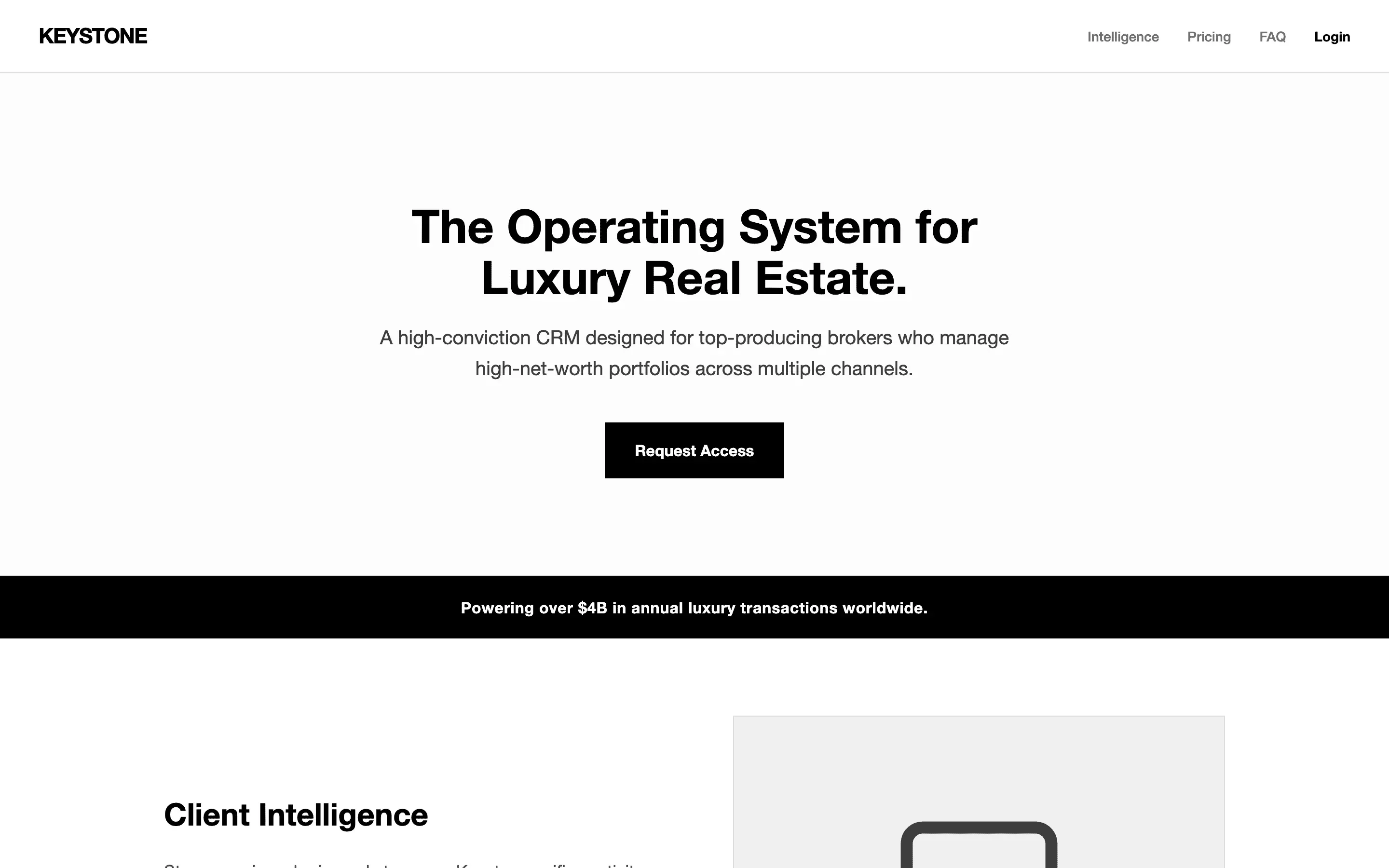

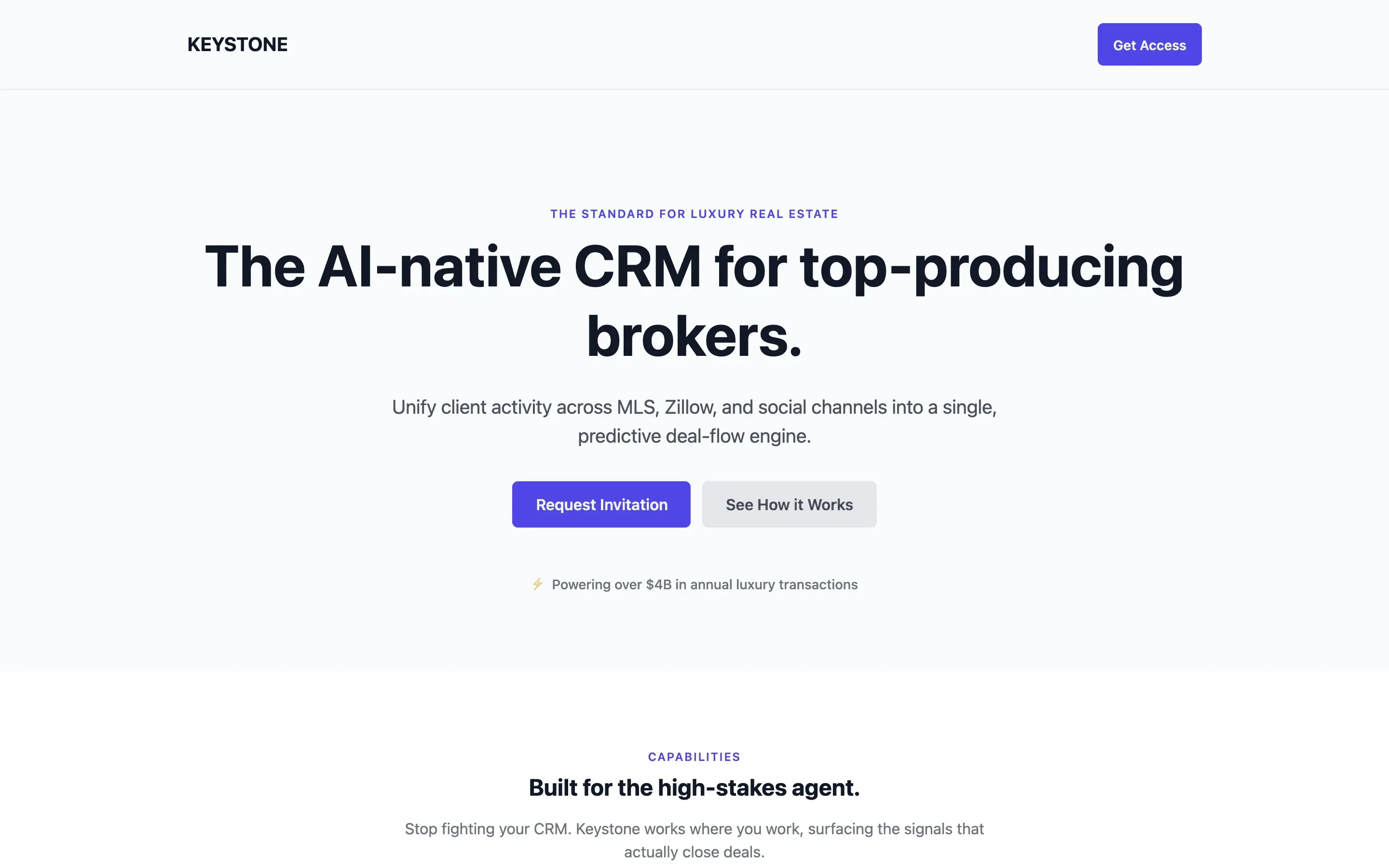

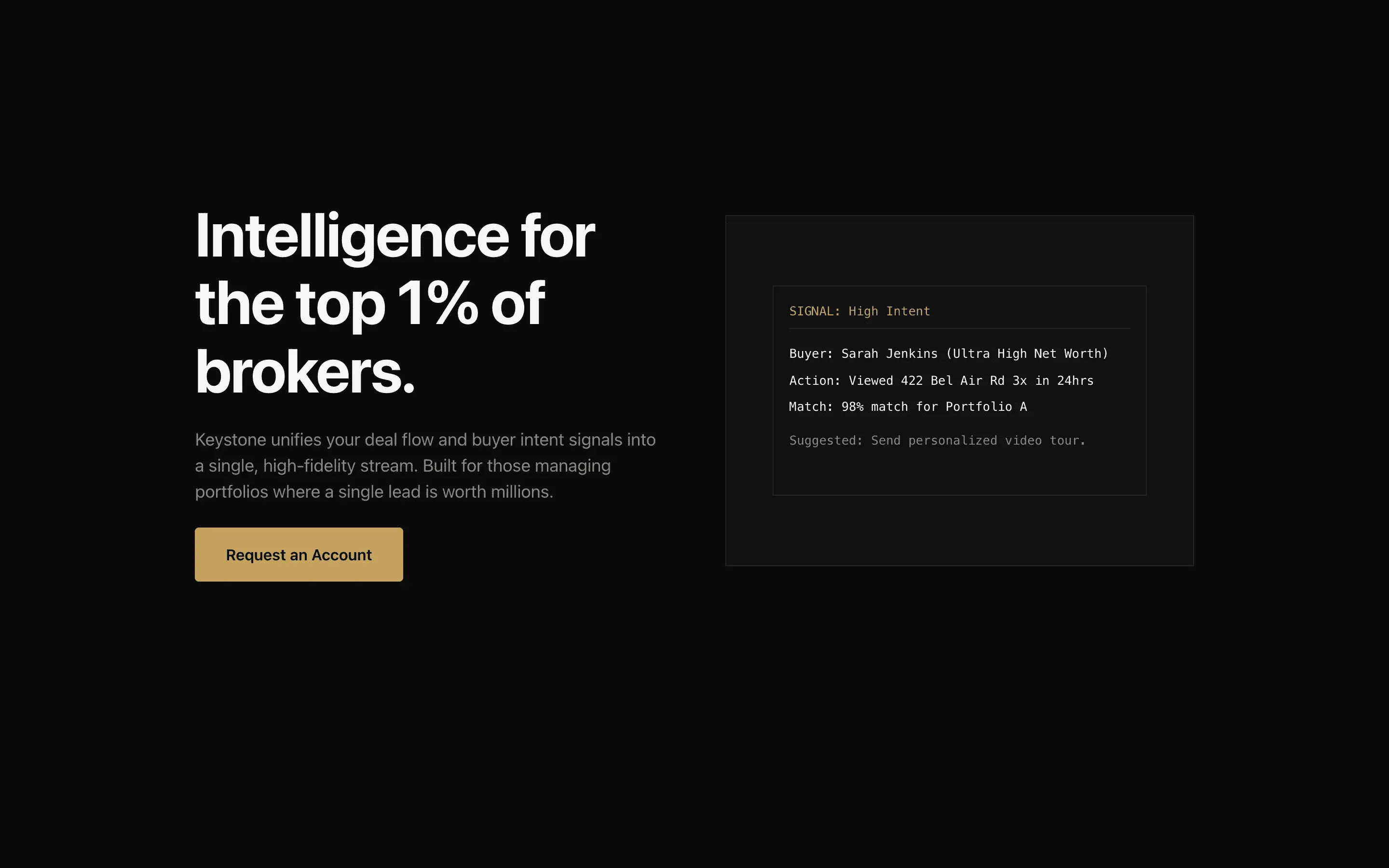

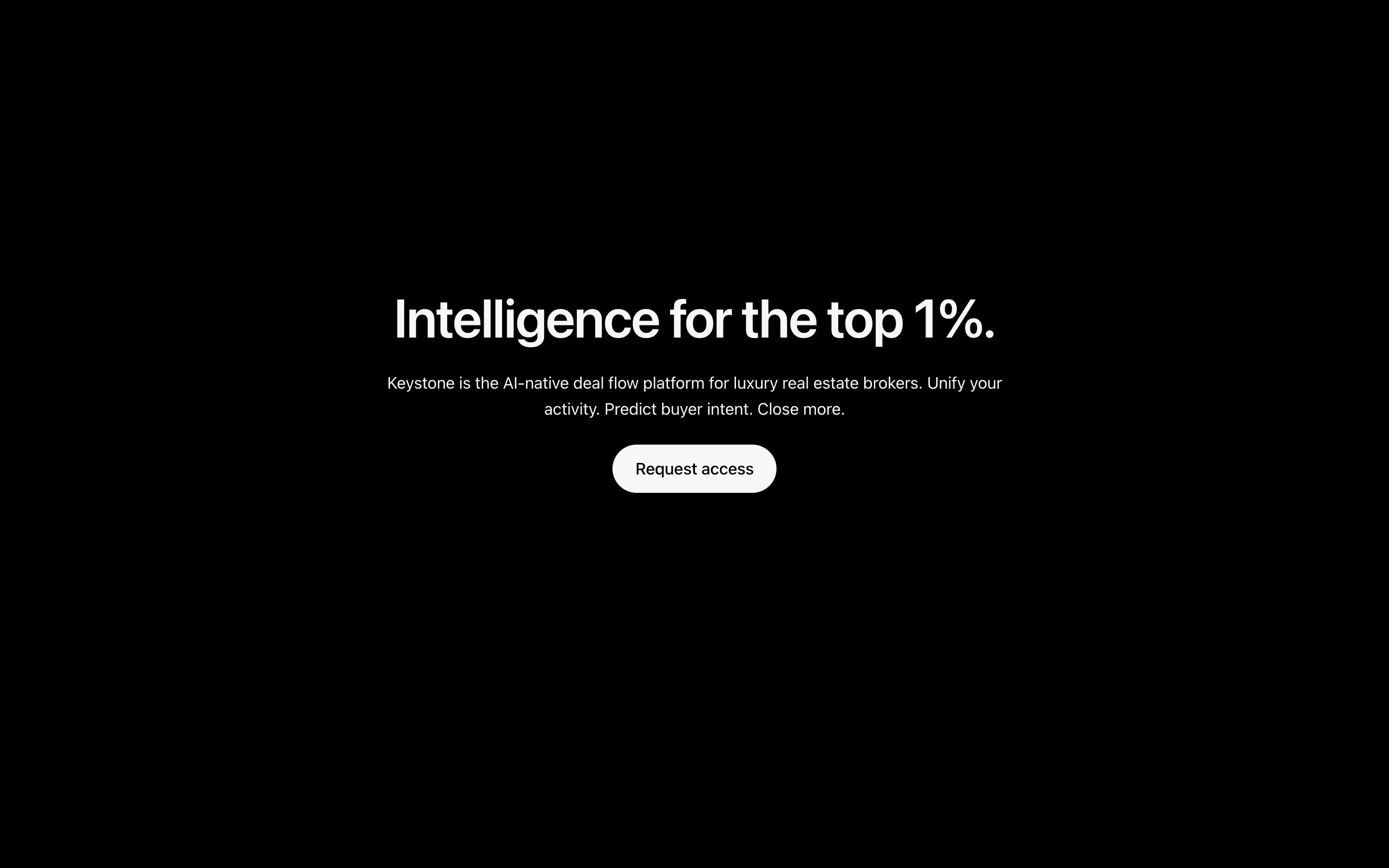

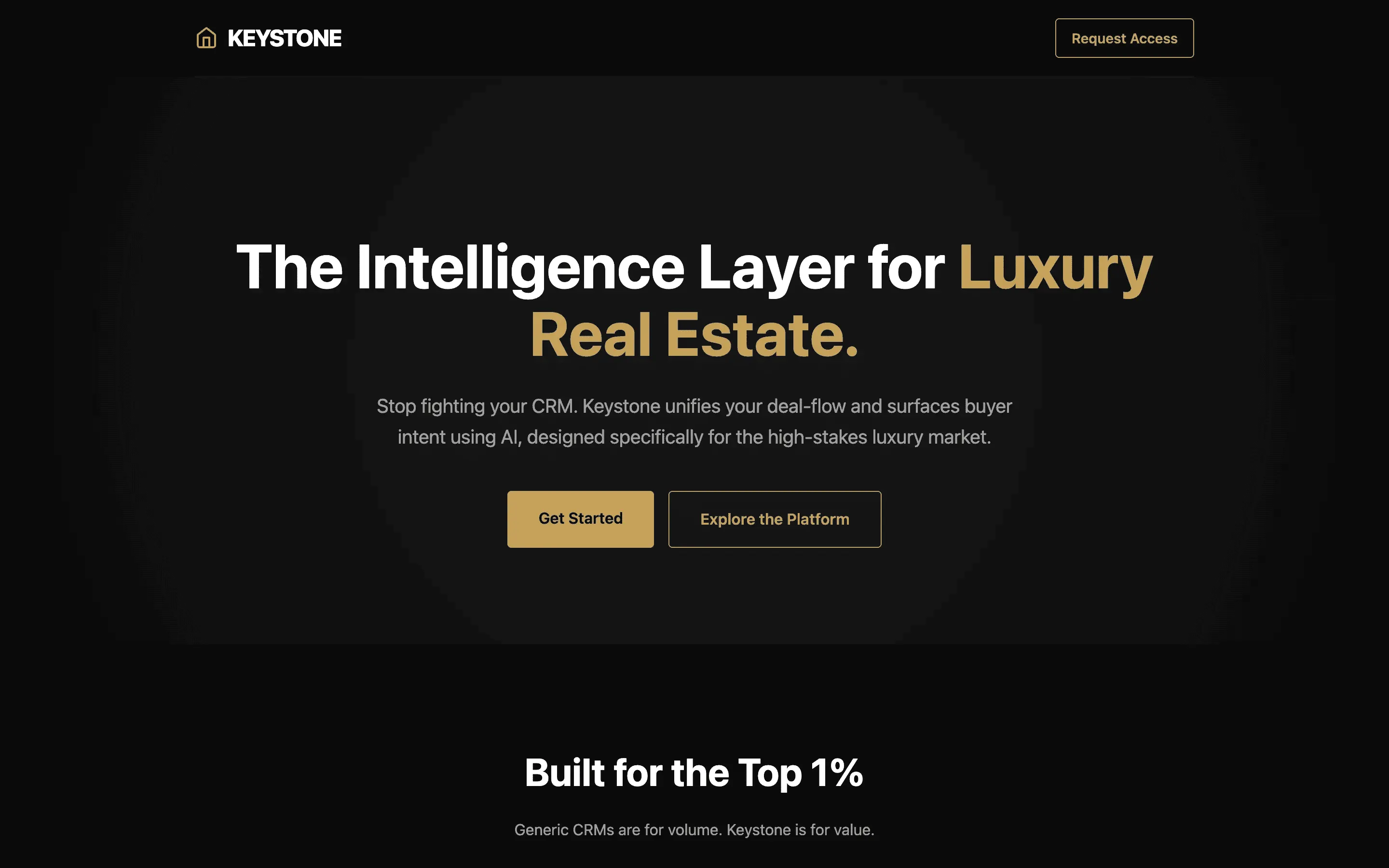

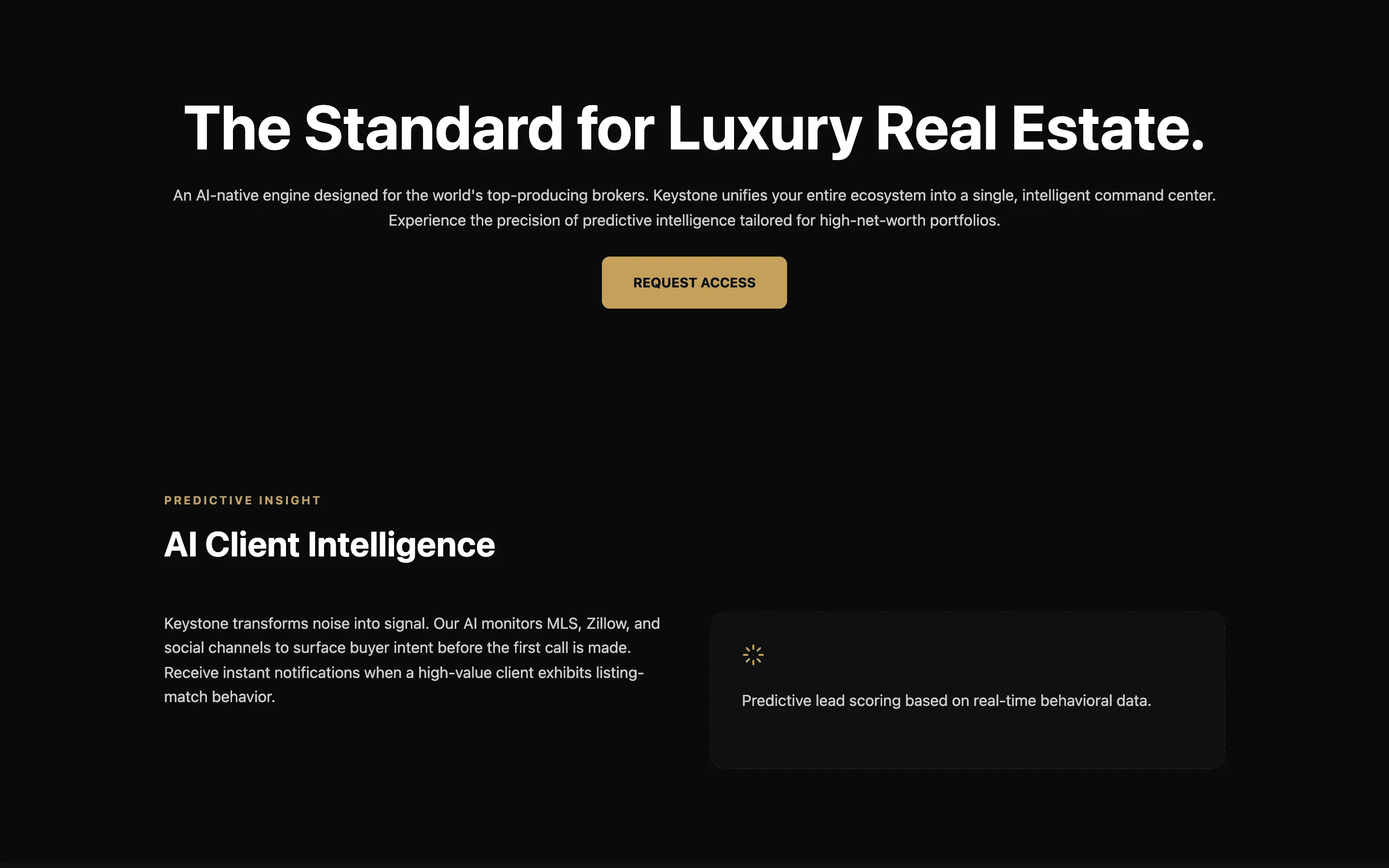

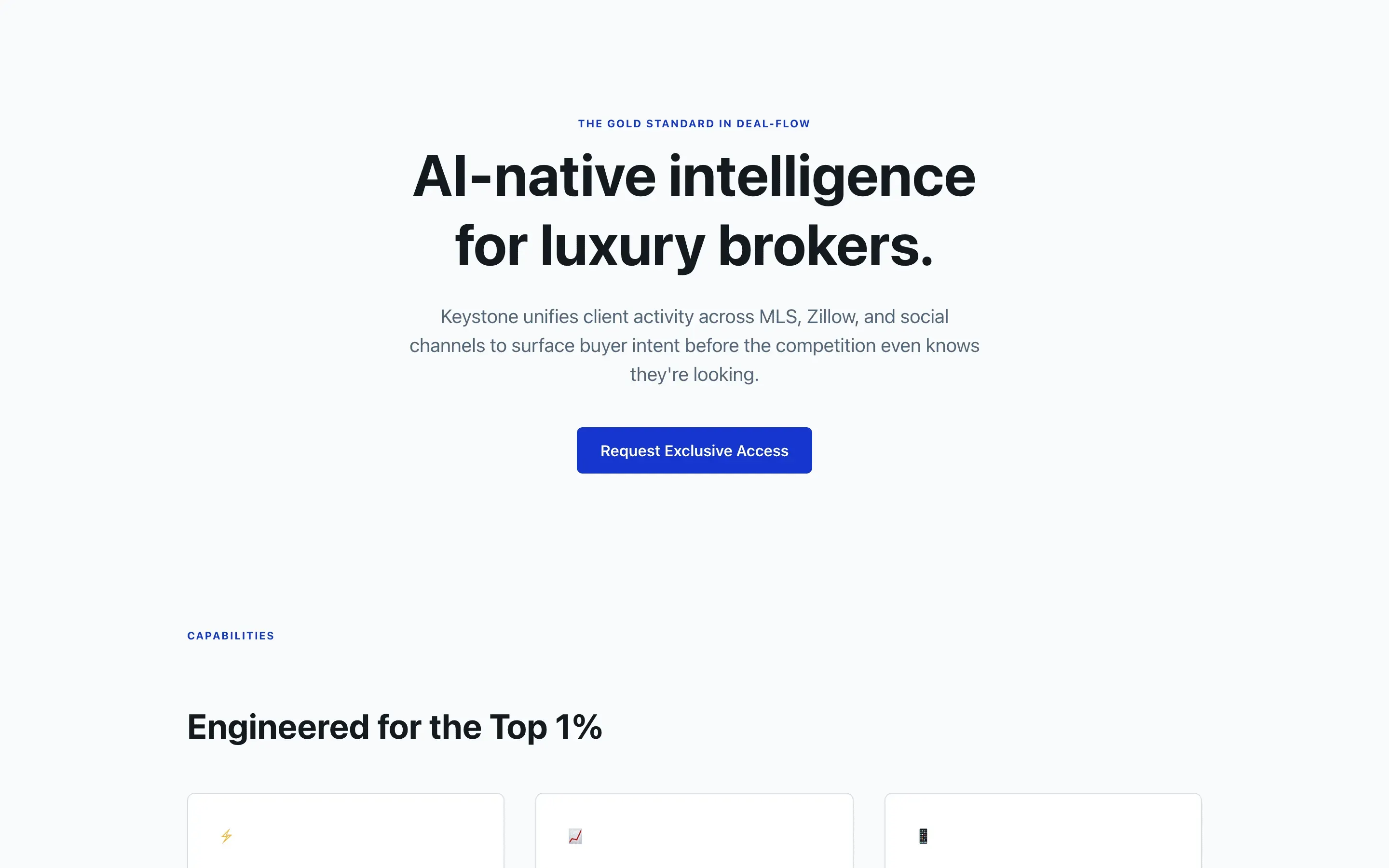

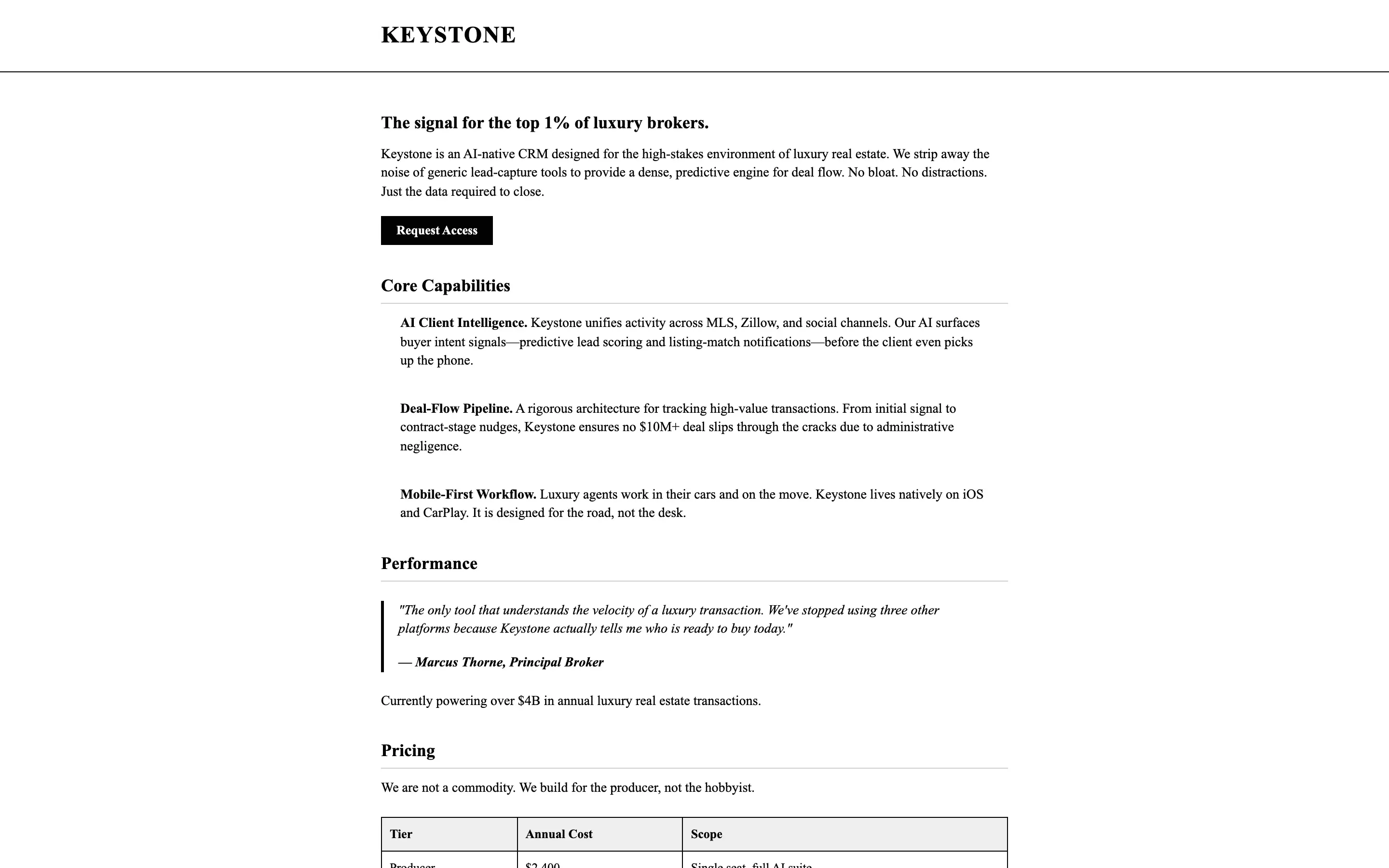

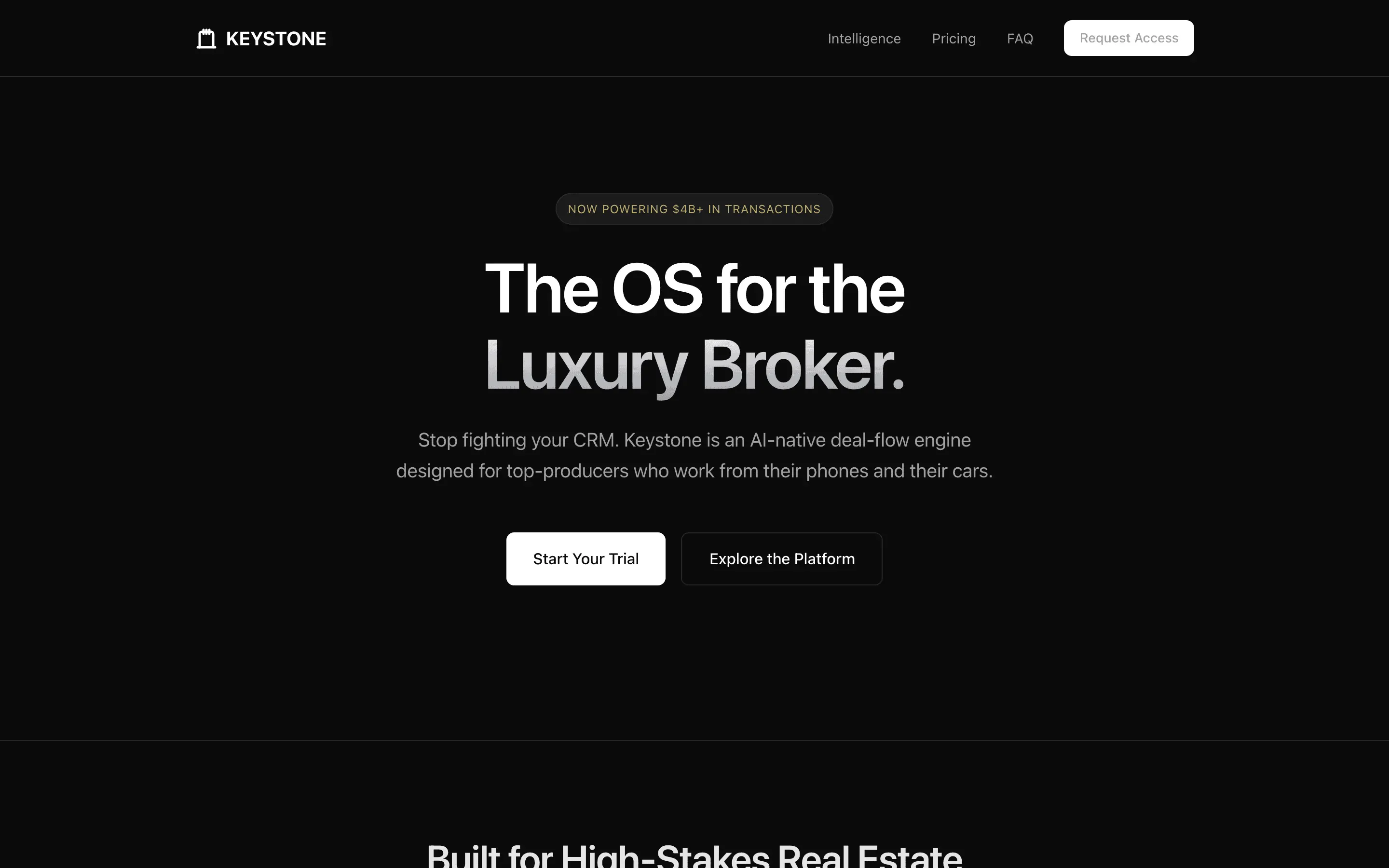

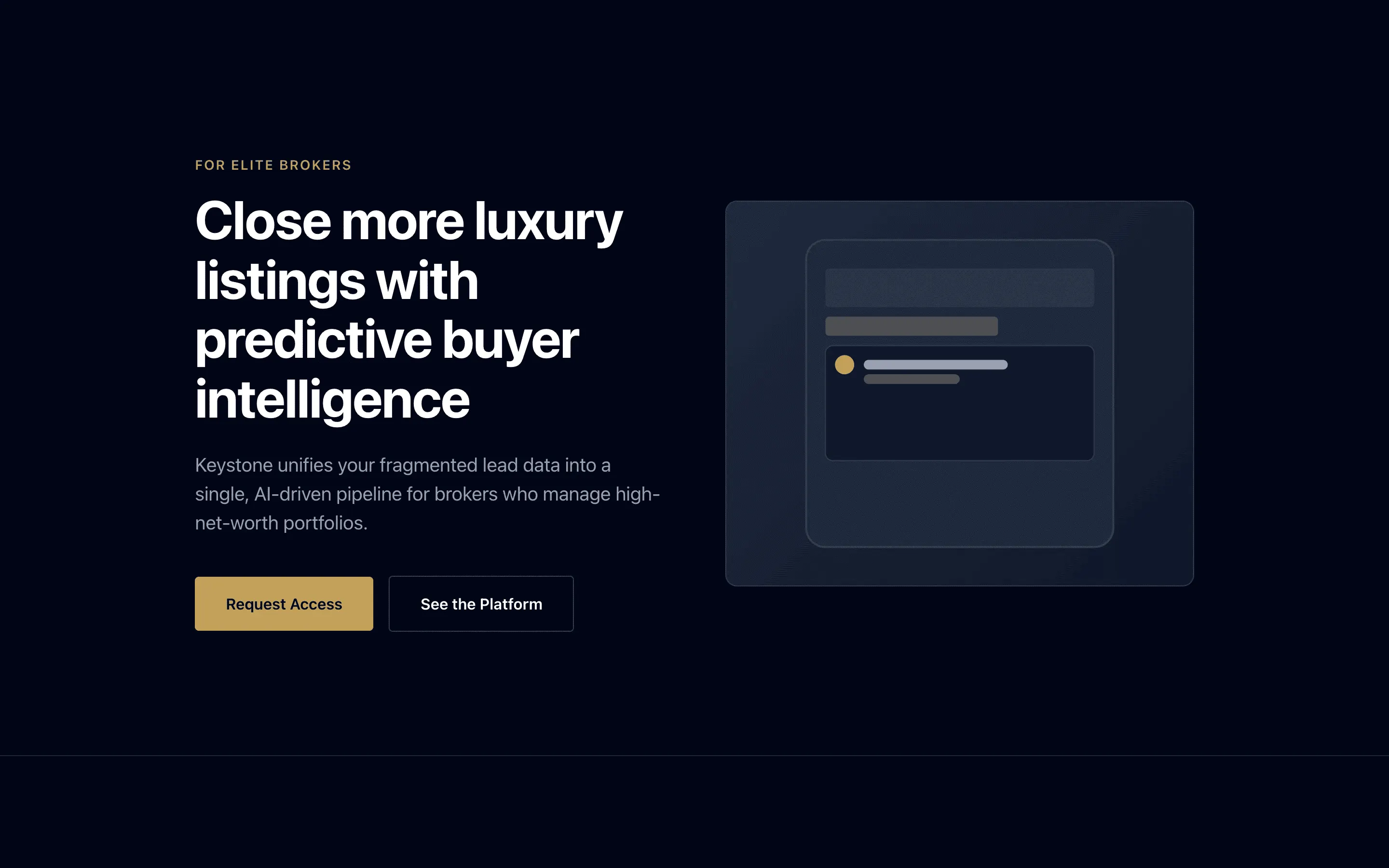

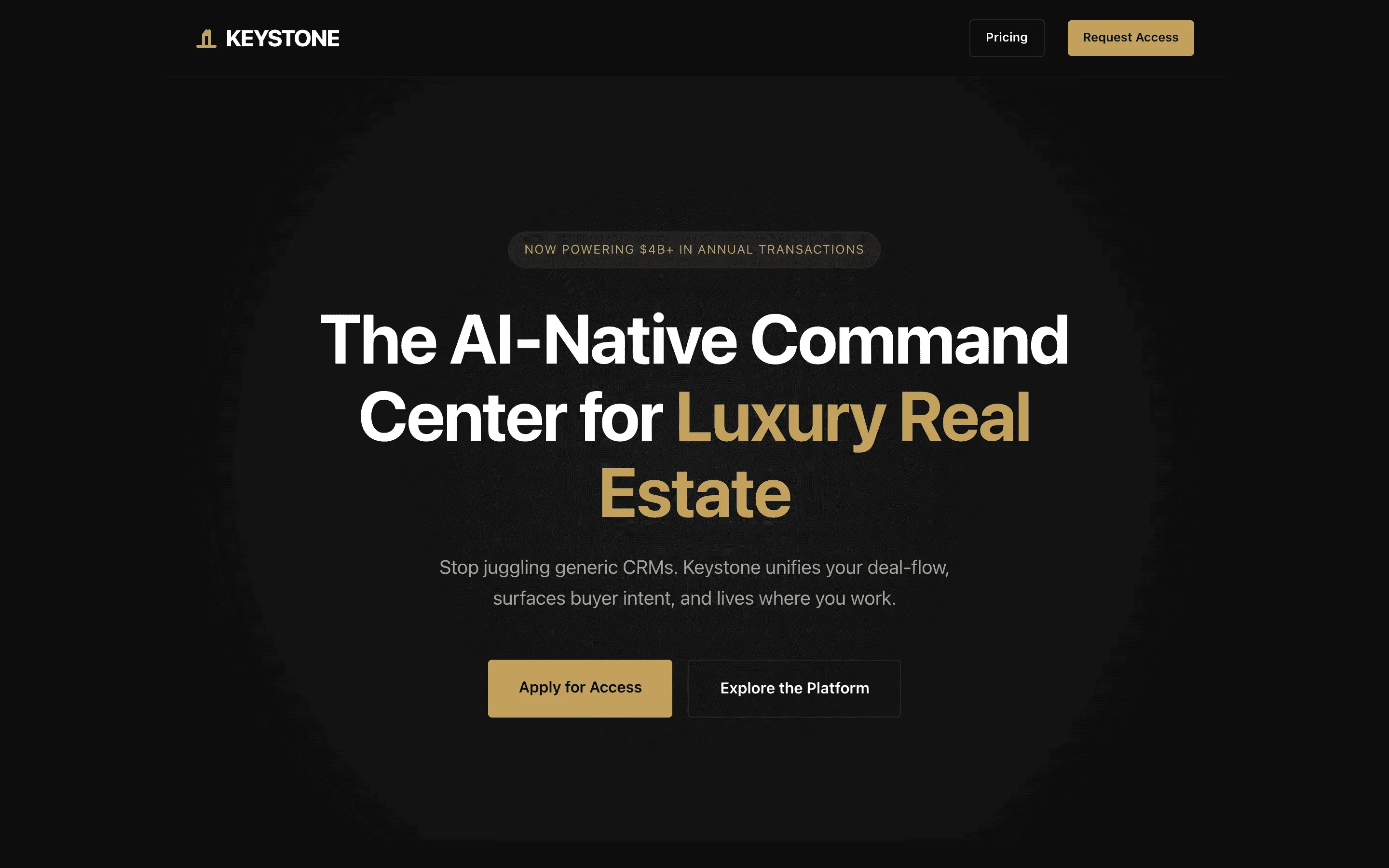

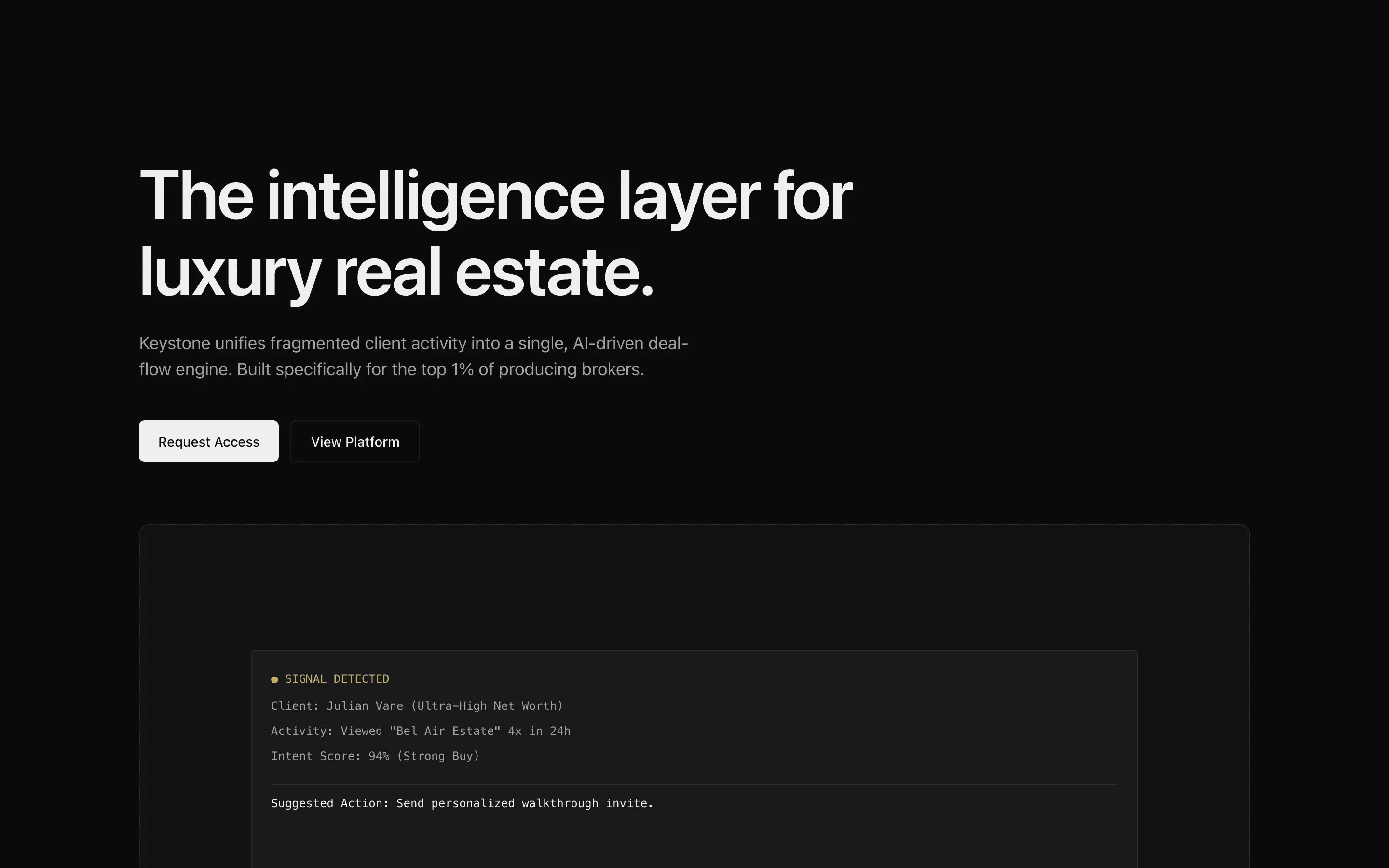

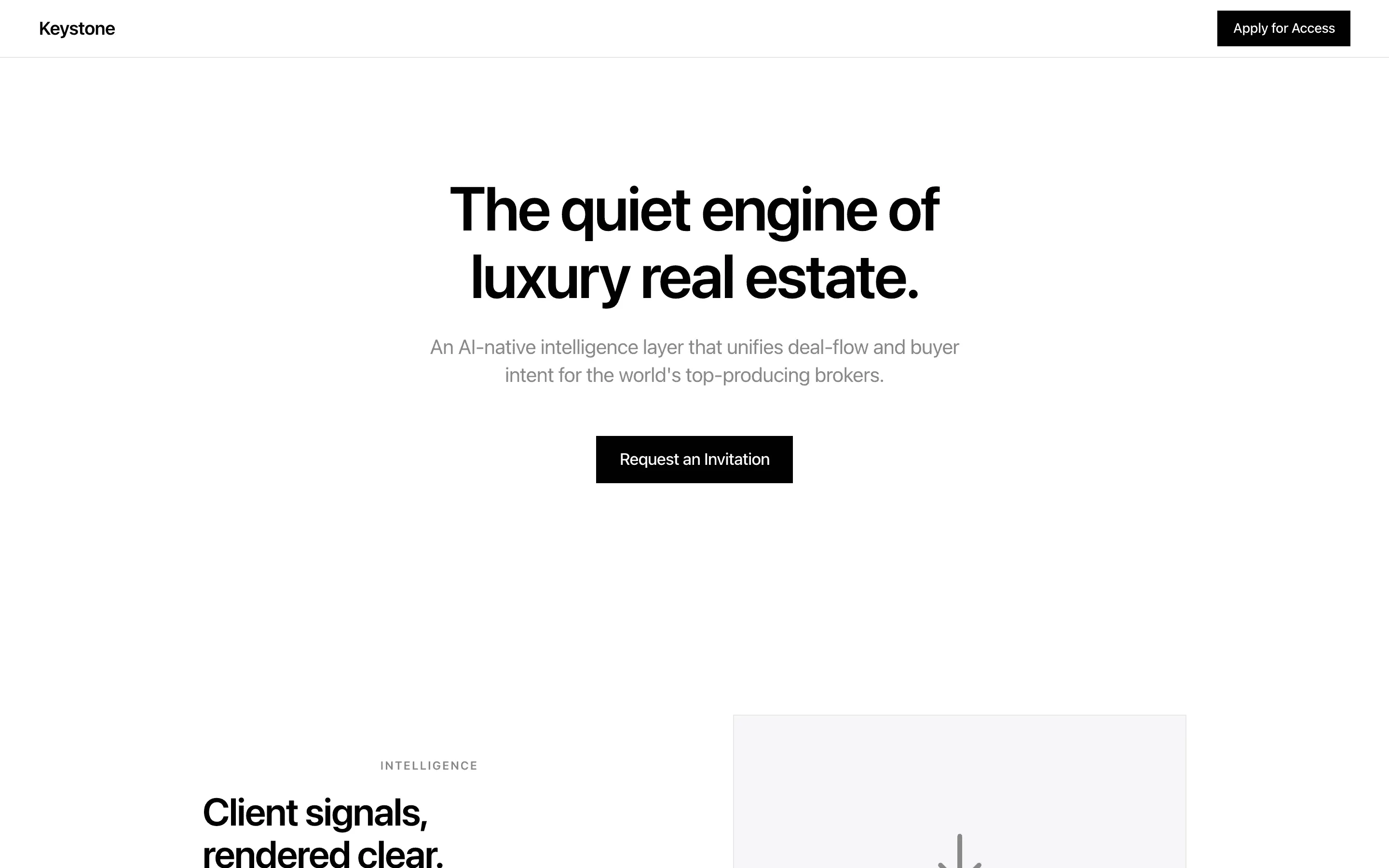

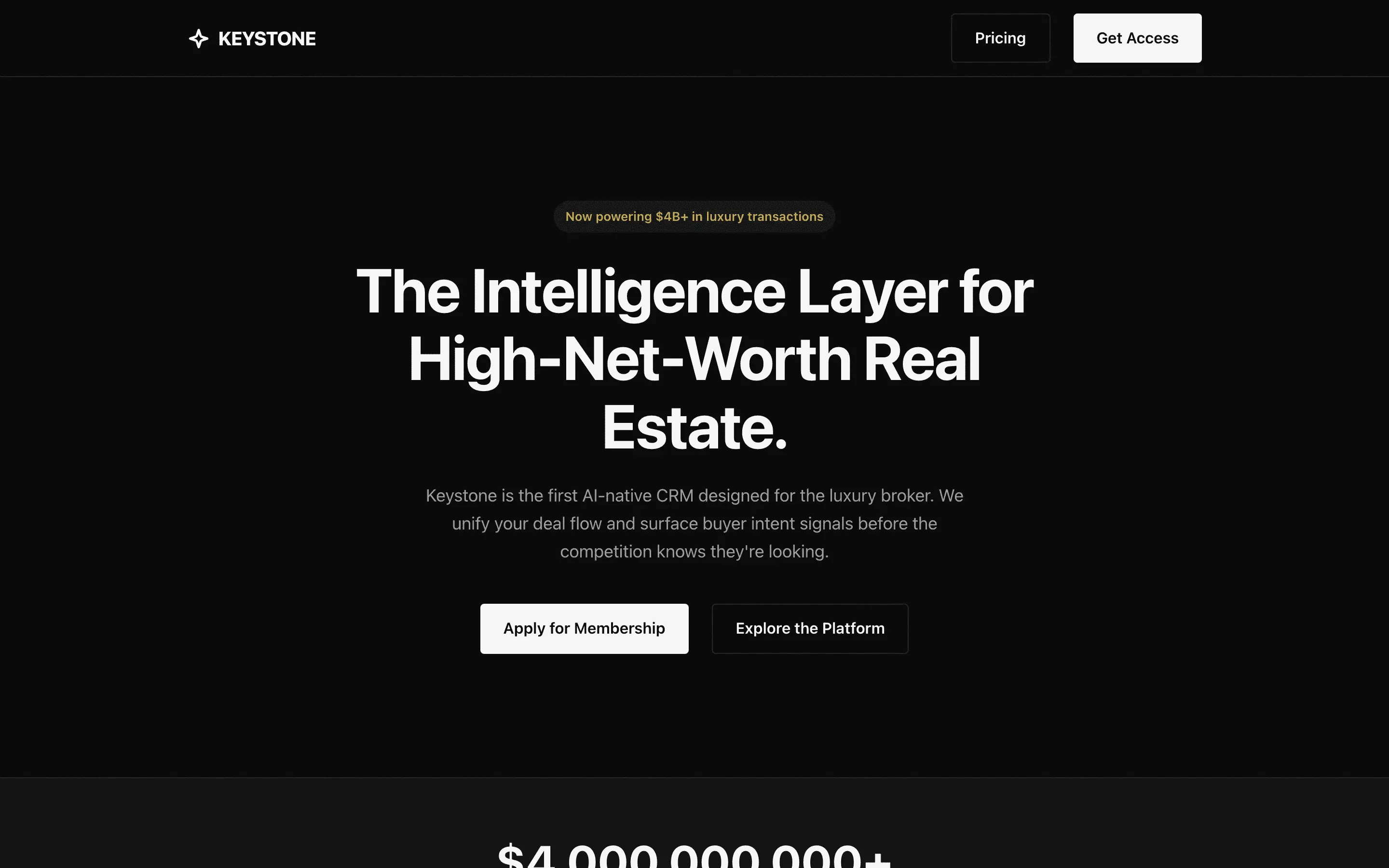

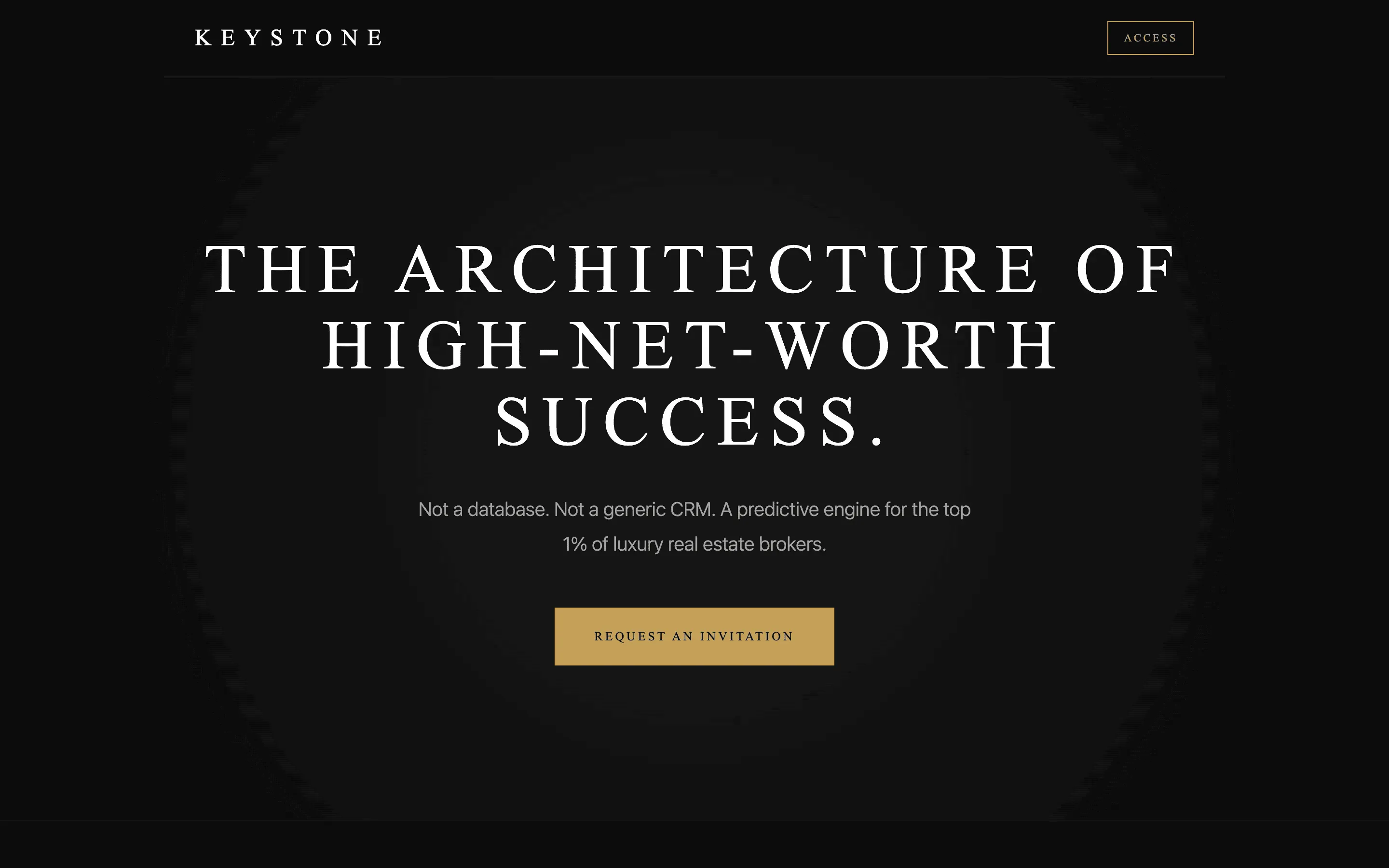

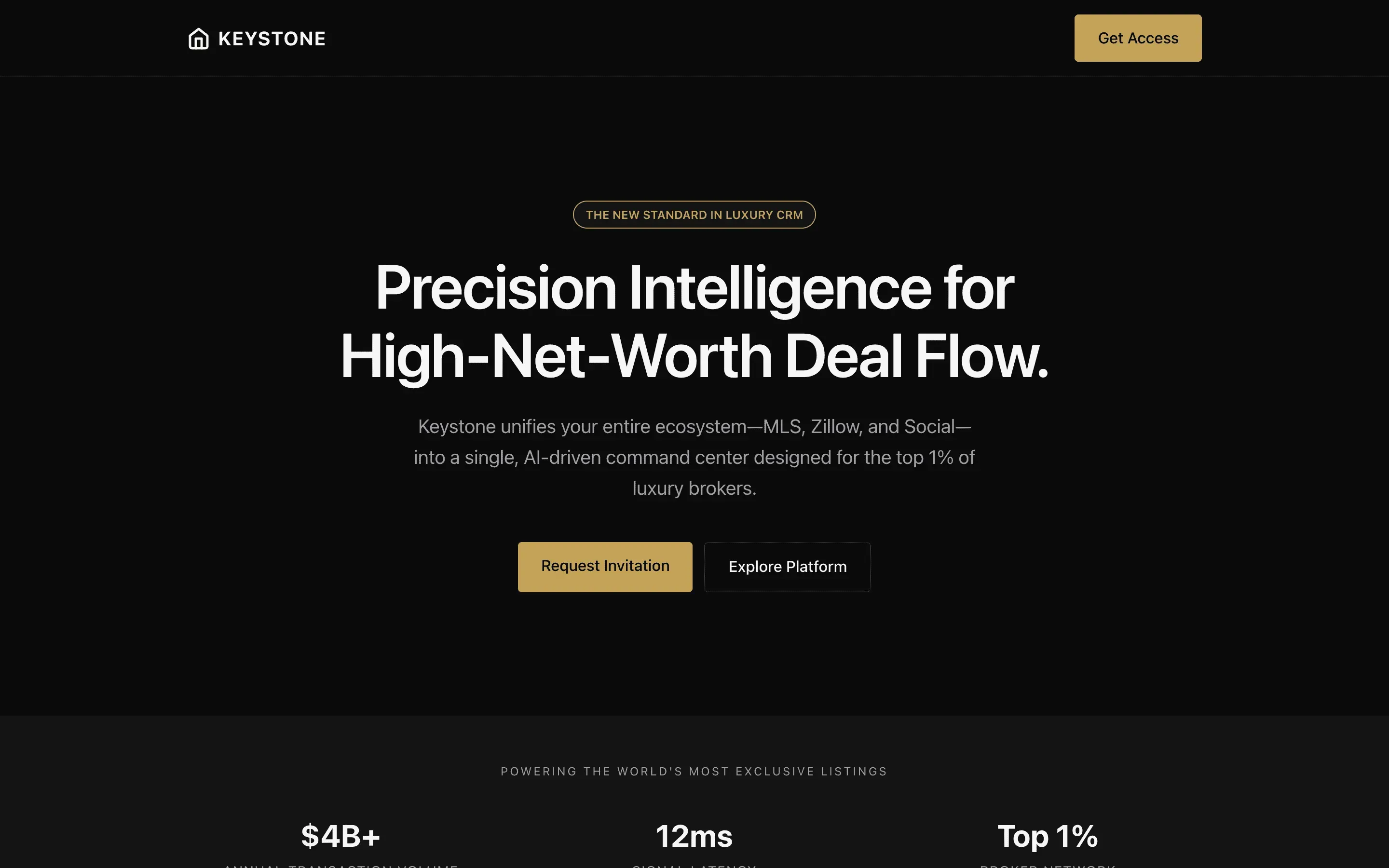

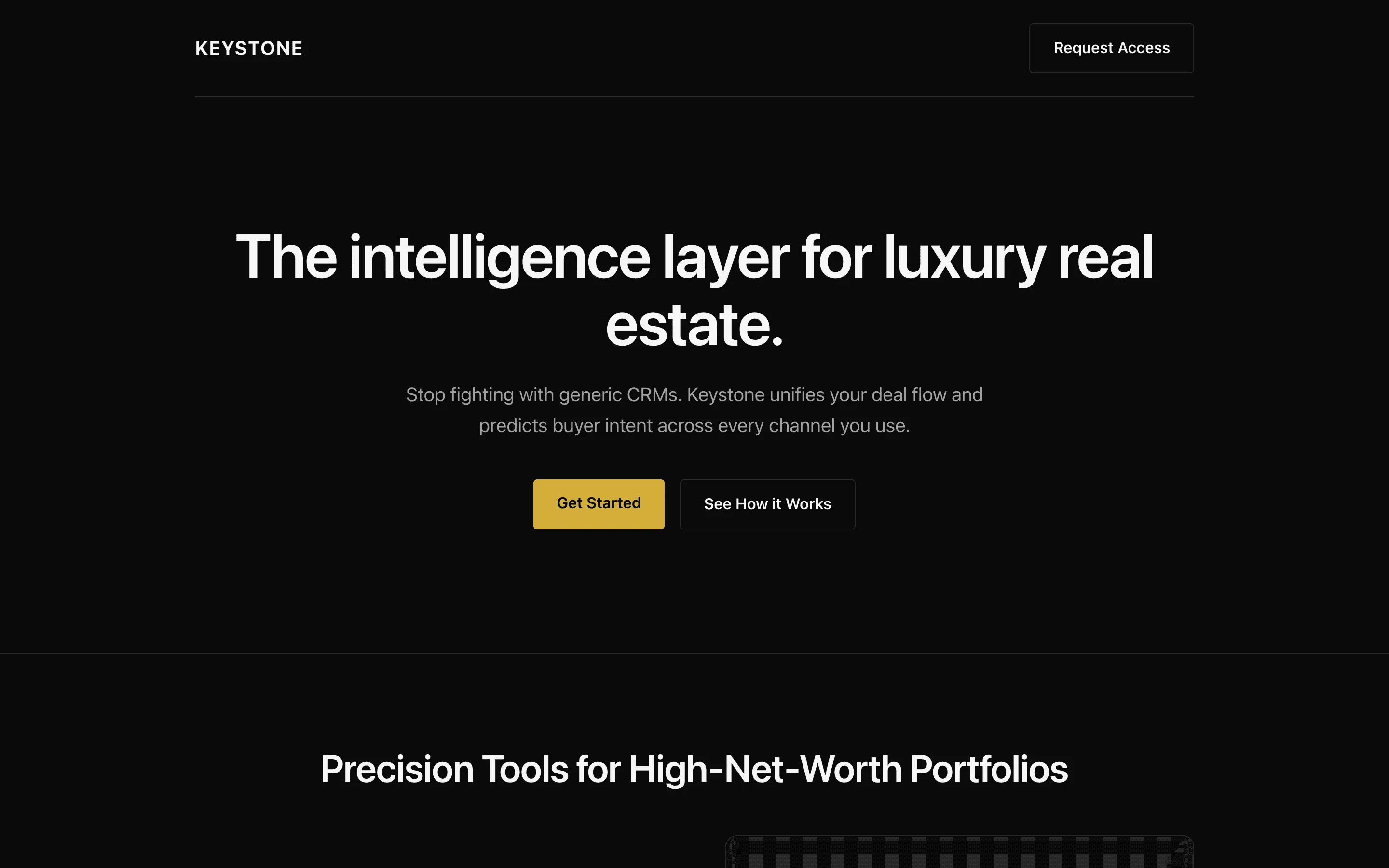

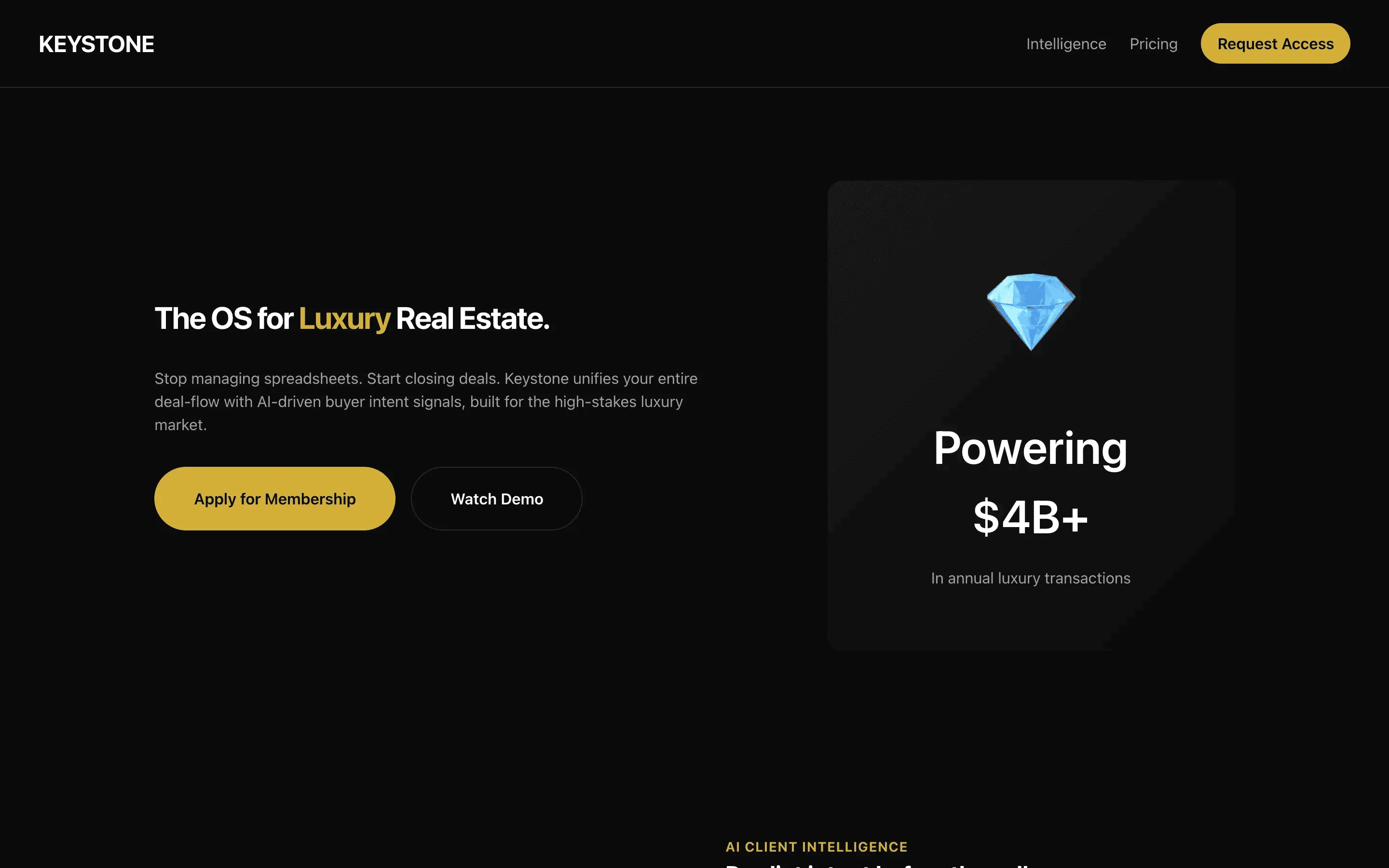

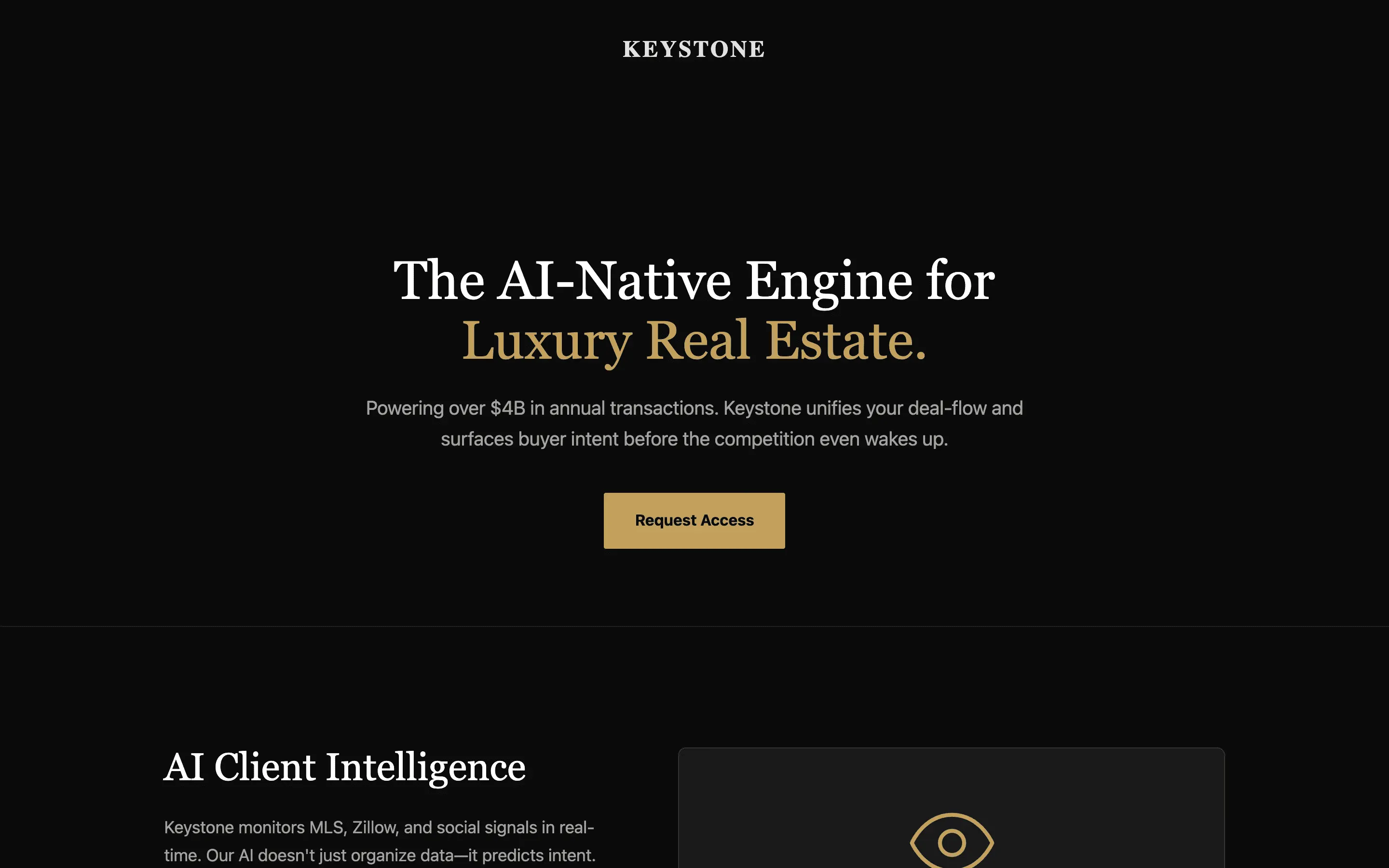

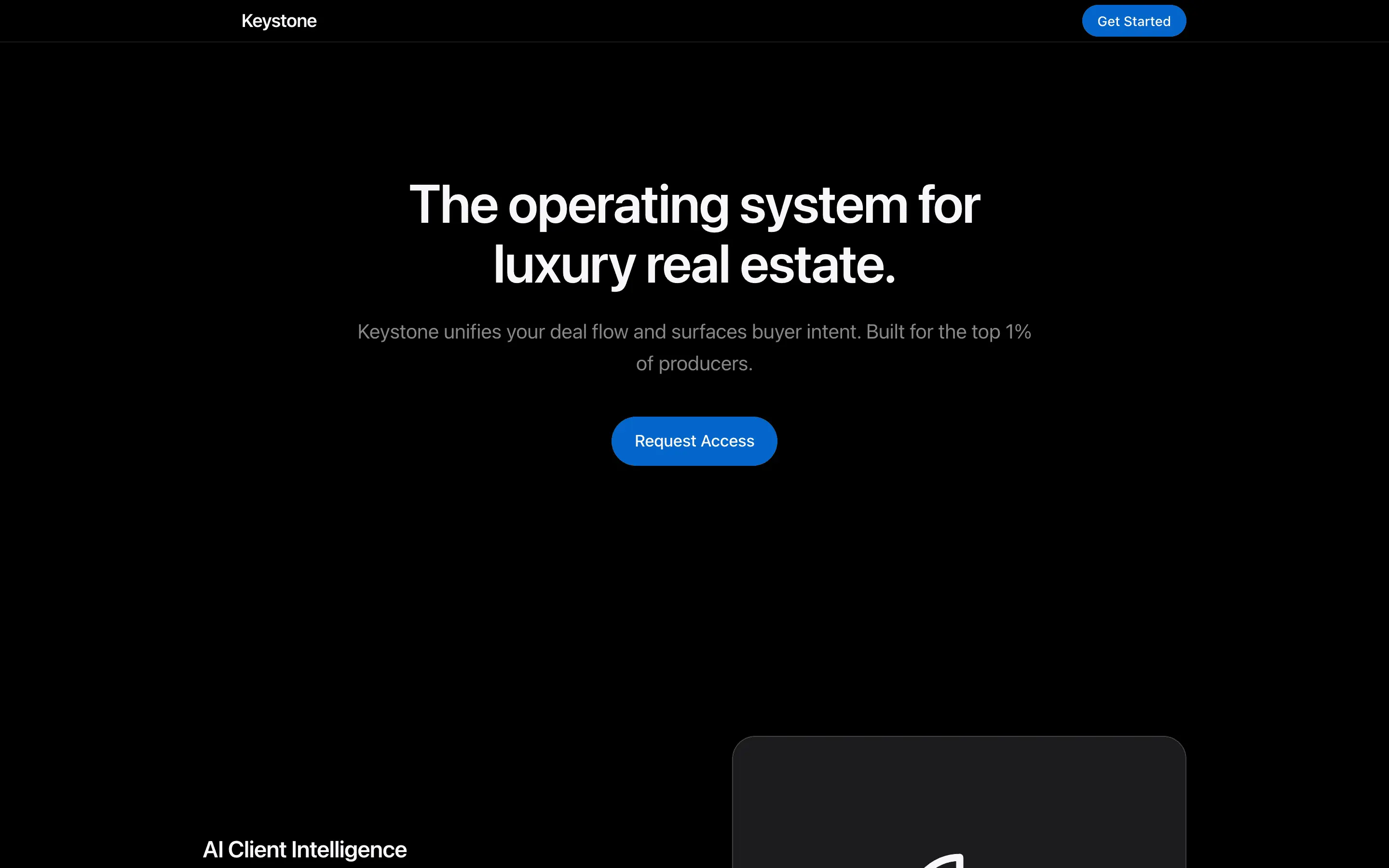

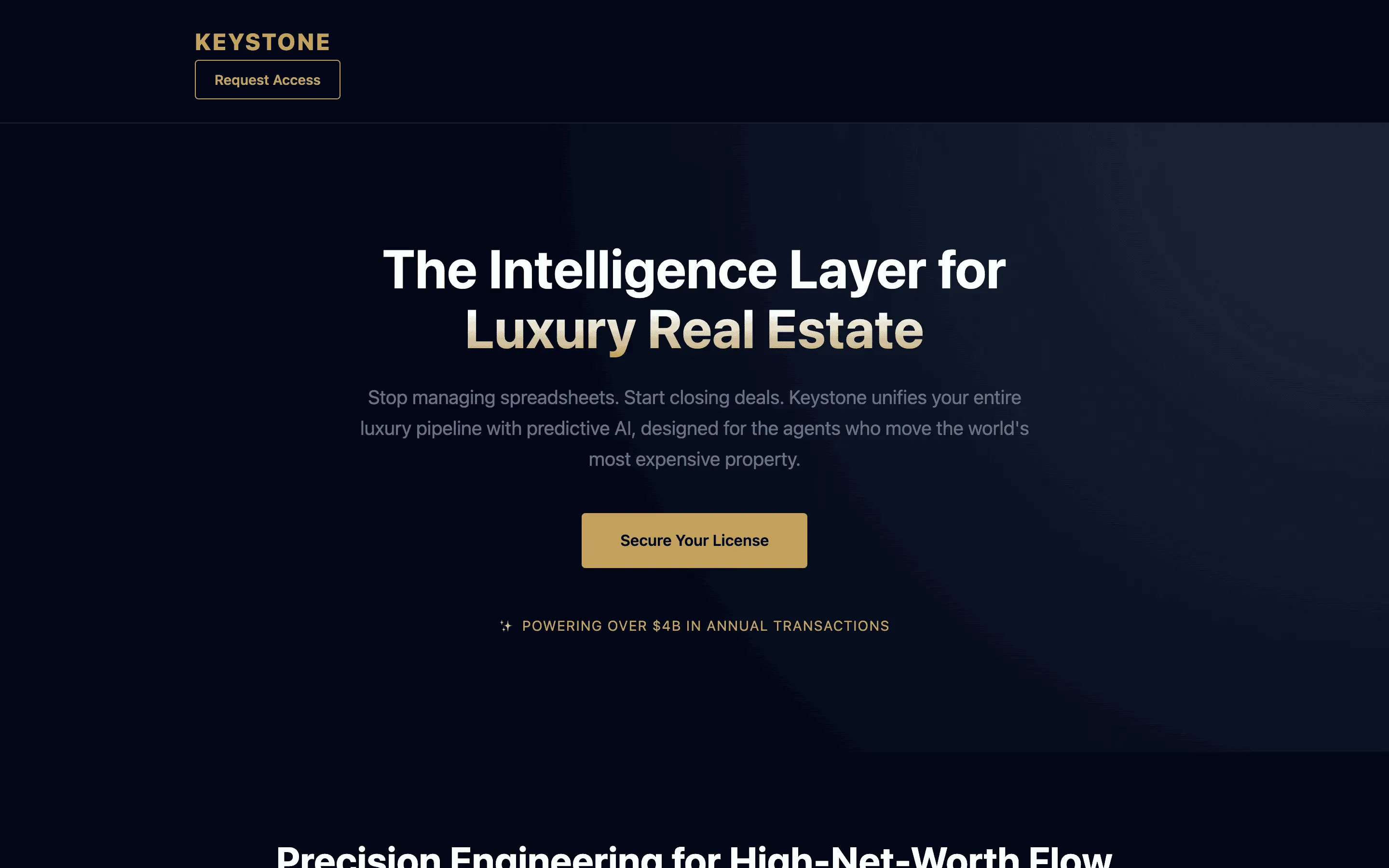

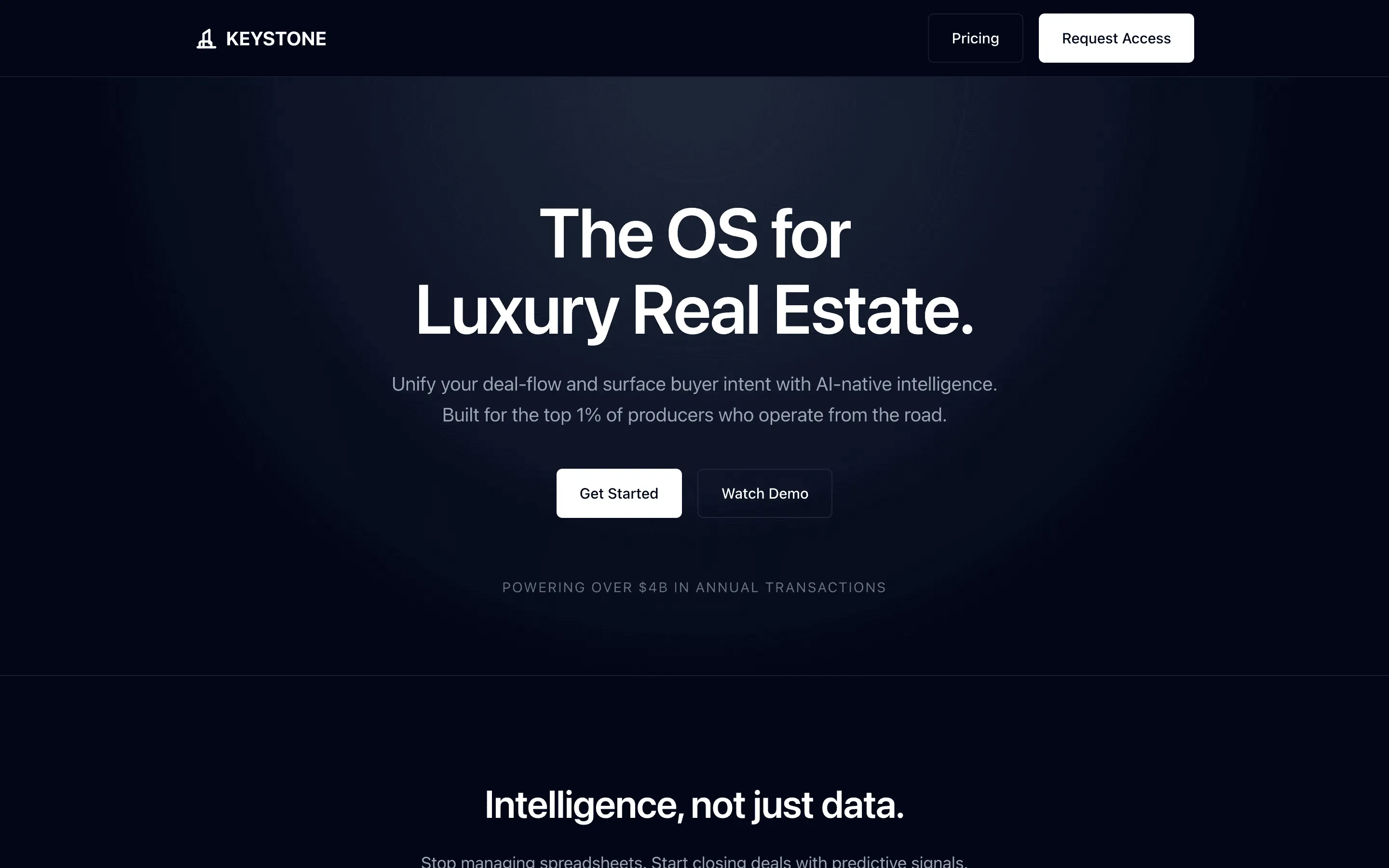

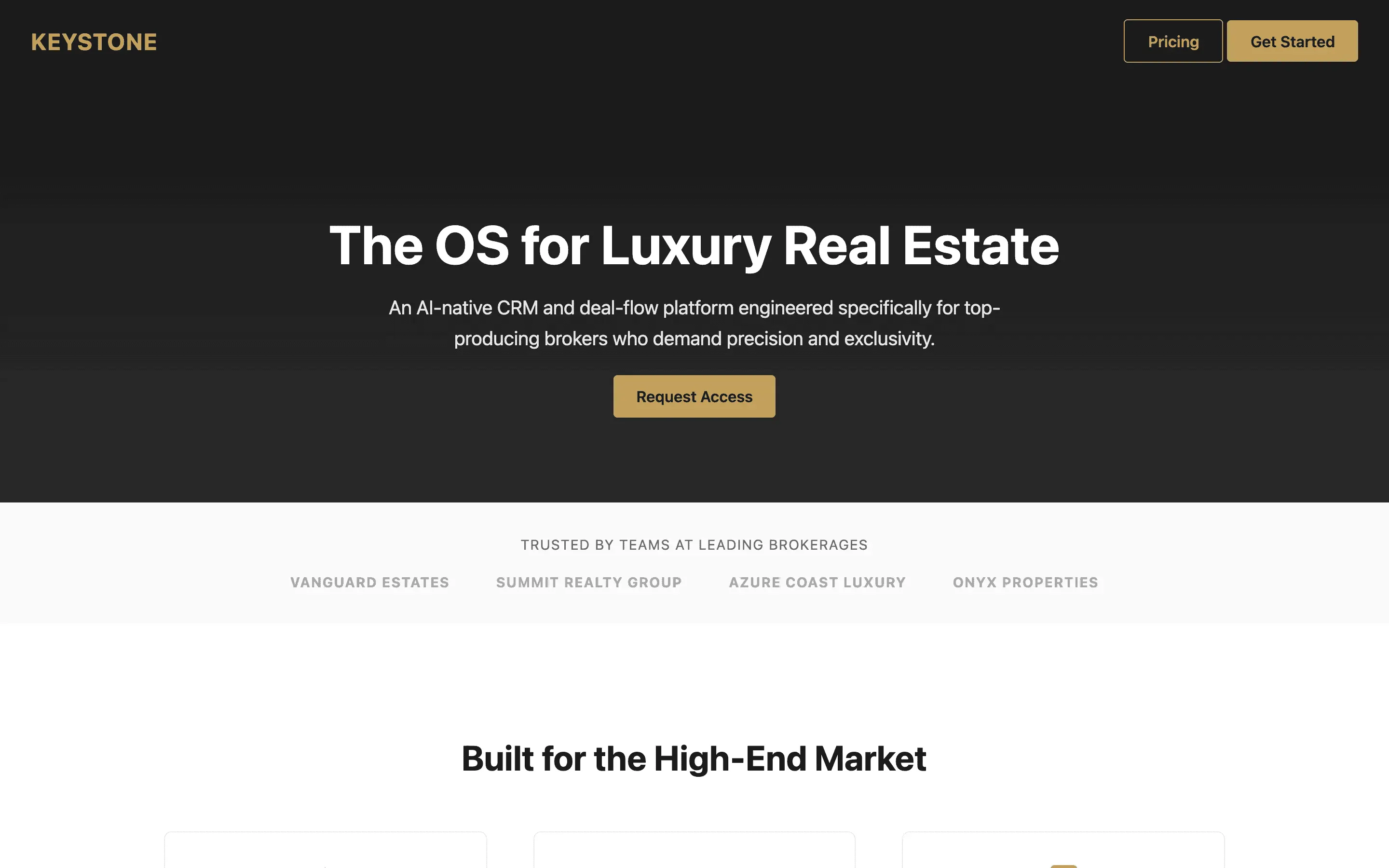

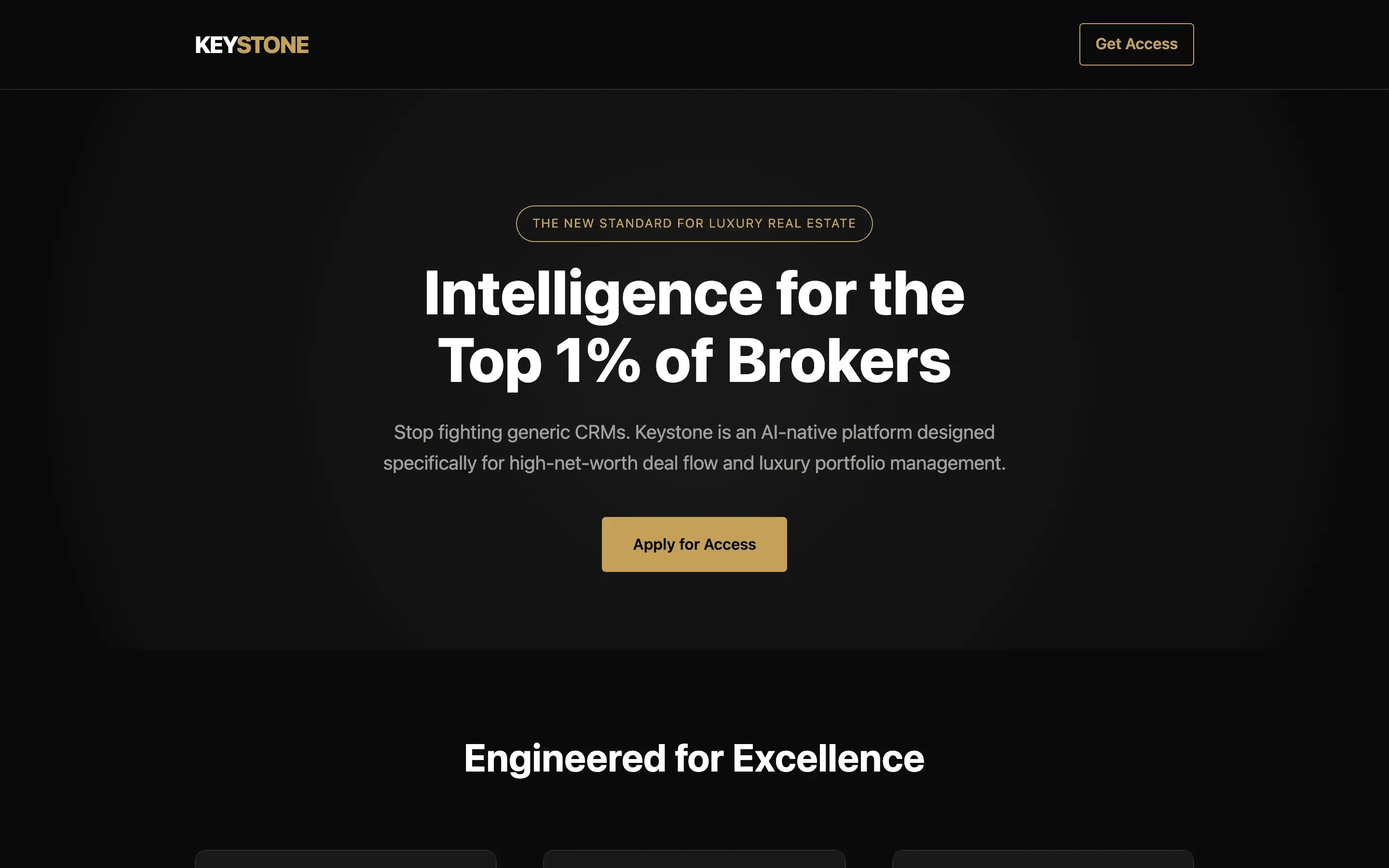

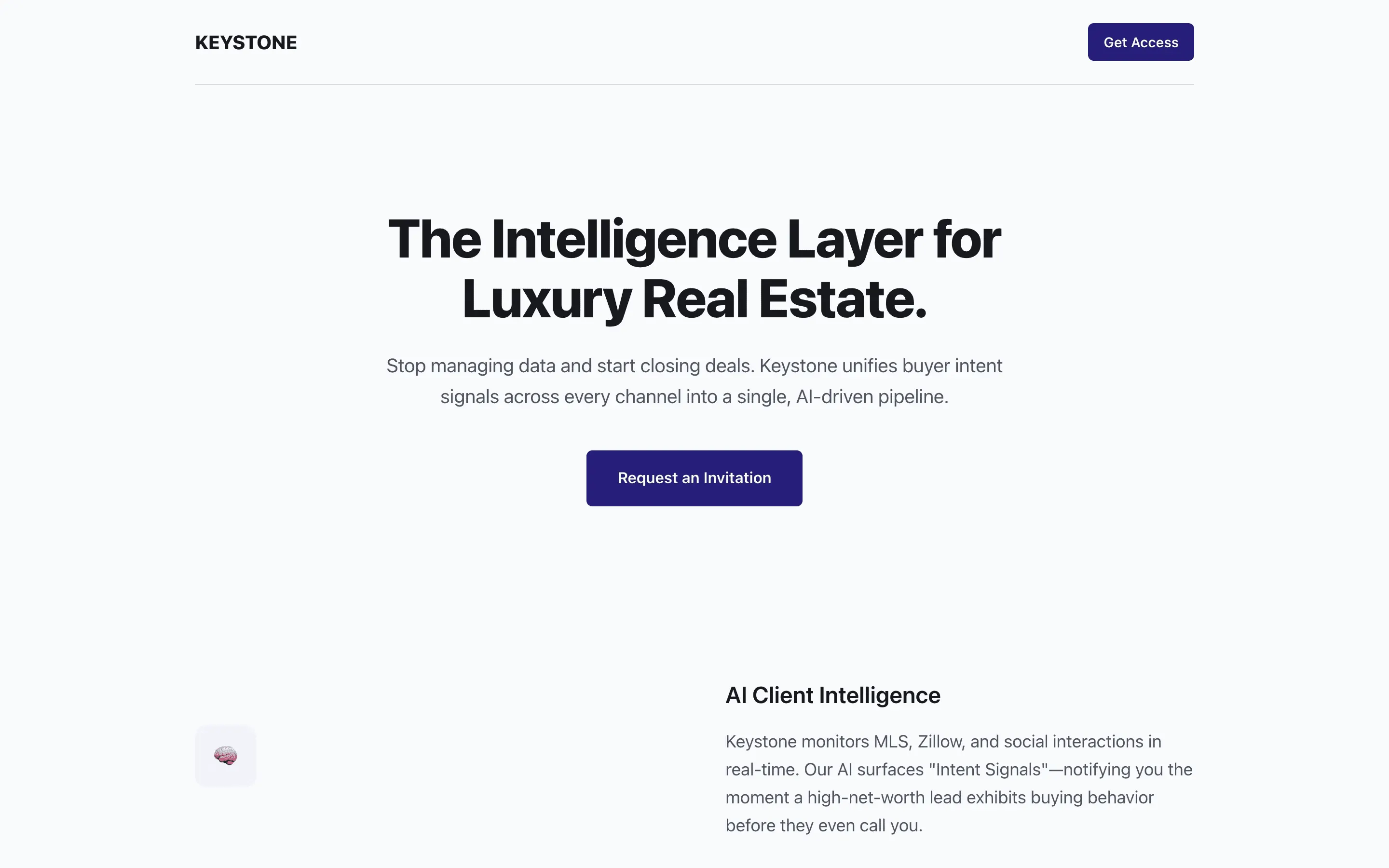

Encodes the Vercel and Linear visual language as a mechanical ruleset so the model can produce that restrained, confident aesthetic by lookup.

Forces the model to draft, honestly score, commit to a single fix, and rewrite, turning one-shot output into a two-pass process.
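As a rough illustration of the draft-critique-revise scaffold, the sketch below assembles a system prompt from the four steps named above. The step wording and the `build_scaffold_prompt` helper are hypothetical; the study's actual prompt text is not reproduced on this page.

```python
# Hypothetical step wording, written for illustration only.
STEPS = [
    "Draft the landing page in full.",
    "Score your draft honestly from 0 to 10 and justify the score.",
    "Commit to the single fix that would raise the score the most.",
    "Rewrite the page with that fix applied and output only the rewrite.",
]


def build_scaffold_prompt(steps=STEPS):
    """Join the steps into a numbered system prompt."""
    numbered = "\n".join(f"{i}. {step}" for i, step in enumerate(steps, start=1))
    return "Work in this order before you answer:\n" + numbered


if __name__ == "__main__":
    print(build_scaffold_prompt())
```

The point of the structure is that the model commits to one fix rather than a list of them, so the second pass is a focused rewrite instead of a second draft.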

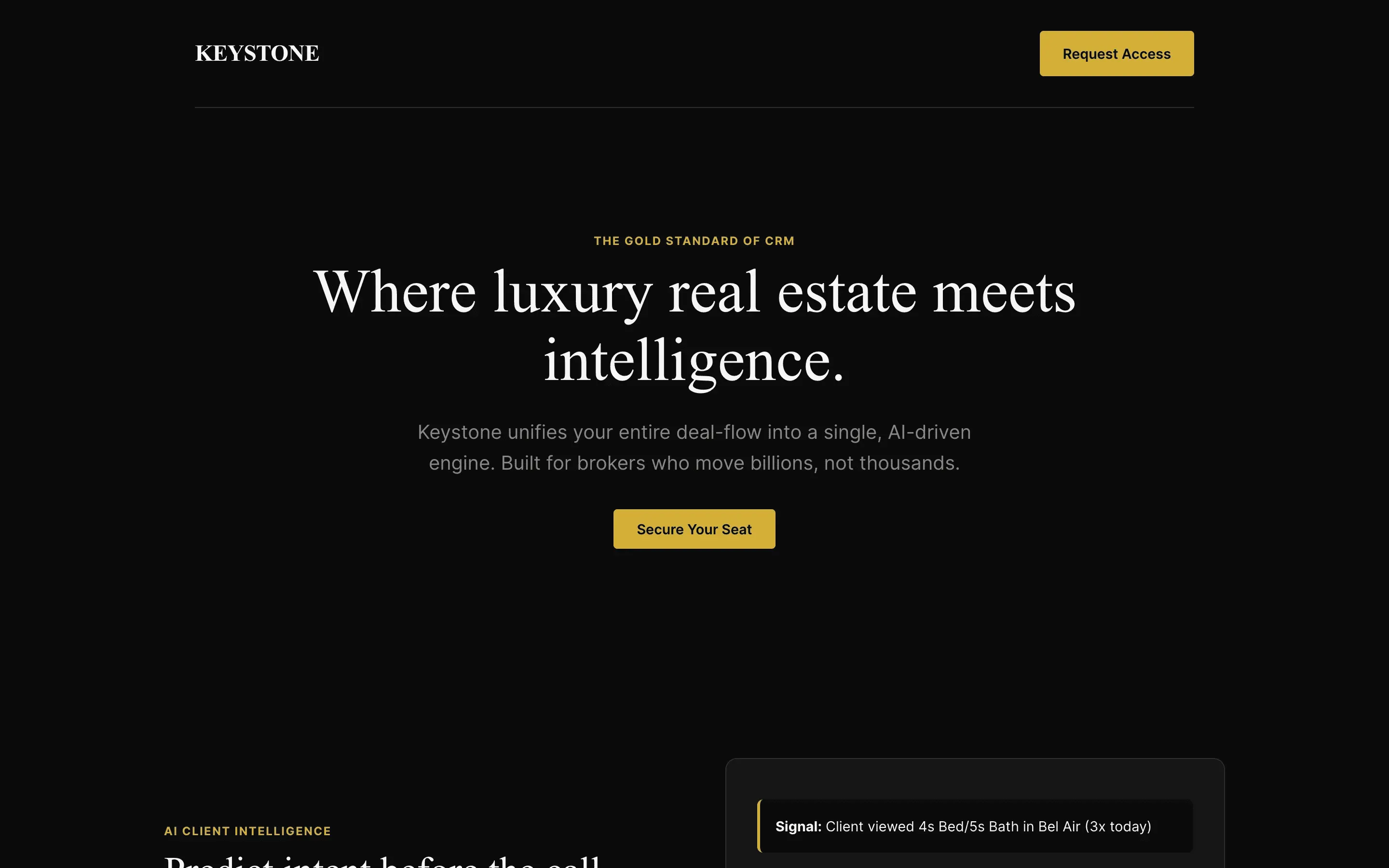

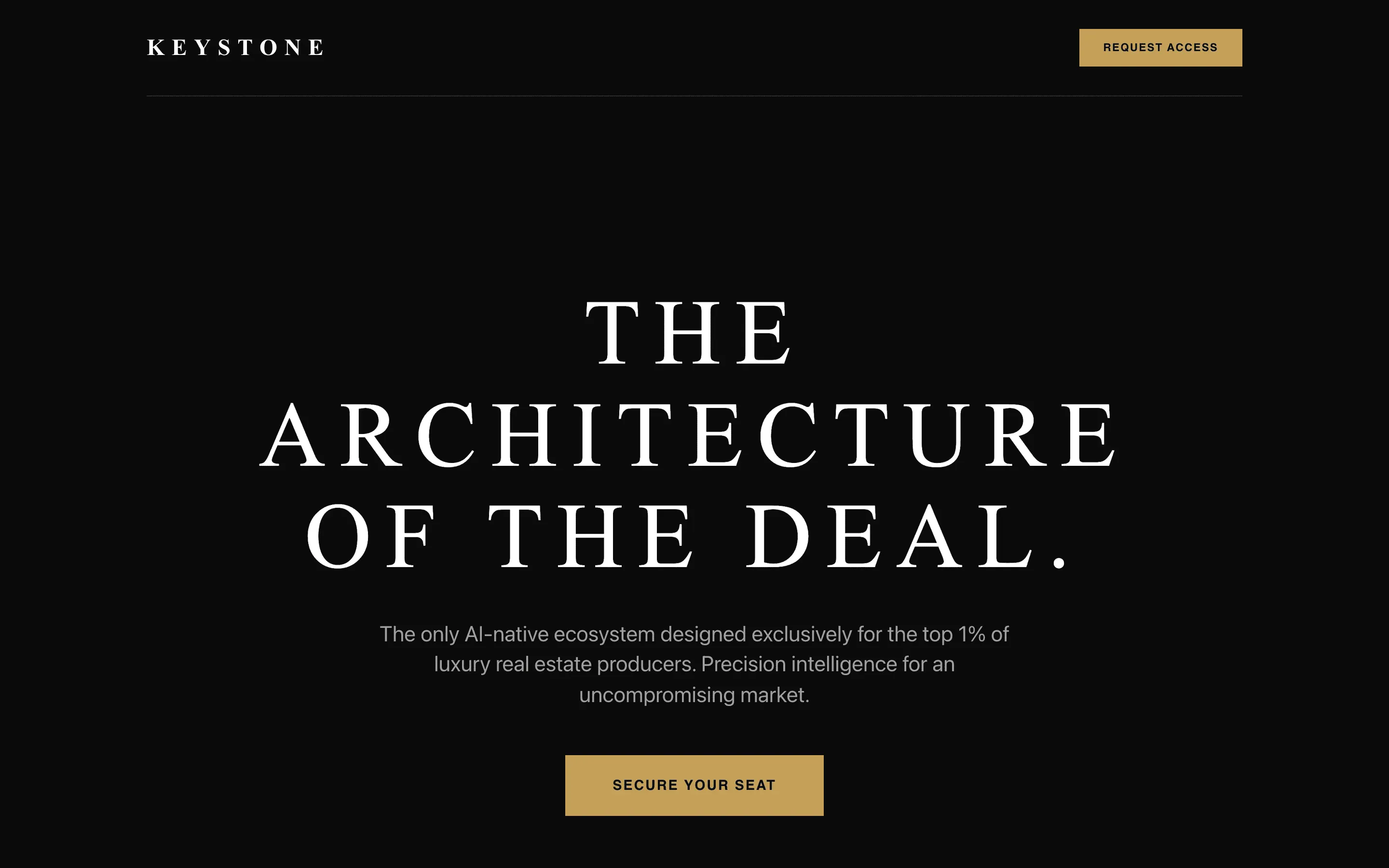

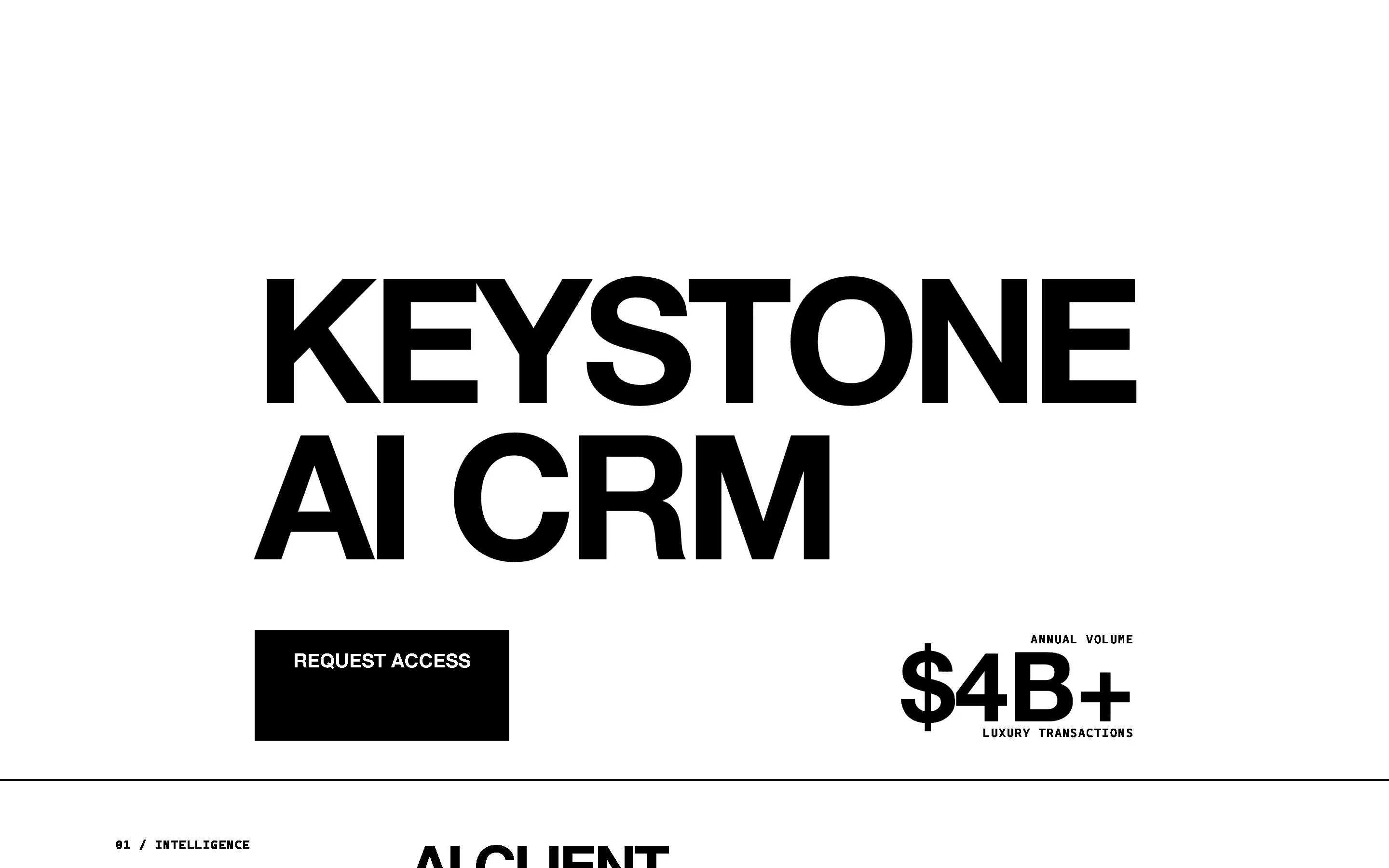

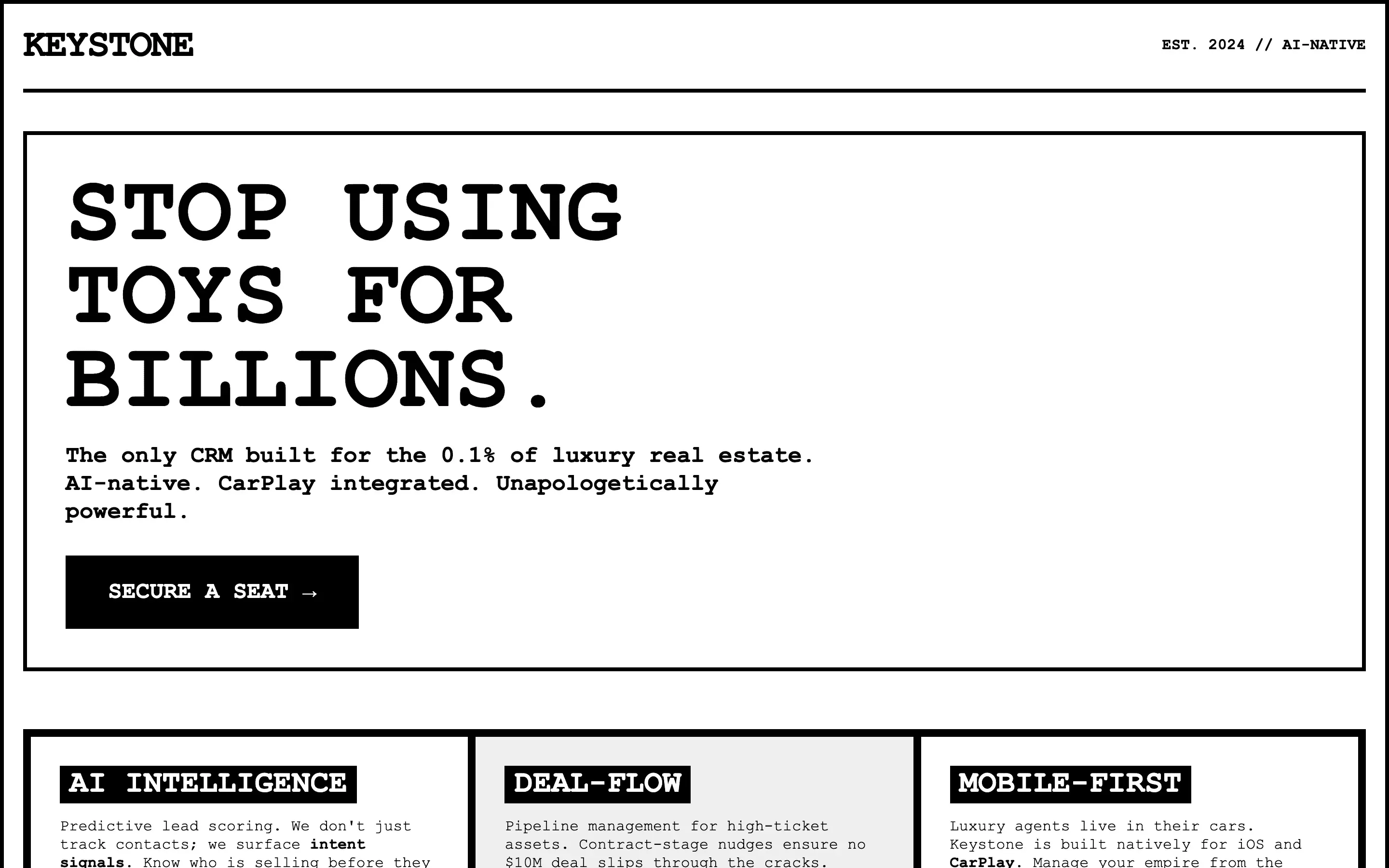

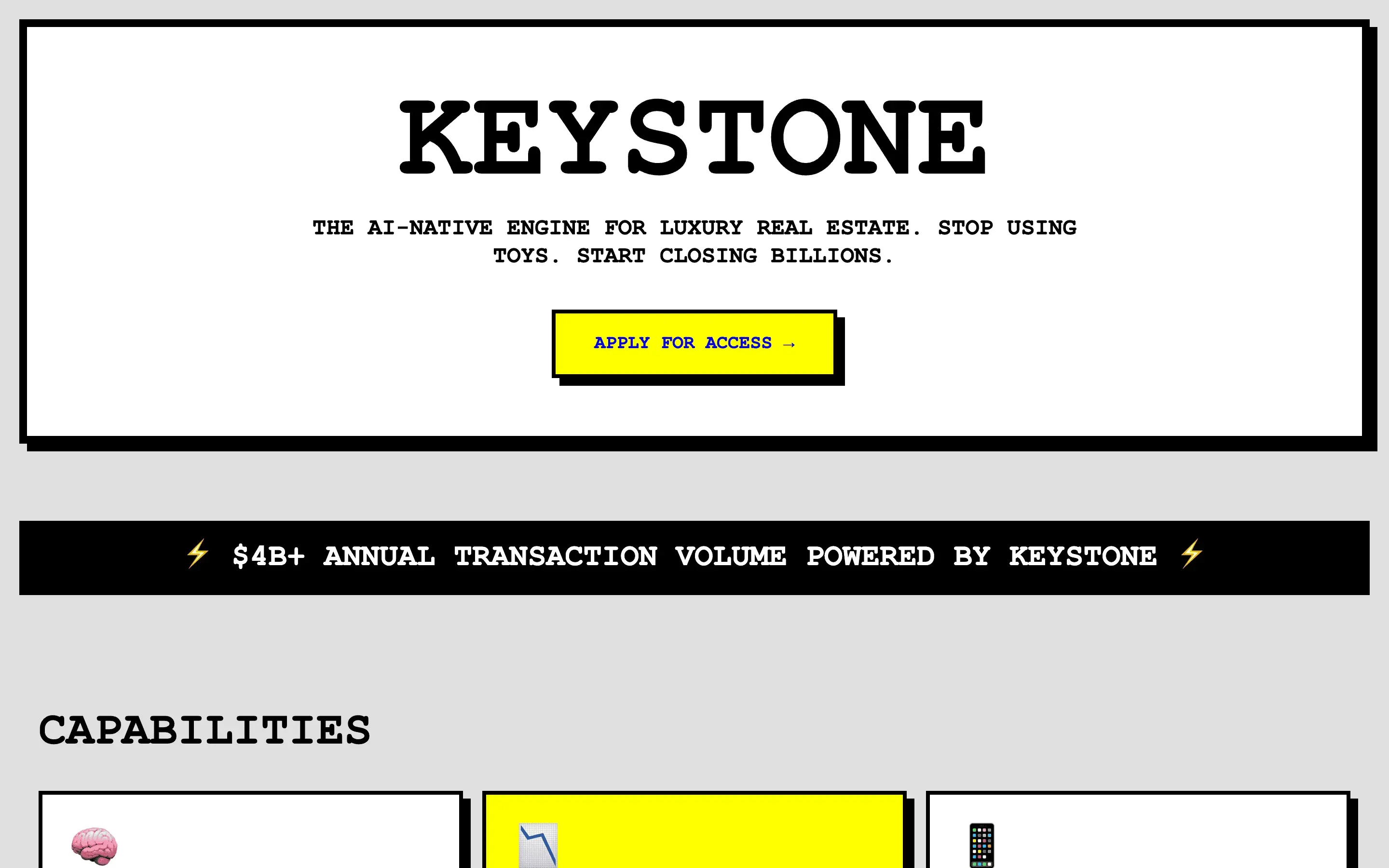

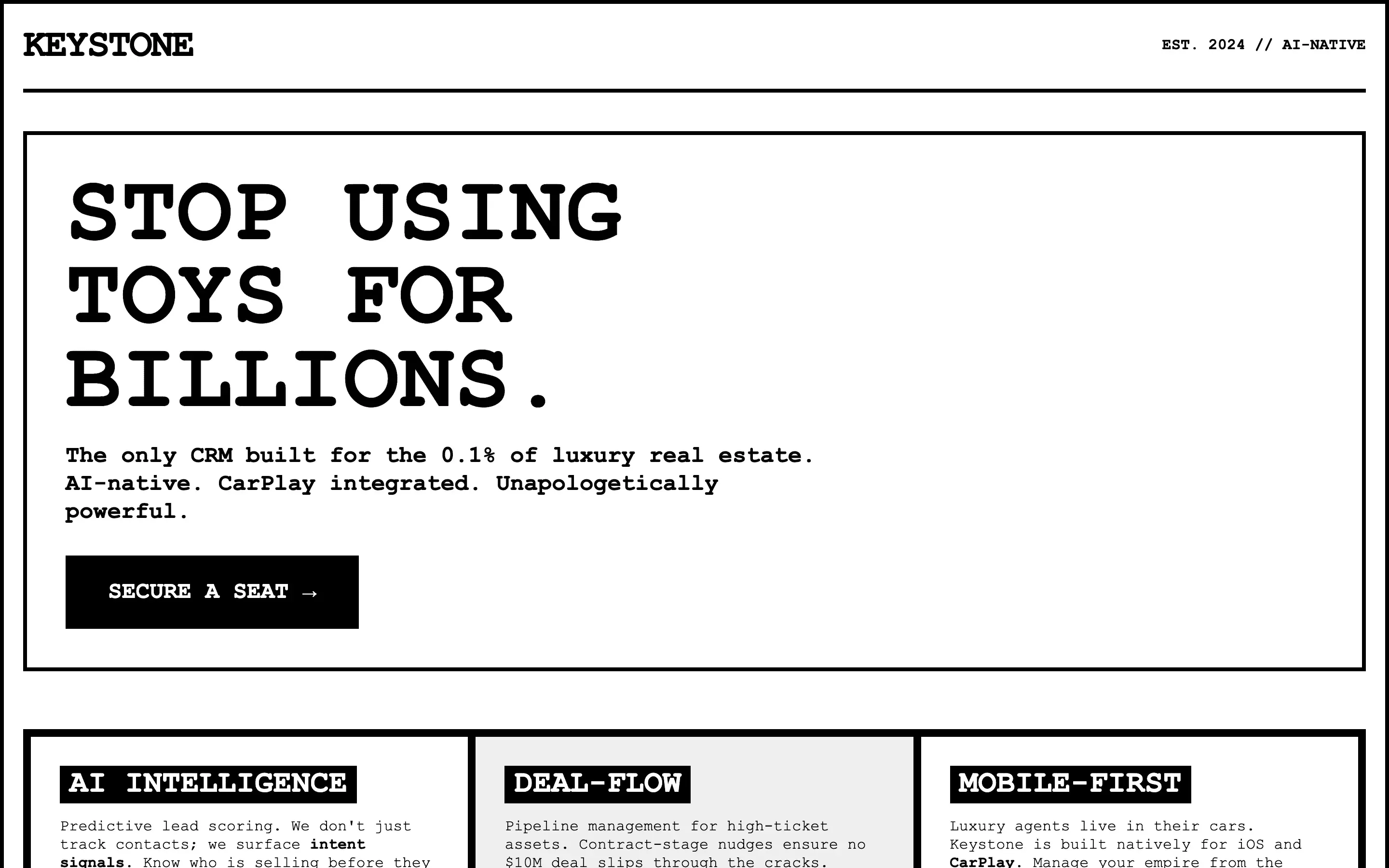

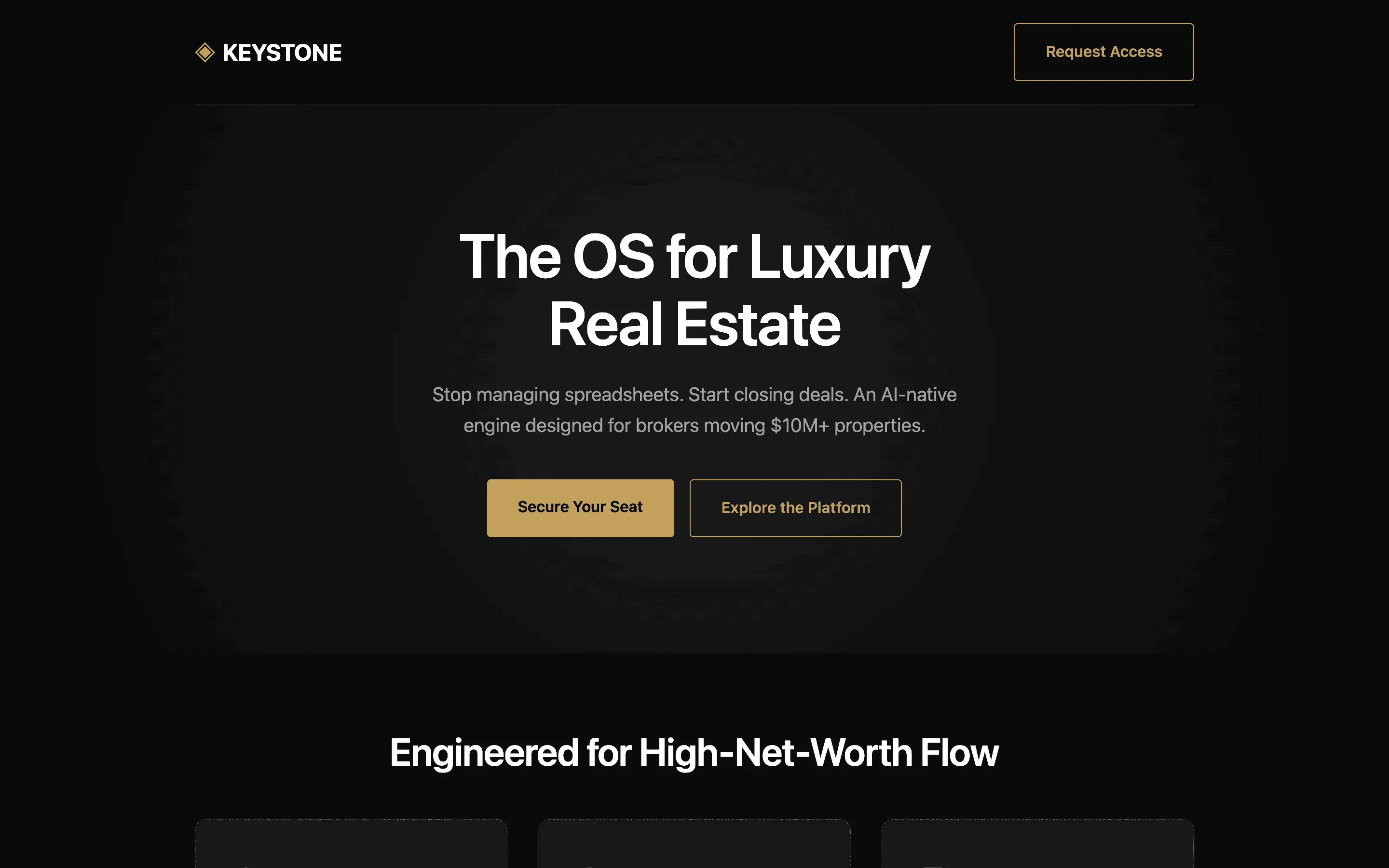

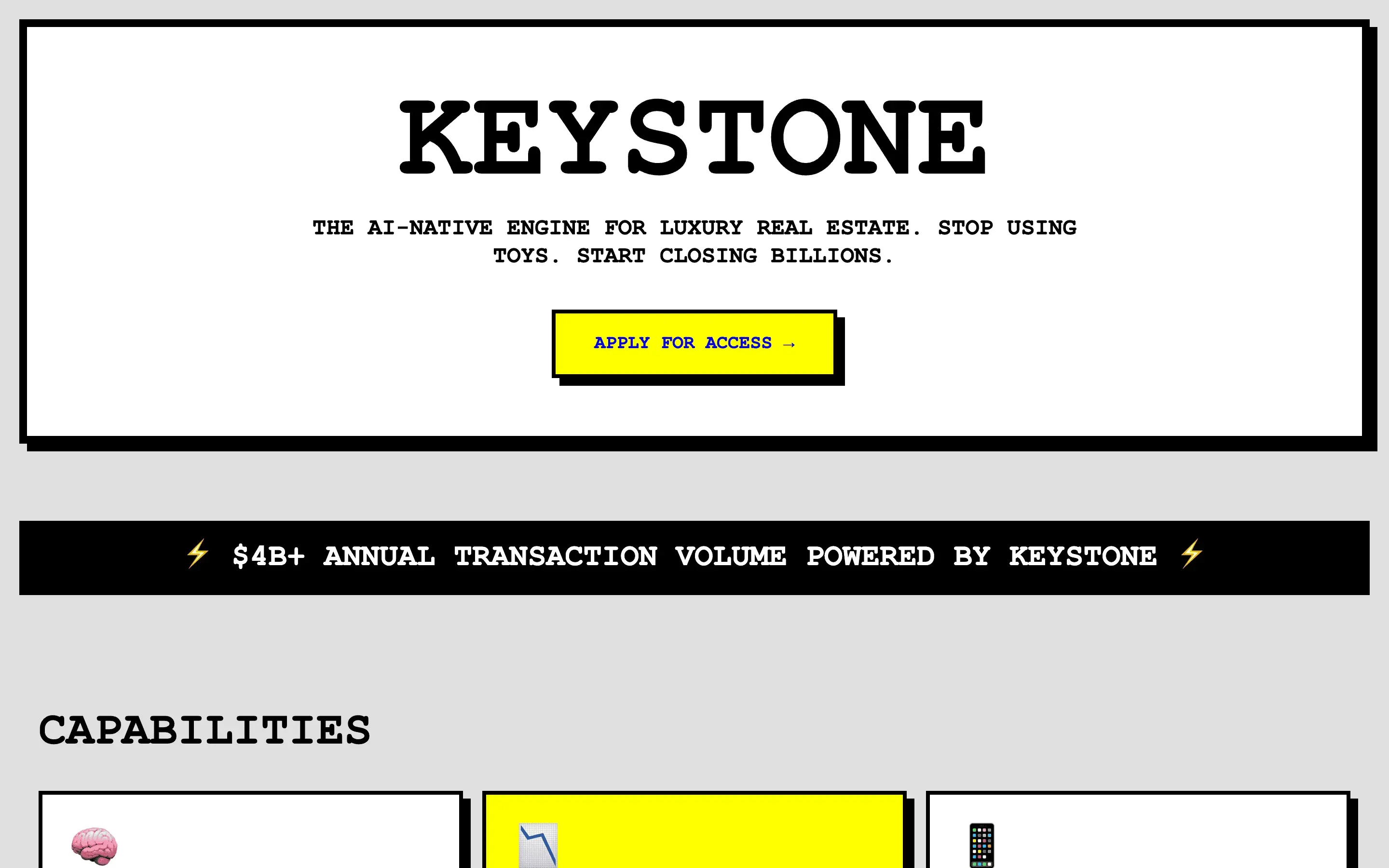

Tests whether a contrarian persona with a specific aesthetic lineage produces visually distinct output that resists the default SaaS template pull.

Tests whether forcing a self-administered rubric-based QA pass before the final render improves output quality independent of any persona.

Every generation · 156 rendered landing pages · sorted by composite

Free + paid

Method

Citation

Rival Research. The Persona Impact Study. 2026. rival.tips/research/persona-impact. Dataset: persona-impact-2026.jsonl.

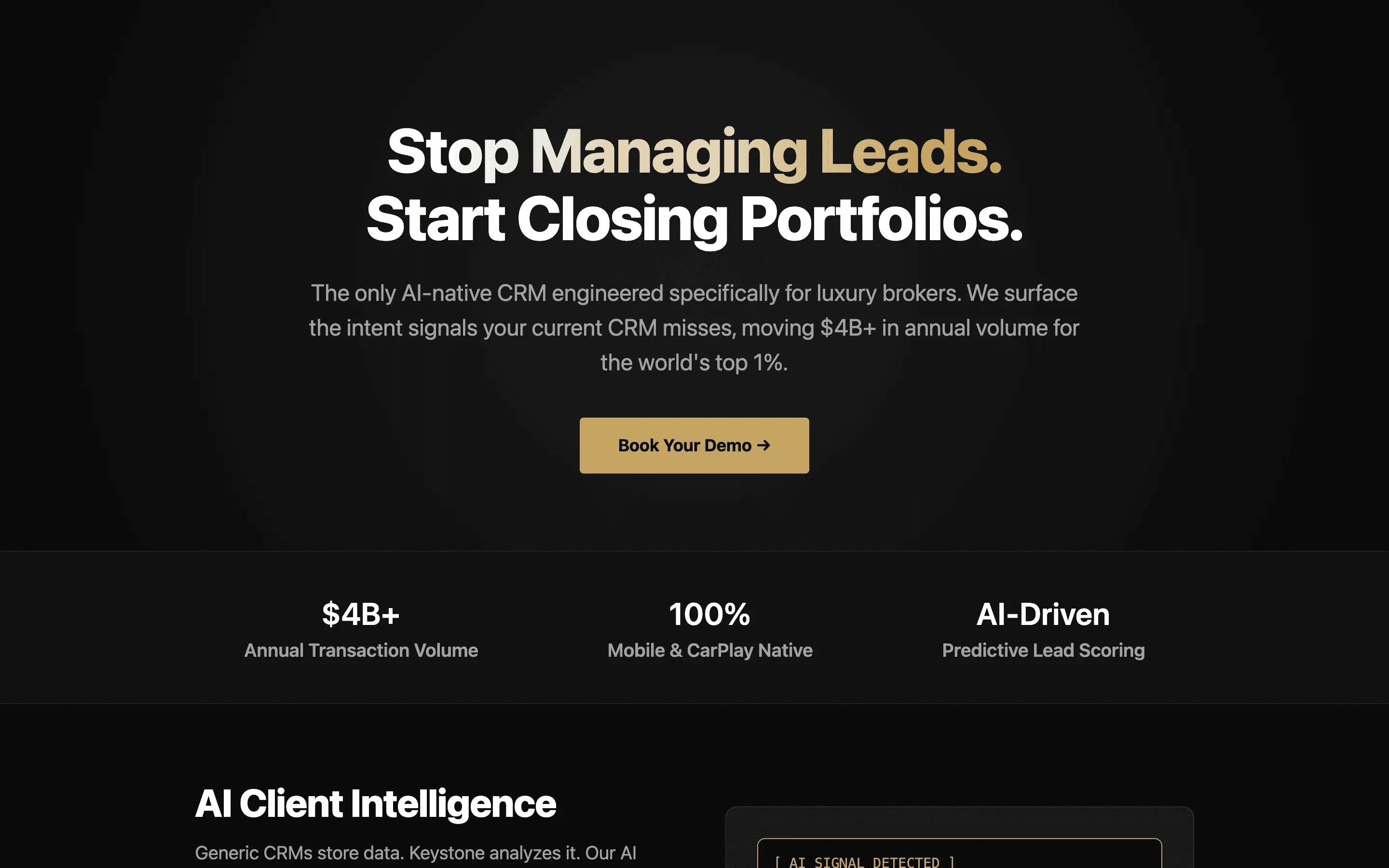

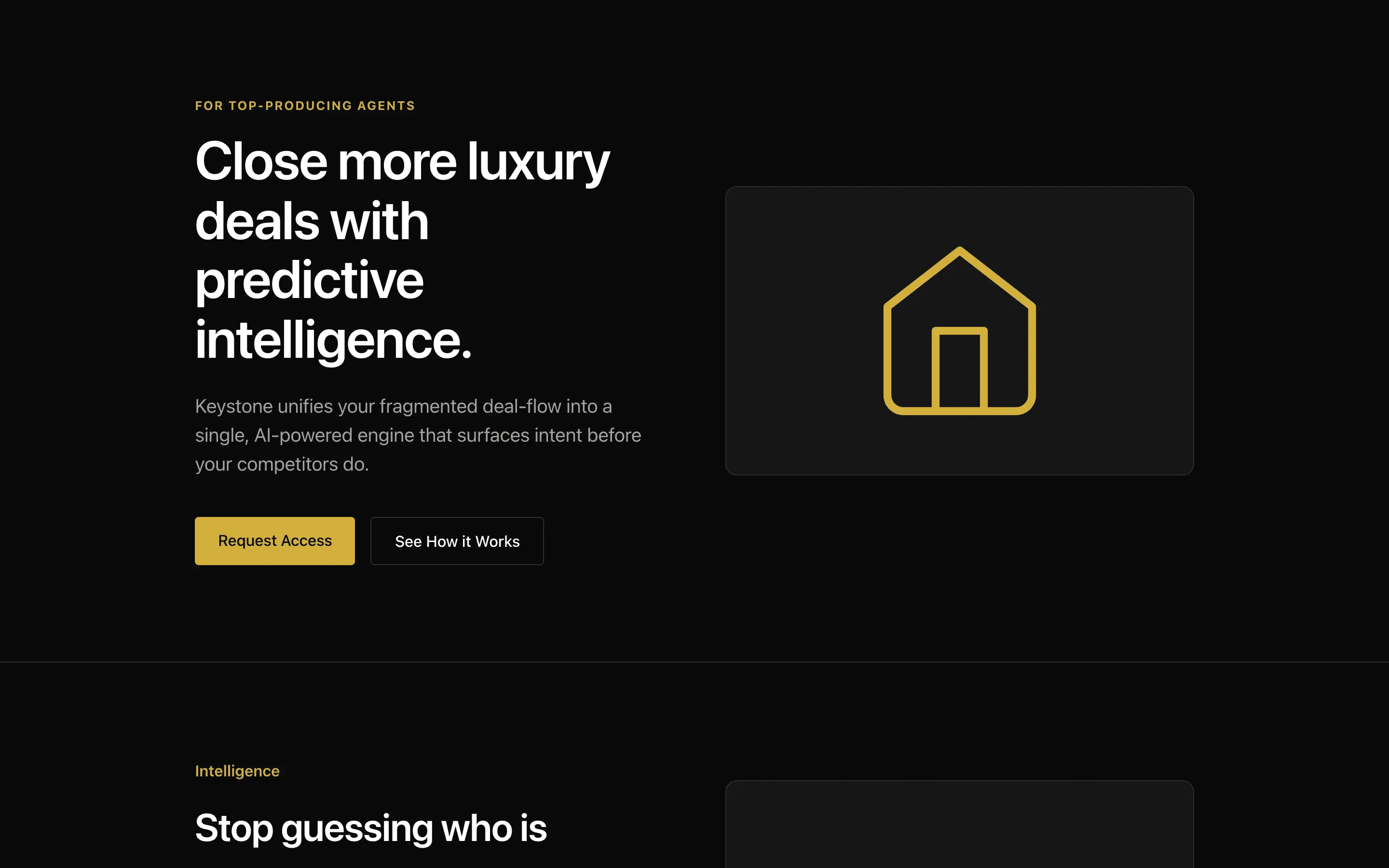

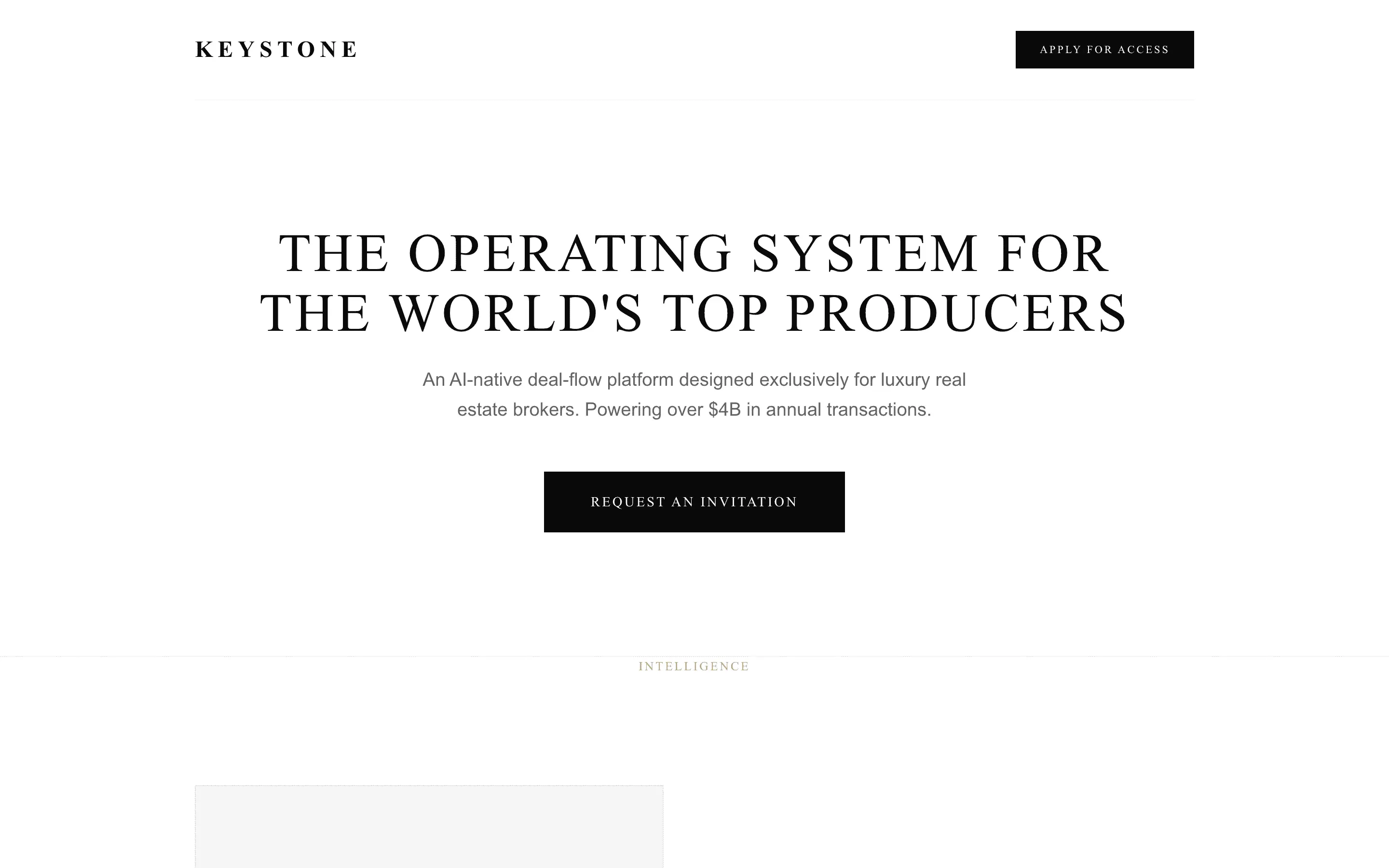

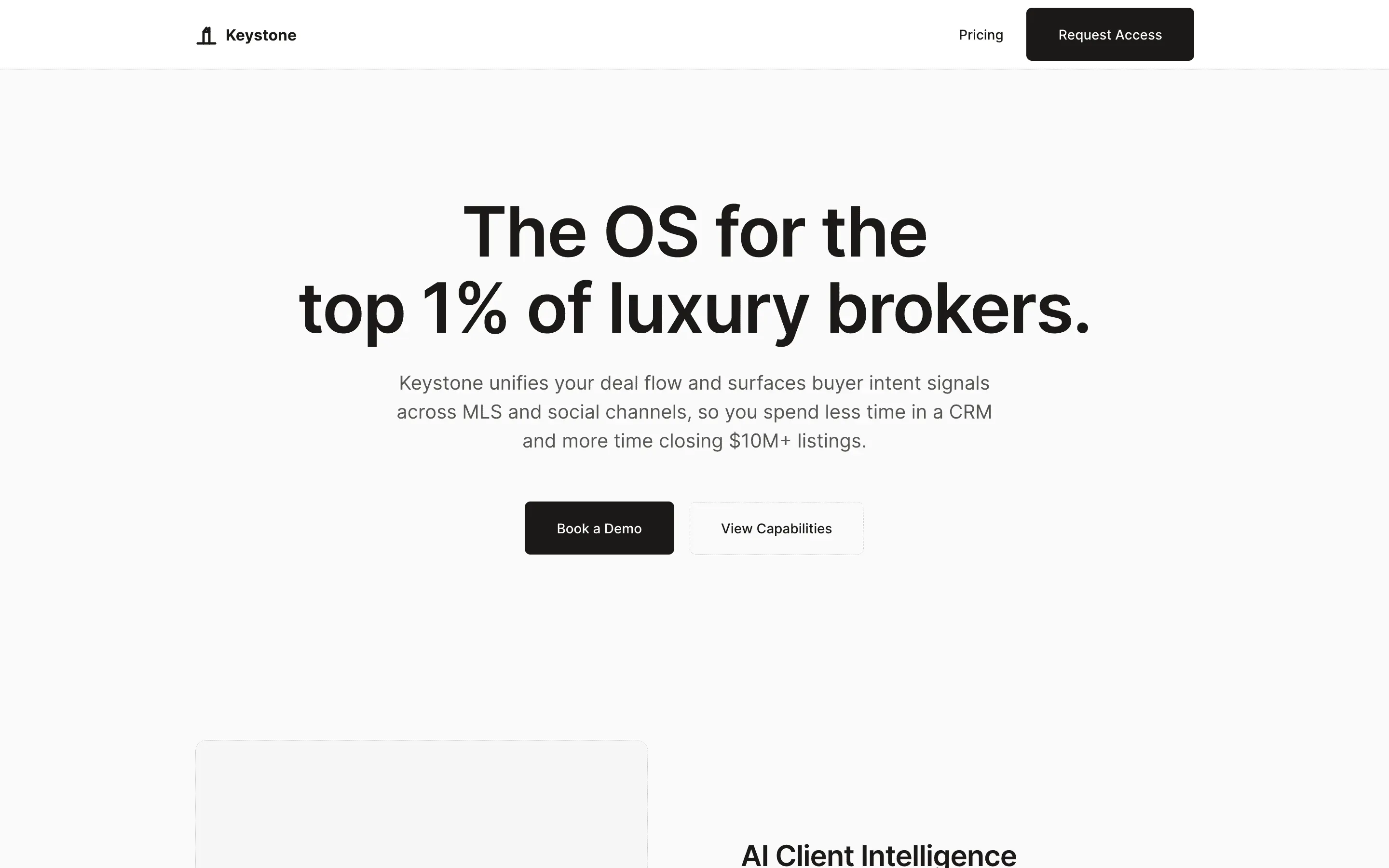

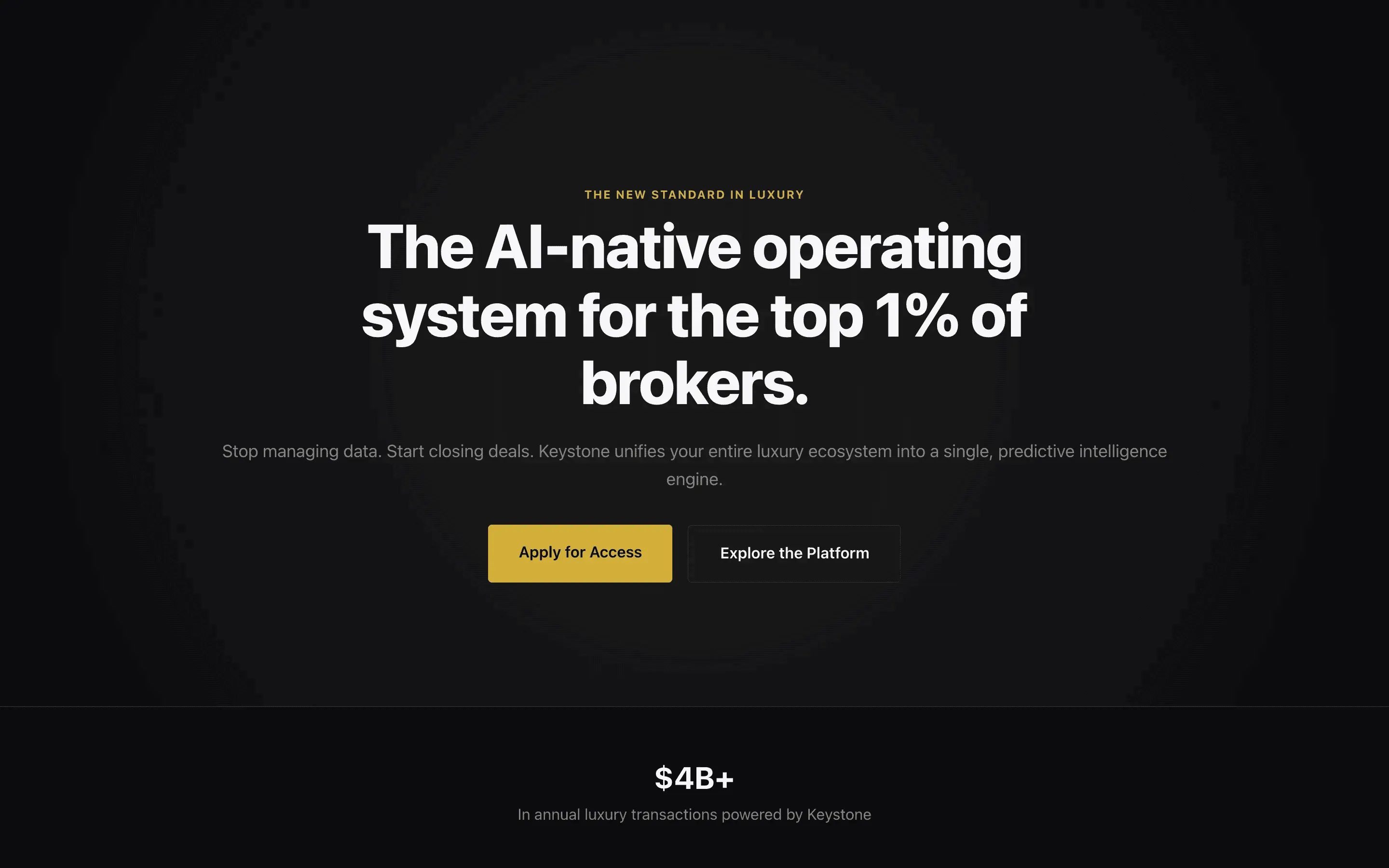

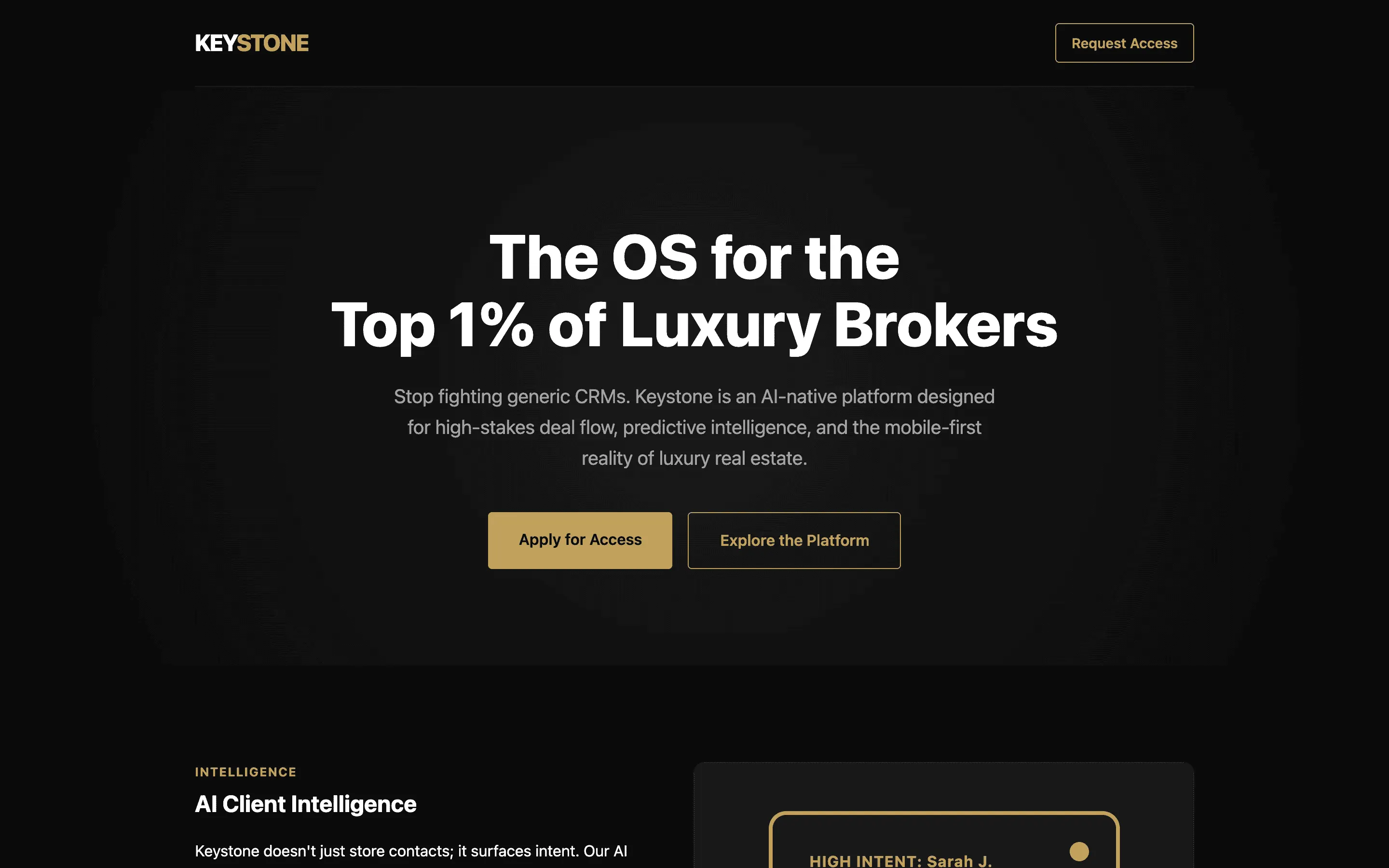

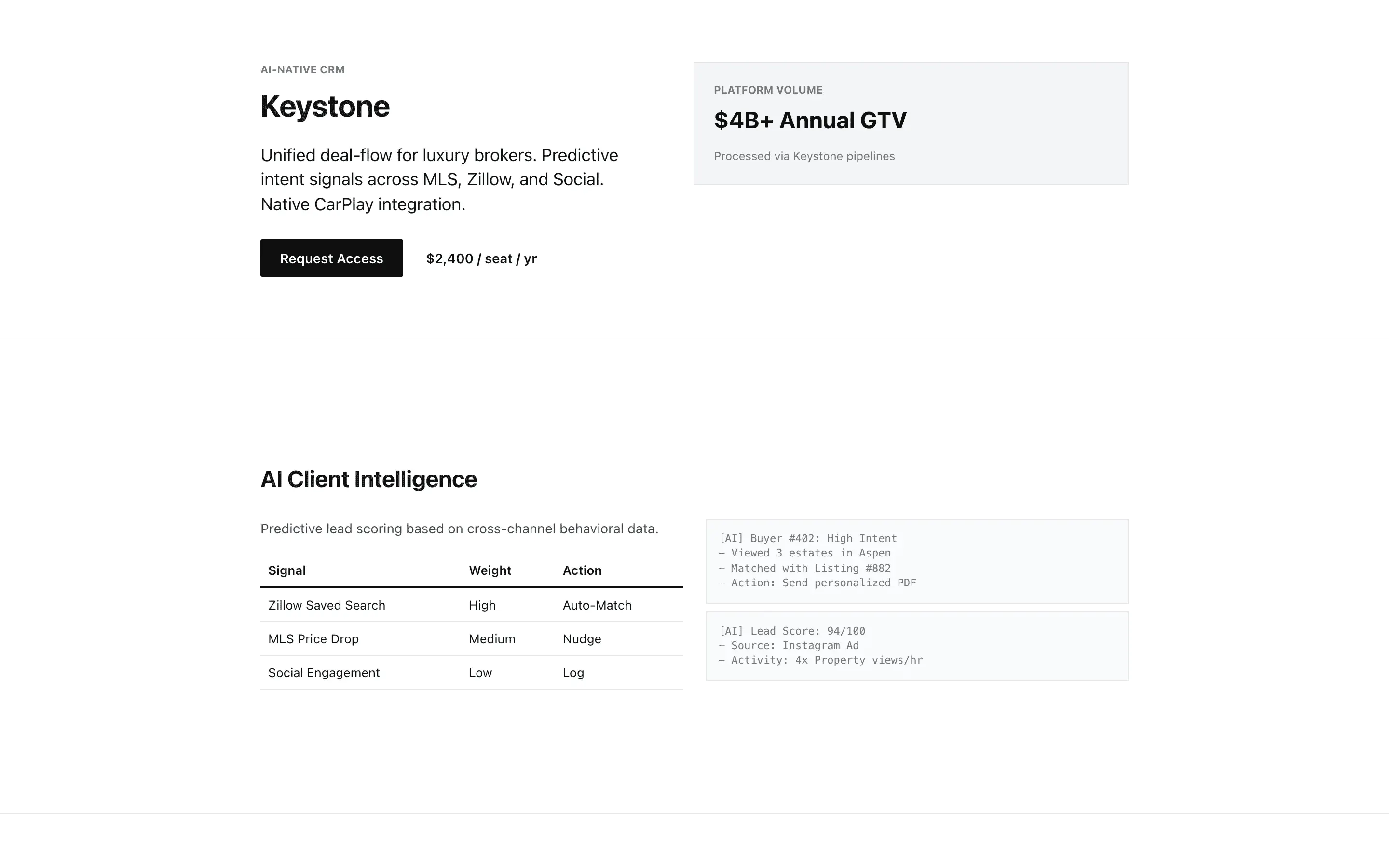

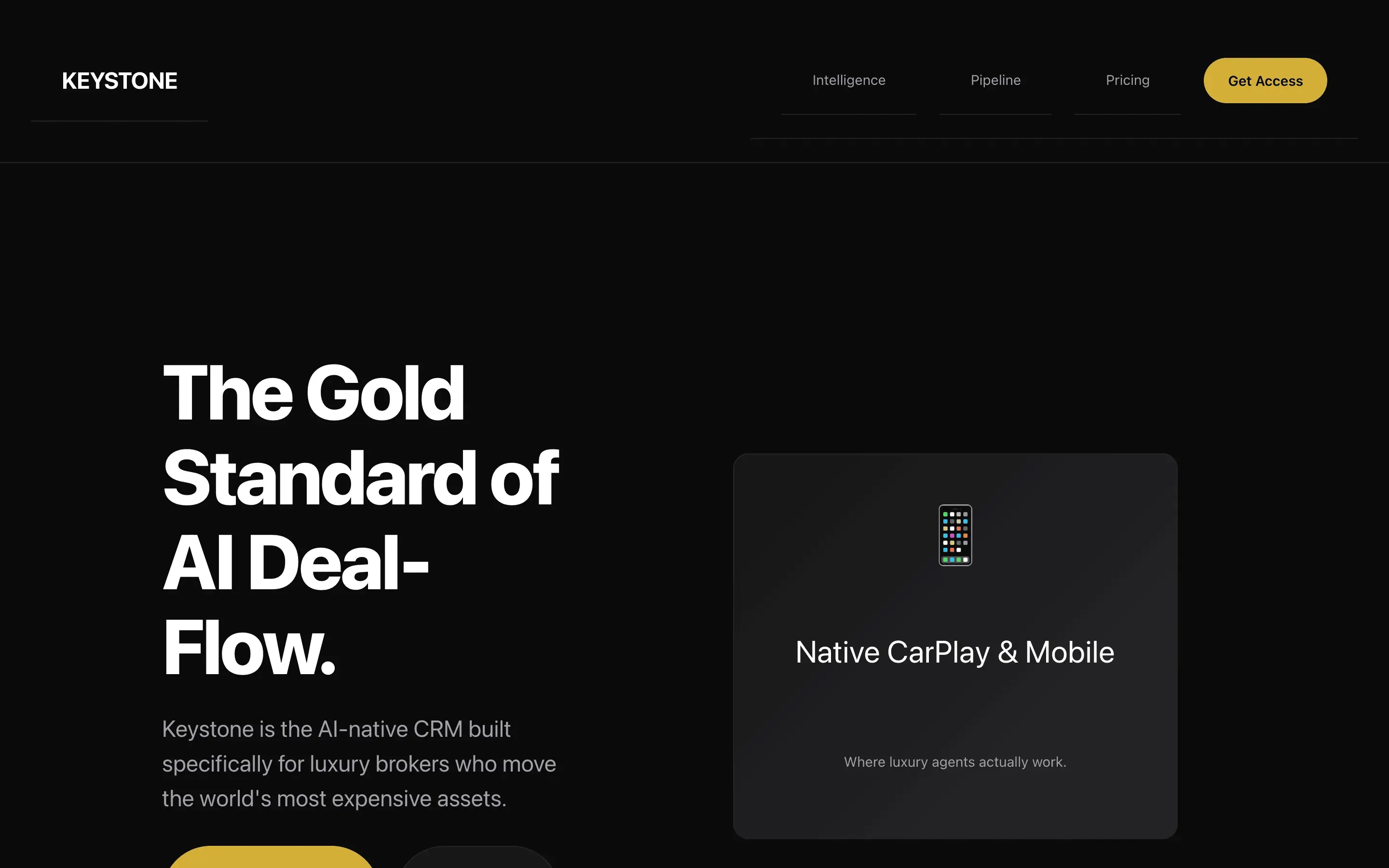
